CHAPTER 3

Courage is willingness to take the risk once you know the odds.

Optimistic overconfidence means you are taking the risk because you don't know the odds. It's a big difference.

— Daniel Kahneman

Opportunity

I took a Mayan Studies class in college, where I learned about ancient archaeological sites throughout Mexico and Central America. I began dreaming of a self-powered tour of the Yucatán Peninsula by bike. Soon, I recruited a classmate who later became my wife. After graduating from college, we flew into Cancun, pedaled south to Tulum, and then set course for Coba, Chichen Itza, and Merida.

I remember anticipating the ride, isolated in the seldom-visited parts of the Mayan Riviera. At that time, I couldn't stomach wasting money to purchase a mattress, much less a bedframe. On the floor at night, I lay staring at the ceiling, envisioning a casual morning ride that gradually revealed historic monuments shrouded in the clearing mist. In my dreams, the majestic Mayan ruins were covered in the cold morning dew, cresting just beyond my evaporating sightline, still silent save for the chirping local fauna.

Just after graduating, we made the trip. Later I secured a great job, we bought a house, and eventually we got married in Tulum. But I was still hungry for adventure. In my naïve view, the only way to replicate the euphoria of travel was more travel. I thought it was the only thing that would ever make me feel happy.

About that time, the article “Happiness Is Love – and $75,000” surfaced on the web, referencing research by Dr. Angus Deaton and Dr. Daniel Kahneman. They distinguished between two separate aspects of happiness: emotional well-being and life evaluation.1

From a life evaluation perspective, I had accomplished the goals I had set. I was financially secure, and in a sense, emotionally fulfilled. I was living a fast-paced corporate life while producing strong results at work. I was a Hilton Diamond member, Emerald Club, United Premier, and Southwest A-list. The travel status gave me a false sense of self-importance.

Meanwhile, I strained my relationships, traveling 80% of the year. My stress levels were through the roof. I had not seen a friend in months, and I rarely exercised. In all my success at work, I was lonely and unhappy.

My search for happiness led me to build a case for more adventure travel. I eventually concluded that a career break would reduce my stress, boost creativity, amplify life satisfaction, and ultimately increase my productivity. I was unwilling to consider that this move could be a taboo career mistake. I compiled a convincing mountain of evidence and made an unwavering commitment to travel once more.

And so, more than a decade ago, my wife and I decided to embark upon a round-the-world (RTW) trip. Usually, when we tell the story, it goes something like this: “We sold everything that didn't fit in a small travel suitcase and hit the road.” Only, that is not how it happened.

We debated for years. Then we started saving money, albeit misaligned on why we were saving in the first place. We might have been saving for a down payment on a new home, to enroll in graduate school, or for retirement. Eventually, we sold our home and rented a single room in a small trailer park in Westminster, Colorado. We reduced our monthly costs and strained our marriage to the brink of divorce. Finally, we hit the road, but only after agreeing to evaluate whether we should stay married after our first month of travel.

In hindsight, we decided to travel out of optimistic overconfidence more than courage. We did the best we could, but our thinking and decision processes were flawed. In the end, I'm confident the RTW decision was the right one for us. However, the process we followed to make it was painful. It's fair to say we lacked the emotional skills to navigate the conflict we experienced during our decision. We faltered in thinking through or communicating basic financial concepts such as cash-based budgeting, sunk cost, and opportunity cost. I looked extensively for information that justified a decision to travel and entirely neglected any opposing views.

On the one hand, we learned that American culture sets a default path through life that many will follow. On the other hand, we also learned that there are several things you can do to improve choice architecture and decision processes. All these insights apply equally to your role as a cybersecurity leader and business decision-maker.

“I have heard CISOs frequently exclaim, they have enormous accountability and responsibility, but they lack the authority to get things done. It comes down to architecting the choices your business makes by blending perspectives enough to get the best outcome.”2

To improve your ability to integrate cybersecurity perspectives into business decisions, we will explore the scientific method, consider decision science, and identify strategies to strengthen choice architecture. Then, in the Application section of the chapter, we will apply these tools to real-world decisions that every CISO must make. Finally, in Part II – Communication and Education and Part III – Cybersecurity Leadership, we'll explore how cybersecurity teams can play a positive role in forming business culture and decisions.

Principle

Yogi Berra once said, “In theory, there is no difference between theory and practice. In practice, there is.” The quote is both funny and true. I mention it now because we're starting with a brief discussion of the scientific method. Rest assured, I am under no illusion: for most business managers, the scientific method is more theory than practice. I believe Eric Ries got it right in his book The Lean Startup. The key is small-batch thinking, where you use the scientific method to iterate through and refine your hypothesis. The point is not perfect science but expedited learning; in the modern lexicon, this is essentially “fail fast, fail often.” Once you have the basics down, you can execute the process in just a few minutes. Done right, you will find a balance between complete analysis and the application of your new learnings. Done wrong, you can find yourself making quick, irreversible decisions that carry a very high cost.

Scientific Method

According to professional decision-maker Ray Dalio, “most of the processes that go into everyday decision making are subconscious and more complex than is widely understood.” Here, we have intentionally set aside the subconscious and emotional components of decision-making; we'll address those elements under the heading of Decision Science below. Instead, this section presents the scientific method, a structured approach to learning.

To place the scientific method in the context of decision-making, consider Ray Dalio's perspective that “while there is no one best way to make decisions, there are some universal rules for good decision making. They start with recognizing: 1) the biggest threat to good decision making is harmful emotions, and 2) decision making is a two-step process (first learning, and then deciding).”3

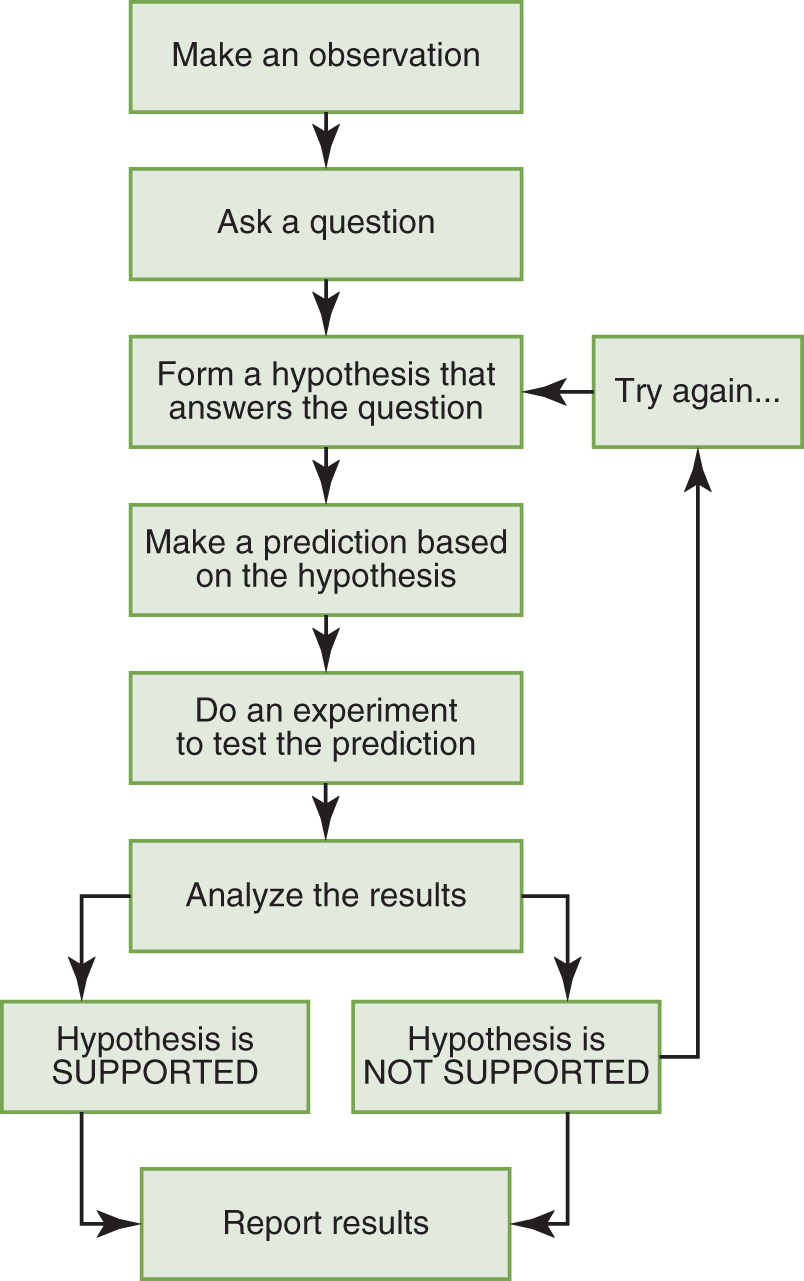

Indeed, the scientific method is “a process for experimentation used to explore observations and answer questions…The goal is to discover cause and effect relationships by asking questions, carefully gathering and examining the evidence, and combining the available information into a logical answer.”4 Figure 3.1 illustrates the scientific method5:

FIGURE 3.1 Flowchart of The Scientific Method

Source: Rice University / CC BY 4.0.

Business decisions rarely follow such a structured approach. Even resource-rich companies, flush with cash and surrounded by data, may skip the rigor required to establish and test a hypothesis. Further, confirmation bias all too often corrupts the analysis phase. As discussed in Chapter 1 – Financial Principles, technical, statistical, or business comprehension issues affect both the presenter and the audience.

Nevertheless, you can control your role in the decision process by keeping the scientific method in mind and applying discipline and diligence to an objective, structured learning methodology.

Decision Science

No discussion of decision science should exclude Nobel Prize–winning psychologist Daniel Kahneman. His research laid the foundation for behavioral economics and offered a new way of thinking about human error. At a minimum, we recommend reading his 2011 book, Thinking, Fast and Slow.

If you are not up for the full read, you are in luck! Conveniently, many findings from Kahneman and his research partner, Amos Tversky, have been summarized here: https://yourbias.is/.

Decision Processes

This section mirrors the structure of Decisive, a book authored by Chip and Dan Heath. In Decisive, the Heaths outline strategies to improve decision-making using their mnemonic WRAP, summarized below.

Building upon Kahneman's insights, their work reveals that despite thorough analysis, the quality of executive decisions is often lacking. More importantly, “when the researchers compared whether process or analysis was more important in producing good decisions (that increased revenues, profits, and market share), they found that ‘process mattered more than analysis – by a factor of six.'”6 To improve your decision quality, you need adequate data and robust analysis, but process is paramount: a robust process makes a world of difference.

A typical decision process proceeds in four steps, and Decisive explains that a villain afflicts each of them, as follows:

· “You encounter a choice. But narrow framing makes you miss options.

· You analyze your options. But the confirmation bias leads you to gather self-serving information.

· You make a choice. But short-term emotion will often tempt you to make the wrong one.

· Then you live with your choice. But you'll often be overconfident about how the future will unfold.”7

At this point, we have enumerated the primary decision-making stumbling blocks. As any tenured cybersecurity pro knows, the natural companion to a gap analysis is a remediation roadmap. Here are the questions we can ask to improve upon the decision-making process:

· “Widen Your Options. How can you expand your set of choices?

· Reality-Test Your Assumptions. How can you get outside your head and collect information that you can trust?

· Attain Distance Before Deciding. How can you overcome short-term emotions and conflicted feelings to make the best choice?

· Prepare to Be Wrong. How can we plan for an uncertain future so that we give our decisions the best chance to succeed?”8

Many of the challenges we face in cybersecurity programs stem from a failure of imagination, a lack of diligent research, or an unwillingness to acknowledge the human element. Individual behavior is ultimately an accumulation of choices. Naturally, as Kahneman points out, there is a difference between split-second judgment and deliberate thinking. Regardless, we must recognize and leverage both intuitive and intentional thinking patterns to our advantage.

Employ the tactics below to engage your cognitive self, confront your biases, and decide consciously. Doing so will dramatically improve the odds that you make wise decisions. Further, identifying when others fall victim to cognitive biases and nudging them in a positive direction will go a long way toward alleviating the common points of contention that often plague cybersecurity leaders.

WIDEN YOUR OPTIONS

Here are a few things you can do to avoid selecting from a narrow set of options:

· Blend Prevention and Promotion for a wiser decision. “Psychologists have identified two contrasting mindsets that affect our motivation and our receptiveness to new opportunities: a ‘prevention focus,' which orients us toward avoiding negative outcomes, and a ‘promotion focus,' which orients us toward pursuing positive outcomes.”9 No doubt, professional risk managers will lean towards preventative thinking. Still, it is essential to use both when considering your interaction with the business.

· Be wary of “whether or not” decisions. For example, don't limit yourself to deciding whether or not to allow the business to migrate data to the cloud. Some alternatives may carry less risk and investment, such as building your cloud expertise on low-risk workloads first. Further, research shows that choosing among two or more alternatives, rather than making a yes-or-no call on a single option, yields significantly stronger business outcomes.

· Avoid faux options. As a complement to avoiding whether-or-not decisions, watch for fake alternatives. Often a decision is framed as a choice between one good option and one poor alternative. Reject these imposter options. For example: “We can either implement DLP or completely lose control of all data in our data warehouse.”

· Remove options. Force yourself to take the obvious options off the table and consider what you would do without them. What would I do if forced to operate an entire security program with no additional hires? (Here, we eliminate hiring as an option.) Removing the obvious choices forces you to consider alternatives. Are there ways to recruit additional support from other executives in the business? Can you reframe responsibilities? Is there an option to outsource fractional components of your program? Could you hire unpaid interns, develop skills in existing staff, or stop performing an activity altogether?

· Consider Opportunity Cost. Opportunity cost is the value of the best alternative you give up by selecting the option at hand. Note that when a security initiative goes unfunded, more often than not, it is because the business preferred another use of the same funds. By choosing a platform technology, I may lock myself out of best-of-breed tooling. By hiring a security engineer, a business cannot hire another sales professional, make additional investments in operations support, or fund a new initiative.

· Explore multiple options in parallel, not in serial. The multi-track method avoids ego issues because no one's ego becomes tied to a single solution they worked to develop. You also learn the shape of the problem, and you gain a built-in fallback plan. For example, hire two firms to design your IAM program's Phase I architecture. Then observe where their approaches converge and where they differ, and select the best ideas. I detest Request for Proposal (RFP) processes in most cases, but they can serve as a way to multi-track a solution. One caution: a rigid process may limit opportunities for firms to differentiate.

· Push for “this AND that” rather than “this or that.” More often than you might think, you can find a third alternative that satisfies both sides of an argument. The key is often to clarify what you want and separate it from the strategy chosen to achieve it. “We need two more AppSec pros and a Static Application Security Testing (SAST) tool” is really about ensuring secure code, and secure code may come by other means. For example, perhaps the DevOps team integrates four mutually approved lightweight open-source tools into the DevOps toolchain and agrees to use an inherently more secure development framework rather than adopting a SAST tool. Additionally, they commit to remediating, within a defined service level objective, findings from Dynamic Application Security Testing (DAST) performed on a subset of sensitive code modules. If defect density drops but you don't get the tooling or staff you wanted – does it matter? I say it does not.

· Find someone who has already solved your problem. If your company is large, someone else may have already solved your issue. Look for internal bright spots. Perhaps the software development group resolves vulnerabilities much more efficiently than your infrastructure team. Why is that? Alternatively, explore solutions in other businesses through industry groups, Information Sharing and Analysis Centers (ISACs), public Slack channels, meetup groups, and similar forums. Still at a loss? Consider exploring adjacent disciplines (legal, human resources, etc.). Others in those fields have already navigated decisions in the face of uncertainty, business alignment, and executive accountability. Design flaws and user adoption are no strangers to our counterparts in the IT organization. Marketing our capabilities and services to our organizations can benefit from engaging – no surprise here – our Marketing teams. Another example is the literature on leadership during World War II, where I found a surprising number of instructive lessons about leadership and communication. Dwight D. Eisenhower made extensive use of press conferences, and Winston Churchill wielded words to achieve a great many things. The lesson: frequent communication and precise, simple language make a powerful combination.
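To make the opportunity cost idea from the list above concrete, here is a minimal Python sketch. It assumes you can put a rough annual dollar value on each mutually exclusive use of the same budget; the option names and figures are hypothetical placeholders, not benchmarks. Opportunity cost is computed as the value of the best alternative forgone, so only the highest-value option shows a positive net.

```python
"""Opportunity cost of a single budget decision (all figures hypothetical)."""

# Hypothetical annual value estimates, in dollars, for mutually exclusive
# uses of the same headcount budget. Replace with your own estimates.
alternatives = {
    "security engineer": 400_000,   # e.g., risk-adjusted loss reduction
    "sales professional": 350_000,  # e.g., expected incremental margin
    "operations support": 250_000,  # e.g., expected efficiency savings
}


def opportunity_cost(choice: str, options: dict[str, float]) -> float:
    """Return the value of the best alternative forgone by picking `choice`."""
    forgone = [value for name, value in options.items() if name != choice]
    return max(forgone) if forgone else 0.0


for name, value in alternatives.items():
    cost = opportunity_cost(name, alternatives)
    # Net value = option's value minus the best option it displaces.
    print(f"{name}: value {value:,} | opportunity cost {cost:,} | "
          f"net {value - cost:+,}")
```

With these placeholder numbers, hiring the security engineer nets +50,000, while either alternative shows a negative net because it displaces the engineer. The point is less the arithmetic than forcing the forgone option into the conversation.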

REALITY-TEST YOUR ASSUMPTIONS

Once you have explored a broad set of options, you need to choose among the alternatives. Before you do, it's essential to recognize your tendency toward confirmation bias and challenge your thinking. After all, “open-mindedness is motivated by the genuine worry that you might not be seeing your choices optimally. It is the ability to effectively explore different points of view and different possibilities without letting your ego or your blind spots get in your way. It requires you to replace your attachment to always being right with the joy of learning what's true.”10 One way to ensure you are pursuing the truth is to intentionally spark constructive disagreement. Here are a few other ideas:

· Gather more trustworthy information with disconfirming questions. When we implemented our Unified Endpoint Management platform, I asked the salesman plenty of questions about the platform's ability to restrict specific executables, report upon patch levels, perform zero-touch hardware deployments, and validate USB blocks. Of course, the answer was ‘Yes, we can do that.' I took their word because they were in the top right quadrant in analyst reports. But I should have asked better questions like “Can this be managed with a team of one? If so, can you put me in touch with a reference customer?” or “What problems or challenges during implementation do customers typically encounter?” Research shows that specific penetrating questions are much more likely to receive honest answers. However, if there is a power differential or an apparent discrepancy in levels of expertise, you need to tread lightly. As an executive team member, you likely wield a degree of authority. In cases where there is an apparent power dynamic, you are better off asking open-ended questions.

· Consider the opposite.11 Once you have drawn a conclusion, stop and ask yourself: What would have to be true to invalidate this decision? This technique aims to shift your thinking from a defensive mindset to one of problem-solving. It “is particularly useful in organizations where dissent is unwelcome, where people who challenge the prevailing ideas are accused of failing to be ‘team players.'”12 For example, I took the stance that a particular tooling suite was not optimal to protect our organization. For many business and nontechnical reasons, this position was contentious. Before we were willing to replace the tooling, we collectively needed to convince ourselves that we were not the cause of the challenges we had encountered. So, rather than immediately replacing the platform, I championed an excellence program. After we made a diligent effort to make the partnership work, the entire business reluctantly decided to find an alternative.

· Construct small experiments. You can construct micro experiments to test your assumptions. Rapidly improve your hypothesis by applying the scientific method to the smallest scope available. Don't let waterfall thinking or perfectionism be your enemy. Consider Kickstarter, a site that allows creators to validate the value hypothesis of their creative work. In many cases, a complete product doesn't exist yet; instead, Kickstarter requires projects to show backers a prototype of what they're making. If a community of users values the new creation, the project will likely get funded.13

ATTAIN DISTANCE BEFORE DECIDING

Now that you have broadly explored alternatives and embraced the joy of learning what is true, it's essential to quiet short-term emotion, gain some perspective, and validate alignment with core priorities. Here are a few techniques to do just that:

· 10/10/10 is a strategy developed by Suzy Welch to keep short-term emotions in check. It's simple: ask yourself, “How will this decision impact me in ten minutes, ten months, and ten years?” Adding this temporal component to decision-making can temper the outsized influence of short-term emotion.

· Advise Your Best Friend. A quick shift in perspective can work wonders. Ask yourself what you would tell your best friend to do in your situation. By taking a moment to gain an observer's perspective, you may simplify your decision. Attaining distance can help you see the core issues and clear the fog of loss aversion and the mere-exposure effect, which commonly lead to status quo bias. In 2014, I interviewed at an insurance company in Nevada that still hadn't permitted wireless networks in its corporate office. Suffice it to say, status quo bias can play an influential role both in adopting new cybersecurity controls and in shedding them.

· Honor Your Core Priorities. Clarifying core priorities can help you navigate challenging decisions in a leadership role. Are you committed to continuous improvement or fortified defenses? Does your business have a culture that favors failing forward, or is the risk appetite too small for that type of experimentation? Is a small step in the right direction enough for today, knowing there's more to come, or are you fighting for complete control? For example, is your business prepared to accept the risks of operating applications on Kubernetes clusters and Docker container images? Should your business's digital transformation slow down while the cybersecurity team learns these technologies and identifies, adopts, and operationalizes security products for them? In most cases, you can take these questions back to the leaders pressing to adopt new technology and frame their decisions in the context of the company's risk appetite and priorities. In Chapter 12 – Negotiations, we'll talk about adapting site reliability engineering (SRE) error budgets to security paradigms and leveraging calibrated “How” questions to recruit others into problem-solving.

PREPARE TO BE WRONG

Preparing to be wrong by considering a range of outcomes can help you explore a broader set of possibilities, overcome your overconfidence, and stack the deck in your favor. Several specific techniques can help you anticipate and respond to both good and bad outcomes, and recognize when to reconsider a previous decision:

· Limit Test. My experience becoming a calibrated subject matter expert, following the quantitative risk management practices promoted by Hubbard Decision Research (https://hubbardresearch.com/) and the FAIR Institute (https://www.fairinstitute.org/), confirms the research showing that overconfidence is the norm. Considering a range of outcomes that includes both the worst case and the best case can significantly improve forecasting accuracy. This pattern expands our thinking and places our best guess on a continuum of possibilities. Examining the outer bounds helps us counter anchoring, recency bias, framing, and overconfidence. Naturally, considering upside and downside risks is an integral part of a cybersecurity leader's job.

· Premortem. Prospective hindsight means imagining that an outcome has already occurred and then explaining why it happened. For example, it's two years later, and your attempt to implement privileged access management has failed. Why did it fail? Asking this question in advance offers a different way to manage a project's risks. Had we done so in our attempt to roll out a password management tool, we would likely have paid much closer attention to user adoption and culture within our engineering teams.

· Preparade. Naturally, you also need to consider the opposite of a failed program or project. What if your efforts are wildly successful? We'll see more on this in the Application section of the chapter.

· Set a Trigger. At some point, grit becomes stupidity. Persistence is certainly a valued trait in leadership, but don't let your commitment to a decision prevent you from confronting the brutal facts. An objective trigger to reconsider a decision forces a level of awareness. When you first consider adding Identity Governance and Administration (IGA), you might learn that the return on investment (ROI) only makes sense with 500 or more users. Set a trigger to reconsider the IGA investment once your user population reaches that threshold. Ideally, establish the threshold in a broader governance forum such as the Risk Committee, Audit Committee, or Executive Leadership Team. That way, you are sure to plan for the investment before your identity challenges become too complicated. Meanwhile, dust off your Python and PowerShell skills and prepare for some intense spreadsheet manipulation!
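The Limit Test above rests on calibration: if your 90% confidence intervals are well calibrated, roughly 90% of them should contain the true value. The Python sketch below computes that hit rate, assuming a simple list of interval estimates whose answers are known afterward. The questions and bounds are illustrative only, not taken from Hubbard Decision Research or FAIR Institute training materials, and with just five questions the result is noisy; a real calibration exercise would use many more.

```python
"""Check whether stated 90% confidence intervals are well calibrated."""

from dataclasses import dataclass


@dataclass
class IntervalEstimate:
    question: str
    low: float     # lower bound of the stated 90% interval
    high: float    # upper bound of the stated 90% interval
    actual: float  # true value, revealed after the estimate is made


def hit_rate(estimates: list[IntervalEstimate]) -> float:
    """Fraction of estimates whose interval contains the actual value."""
    if not estimates:
        raise ValueError("need at least one estimate")
    hits = sum(1 for e in estimates if e.low <= e.actual <= e.high)
    return hits / len(estimates)


# Illustrative questions with one person's hypothetical 90% intervals.
estimates = [
    IntervalEstimate("Boiling point of water at sea level (F)", 200, 220, 212),
    IntervalEstimate("Year the first iPhone was released", 2005, 2009, 2007),
    IntervalEstimate("Bones in the adult human body", 180, 200, 206),
    IntervalEstimate("Speed of light (km/s)", 250_000, 290_000, 299_792),
    IntervalEstimate("Year of U.S. Declaration of Independence", 1770, 1780, 1776),
]

rate = hit_rate(estimates)
print(f"Hit rate: {rate:.0%} (target for 90% intervals: 90%)")
if rate < 0.9:
    # Too few hits means the intervals were too narrow: overconfidence.
    print("Intervals too narrow: widen bounds toward the worst and best cases.")
```

Here three of five intervals contain the answer, a 60% hit rate against a 90% target, which is the overconfidence pattern the Limit Test is meant to expose.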

Choice Architecture

The section above offers techniques to mitigate the well-researched effects of loss aversion, status quo bias, confirmation bias, and other biases in decision-making. Equipped with these strategies and an awareness of decision-making pitfalls, we turn our attention to choice architecture. This section leans heavily on Nudge by Richard H. Thaler and Cass R. Sunstein. In their words, “A choice architect has the responsibility for organizing the context in which people make decisions. Further, a nudge, as we will use the term, is any aspect of choice architecture that alters people's behavior in a predictable way without forbidding any options or significantly changing their economic incentives.”14

My experience implementing cybersecurity across several organizations bears out the following. Most people want to do the right thing. Yet they might forget or avoid implementing security if it is too complicated or if they feel pressed for time. When given adequate encouragement and support, most people will gladly comply with security requirements. So, when are nudges helpful, and what are the most beneficial ways for CISOs to structure choices and nudge their organizations?

According to Thaler and Sunstein, nudges can be most helpful when making a decision that offers benefits now and costs later. Often people (and organizations) struggle with self-control and accountability. As the time between a choice and its consequences increases, so does the accountability gap. Further, when the frequency of action is low, the complexity of a decision is high, or the feedback offered along the way is limited, a nudge might help. Finally, look to nudge when the relationship between a choice and the experience that follows is ambiguous.

To understand how this all applies to the CISO, let's use the mnemonic tool NUDGES in a cybersecurity context:

· iNcentives

· Understand mappings

· Defaults

· Give feedback

· Expect error

· Structure of complex choices

INCENTIVES

To explore incentives in a structured way, let's consider the Six Sources of Influence model published by VitalSmarts. Motivation and ability form the foundation of the model. The model subdivides these domains into three distinct categories: personal, social, and structural, which in turn reflect separate and highly developed bodies of literature: psychology, sociology, and organizational theory.

“The first two domains, Personal Motivation and Ability, relate to sources of influence within an individual (motives and abilities) that determine their behavioral choices. The next two, Social Motivation and Ability, relate to how other people affect an individual's choices. The final two, Structural Motivation and Ability, encompass the role of non-human factors, such as compensation systems, space, and technology.”15

Figure 3.2 presents the entire model:

The authors of Influencer found in their research that influencers who combined strategies from all Six Sources of Influence were ten times more likely to succeed. The point here is that incentives are a necessary but insufficient source of influence for changing behavior. Next, we can explore personal, social, and structural motivations from a choice architect's perspective.

FIGURE 3.2 The Six Sources of Influence

Source: Grenny, J., Maxfield, D., and Shimberg, A., How to 10X Your Influence. Used with permission.

PERSONAL INCENTIVES – MAKE THE UNDESIRABLE DESIRABLE

In my estimation, the personal category of the motivation domain contains several components. The first is how much you enjoy the task. Making all aspects of security enjoyable seems unlikely. A close alternative, however, is to make it easy to perform. The people in your organization will opt for the path of least resistance. Simple, intuitive processes are best. An example of this approach is the emerging trend toward passwordless login methods.

Additional strategies the VitalSmarts framework surfaces include:

1. Allow for choice – replace judgment with empathy and lectures with questions. Ask thought-provoking questions and listen, allowing others to discover on their own what they must do.

2. Create direct experiences – this can remove the fear of the unknown, reinforce understanding, and improve compassion.

3. Tell meaningful stories – help people understand the consequences of their actions vicariously.

4. Make it a game – identify a scoreboard that reflects the individual's direct actions and make the score visible.

SOCIAL INCENTIVES – HARNESS PEER PRESSURE

There are several things that cybersecurity leaders can do to overdetermine success. One of those things is to harness the power of peer pressure. Some examples:

· Minor tweaks in language can have an outsized impact on your success. For example, rather than telling people what to do, describe what others are doing. Psychologists call this approach leveraging descriptive social norms. To get users to complete annual security training and secure code training, we used to send weekly reminder emails. Now, we send fewer reminders, phrased like this: “Please join the majority (78%) of your peers who have already completed the secure code training.” We also publish the metrics in aggregate and share them with multiple department managers at once to encourage friendly competition.

· Offer guidance on scheduling and include visuals or links to prime people with cues and make their choice easier. In our reminders, we've started adding content such as this: Don't wait till the last minute. Schedule 1 hour in your calendar this week to be sure you have completed the training well before the 10/31 completion date. Click here to get started.

· “The ‘mere-measurement effect' refers to the finding that when people are asked what they intend to do, they become more likely to act in accordance with their answers. This finding can be found in many contexts. If people are asked whether they intend to eat certain foods, to diet, or to exercise, their answers to the questions will affect their behavior.”16 This year, we added a few free-text answer boxes in the Security Awareness quiz to ask users how they intend to support the security program in the coming year. We hope to prompt our colleagues to describe when and how they plan to do it and expect this will further establish a personal commitment to the security initiatives this year.

· Recruiting thought leaders as security champions can be an excellent method for changing culture and reinforcing the desired behavior. The right security champions align social incentives to encourage positive norms in the workplace.

· Finally, if you want to dig deeper, don't miss Dr. Robert Cialdini's Principles of Persuasion (https://www.influenceatwork.com/principles-of-persuasion/).
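The reminder wording and department-level sharing described above can be automated from a training roster. The sketch below is illustrative only: the `Employee` record, function names, and roster format are hypothetical and not taken from any particular training platform. It only claims a "majority" when one exists, because advertising a minority behavior may normalize non-compliance.

```python
from dataclasses import dataclass


@dataclass
class Employee:
    name: str
    department: str
    completed: bool  # True once the training is finished


def completion_rate(employees):
    """Fraction of employees who have completed the training (0.0 for an empty list)."""
    if not employees:
        return 0.0
    return sum(e.completed for e in employees) / len(employees)


def reminder_message(employees, course="secure code training"):
    """Describe what peers are doing; only claim a majority when one exists."""
    pct = round(completion_rate(employees) * 100)
    if pct > 50:
        return (f"Please join the majority ({pct}%) of your peers "
                f"who have already completed the {course}.")
    return f"{pct}% of your peers have already completed the {course}."


def department_leaderboard(employees):
    """Completion rate per department, highest first, for sharing with managers."""
    by_department = {}
    for e in employees:
        by_department.setdefault(e.department, []).append(e)
    rates = {dept: completion_rate(members) for dept, members in by_department.items()}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)
```

Sharing the whole leaderboard with several managers at once, rather than each department's number in isolation, is what creates the friendly competition.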

STRUCTURAL INCENTIVES – DESIGN REWARDS AND DEMAND ACCOUNTABILITY

Today, most businesses use incentive-based pay and management-by-objectives approaches. If you examine the more traditional literature on managing human capital, you might conclude that incentives play a huge role in corporate performance or successful transformation.

However, as outlined in Influencer, “in a well-balanced change effort, rewards come third. Influencers first ensure that vital behaviors connect to intrinsic satisfaction. Next, they line up social support. They double-check both of these areas before they finally choose extrinsic rewards to motivate behavior.” Expanding upon this, the authors explain it is best not to “use incentives to compensate for your failure to engage personal and social motivation.” Instead, “ensure that the rewards come soon, are gratifying, and are clearly tied to vital behaviors. When you do so, even small rewards can be used to help people overcome some of the most profound and persistent problems.”17

Indeed, new management theories, such as those presented by Daniel Pink in his book Drive, bring purely economic incentives into question. Pink postulates that it is better to motivate teams intrinsically using three key factors: Autonomy, Mastery, and Purpose.

UNDERSTAND MAPPINGS

To help people make better decisions, we must make information on their options more comprehensible. That means we need to create a tighter relationship between choice and experience where it is ambiguous. The Risk-Adjusted Value Model described in Chapter 2 – Business Strategy Tools serves this purpose.

Rather than describing the technical risk of failing to patch in a timely manner, you can relate patch failures to the probability of an outage, which leads to lost revenue on an e-commerce website. Instead of describing the failure of a SOC 2 control, you can explain the probable impact on revenue. In that way, executives can decide whether they would rather take a 30% risk of lost revenue resulting from unpatched systems or an 80% chance that the company will attract three fewer clients because of adverse audit findings.

This decision is much easier to make than weighing whether to add staff so that our production website carries 1,000 critical vulnerabilities instead of 2,000.
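To make the comparison concrete, each option can be reduced to an expected loss: probability multiplied by business impact. This is a minimal sketch, not the Risk-Adjusted Value Model from Chapter 2; the 30% and 80% probabilities come from the example above, while the dollar figures are hypothetical placeholders.

```python
def expected_loss(probability, impact):
    """Probability-weighted loss, the simplest risk-adjusted comparison."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return probability * impact


# Hypothetical dollar figures for illustration only; substitute your own estimates.
OUTAGE_REVENUE_LOSS = 500_000  # revenue lost if unpatched systems cause an outage
CLIENT_ANNUAL_VALUE = 120_000  # average annual revenue per client

options = {
    "Unpatched systems cause an outage": expected_loss(0.30, OUTAGE_REVENUE_LOSS),
    "Adverse audit findings cost three clients": expected_loss(0.80, 3 * CLIENT_ANNUAL_VALUE),
}

# Present the options to executives, largest expected loss first.
for name, loss in sorted(options.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: expected loss ${loss:,.0f}")
```

Under these assumed figures, the audit findings ($288,000) outweigh the patching risk ($150,000). Change the inputs and the ranking may flip, which is exactly the conversation you want executives to have.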

DEFAULTS

Ideally, we set the best possible defaults. In the world of technology, however, the question is who determines the definition of “best.” For years, we've accommodated insecure defaults from operating system vendors who felt that easy and rapid adoption of their products was the highest priority, a stark contrast to the security viewpoint. The good news is that the future looks bright. For instance, we can now easily default to Center for Internet Security (CIS – https://www.cisecurity.org/) hardened images, available in both the AWS Marketplace and the Microsoft Azure Marketplace.

I know what you are thinking. We still have to address the cloud management plane, containers, APIs, and other attack surfaces, but hey – cheap, available, hardened images are a start! You might also rightly object that we have no reason to believe all developers will immediately decide, on their own, to use these readily available images. And you are right. That's where forcing functions come in!

No one is immune to post-completion errors. Have you ever sent a beautifully crafted email only to realize you forgot the attachment, or left your gas cap at the station after filling your tank? These common mistakes result from failing to complete a secondary task after finishing the primary one. A forcing function mitigates this type of human error. Many email platforms now prompt the user when an expected attachment is missing, and car manufacturers have tethered the gas cap to the car. As choice architects, we can implement forcing functions too.

A large part of digital transformation is automation. As organizations implement more robust continuous integration/continuous deployment (CI/CD) pipelines, dozens of opportunities arise to place forcing functions that prevent security control omissions. An alternative to using CIS hardened images is to run a lightweight hardening script, such as the DevSec Hardening Framework (https://github.com/dev-sec), to prevent deployment of unhardened systems.
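A pipeline forcing function can be as simple as a step that fails the build when a deployment requests an image that is not on an approved hardened list. The sketch below assumes a hypothetical allow-list of image IDs (the IDs shown are placeholders) and a hypothetical `gate` function; it is not part of the CIS or DevSec tooling.

```python
import sys

# Hypothetical allow-list of approved hardened base images (for example, CIS hardened
# images your team has vetted). These IDs are placeholders, not real image IDs.
APPROVED_IMAGES = {"ami-cis-hardened-example-1", "ami-cis-hardened-example-2"}


def unapproved_images(requested_images):
    """Return the requested images that are not on the hardened allow-list."""
    return sorted(set(requested_images) - APPROVED_IMAGES)


def gate(requested_images):
    """Forcing function: return a nonzero exit code when any image is not hardened."""
    rejected = unapproved_images(requested_images)
    for image in rejected:
        print(f"BLOCKED: {image} is not an approved hardened image", file=sys.stderr)
    return 1 if rejected else 0
```

A pipeline step might call `sys.exit(gate(sys.argv[1:]))` with the image IDs from a deployment manifest. Most CI systems treat a nonzero exit code as a failed step, so the unhardened deployment simply does not happen, and the developer never has to remember the secondary task.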

Indeed, moving fast in a DevOps world is far more rewarding for developers and can produce healthier outcomes for security teams as well. Pipeline enhancement is just one example of how we can properly align incentives. When we cannot start with the best possible defaults, we can always lean on a forcing function.

GIVE FEEDBACK

One of the foundational assumptions in a strong security culture is that the cybersecurity team doesn't own the risk; the business does. Institutionalize this by providing feedback to business managers on how their teams are performing. Indicate clearly whether performance is good or bad, and give the team the information it needs to pinpoint where and how to improve.

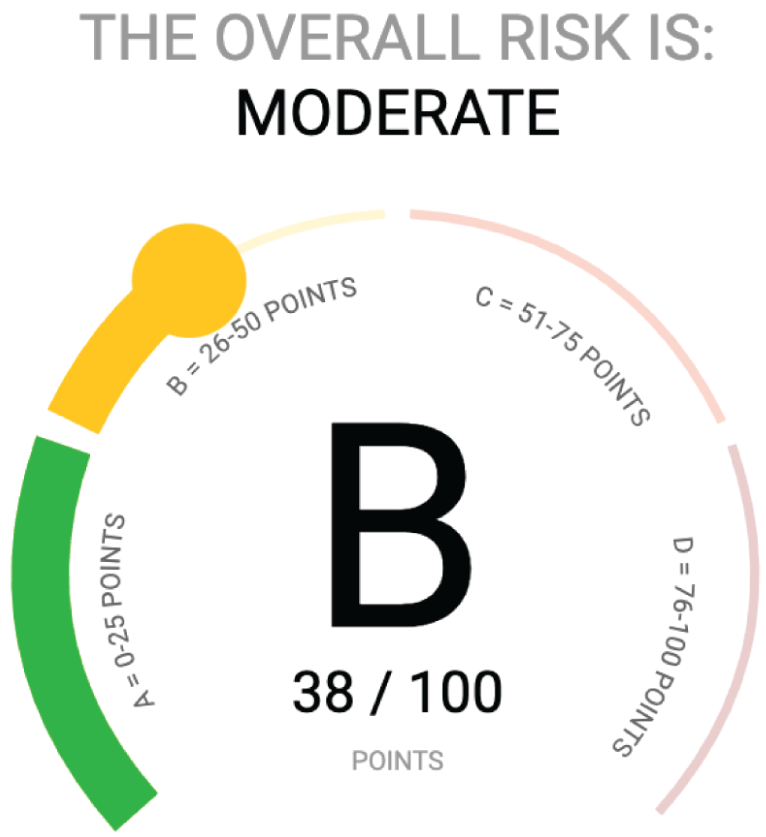

VULNERABILITY MANAGEMENT INDEX

Let's compare two alternative dashboards from NopSec that you might provide to a manager.18 In Figure 3.3, you get a clear indication of the overall picture, but you don't have any supporting details. In this case, if I am a product manager with a resource-constrained team, I might decide to delay remediation for any of the vulnerabilities detected until a future sprint. After all, my boss will be okay with a B or C in security if my end users are happy and benefiting from the timely release of new features.

FIGURE 3.3 Letter Grade

Source: Xu, L., “NopSec Unified VRM.” Used with permission.

FIGURE 3.4 Vulnerability Details

Source: Xu, L., “NopSec Unified VRM.” Used with permission.

In contrast, Figure 3.4 gives vulnerability counts but doesn't put them in the context of any expectations. Are 14 urgent vulnerabilities acceptable or not? How quickly do I need to remediate these? What happens if I don't?
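One way to answer those questions is to grade findings against remediation expectations rather than raw counts. The following sketch is a hypothetical index, not NopSec's method: the SLA days per severity and the grade thresholds are assumptions you would replace with your own policy.

```python
from dataclasses import dataclass

# Hypothetical remediation SLAs in days; set these from your own policy.
SLA_DAYS = {"urgent": 7, "high": 30, "medium": 90, "low": 180}


@dataclass
class Finding:
    severity: str   # one of the SLA_DAYS keys
    age_days: int   # days since the finding was first detected


def sla_report(findings):
    """Per-severity counts of open findings and how many are past their SLA."""
    report = {}
    for f in findings:
        entry = report.setdefault(f.severity, {"open": 0, "overdue": 0})
        entry["open"] += 1
        if f.age_days > SLA_DAYS[f.severity]:
            entry["overdue"] += 1
    return report


def letter_grade(findings):
    """Grade on the share of findings still within SLA (hypothetical thresholds)."""
    if not findings:
        return "A"
    within = sum(f.age_days <= SLA_DAYS[f.severity] for f in findings) / len(findings)
    for threshold, grade in ((0.95, "A"), (0.85, "B"), (0.70, "C"), (0.50, "D")):
        if within >= threshold:
            return grade
    return "F"
```

Pairing the grade with the per-severity report gives the manager both the summary and the supporting detail: the grade reflects expectations, and the overdue counts show exactly which findings are pulling it down.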

SECURITY CULTURE INDEX

Occasionally, unhealthy team subcultures create challenges for security programs. Resistant subcultures are common when new security leaders inherit contentious programs built under former leadership. If you find yourself in this position, you can rely on other forms of feedback to cut through the politics. For example, you could distribute a security culture metric to help managers understand the prevalence of security tool adoption in their teams and across the broader organization. Documenting the frequency of policy exceptions can also illuminate conflict lurking in subcultures.

Here's a sample of what you might include in such a measure, but don't be afraid to use your imagination and improve upon this list:

· Password Manager active user? { Yes / No }

· Phishing program contributor/active user? { Yes / No }

· Security Awareness completed on-time? { Yes / No }

· Secure Code Training completed on-time? { Yes / No }

· Anti-virus exception required/requested? { Yes / No }

· Encryption exception required? { Yes / No }

· Patching exception required? { Yes / No }

· Firewall custom rules required? { Yes / No }
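A minimal sketch of turning these yes/no answers into a comparable team score follows. It assumes equal weights for every indicator and hypothetical field names; a "Yes" counts as healthy for the adoption questions, while a "No" counts as healthy for the exception questions.

```python
# Hypothetical field names for the indicators above; adjust to your own survey.
POSITIVE = ("password_manager", "phishing_reporter", "awareness_on_time", "secure_code_on_time")
EXCEPTIONS = ("av_exception", "encryption_exception", "patching_exception", "firewall_custom_rules")


def person_score(answers):
    """Share of indicators in the healthy direction (equal weights assumed).

    answers: dict mapping every indicator name to True (Yes) or False (No).
    """
    healthy = sum(answers[k] for k in POSITIVE)          # Yes is healthy here
    healthy += sum(not answers[k] for k in EXCEPTIONS)   # No is healthy here
    return healthy / (len(POSITIVE) + len(EXCEPTIONS))


def team_index(team):
    """Average person score for a team on a 0-100 scale (0.0 for an empty team)."""
    if not team:
        return 0.0
    return 100 * sum(person_score(answers) for answers in team) / len(team)
```

Equal weighting keeps the index easy to explain to managers; if exceptions worry you more than missed training deadlines, weighting them more heavily is a reasonable refinement.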

EXPECT ERROR

It can help to empathize with those you hope to influence, because doing so makes it easier to establish stimulus-response compatibility: the signal people receive (the stimulus) should be consistent with the action you want them to take. In most cases, when stimulus-response compatibility problems arise, the root cause is a design failure. Endless security prompts for insecure websites or out-of-date certificates can numb users to alerts. Similarly, accusing people of intentionally undermining the security program will make them defensive and resistant to other security enhancements you propose.

Users have come to expect that firewalls are the problem, anti-virus programs slow down their machines, and all security is painful. Overcoming these biases is not easy and requires attention to human-centered design. Security teams often fail to empathize with colleagues, but a little empathy when designing controls yields the collaborative relationships and willing participants you need to shift culture.

STRUCTURE COMPLEX CHOICES

“As the choices become more numerous and vary on more dimensions, people are more likely to adopt simplifying strategies.” Therefore, “as alternatives become more numerous and more complex, choice architects have more to think about and more work to do, and are much more likely to influence choices (for better or for worse).”19

Helping people decompose a challenge or place realistic brackets around the range of possible outcomes can improve their decisions. The advantage of serving in this capacity is that you frame the analysis and set an anchor. Don't underestimate the impact of structuring complex choices.

That means you can remind people that a breach might have a wider range of potential business impact, or a higher probability of occurring, than they think. If you have run a calibration exercise, you can also remind people of their calibration training experience.
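One common way to place realistic brackets around outcomes is a Monte Carlo simulation driven by calibrated estimates. This sketch swaps in that technique as an illustration; it is not a method prescribed by the sources cited here. It assumes breach impact follows a lognormal distribution fitted to a calibrated 90% interval, and the 15% probability and dollar range are hypothetical.

```python
import math
import random

Z90 = 1.6448536269514722  # standard normal z-score bounding the central 90%


def lognormal_params(low, high):
    """Convert a calibrated 90% interval [low, high] into lognormal mu and sigma."""
    mu = (math.log(low) + math.log(high)) / 2
    sigma = (math.log(high) - math.log(low)) / (2 * Z90)
    return mu, sigma


def simulate_annual_loss(p_event, low, high, trials=100_000, seed=7):
    """Each trial: one breach with probability p_event, impact drawn from the lognormal."""
    rng = random.Random(seed)
    mu, sigma = lognormal_params(low, high)
    return [rng.lognormvariate(mu, sigma) if rng.random() < p_event else 0.0
            for _ in range(trials)]


def summarize(losses):
    """Mean plus 90th and 99th percentile annual loss across simulated trials."""
    ordered = sorted(losses)

    def pick(q):
        return ordered[min(len(ordered) - 1, int(q * len(ordered)))]

    return {"mean": sum(ordered) / len(ordered), "p90": pick(0.90), "p99": pick(0.99)}


# Hypothetical inputs: 15% annual breach probability; calibrated 90% interval of
# $200,000 to $5,000,000 for the impact if a breach occurs.
print(summarize(simulate_annual_loss(0.15, 200_000, 5_000_000)))
```

The 99th percentile is the point of the exercise: it shows stakeholders the tail that a single point estimate hides, and it anchors the discussion on the full range rather than the most comfortable number.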

Technical teams are often overconfident in their estimates of how long it takes to apply security after the fact, and those estimates are usually too short. Helping them anchor on more realistic, or even slightly conservative, estimates helps ensure they deliver secure computing without compromising their delivery timelines.

“In many domains, the evidence shows that, within reason, the more you ask for, the more you tend to get. Lawyers who sue cigarette companies often win astronomical amounts, in part because they have successfully induced juries to anchor on multimillion-dollar figures. Clever negotiators often get amazing deals for their clients by producing an opening offer that makes their adversary thrilled to pay half of the very high amount.”20

Application

Up to this point in the chapter, we have covered a lot of material. Let's quickly summarize and then begin to examine a few practical examples. So far, you have learned that:

· the Scientific Method provides a structured approach to learning,

· the WRAP framework improves decision-making processes,

· WRAP also enhances your ability to guide others as they navigate complex decisions,

· the Six Sources of Influence model offers a structured view into incentives and reveals other essential points of leverage in building influence strategies, and

· as a choice architect, you can use the NUDGES framework to encourage a security-friendly organizational culture.

Case Study 1 – Decision Science

In my experience, staffing strategy plays a crucial role in determining how cybersecurity teams interact with an organization. When first sizing up the staffing approach, you need to consider several factors. The most impactful considerations include the capabilities you intend to build (or continue to support), the scope in which you will apply those capabilities, and the distribution of the responsibilities between the cybersecurity team and the other parts of your organization.

Assume for a moment that you inherit a new team. Two existing technical cybersecurity engineers manage a program consisting primarily of vulnerability scanning and security logging. The existing management team would like a more structured and mature approach that will permit them to assure their new Board of Directors that cybersecurity risk is properly managed.

After a structured learning effort during the first three months of employment, you have analyzed the business using a framework of your choosing. Presumably, the framework you selected is relevant to your industry. You now have in your hands a maturity mapping and gap analysis. What you learn is that the most needed capabilities are nascent. What's more, many of the foundational processes that typically support information assurance practices are missing. For example, general vendor management and procurement procedures lack definition, change management is weak, business continuity is a paper tiger, and centralized inventory is nonexistent. Further, there is no Enterprise Risk Management function, internal audit, or privacy officer.

You suggest to your CFO that you will need significant investment to be successful. He advises you that the current three-year forecast doesn't contemplate significant staffing increases, especially for a non-revenue generating function. The expectation is that SG&A costs will decrease in the next two years.

What might you do in this scenario? Stop for a moment, and attempt to answer the question.

Let's apply the WRAP framework and see where we end up.

First, we are going to explore the options available by using some techniques to Widen the Options.

You might consider whether or not you should remain with the company. You may have even told yourself that you'd never end up in that scenario because you are careful to ask specific questions in the interview process. Admittedly, “abandon ship” might be a first emotional response, but we hope you'll persist. It is not unusual for a new security leader to stretch into their first leadership role. Assume you just moved across the country or find yourself in a down economy and have to make the best of the situation. Now what?

Don't let sham options dominate your thinking. Your alternatives are much broader than to quit or begrudgingly stay in a hopeless situation. Once you realize the expansive list of options available, I'm confident you will be intrinsically motivated to find a path to success.

You can start by exploring a combination of prevention and promotion thinking. Is there a way that your efforts could save the business money and at the same time capture some of those savings to further fund your initiatives? I've used Information Governance processes to identify significant savings in legal hold and data backup expenditures in the past. On a separate occasion, I successfully framed a security remediation initiative in the name of tech debt reduction that resulted in dozens of decommissioned systems. The project had licensing, storage, operational, staffing, and security implications. Imagine the delight of my business as we realized the cost optimization benefits while we reduced our risk!

Imagining that your current options have vanished forces greater creativity. Earlier, we suggested finding ways to ensure the broader organization takes a more prominent role in fulfilling the security mission. While convincing other teams to take ownership of security outcomes is slower, the cultural shift yields more resilient business processes. Pause to consider the projects you can execute with existing staff that enable other managers and business executives to become the primary owners of security and compliance outcomes. One specific strategy is to draft and socialize a cybersecurity program charter. Explain the three lines of defense, and articulate who will own essential security engineering or operations activities.

Expand your business knowledge, take your concerns back to your CFO, and ask for help understanding the reasoning behind the rejection of your funding request. What alternatives is he evaluating? Why is cash better allocated elsewhere? With an improved understanding, perhaps you can improve cost performance or add business value in other ways. For example, you might offer to help implement cloud deployment guardrails that restrict instance types, explaining the positive impact this can have on cost engineering efforts performed by FinOps in a cloud environment.

Maybe you decide to define vendor management and procurement procedures, taking the opportunity to add a few security checks into the process. That way, you maintain awareness of purchases happening around the business and insert the security perspective needed to balance risk and reward. If intellectual property is a vital part of your enterprise value, perhaps you can help explain the need to ensure open-source licenses are carefully considered as your product teams develop software. An early investment in tooling to aid with open-source software governance makes sense to help you avoid surprises during M&A diligence later on. The last thing your CFO or CTO wants to discover is that code under an open-source license such as AGPL or GPL 3.0 was integrated into your intellectual property, requiring rework or potentially forcing you to release your code under the same license.21
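Commercial software composition analysis tools do this at scale, but the core check is simple enough to sketch. The example below assumes a hypothetical dependency inventory (package name mapped to an SPDX license identifier, or `None` when unknown) and a hypothetical review list; it does not parse compound SPDX expressions such as `MIT OR GPL-3.0-only`.

```python
# Hypothetical review policy keyed by SPDX license identifiers. Confirm the list
# with your legal counsel; real inventories often need SPDX expression parsing too.
REVIEW_REQUIRED = {
    "AGPL-3.0-only", "AGPL-3.0-or-later",
    "GPL-3.0-only", "GPL-3.0-or-later",
}


def flag_licenses(dependencies):
    """Return (package, license) pairs that need review before shipping.

    dependencies: dict of package name -> SPDX license id, or None if unknown.
    """
    flagged = []
    for package, license_id in sorted(dependencies.items()):
        if license_id is None:
            flagged.append((package, "UNKNOWN"))  # unknown licenses also need review
        elif license_id in REVIEW_REQUIRED:
            flagged.append((package, license_id))
    return flagged
```

Flagging unknown licenses alongside the copyleft ones matters in diligence: an undocumented license is often as much of a surprise as a restrictive one.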

The varied approaches discussed help you push for “this and that.” If none of this works, reach out to your network and seek out someone who has already solved this problem. You might discover the challenges you are facing are surprisingly common. Maybe a little empathy will help defuse your frustration and expand your perspective.

By now, we can assume you've decided to stay, and you've identified a couple of opportunities to align your program with business objectives. You've built some goodwill and enhanced relationships in the process of widening your options. You may even be pleasantly surprised by the commitment of resources other than cash that might be available to help your efforts.

In setting up your roadmap for the next year, you identify a set of projects you think will benefit the business most, and now you must Reality-test Your Assumptions before moving forward. To do so, you head out to actively gather information about your proposed roadmap by asking disconfirming questions. You discover that the engineering teams are not ready to support your aggressive plans because they thought you would reduce the amount of work they had to do, not increase it! They suggest you should anticipate making less progress given their staffing constraints.

Now it's your turn to consider their feedback carefully. Remember that someone has the unenviable responsibility of deciding to accept the risk of not doing what you suggest. It's your responsibility to facilitate an informed decision, and keep in mind that it might very well be in the shareholders' best interest to do less than you recommend! Security practitioners provide one point of view, but it's essential to place yourself in the decision-maker's position and consider other demands. Considering the opposite may be the most productive validation step so far in your journey.

You still feel confident that you should make progress, but perhaps you overestimated what you might accomplish in your first year on the job. Feedback from finance and engineering seems to confirm the constraints that lie ahead, and this cross-functional feedback leaves you frustrated and hopeless. You begin to question the motivations for hiring you in the first place. Are you there to make a difference or just to serve as a scapegoat?

It's time to attain distance before deciding on your next move. To put short-term emotion into perspective, you apply 10/10/10, asking how you will feel about the decision 10 minutes, 10 months, and 10 years from now. It becomes clear that quitting in dramatic style would probably make you feel vindicated in the moment. But 10 months from now, you might still be on the job market, and what will you say in interviews about your exceedingly short time with your employer? Omitting the role from your resume would certainly be unethical. You decide that in 10 years you will be better off having identified a way forward, despite the complications, than if you evacuate every time the going gets tough.

You've been in the workforce long enough to know that no matter what happens, it's not likely that your projections of the future are 100% accurate. To acknowledge this fact, you decide to boundary test the possible outcomes. You imagine a worst-case and best-case scenario. Worst case, you stay way too long, and you are miserable for it. Best case, you learn a lot about yourself, business practices, and your ability to connect with a deep and meaningful personal purpose in a more intimate way than you ever thought possible.

You set a trigger to reevaluate your situation in 12 months. In the end, it turns out you make more progress than you thought, and you are glad you took the time to WRAP your head around the decision.

Case Study 2 – Choice Architecture

As time goes on, it appears that attackers become increasingly efficient and lean more toward attacks such as phishing and credential theft.22 To help, many cybersecurity teams empower all employees to report phishing in more convenient and automated ways. Of course, this improves phishing defenses beyond what purely technological approaches can accomplish.

This section explores how security leaders can serve as choice architects to defend against phishing, one of the most prevalent attack patterns. To do so, we will employ the NUDGES framework.

iNcentives

Attackers know that sending unsolicited emails with clickbait to our end users is an effective way to steal credentials. Indeed, data breaches often stem from phishing. In this case, we can identify the vital behavior (clicking on links or opening attachments from unsolicited senders) and measure it using a simulated phishing program. The following sections apply the Six Sources of Influence model to the challenge of behavioral change in support of phishing defense, as summarized in Table 3.1.

PERSONAL

Before phishing reporter tools were broadly available, it was challenging for users to report phishing attempts. Sending the necessary information and including key header and attachment artifacts is not intuitive. So, the first step in driving a successful phishing response is to make it easy to report suspected phishing.

Beyond enabling people with tools, we must consider how to make phishing defense more desirable. We can provide opportunities for direct experience by sending simulation emails. People receive additional training when they fail a phishing test, and they experience all the emotions that accompany clicking an untrusted link. Perhaps they feel fear or shame, which is not the point. The point is that they must confront the fact that, as individuals, we are all vulnerable to phishing.

With your organization's support, you can take the direct experience one step further by including social engineering and phishing as part of penetration testing. As a leader, I have called for unrestricted “gloves-off” testing. I have also made the timing of the testing blind to our security organization as much as possible. No target is off-limits, not even our CEO. When phishing is successful, pen testers are permitted to pivot and attack further. Some employees benefit from the experience of having their email accounts compromised. They get to observe how clever the attack patterns in the wild appear. They learn first-hand how attackers send modified versions of their own office documents embedding malicious macros to attack their colleagues. For those who don't experience the attack directly, we've invited affected team members to tell their story during our Annual Security Awareness Training, extending a vicarious experience to a broader audience in our organization.

TABLE 3.1 The Six Sources of Influence Applied to Phishing Defense

| | Motivation | Ability |
|---|---|---|
| Personal | Direct Experience & Gamification | Phishing Reporter Tool |
| Social | Reset Norms – Un-discussible becomes Discussible | Social Accountability |
| Structural | Gift Certificates | n/a |

Many modern phishing simulation tools can show who in the company was the first to report the phish. In my current role, we've gamified the reporting of a periodic phishing simulation email: the user who reports the phish in the least amount of time wins a small reward. These days, most simulations get reported in less than 20 seconds, and the fastest reporters enjoy the bragging rights.
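If your simulation tool lets you export report timestamps, a leaderboard takes only a few lines. This sketch assumes a hypothetical export format (user mapped to the time they reported) and assumes every recipient received the simulation at the same moment; real delivery times can vary by mailbox.

```python
from datetime import datetime


def fastest_reporters(sent_at: datetime, reports: dict, top: int = 3) -> list:
    """Rank users by seconds from simulation delivery to their report.

    sent_at: datetime the simulation was delivered (assumed identical for everyone).
    reports: dict of user -> datetime the user reported the email.
    """
    elapsed = {
        user: (reported_at - sent_at).total_seconds()
        for user, reported_at in reports.items()
        if reported_at >= sent_at  # ignore clock-skewed or malformed timestamps
    }
    # Sort by elapsed time, breaking ties alphabetically so the result is deterministic.
    return sorted(elapsed.items(), key=lambda item: (item[1], item[0]))[:top]
```

Posting the top three, rather than only the winner, gives more people a shot at bragging rights each round.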

SOCIAL

Embedding the program into our social fabric has dramatically improved performance. One of the first phishing simulations we performed was entirely believable. We only leveraged information readily available on the internet. We even included the photograph of one of our employees and invited the company to his Halloween party. Over 30% of the company clicked the link. I was horrified but later realized that the very personal nature and high failure rate inspired the transition of phishing defense from an undiscussed topic to a very openly discussed issue.

During the idle chat before one executive session, a key executive described how the fake spa invite from “Your Special Admirer” had come just a few days before his wedding anniversary. He clicked, expecting a cool gift from his spouse only to find out that it was a security training exercise. As an opinion leader in the company, he vowed that would be the first and only time the InfoSec department would “get him” with the phishing simulation.

This open conversation and commitment to do better have proved valuable across his team and the broader organization. In my experience, most sales organizations perform poorly in phishing simulations, but our entire Go-To-Market team is often the first to spot and report a phish and frequently has a very low click rate.

STRUCTURAL

A year or so after starting our program, confident that we had connected vital behaviors to intrinsic satisfaction, we augmented our practices to include awarding the fastest phishing reporter each month with a $25 Amazon Gift Card. People love it!

Understand Mappings

The lack of a phishing program dramatically increases the probability that your organization will experience a data breach. However, describing attack probabilities to our CFO isn't what secured investment in this new capability.

Holistically, we needed to improve spam and malware detection in our email systems. We also required staff and tooling to enhance our phishing response. To obtain funding, I asked, “How many deals are we willing to lose, or how many customers are we willing to churn, as a result of failing to manage phishing risk?” In that way, the decision wasn't about reducing the number of phishing emails, the safety of links and attachments in email, or encouraging widespread organizational behavior change. We simply focused on explaining how this new capability would protect critical value creation activities in our business. The added angle of loss aversion resulting from the precise format of the question was no accident.

Defaults

Of course, we equip all new employees with pre-installed software, including the phishing reporter. We have also taken up the practice of actively inviting all new employees to a Slack channel where we answer cybersecurity policy questions and provide other updates. More than 75% of the organization remains in the Slack channel, and a large number of team members engage in answering questions about the policy, program capabilities, and best practices. The engagement takes workload off my team and helps institutionalize the knowledge of our organization. Before we had the phishing reporter tools installed and configured, we encouraged people to report suspected phishing in the Slack channel.

Give Feedback

Today, we publish click rates and other vital behavior measures to the entire organization every month. To continue to harness the power of social influence, we are now exploring additional means to encourage accountability, including publishing reporter metrics by team and issuing a challenge to those teams with lower security culture engagement. Simultaneously, we are contemplating aggregate click reporting to elevate awareness of poor-performing teams with higher click rates or repeat offenders.
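Publishing metrics by team requires little more than grouping simulation results. The sketch below assumes a hypothetical export of one `(team, user, clicked, reported)` tuple per simulation email delivered; the repeat-offender threshold of two clicks is likewise an assumption.

```python
from collections import defaultdict


def team_metrics(results):
    """Click and report rates per team.

    results: list of (team, user, clicked, reported) tuples, one per simulation email
    delivered, exported from your phishing simulation tool (hypothetical format).
    """
    totals = defaultdict(lambda: {"sent": 0, "clicked": 0, "reported": 0})
    for team, _user, clicked, reported in results:
        counts = totals[team]
        counts["sent"] += 1
        counts["clicked"] += int(clicked)
        counts["reported"] += int(reported)
    return {
        team: {"click_rate": c["clicked"] / c["sent"], "report_rate": c["reported"] / c["sent"]}
        for team, c in totals.items()
    }


def repeat_clickers(results, min_clicks=2):
    """Users who clicked at least min_clicks simulations (candidates for extra coaching)."""
    clicks = defaultdict(int)
    for _team, user, clicked, _reported in results:
        if clicked:
            clicks[user] += 1
    return sorted(user for user, n in clicks.items() if n >= min_clicks)
```

Reporting both rates side by side matters: a team with a low click rate but an equally low report rate is avoiding phish without helping the organization detect them.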

Expect Error

As we implemented our simulated phishing program, we delayed deploying an easy method to report phishing. When we finally released the reporting button, we had cultivated a skeptical, click-wary culture eager to ensure the attachments and links they opened were safe. As a result, we experienced an overwhelming volume of reported emails. Over time, click rates fell, but reporting volume was so high that we were forced to implement automation and supplement our team with outsourcing.

We learned that if we didn't respond to reported phishing emails, our user community disengaged. As we built the capability to respond to every reported email, however, engagement rose sharply.
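Responding to every report is only sustainable with automation that closes the easy cases. The sketch below is a hypothetical triage rule, not the Cofense workflow: it groups reports by a normalized sender-and-subject fingerprint, replies instantly to known simulations and already-reviewed messages, and escalates everything else. Real platforms typically fingerprint on richer indicators such as URLs and attachment hashes.

```python
import hashlib


def fingerprint(sender, subject):
    """Normalize sender and subject so duplicate reports of one message group together."""
    key = f"{sender.strip().lower()}|{subject.strip().lower()}"
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


def triage(report, simulation_fingerprints, verdicts):
    """Return (action, reply) for one reported email.

    simulation_fingerprints: fingerprints of active phishing simulations.
    verdicts: fingerprint -> analyst verdict for messages already reviewed.
    """
    fp = fingerprint(report["sender"], report["subject"])
    if fp in simulation_fingerprints:
        return "close_simulation", "Thanks! This was a simulation, and you caught it."
    if fp in verdicts:
        return "close_known", f"Thanks! Our team already reviewed this message: {verdicts[fp]}."
    return "escalate", "Thanks! An analyst will review this message and follow up."
```

Every branch sends a reply, so each reporter hears back even when no analyst touches the message. That acknowledgment is what kept our community engaged.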

Structure of Complex Choices

When choices are numerous or complex, we look to simplify. To help our employees, we've authored a few rhyming rules of thumb to streamline phishing identification:

· Be wary of urgent and scary.

· Sender unknown, leave it alone.

· Stop and think about attachments and links.

· When in doubt, report and wait it out.

· Don't dig in your trash – if it's in your spam filter, there's a reason.

FIGURE 3.5 Overall Phishing Responses Over Time

Source: Cofense Phishing Detection and Response Platform. Used with permission.

In summary, you can see the cumulative impact of investments in our phishing influence plan over time reflected in our Cofense Phishing Detection and Response Platform (Figure 3.5).23 Using the NUDGES model and the Six Sources of Influence framework, our organization's ability to identify and respond to phishing has markedly improved. Indeed, benchmarks indicate our employees exhibit world-class phishing awareness. You can see inflection points in our metrics as we improved our influence strategy over time. Movement in the data matches the timing of when we implemented the many tactics discussed above.

Key Insights

· Decision-making is a two-step process that starts with learning and ends with a decision.

· Use the Scientific Method to enhance learning: begin with a problem statement, document a hypothesis, establish a learning plan, clarify your assumptions, and finally state your conclusion and provide a concise recommendation.

· Decision process is more important than complete analysis: have a defined process such as WRAP to ensure you do not fall victim to common cognitive errors that afflict us as humans.

· As a leader you can also refer to the WRAP framework to guide others in making complex decisions.

· Vary your sources of influence: the Six Sources of Influence model offers a structured view into incentives and reveals other essential points of leverage in building influence strategies.

· As a choice architect, you can use the NUDGES framework to encourage a security-friendly organizational culture. By architecting choices, you can help your company make incrementally smarter decisions that build up over time.

Notes

1. Robison, J., “Happiness Is Love – and $75,000,” Gallup, 2011. Accessed November 14, 2020. https://news.gallup.com/businessjournal/150671/happiness-is-love-and-75k.aspx.

2. Lewis, J., “The CISO as a Choice Architect: A Conversation with Malcolm Harkins,” Rain Capital, August 13, 2020. Accessed September 3, 2020. https://www.raincapital.vc/blog/2020/8/13/the-ciso-as-a-choice-architect-a-conversation-with-malcolm-harkins.

3. Dalio, R., Principles, Simon & Schuster, 2017.

4. Science Buddies, “Steps of the Scientific Method.” Accessed October 8, 2020. https://www.sciencebuddies.org/science-fair-projects/science-fair/steps-of-the-scientific-method.

5. OpenStax, “The Process of Science,” 2021. http://cnx.org/contents/443e8445-8894-4d58-a72d-43a5cc541fc4@13.

6. Heath, C., and Heath, D., Decisive, Crown Publishing Group, a division of Penguin Random House LLC, 2013.

7. Heath, C., and Heath, D., Decisive.

8. Heath, C., and Heath, D., Decisive.

9. Heath, C., and Heath, D., Decisive.

10. Dalio, R., Principles.

11. Venables, P., “The Most Important Mental Models for CISOs – Simple Steps for Outsize Effects,” Risk & Cybersecurity – Thoughts from the Field, September 27, 2020. Accessed November 14, 2020. https://www.philvenables.com/post/the-most-important-mental-models-for-cisos-simple-steps-for-outsize-effects.

12. Heath, C., and Heath, D., Decisive.

13. Kickstarter, “Our Rules – Kickstarter.” Accessed November 14, 2020. https://www.kickstarter.com/rules?ref=learn_faq.

14. Thaler, R.H., and Sunstein, C.R., Nudge: Improving Decisions About Health, Wealth, and Happiness, Yale University Press, 2008.

15. Grenny, J., Maxfield, D., and Shimberg, A., How to 10X Your Influence, VitalSmarts, 2013.

16. Thaler, R.H., and Sunstein, C.R., Nudge: Improving Decisions About Health, Wealth, and Happiness.

17. Grenny, J., Patterson, K., Maxfield, D., McMillan, R., and Switzler, A., Influencer: The New Science of Leading Change, McGraw-Hill Education, 2013.

18. Xu, L., “NopSec Unified VRM,” 2020.

19. Thaler, R.H., and Sunstein, C.R., Nudge: Improving Decisions About Health, Wealth, and Happiness.

20. Thaler, R.H., and Sunstein, C.R., Nudge: Improving Decisions About Health, Wealth, and Happiness.

21. Synopsys Editorial Team, “Top Open Source Licenses and Legal Risk,” Synopsys, October 21, 2019. Accessed November 14, 2020. https://www.synopsys.com/blogs/software-security/top-open-source-licenses/.

22. Verizon, Data Breach Investigations Report, 2020. https://enterprise.verizon.com/resources/reports/2020-data-breach-investigations-report.pdf.

23. Cofense Phishing Detection and Response Platform, 2020.