Chemistry as an earnest and respectable science is often said to date from 1661, when Robert Boyle of Oxford published The Sceptical Chymist—the first work to distinguish between chemists and alchemists—but it was a slow and often erratic transition. Into the eighteenth century scholars could feel oddly comfortable in both camps—like the German Johann Becher, who produced a sober and unexceptionable work on mineralogy called Physica Subterranea, but who also was certain that, given the right materials, he could make himself invisible.

Perhaps nothing better typifies the strange and often accidental nature of chemical science in its early days than a discovery made by a German named Hennig Brand in 1675. Brand became convinced that gold could somehow be distilled from human urine. (The similarity of colour seems to have been a factor in his conclusion.) He assembled fifty buckets of human urine, which he kept for months in his cellar. By various recondite processes, he converted the urine first into a noxious paste and then into a translucent waxy substance. None of it yielded gold, of course, but a strange and interesting thing did happen. After a time, the substance began to glow. Moreover, when exposed to air, it often spontaneously burst into flame.

The commercial potential for the stuff—which soon became known as phosphorus, from Greek and Latin roots meaning “light-bearing”—was not lost on eager business people, but the difficulties of manufacture made it too costly to exploit. An ounce of phosphorus retailed for 6 guineas—perhaps £300 in today’s money—or more than gold.

At first, soldiers were called on to provide the raw material, but such an arrangement was hardly conducive to industrial-scale production. In the 1750s a Swedish chemist named Karl (or Carl) Scheele devised a way to manufacture phosphorus in bulk without the slop or smell of urine. It was largely because of this mastery of phosphorus that Sweden became, and remains, a leading producer of matches.

Scheele was both an extraordinary and an extraordinarily luckless fellow. A humble pharmacist with little in the way of advanced apparatus, he discovered eight elements—chlorine, fluorine, manganese, barium, molybdenum, tungsten, nitrogen and oxygen—and got credit for none of them. In every case, his finds either were overlooked or made it into publication after someone else had made the same discovery independently. He also discovered many useful compounds, among them ammonia, glycerin and tannic acid, and was the first to see the commercial potential of chlorine as a bleach—all breakthroughs that made other people extremely wealthy.

Scheele’s one notable shortcoming was a curious insistence on tasting a little of everything he worked with, including such disagreeable substances as mercury and hydrocyanic acid—a compound so famously poisonous that 150 years later Erwin Schrödinger chose it as his toxin of choice in a famous thought experiment. Scheele’s rashness eventually caught up with him. In 1786, aged just forty-three, he was found dead at his workbench surrounded by an array of toxic chemicals, any one of which could have accounted for the stunned and terminal look on his face.

The seventeenth-century German alchemist Hennig Brand, who was convinced it was possible to distil gold from human urine; in the attempt to do so he discovered phosphorus. (credit 7.2)

Were the world just and Swedish-speaking, Scheele would have enjoyed universal acclaim. As it is, the plaudits have tended to go to more celebrated chemists, mostly from the English-speaking world. Scheele discovered oxygen in 1772, but for various heartbreakingly complicated reasons could not get his paper published in a timely manner. Credit went instead to Joseph Priestley, who discovered the same element independently but latterly, in the summer of 1774. Even more remarkable was Scheele’s failure to receive credit for the discovery of chlorine. Nearly all textbooks still attribute chlorine’s discovery to Humphry Davy, who did indeed find it, but thirty-six years after Scheele.

Although chemistry had come a long way in the century that separated Newton and Boyle from Scheele and Priestley and Henry Cavendish, it still had a long way to go. Right up to the closing years of the eighteenth century (and in Priestley’s case a little beyond) scientists everywhere searched for, and sometimes believed they had actually found, things that just weren’t there: vitiated airs, dephlogisticated marine acids, phloxes, calxes, terraqueous exhalations and, above all, phlogiston, the substance that was thought to be the active agent in combustion. Somewhere in all this, it was thought, there also resided a mysterious élan vital, the force that brought inanimate objects to life. No-one knew where this ethereal essence lay, but two things seemed probable: that you could enliven it with a jolt of electricity (a notion Mary Shelley exploited to full effect in her novel Frankenstein); and that it existed in some substances but not others, which is why we ended up with two branches of chemistry: organic (for those substances that were thought to have it) and inorganic (for those that did not).

Someone of insight was needed to thrust chemistry into the modern age, and it was the French who provided him. His name was Antoine-Laurent Lavoisier. Born in 1743, Lavoisier was a member of the minor nobility (his father had purchased a title for the family). In 1768 he bought a practising share in a deeply despised institution called the Ferme Générale (or General Farm), which collected taxes and fees on behalf of the government. Although Lavoisier himself was by all accounts mild and fair-minded, the company he worked for was neither. For one thing, it did not tax the rich but only the poor, and then often arbitrarily. For Lavoisier, the appeal of the institution was that it provided him with the wealth to follow his principal devotion, science. At his peak, his personal earnings reached 150,000 livres a year—perhaps £12 million in today’s money.

Three years after embarking on this lucrative career path, he married the fourteen-year-old daughter of one of his bosses. The marriage was a meeting of hearts and minds. Mme Lavoisier had an incisive intellect and soon was working productively alongside her husband. Despite the demands of his job and busy social life, they managed on most days to put in five hours of science—two in the early morning and three in the evening—as well as the whole of Sunday, which they called their jour de bonheur (day of happiness). Somehow Lavoisier also found the time to be commissioner of gunpowder, supervise the building of a wall around Paris to deter smugglers, help found the metric system and co-author the handbook Méthode de Nomenclature Chimique, which became the bible for agreeing the names of the elements.

As a leading member of the Académie Royale des Sciences, he was also required to take an informed and active interest in whatever was topical—hypnotism, prison reform, the respiration of insects, the water supply of Paris. It was in such a capacity in 1780 that Lavoisier made some dismissive remarks about a new theory of combustion that had been submitted to the academy by a hopeful young scientist. The theory was indeed wrong, but the scientist never forgave him. His name was Jean-Paul Marat.

The one thing Lavoisier never did was discover an element. At a time when it seemed as if almost anybody with a beaker, a flame and some interesting powders could discover something new—and when, not incidentally, some two-thirds of the elements were yet to be found—Lavoisier failed to uncover a single one. It certainly wasn’t for want of beakers. Lavoisier had thirteen thousand of them in what was, to an almost preposterous degree, the finest private laboratory in existence.

Instead, he took the discoveries of others and made sense of them. He threw out phlogiston and mephitic airs. He identified oxygen and hydrogen for what they were and gave them both their modern names. In short, he helped to bring rigour, clarity and method to chemistry.

Antoine-Laurent Lavoisier and his wife, who, despite a demanding career and social life, devoted five hours a day to science—in the course of which Lavoisier laid the foundations for modern chemistry. Lavoisier was executed during the French Reign of Terror in 1794. (credit 7.3)

And his fancy equipment did in fact come in very handy. For years, he and Mme Lavoisier occupied themselves with extremely exacting studies requiring the finest measurements. They determined, for instance, that a rusting object doesn’t lose weight, as everyone had long assumed, but gains weight—an extraordinary discovery. Somehow, as it rusted the object was attracting elemental particles from the air. It was the first realization that matter can be transformed but not eliminated. If you burned this book now, its matter would be changed to ash and smoke, but the net amount of stuff in the universe would be the same. This became known as the conservation of mass, and it was a revolutionary concept. Unfortunately, it coincided with another type of revolution—the French one—and in this one Lavoisier was entirely on the wrong side.

Not only was he a member of the hated Ferme Générale, but he had enthusiastically built the wall that enclosed Paris—an edifice so loathed that it was the first thing attacked by the rebellious citizens. Capitalizing on this, in 1791 Marat, now a leading voice in the National Assembly, denounced Lavoisier and suggested that it was well past time for his hanging. Soon afterwards the Ferme Générale was shut down. Not long after this Marat was murdered in his bath by an aggrieved young woman named Charlotte Corday, but by this time it was too late for Lavoisier.

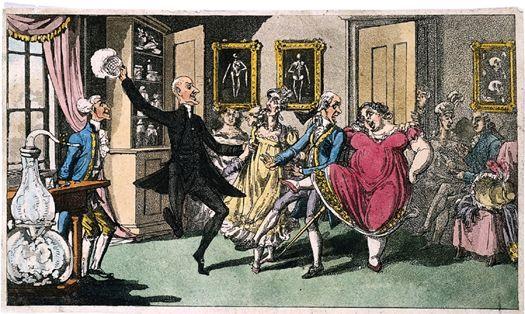

Another Rowlandson drawing, showing the amusing effects of laughing gas on a party of willing participants. Half a century would pass before the gas found a practical use and was transformed into an effective anaesthetic. (credit 7.4)

In 1793 the Reign of Terror, already intense, ratcheted up to a higher gear. In October Marie Antoinette was sent to the guillotine. The following month, as he and his wife were making tardy plans to slip away to Scotland, Lavoisier was arrested. In May he and thirty-one fellow farmers-general were brought before the Revolutionary Tribunal (in a courtroom presided over by a bust of Marat). Eight were granted acquittals, but Lavoisier and the others were taken directly to the Place de la Révolution (now the Place de la Concorde), site of the busiest of French guillotines. Lavoisier watched his father-in-law beheaded, then stepped up and accepted his fate. Less than three months later, on 27 July, Robespierre himself was dispatched in the same way and in the same place and the Reign of Terror swiftly ended.

A hundred years after his death, a statue of Lavoisier was erected in Paris and much admired until someone pointed out that it looked nothing like him. Under questioning, the sculptor admitted that he had used the head of the mathematician and philosopher the Marquis de Condorcet—apparently he had a spare—in the hope that no-one would notice or, having noticed, would care. In the second regard he was correct. The statue of Lavoisier-cum-Condorcet was allowed to remain in place for another half-century until the Second World War, when, one morning, it was taken away and melted down for scrap.

In the early 1800s there arose in England a fashion for inhaling nitrous oxide, or laughing gas, after it was discovered that its use “was attended by a highly pleasurable thrilling.” For the next half-century it would be the drug of choice for young people. One learned body, the Askesian Society, was for a time devoted to little else. Theatres put on “laughing gas evenings” where volunteers could refresh themselves with a robust inhalation and then entertain the audience with their comical staggerings.

It wasn’t until 1846 that anyone got around to finding a practical use for nitrous oxide, as an anaesthetic. Goodness knows how many tens of thousands of people suffered unnecessary agonies under the surgeon’s knife because no-one had thought of the gas’s most obvious practical application.

I mention this to make the point that chemistry, having come so far in the eighteenth century, rather lost its bearings in the first decades of the nineteenth, in much the way that geology would in the early years of the twentieth. Partly it was to do with the limitations of equipment—there were, for instance, no centrifuges until the second half of the century, severely restricting many kinds of experiments—and partly it was social. Chemistry was, generally speaking, a science for business people, for those who worked with coal and potash and dyes, and not for gentlemen, who tended to be drawn to geology, natural history and physics. (This was slightly less true in continental Europe than in Britain, but only slightly.) It is perhaps telling that one of the most important observations of the century, Brownian motion, which established the active nature of molecules, was made not by a chemist but by a Scottish botanist, Robert Brown. (What Brown noticed, in 1827, was that tiny grains of pollen suspended in water remained indefinitely in motion no matter how long he gave them to settle. The cause of this perpetual motion—namely, the actions of invisible molecules—was long a mystery.)

Things might have been worse had it not been for a splendidly improbable character named Count von Rumford, who, despite the grandeur of his title, began life in Woburn, Massachusetts, in 1753 as plain Benjamin Thompson. Thompson was dashing and ambitious, “handsome in feature and figure,” occasionally courageous and exceedingly bright, but untroubled by anything so inconveniencing as a scruple. At nineteen he married a rich widow fourteen years his senior, but at the outbreak of revolution in the colonies he unwisely sided with the loyalists, for a time spying on their behalf. In the fateful year of 1776, facing arrest “for lukewarmness in the cause of liberty,” he abandoned his wife and child and fled just ahead of a mob of anti-royalists armed with buckets of hot tar, bags of feathers and an earnest desire to adorn him with both.

He decamped first to England and then to Germany, where he served as a military adviser to the government of Bavaria, so impressing the authorities that in 1791 he was named Count von Rumford of the Holy Roman Empire. While in Munich, he also designed and laid out the famous park known as the English Garden.

In between these undertakings, he somehow found time to conduct a good deal of solid science. He became the world’s foremost authority on thermodynamics and the first to elucidate the principles of the convection of fluids and the circulation of ocean currents. He also invented several useful objects, including a drip coffee-maker, thermal underwear, and a type of range still known as the Rumford fireplace. In 1805, during a sojourn in France, he wooed and married Mme Lavoisier, widow of Antoine-Laurent. The marriage was not a success and they soon parted. Rumford stayed on in France where he died, universally esteemed by all but his former wives, in 1814.

American-British physicist Count von Rumford, who narrowly escaped the wrath of anti-royalist mobs in Massachusetts in 1776, and went on to found London’s Royal Institution in 1799. (credit 7.5)

Our purpose in mentioning him here is that in 1799, during a comparatively brief interlude in London, he founded the Royal Institution, yet another of the many learned societies that popped into being all over Britain in the late eighteenth and early nineteenth centuries. For a time it was almost the only institution of standing to actively promote the young science of chemistry, and that was thanks almost entirely to a brilliant young man named Humphry Davy, who was appointed the institution’s professor of chemistry shortly after its inception and rapidly gained fame as an outstanding lecturer and productive experimentalist.

Soon after taking up his position, Davy began to bang out new elements one after another—potassium, sodium, magnesium, calcium, strontium, and aluminum or aluminium (depending on which branch of English you favour).¹ He discovered so many elements not so much because he was serially astute as because he developed an ingenious technique of applying electricity to a molten substance—electrolysis, as it is known. Altogether he discovered a dozen elements, a fifth of the known total of his day. Davy might have done far more, but unfortunately as a young man he developed an abiding attachment to the buoyant pleasures of nitrous oxide. He grew so attached to the gas that he drew on it (literally) three or four times a day. Eventually, in 1829, it is thought to have killed him.

Fortunately, more sober types were at work elsewhere. In 1808, a dour Quaker named John Dalton became the first person to intimate the nature of an atom (progress that will be discussed more completely a little further on) and in 1811 an Italian with the splendidly operatic name of Lorenzo Romano Amadeo Carlo Avogadro, Count of Quarequa and Ceretto, made a discovery that would prove highly significant in the long term—namely, that two equal volumes of gases of any type, if kept at the same pressure and temperature, will contain identical numbers of molecules.

Two things were notable about the appealingly simple Avogadro’s Principle, as it became known. First, it provided a basis for more accurately measuring the size and weight of atoms. Using Avogadro’s mathematics, chemists were eventually able to work out, for instance, that a typical atom had a diameter of 0.00000008 centimetres, which is very little indeed. And second, almost no-one knew about it for almost fifty years.²
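Avogadro’s Principle can be illustrated with a short calculation. The sketch below leans on the modern ideal gas law, which is an assumption brought in for the example rather than anything Avogadro himself wrote down; the function name `molecule_count` is likewise invented here. The point to notice is that the kind of gas never enters the calculation: only pressure, volume and temperature do.

```python
# Modern constants (exact SI values); not figures from Avogadro's time.
GAS_CONSTANT = 8.314462618      # joules per mole per kelvin
AVOGADRO_NUMBER = 6.02214076e23  # molecules per mole


def molecule_count(pressure_pa: float, volume_m3: float, temperature_k: float) -> float:
    """Estimate the number of molecules in an ideal gas sample.

    Uses n = PV / (RT) for the amount in moles, then multiplies by
    Avogadro's number. The identity of the gas is not a parameter.
    """
    if temperature_k <= 0:
        raise ValueError("temperature must be above absolute zero")
    moles = pressure_pa * volume_m3 / (GAS_CONSTANT * temperature_k)
    return moles * AVOGADRO_NUMBER


# One litre of any gas at roughly room conditions (illustrative values).
litre_of_hydrogen = molecule_count(101_325.0, 0.001, 293.15)
litre_of_oxygen = molecule_count(101_325.0, 0.001, 293.15)
print(litre_of_hydrogen == litre_of_oxygen)  # same inputs, same count
```

Real gases depart slightly from this idealized behaviour, so the equality is an approximation that holds best at modest pressures.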

Italian physicist Lorenzo Romano Amadeo Carlo Avogadro, Count of Quarequa and Ceretto, whose hugely important theory of molecules was thankfully more simply entitled Avogadro’s Principle. (credit 7.6)

Partly this was because Avogadro himself was a retiring fellow—he worked alone, corresponded very little with fellow scientists, published few papers and attended no meetings—but also it was because there were no meetings to attend and few chemical journals in which to publish. This is a fairly extraordinary fact. The Industrial Revolution was driven in large part by developments in chemistry and yet as an organized science chemistry barely existed for decades.

The Chemical Society of London was not founded until 1841 and didn’t begin to produce a regular journal until 1848, by which time most learned societies in Britain—Geological, Geographical, Zoological, Horticultural and Linnaean (for naturalists and botanists)—were at least twenty years old and in several cases much more. The rival Institute of Chemistry didn’t come into being until 1877, a year after the founding of the American Chemical Society. Because chemistry was so slow to get organized, news of Avogadro’s important breakthrough of 1811 didn’t begin to become general until the first international chemistry congress, in Karlsruhe, in 1860.

Because chemists worked for so long in isolation, conventions were slow to emerge. Until well into the second half of the century, the formula H₂O₂ might mean water to one chemist but hydrogen peroxide to another. C₂H₄ could signify ethylene or marsh gas. There was hardly a molecule that was uniformly represented everywhere.

Chemists also used a bewildering variety of symbols and abbreviations, often self-invented. Sweden’s J. J. Berzelius brought a much-needed measure of order to matters by decreeing that the elements be abbreviated on the basis of their Greek or Latin names, which is why the abbreviation for iron is Fe (from the Latin ferrum) and for silver is Ag (from the Latin argentum). That so many of the other abbreviations accord with their English names (N for nitrogen, O for oxygen, H for hydrogen and so on) reflects English’s latinate nature, not its exalted status. To indicate the number of atoms in a molecule, Berzelius employed a superscript notation, as in H²O. Later, for no special reason, the fashion became to render the number as subscript: H₂O.

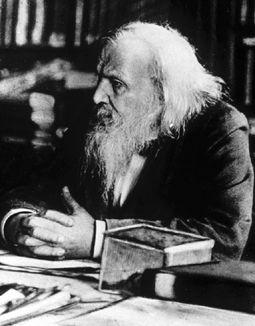

Despite the occasional tidyings-up, chemistry by the second half of the nineteenth century was in something of a mess, which is why everybody was so pleased by the rise to prominence in 1869 of an odd and crazed-looking professor at the University of St. Petersburg named Dmitri Ivanovich Mendeleyev.

Mendeleyev (also sometimes spelled Mendeleev or Mendeléef) was born in 1834 at Tobolsk, in the far west of Siberia, into a well-educated, reasonably prosperous and very large family—so large, in fact, that history has lost track of exactly how many Mendeleyevs there were: some sources say there were fourteen children, some say seventeen. All agree, at any rate, that Dmitri was the youngest. Luck was not always with the Mendeleyevs. When Dmitri was small his father, the headmaster of a local school, went blind and his mother had to go out to work. Clearly an extraordinary woman, she eventually became the manager of a successful glass factory. All went well until 1848, when the factory burned down and the family was reduced to penury. Determined to get her youngest child an education, the indomitable Mrs. Mendeleyev hitchhiked with young Dmitri four thousand miles to St. Petersburg—that’s equivalent to travelling from London to Equatorial Guinea—and deposited him at the Institute of Pedagogy. Worn out by her efforts, she died soon after.

Dmitri Mendeleyev, creator of the Periodic Table, caught in a moment of rare tranquillity during his stormy final years. (credit 7.7)

Mendeleyev dutifully completed his studies and eventually landed a position at the local university. There he was a competent but not terribly outstanding chemist, known more for his wild hair and beard, which he had trimmed just once a year, than for his gifts in the laboratory.

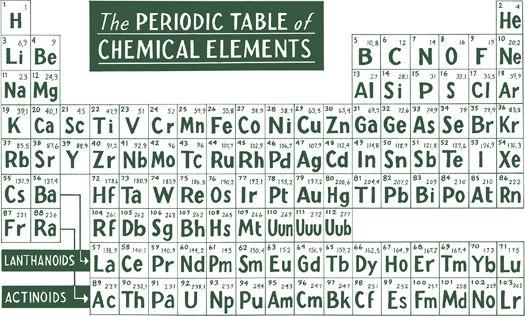

However, in 1869, at the age of thirty-five, he began to toy with a way to arrange the elements. At the time, elements were normally grouped in two ways—either by atomic weight (using Avogadro’s Principle) or by common properties (whether they were metals or gases, for instance). Mendeleyev’s breakthrough was to see that the two could be combined in a single table.

Mendeleyev’s Periodic Table, still as useful today as when he invented it in 1869. Mendeleyev was said to have modelled the table on the card game solitaire. (credit 7.8)

As is often the way in science, the principle had actually been anticipated three years previously by an amateur chemist in England named John Newlands. He suggested that when elements were arranged by weight they appeared to repeat certain properties—in a sense to harmonize—at every eighth place along the scale. Slightly unwisely, for this was an idea whose time had not quite yet come, Newlands called it the Law of Octaves and likened the arrangement to the octaves on a piano keyboard. Perhaps there was something in Newlands’ manner of presentation, but the idea was considered fundamentally preposterous and widely mocked. At gatherings, droller members of the audience would sometimes ask him if he could get his elements to play them a little tune. Discouraged, Newlands gave up pushing the idea and soon dropped out of sight altogether.

Mendeleyev used a slightly different approach, placing his elements into groups of seven, but employed fundamentally the same premise. Suddenly the idea seemed brilliant and wondrously perceptive. Because the properties repeated themselves periodically, the invention became known as the Periodic Table.

Mendeleyev was said to have been inspired by the card game known as solitaire in North America and patience elsewhere, wherein cards are arranged by suit horizontally and by number vertically. Using a broadly similar concept, he arranged the elements in horizontal rows called periods and vertical columns called groups. This instantly showed one set of relationships when read up and down and another when read from side to side. Specifically, the vertical columns put together chemicals that have similar properties. Thus copper sits on top of silver and silver sits on top of gold because of their chemical affinities as metals, while helium, neon and argon are in a column made up of gases. (The actual, formal determinant in the ordering is something called their electron valences, and if you want to understand them you will have to enrol in evening classes.) The horizontal rows, meanwhile, arrange the chemicals in ascending order by the number of protons in their nuclei—what is known as their atomic number.

The structure of atoms and the significance of protons will come in a following chapter; for the moment, all that is necessary is to appreciate the organizing principle: hydrogen has just one proton and so it has an atomic number of 1 and comes first on the chart; uranium has 92 protons and so it comes near the end and has an atomic number of 92. In this sense, as Philip Ball has pointed out, chemistry really is just a matter of counting. (Atomic number, incidentally, is not to be confused with atomic weight, which is the number of protons plus the number of neutrons in a given element.)
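The counting can be made concrete with a small sketch. The handful of elements and the neutron counts below are illustrative picks (one common isotope each), and the names `ELEMENTS`, `atomic_number` and `mass_number` are invented for the example. Strictly speaking, the protons-plus-neutrons figure for a single isotope is usually called its mass number; the sketch follows the simplified definition given above.

```python
# (protons, neutrons) for one common isotope of each element.
# These are illustrative choices, not a complete table.
ELEMENTS = {
    "uranium": (92, 146),
    "gold": (79, 118),
    "hydrogen": (1, 0),
    "carbon": (6, 6),
    "helium": (2, 2),
}


def atomic_number(name: str) -> int:
    """Atomic number is simply the proton count."""
    protons, _neutrons = ELEMENTS[name]
    return protons


def mass_number(name: str) -> int:
    """Protons plus neutrons for the isotope listed above."""
    protons, neutrons = ELEMENTS[name]
    return protons + neutrons


# Ordering by proton count reproduces the sequence of the chart.
ordered = sorted(ELEMENTS, key=atomic_number)
for name in ordered:
    print(name, atomic_number(name), mass_number(name))
```

Sorting by proton count puts hydrogen first and uranium last among these five, whatever order they were entered in.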

The Periodic Table of Chemical Elements, re-imagined as a landscape, with the peaks and slopes representing properties of the elements, such as atomic weights and numbers. This work is described as “a view from the northeast across the transition metals towards the peaks of Osmium and Iridium.” (credit 7.8a)

There was still a great deal that wasn’t known or understood. Hydrogen is the most common element in the universe, and yet no-one would guess as much for another thirty years. Helium, the second most abundant element, had only been found the year before—its existence hadn’t even been suspected before that—and then not on the Earth, but in the Sun, where it was found with a spectroscope during a solar eclipse, which is why it honours the Greek sun god Helios. It wouldn’t be isolated until 1895. Even so, thanks to Mendeleyev’s invention, chemistry was now on a firm footing.

For most of us, the Periodic Table is a thing of beauty in the abstract, but for chemists it established an immediate orderliness and clarity that can hardly be overstated. “Without a doubt, the Periodic Table of the Chemical Elements is the most elegant organizational chart ever devised,” wrote Robert E. Krebs in The History and Use of Our Earth’s Chemical Elements—and you can find similar sentiments in virtually every history of chemistry in print.

Today we have “120 or so” known elements—92 naturally occurring ones plus a couple of dozen that have been created in labs. The actual number is slightly contentious because the heavy, synthesized elements exist for only millionths of seconds and chemists sometimes argue over whether they have really been detected or not. In Mendeleyev’s day just sixty-three elements were known, but part of his cleverness was to realize that the elements as then known didn’t make a complete picture, that many pieces were missing. His table predicted, with pleasing accuracy, where new elements would slot in when they were found.

No-one knows, incidentally, how high the number of elements might go, though anything beyond 168 as an atomic weight is considered “purely speculative”; but what is certain is that anything that is found will fit neatly into Mendeleyev’s great scheme.

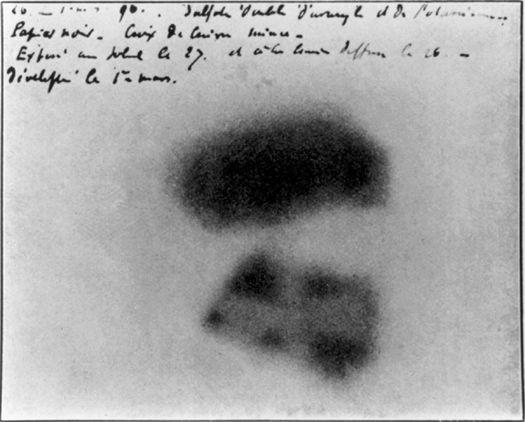

The nineteenth century held one last important surprise for chemists. It began in 1896 when Henri Becquerel in Paris carelessly left a packet of uranium salts on a wrapped photographic plate in a drawer. When he took the plate out some time later, he was surprised to discover that the salts had burned an impression in it, just as if the plate had been exposed to light. The salts were emitting rays of some sort.

Considering the importance of what he had found, Becquerel did a very strange thing: he turned the matter over to a graduate student for investigation. Fortunately the student was a recent émigré from Poland named Marie Curie. Working with her new husband, Pierre, Curie found that certain kinds of rocks poured out constant and extraordinary amounts of energy, yet without diminishing in size or changing in any detectable way. What she and her husband couldn’t know—what no-one could know until Einstein explained things the following decade—was that the rocks were converting mass into energy in an exceedingly efficient way. Marie Curie dubbed the effect “radioactivity.” In the process of their work, the Curies also found two new elements—polonium, which they named after her native country, and radium. In 1903 the Curies and Becquerel were jointly awarded the Nobel Prize in physics. (Marie Curie would win a second prize, in chemistry, in 1911, becoming the only person to win in both chemistry and physics.)

At McGill University in Montreal the young New Zealand-born Ernest Rutherford became interested in the new radioactive materials. With a colleague named Frederick Soddy he discovered that immense reserves of energy were bound up in these small amounts of matter, and that the radioactive decay of these reserves could account for most of the Earth’s warmth. They also discovered that radioactive elements decayed into other elements—that one day you had an atom of uranium, say, and the next you had an atom of lead. This was truly extraordinary. It was alchemy pure and simple; no-one had ever imagined that such a thing could happen naturally and spontaneously.

The smudges left accidentally on a photographic plate in Henri Becquerel’s desk drawer that led to the discovery of radioactivity. (credit 7.9)

Ever the pragmatist, Rutherford was the first to see that there could be a valuable practical application in this. He noticed that in any sample of radioactive material, it always took the same amount of time for half the sample to decay—the celebrated half-life³—and that this steady, reliable rate of decay could be used as a kind of clock. By calculating backwards from how much radiation a material had now and how swiftly it was decaying, you could work out its age. He tested a piece of pitchblende, the principal ore of uranium, and found it to be 700 million years old—very much older than the age most people were prepared to grant the Earth.
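The idea of decay as a clock can be sketched with the standard exponential-decay relation. This is a minimal illustration under stated assumptions, not a reconstruction of Rutherford’s own measurement: the function names are invented for the example, and the half-life used at the end is a made-up round number rather than a value for any real material.

```python
import math


def remaining_fraction(elapsed_years: float, half_life_years: float) -> float:
    """Fraction of the original sample left after `elapsed_years`.

    Every half-life that passes halves what is left:
    N(t) / N0 = (1/2) ** (t / half_life)
    """
    return 0.5 ** (elapsed_years / half_life_years)


def age_from_fraction(fraction_left: float, half_life_years: float) -> float:
    """Run the clock backwards: the age implied by the fraction remaining.

    Solving the relation above for t gives
    t = half_life * log2(N0 / N).
    """
    if not 0 < fraction_left <= 1:
        raise ValueError("fraction_left must be greater than 0 and at most 1")
    return half_life_years * math.log2(1 / fraction_left)


# Hypothetical material with a half-life of 1,000 years.
HALF_LIFE = 1_000.0
print(age_from_fraction(0.5, HALF_LIFE))   # one half-life has passed
print(age_from_fraction(0.25, HALF_LIFE))  # two half-lives have passed
```

A sample with half of its original material left is one half-life old, and one with a quarter left is two half-lives old; the two functions are inverses of each other, so feeding the output of one into the other returns the starting value.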

In the spring of 1904, Rutherford travelled to London to give a lecture at the Royal Institution—the august organization founded by Count von Rumford only 105 years before, though that powdery and periwigged age now seemed a distant aeon compared with the roll-your-sleeves-up robustness of the late Victorians. Rutherford was there to talk about his new disintegration theory of radioactivity, as part of which he brought out his piece of pitchblende. Tactfully—for the ageing Kelvin was present, if not always fully awake—Rutherford noted that Kelvin himself had suggested that the discovery of some other source of heat would throw his calculations out. Rutherford had found that other source. Thanks to radioactivity the Earth could be—and self-evidently was—much older than the 24 million years Kelvin’s final calculations allowed.

Kelvin beamed at Rutherford’s respectful presentation, but was in fact unmoved. He never accepted the revised figures and to his dying day believed his work on the age of the Earth his most astute and important contribution to science—far greater than his work on thermodynamics.

As with most scientific revolutions, Rutherford’s new findings were not universally welcomed. John Joly of Dublin strenuously insisted well into the 1930s that the Earth was no more than 89 million years old, and was stopped only then by his own death. Others began to worry that Rutherford had now given them too much time. But even with radiometric dating, as decay measurements became known, it would be decades before we got within a billion years or so of the Earth’s actual age. Science was on the right track, but still way out.

Pierre and Marie Curie photographed in Paris. The Curies were jointly awarded the Nobel Prize in physics with Henri Becquerel in 1903 for their work on radioactivity. (credit 7.10)

Kelvin died in 1907. That year also saw the death of Dmitri Mendeleyev. As with Kelvin, his productive work was far behind him, but his declining years were notably less serene. As he aged, Mendeleyev became increasingly eccentric—he refused to acknowledge the existence of radiation or the electron or anything else much that was new—and difficult. His final decades were spent mostly storming out of labs and lecture halls all across Europe. In 1955, element 101 was named mendelevium in his honour. “Appropriately,” notes Paul Strathern, “it is an unstable element.”

Radiation, of course, went on and on, literally and in ways nobody expected. In the early 1900s Pierre Curie began to experience clear signs of radiation sickness—notably dull aches in his bones and chronic feelings of malaise—which doubtless would have progressed unpleasantly. We shall never know for certain because in 1906 he was fatally run over by a carriage while crossing a Paris street.

Marie Curie spent the rest of her life working with distinction in the field, helping to found the celebrated Radium Institute of the University of Paris in 1914. Despite her two Nobel Prizes, she was never elected to the Academy of Sciences, in large part because after the death of Pierre she conducted an affair with a married physicist sufficiently indiscreet to scandalize even the French—or at least the old men who ran the academy, which is perhaps another matter.

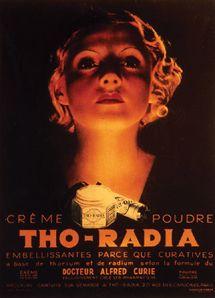

For a long time it was assumed that anything so miraculously energetic as radioactivity must be beneficial. For years, manufacturers of toothpaste and laxatives put radioactive thorium in their products, and at least until the late 1920s the Glen Springs Hotel in the Finger Lakes region of New York (and doubtless others as well) featured with pride the therapeutic effects of its “Radio-active mineral springs.” Radioactivity wasn’t banned in consumer products until 1938. By this time it was much too late for Mme Curie, who died of leukaemia in 1934. Radiation, in fact, is so pernicious and long-lasting that even now her papers from the 1890s—even her cookbooks—are too dangerous to handle. Her lab books are kept in lead-lined boxes and those who wish to see them must don protective clothing.

Never has the promise of glowing skin been more dangerously apt than in the early years of the twentieth century when radium was commonly used as a featured ingredient in beauty products. (credit 7.11)

Thanks to the devoted and unwittingly high-risk work of the first atomic scientists, by the early years of the twentieth century it was becoming clear that the Earth was unquestionably venerable, though another half-century of science would have to be done before anyone could confidently say quite how venerable. Science, meanwhile, was about to get a new age of its own—the atomic one.

Marie Curie, towards the end of her life, with Albert Einstein: their brilliant discoveries started scientists on the road that led to the atomic bomb. (credit 7.12)

1 The confusion over the aluminum/aluminium spelling arose because of some uncharacteristic indecisiveness on Davy’s part. When he first isolated the element in 1808, he called it alumium. For some reason he thought better of that and changed it to aluminum four years later. Americans dutifully adopted the new term, but many British users disliked aluminum, pointing out that it disrupted the -ium pattern established by sodium, calcium and strontium, so they added a vowel and syllable. Among his other achievements, Davy also invented the miner’s safety lamp.

2 The principle led to the much later adoption of Avogadro’s Number, a basic unit of measure in chemistry, which was named for Avogadro long after his death. It is the number of molecules found in 2.016 grams of hydrogen gas (or an equal volume of any other gas). Its value is placed at 6.0221367 × 10²³, which is an enormously large number. Chemistry students have long amused themselves by computing just how large a number it is, so I can report that it is equivalent to the number of popcorn kernels needed to cover the United States to a depth of nine miles, or cupfuls of water in the Pacific Ocean, or soft-drink cans that would, evenly stacked, cover the Earth to a depth of two hundred miles. An equivalent number of American pennies would be enough to make every person on Earth a dollar trillionaire. It is a big number.

3 If you have ever wondered how the atoms determine which 50 per cent will die and which 50 per cent will survive for the next session, the answer is that the half-life is really just a statistical convenience—a kind of actuarial table for elemental things. Imagine you had a sample of material with a half-life of 30 seconds. It isn’t that every atom in the sample will exist for exactly 30 seconds or 60 seconds or 90 seconds or some other tidily ordained period. Each atom will in fact survive for an entirely random length of time that has nothing to do with multiples of 30; it might last until two seconds from now or it might oscillate away for years or decades or centuries to come. No-one can say. But what we can say is that for the sample as a whole the rate of disappearance will be such that half the atoms will disappear every 30 seconds. It’s an average rate, in other words, and you can apply it to any large sampling. Someone once worked out, for instance, that American dimes have a half-life of about thirty years.
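The statistical nature of the half-life described in this note is easy to see in a small simulation. The sketch below is a toy model, not anything from the original sources: the function name and the choice of 100,000 atoms are assumptions for illustration, and it assumes each atom's lifetime follows an exponential distribution, which is the standard model for random decay. Each atom is given its own random lifetime, yet the sample as a whole still roughly halves every 30 seconds.

```python
import math
import random


def simulate_decay(n_atoms, half_life_s, total_s, seed=0):
    """Count surviving atoms at each multiple of the half-life.

    Every atom gets its own random lifetime; no atom "knows" about
    the 30-second schedule. Returns a list of (time, survivors) pairs.
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    # Exponential lifetimes with decay rate ln(2) / half-life.
    rate = math.log(2) / half_life_s
    lifetimes = [rng.expovariate(rate) for _ in range(n_atoms)]
    checkpoints = range(0, total_s + 1, half_life_s)
    return [(t, sum(1 for life in lifetimes if life > t)) for t in checkpoints]


# Hypothetical sample: 100,000 atoms with a 30-second half-life, watched for 90 seconds.
for seconds, survivors in simulate_decay(100_000, 30, 90):
    print(seconds, survivors)
```

Individual lifetimes in such a run range from a fraction of a second to many minutes, but the survivor counts at 30, 60 and 90 seconds come out close to a half, a quarter and an eighth of the starting number.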

A technician makes adjustments to a recently installed atom smasher at the University of Notre Dame in Indiana in 1941. The 8 million volts generated by the machine helped scientists experiment with atomic structure as well as with the production of radioactive metals. (credit p3.1)