Chapter 10

The Metaverse is envisioned as a parallel plane for human leisure, labor, and existence more broadly. So it should come as no surprise that the extent to which the Metaverse succeeds will depend, in part, on whether it has a thriving economy. Yet we are not accustomed to thinking in these terms; while science fiction has predicted the Metaverse, one usually finds only glancing references to a virtual world’s internal economy in such stories. A virtual economy might sound like a strange, daunting, even confusing prospect, but it shouldn’t be. With a few important exceptions, the Metaverse economy will follow the patterns of real-world ones. Most experts agree on many of the attributes that produce a thriving real-world economy: rigorous competition, a large number of profitable businesses, trust in its “rules” and “fairness,” consistent consumer rights, consistent consumer spending, and a constant cycle of disruption and displacement, among others.

We can see these attributes at play in the world’s largest economy. The United States was not built by any one government or corporation, but instead by millions of different businesses. Even in today’s era of megacorporations and technology giants, the country’s more than 30 million small-to-medium businesses employ over half the workforce and are responsible for half of GDP (both figures exclude military and defense spending). Amazon’s hundreds of billions in sales are almost exclusively of things other companies make. Apple’s iPhone is one of the most significant products in human history and each year, Apple designs an ever-growing portion of its richly integrated components. However, most of its components still come from competitors—and many of them are constantly warring with Apple over prices, while enabling the company’s competitors. In addition, consumers buy (and frequently upgrade to new versions of) this incredible device in order to access content, apps, and data made in large part by companies other than Apple.

Apple is a prime example of the dynamism of the American economy. Although the company was an early leader in the PC era of the 1970s and 1980s, it struggled throughout the 1990s as Microsoft’s ecosystem grew and internet services proliferated. But through the iPod in 2001, iTunes in 2003, iPhone in 2007, and App Store in 2008, Apple became the most valuable company in the world. It isn’t difficult to imagine another outcome: one in which Microsoft, whose operating system powered 95% of the computers used to manage an iPod or run iTunes, was able to stymie its would-be competitor as a means of buttressing its Windows Mobile and Zune offerings. Alternatively, we could imagine a version of earth in which internet providers such as AOL, AT&T, or Comcast had been able to use their power over data transmission to control what content could flow through their systems, how, and with which royalties.

The American economy is backed by an elaborate legal system which covers everything made or invested in, what’s sold and bought, who is hired and what tasks they perform, and also what’s owed. While this system is imperfect, costly to use, and often slow, its existence instills in all market participants faith that their agreements will be honored and that there is some middle ground between “free market competition” and “fairness” which benefits all parties. The success of Apple, as well as other internet giants that were born during the PC era, such as Google and Facebook, is inextricably linked to the famous court case United States v. Microsoft Corporation, which found the company to have unlawfully monopolized its operating system through the control of APIs, forced bundling of software, restrictive licensing, and other technical restrictions. Another example is the “first sale doctrine,” which allows someone who buys a copy of a copyrighted work from the copyright holder to dispose of that copy however they wish. This is why Blockbuster was able to purchase a $25 VHS tape and then endlessly rent it to its customers without needing to pay royalties to the Hollywood studio that made it, and why you have the right to sell your copy of a book or rip up and restitch a shirt with a copyrighted design.

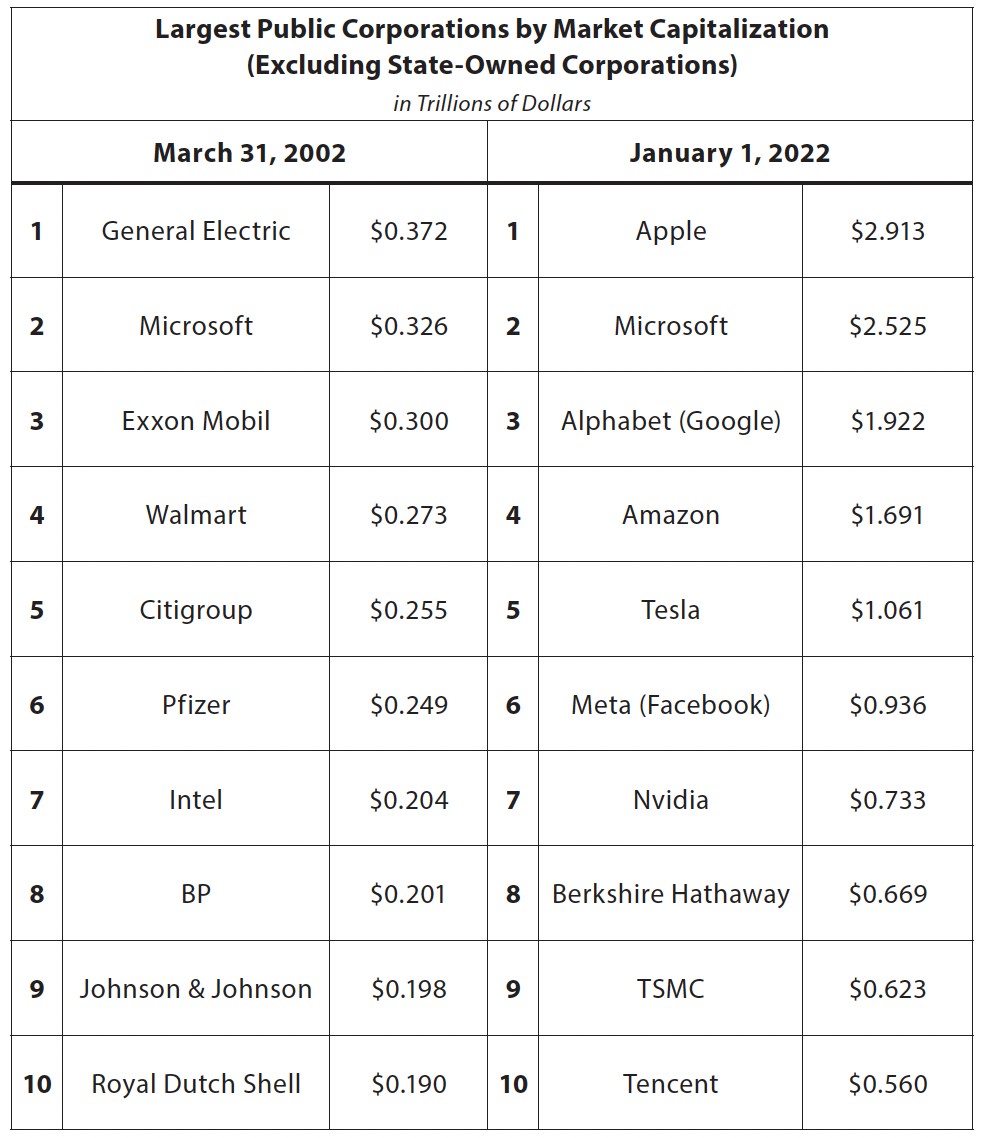

Sources: “Global 500,” Internet Archive Wayback Machine, https://web.archive.org/web/20080828204144/http://specials.ft.com/spdocs/FT3BNS7BW0D.pdf; “Largest Companies by Market Cap,” https://companiesmarketcap.com/.

In this book so far, I’ve examined many of the innovations, conventions, and devices required to achieve a flourishing and fully realized Metaverse. But I have not yet addressed one of the most important: “payment rails.”

Because most payment rails largely predate the digital age, we tend not to think of them as “technology.” In truth, they are the embodiment of digital ecosystems—complex series of systems and standards, deployed across a wide network and in support of trillions of dollars in economic activity, and in a primarily automated fashion. They’re typically difficult to build and even harder to displace, and also quite profitable. Visa, MasterCard, and Alipay are each among the 20 most valuable public companies in the world, with most of their peers the likes of Google, Apple, Facebook, Amazon, and Microsoft, as well as large financial conglomerates such as JPMorgan Chase and Bank of America which hold trillions in deposits and manage the transfer of trillions more in financial instruments each day.

Unsurprisingly, there is already a fight to become the dominant “payment rail” in the Metaverse. What’s more, this fight is arguably the central battleground for the Metaverse, and potentially its greatest impediment, too. To unpack Metaverse payment rails, I’ll first provide an overview of the major payment rails of the modern era, before explaining the role of payments in today’s gaming industry and how that role has informed the payment rails of the mobile computing era. Then, I’ll discuss how mobile payment rails are being used to control emergent technologies and stifle competition, before touching on why so many Metaverse-focused founders, investors, and analysts see blockchains and cryptocurrencies as the first “digitally native” payment rail and the solution to the problems plaguing the current virtual economy.

The Major Payment Rails Today

Over the past century, the number of distinct payment rails has grown as a result of new communication technologies, the increase in the number of transactions made per person per day, and the fact that most purchases are not in physical cash. From 2010 to 2021, cash’s share of US transactions fell from over 40% to roughly 20%.

The most common payment rails in the United States are Fedwire (formerly known as the Federal Reserve Wire Network), CHIPS (Clearing House Interbank Payment System), ACH (Automated Clearing House), credit cards, PayPal, and peer-to-peer payment services like Venmo. These rails have different requirements, merits, and demerits, having to do with the fees that are charged, network size, speed, reliability, and flexibility. We’ll come back to this later on when I discuss blockchains and cryptocurrencies, so it’s important to remember these categories and related details.

Let’s start with the classic payment rail: wires. In the mid-1910s, the US Federal Reserve Banks began to move funds electronically, eventually establishing a proprietary telecommunications system that spanned each of the 12 Reserve Banks, the Federal Reserve Board, and the US Treasury. Early systems were telegraphic and used Morse code, but by the 1970s, Fedwire began to move to telex, then computer operations, followed by proprietary digital networks. Wires can only be used between (and thus through) banks, so both the sender and receiver must have a bank account. For similar reasons, a wire can only be sent on non-holiday weekdays and during business hours. While a sender can set up recurring wires (say, sending $5,000 every Tuesday), there is no such thing as a “wire request.” As such, wires cannot be used to automatically pay recurring bills or other invoices. Once money is sent via wire, the transfer cannot be reversed. Even if this were possible, other limitations discourage frequent use of wires. For example, there are often significant fees charged to both the sender ($25–$45) and recipient ($15), in addition to other fees for non-USD wires, failed wires, confirmations (which are not always possible), and more. The banks themselves are charged between $0.35 and $0.90 per transaction by Fedwire. The size of these fees, which are mostly fixed, makes small dollar-value wires impractical. But for larger sums (individuals can wire up to $100,000), wires are the least expensive option.

In the 1970s, the major US banks also established a competitor to (and customer of) Fedwire, named CHIPS, in part to reduce their transfer costs. In particular, this meant departing from Fedwire’s “real-time settlement,” in which a sender’s wire would be instantly received by and usable to the recipient. In contrast, each bank holds outgoing CHIPS wires until the end of the day, at which point they are grouped together based on the recipient bank, and then netted against all of the incoming CHIPS wires from that same bank. In simple terms, CHIPS means that rather than Bank A sending Bank B millions of wires per day, and Bank B sending Bank A millions of wires per day, they wait until the end of the day and make a single transaction. With this system, neither the sender of a wire nor its recipient has access to the wire’s funds for up to 23 hours, 59 minutes, and 59 seconds. Only the bank does, and the bank collects interest on much of it over the course of the day. Naturally, banks typically default to CHIPS for their wires. Due to time zones, money-laundering protections, and other governmental restrictions, international wires typically take two to three days.
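The end-of-day netting described above can be sketched in a few lines of Python. This is only an illustration of the simplified two-bank picture in the text, not a description of how CHIPS is actually implemented; the function name `net_positions` and the sample amounts are hypothetical.

```python
from collections import defaultdict


def net_positions(wires):
    """Collapse a day's wires into one net transfer per pair of banks.

    `wires` is a list of (sender_bank, recipient_bank, amount) tuples.
    Returns {(payer, payee): net_amount}, omitting pairs that net to zero.
    """
    # Key each pair alphabetically so A->B and B->A land in the same bucket.
    balance = defaultdict(int)
    for sender, recipient, amount in wires:
        first, second = sorted((sender, recipient))
        # A positive balance means `first` owes `second`.
        balance[(first, second)] += amount if sender == first else -amount

    settlements = {}
    for (first, second), net in balance.items():
        if net > 0:
            settlements[(first, second)] = net
        elif net < 0:
            settlements[(second, first)] = -net
    return settlements


# Hypothetical day: three wires between two banks become one settlement.
day = [
    ("Bank A", "Bank B", 500),
    ("Bank A", "Bank B", 300),
    ("Bank B", "Bank A", 650),
]
print(net_positions(day))
```

In this toy day, $1,450 of gross wires settles with a single $150 transfer from Bank A to Bank B, which is the cost saving the banks were after.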

As anyone who has sent a wire knows, they are typically the most complex and time-consuming way to send money, as extensive information is needed from the recipient. The irreversibility of the transaction, combined with the lack of (or delayed) confirmation, also means that mistakes are even more time-consuming to correct. Yet wires are still typically considered the most secure way to send money, as CHIPS is limited to only 47 member banks and involves no intermediary, while Fedwire’s only intermediary is the United States Federal Reserve. In 2021, $992 trillion in value was sent via US Fedwire across 205 million transactions (an average of roughly $5 million), while CHIPS cleared over $700 trillion across an estimated 250 million transactions (an average of $3 million).

An ACH is an electronic network for processing payments. The first ACH emerged in the United Kingdom in the late 1960s. Like wires, ACH payments can only be made during business hours, and they require the sender and the recipient to each have a bank account. These bank accounts must usually be part of the ACH network, and as such ACH payments face geographic limitations, in most cases. A Canadian bank account can typically make an ACH payment to one in the United States, but making an ACH payment to Vietnam, Russia, or Brazil is unlikely to be possible, or at least requires various intermediaries that increase costs. The fees associated with ACH payments are seen as their primary differentiator. Most banks allow customers to make ACH-based transfers for free, or at most, for $5. Businesses can make ACH payments to vendors or employees for less than 1% per transaction. Unlike a wire, an ACH payment is also reversible and allows payment requests from would-be recipients. These capabilities, mixed with its low cost, are why this rail is typically used to make payments to vendors and employees, and set on “auto-pay” for electrical, telephone, insurance, and other bills. An estimated $70 trillion was processed by the US ACH in 2021, spanning more than 20 billion transactions (an average of roughly $2,500 per transaction).1

The major downside of ACH is that it is slow: transactions take anywhere from one to three days. This is because ACH payments don’t “clear” until the end of the day (some banks perform a few batches per day), at which point a bank aggregates everything it must send to another bank (that is, all ACHs) and sends it in one sum via Fedwire, CHIPS, or similar solution. The resulting lag produces several challenges beyond just one to two and a half days during which neither sender nor receiver has the funds. For example, with ACH there’s no confirmation of a successful transaction—you’re only notified if there is an error. And that error takes several days to correct: the recipient bank doesn’t notice the failure until the second day, its report isn’t processed until the end of the second day, and the original sender receives the notice the following day (at which point the three-day process starts again).

Makeshift credit card systems have existed since the late 19th century, though what we now consider a “credit card” did not emerge until the 1950s. Today, we “swipe” or “tap” a physical card (or enter our credit card information into a box online), after which a credit card machine or remote server captures the account information and digitally sends it to the merchant’s bank, which then submits it to the customer’s credit card provider, which either grants or denies the transaction. The process takes one to three days, though the consumer of course doesn’t notice this, and typically costs merchants 1.5% to 3.5% of the transaction. This fee is much higher than that for an ACH payment, but credit cards enable a transaction to be placed in seconds and without exchanging detailed and personal bank account information. The purchaser does not even need to have a bank account.

While credit cards are often free to the user, late payments and interest can quickly result in paying more than 20% per year on top of the relevant transactions (it’s likely you pay your credit card bill via ACH). Credit card operators generate a third of their revenue through other services sold to merchants and credit card owners, such as insurance, or by selling data generated in their network. Like ACH, but unlike wires, credit card payments can be reversed, though this process can take days, is often contested, and is only available for a few hours or days after a transaction (a dispute can be filed far later). Like wires, credit cards work across almost all markets globally. And, unlike both wires and ACH, credit card payments are supported by almost all merchants, and transactions can be placed at any time and every day. As anyone with a credit card knows, they are typically the least secure way to make a payment, and often suffer the most fraud. An estimated $6 trillion was spent via credit card in the US in 2021, with an average of $90 across more than 50 billion transactions.

Finally, there are the digital payment networks (also known as peer-to-peer networks) such as PayPal and Venmo. Although users do not need bank accounts to open a PayPal or Venmo account, these accounts must be funded, with the money coming from an ACH payment (a bank account), credit card payment, or transfer from another user. Once funded, these platforms then serve as a centralized bank used by all accounts; transfers between users are effectively just reassignments of money held by the platform itself. As a result, payments are instantaneous and can be made irrespective of the day or time. When money is sent between friends and family, these platforms typically do not charge a fee. However, payments made to businesses typically involve fees of 2%–4%. And if a user wants to move their on-platform money to their bank account, they must typically pay 1% (up to $10) for it to arrive the same day, or otherwise wait two to three days (during which the platform collects interest). Finally, these networks are usually geographically limited (Venmo is US only, for example) and do not support peer-to-peer payments outside their networks (that is, a PayPal user cannot send funds to a Venmo wallet, meaning they must instead route it through several intermediary accounts or rails). In 2021, an estimated $2 trillion was processed globally by PayPal, Venmo, and Square’s Cash App, with an average of roughly $65 per transaction across more than 30 billion transactions.
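To make the “reassignment” idea concrete, here is a minimal Python sketch of a closed payment network as a single ledger. The class and function names are hypothetical, and the 1% instant cash-out fee capped at $10 is taken from the figures quoted above rather than from any specific platform’s current terms.

```python
class PlatformLedger:
    """Toy model of a closed payment network that holds all user funds."""

    def __init__(self):
        self.balances = {}  # user -> balance in cents

    def fund(self, user, cents):
        # Money enters from an outside rail (ACH, card) and is then
        # held by the platform on the user's behalf.
        self.balances[user] = self.balances.get(user, 0) + cents

    def transfer(self, sender, recipient, cents):
        # No money leaves the platform: two ledger entries change.
        if self.balances.get(sender, 0) < cents:
            raise ValueError("insufficient on-platform balance")
        self.balances[sender] -= cents
        self.balances[recipient] = self.balances.get(recipient, 0) + cents

    def total_held(self):
        return sum(self.balances.values())


def instant_cashout_fee(cents, rate_pct=1, cap_cents=1_000):
    """Fee for same-day withdrawal: a percentage, capped at a fixed amount."""
    return min(cents * rate_pct // 100, cap_cents)


ledger = PlatformLedger()
ledger.fund("alice", 20_000)            # $200 funded from a bank account
ledger.transfer("alice", "bob", 6_500)  # instant, at any hour
print(ledger.balances, ledger.total_held())
print(instant_cashout_fee(50_000), instant_cashout_fee(500_000))
```

The total held by the platform does not change when users pay one another, which is why such transfers can be instant and free while moving money off the platform is not.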

In sum, the various US payment rails tend to vary in terms of security, fees, and speed. No payment rail is perfect, but more important than their technical attributes is that they compete with one another, including within each category. There are multiple wire rails, multiple credit card networks, multiple digital payment processors and platforms. Each of these competes based on its advantages and drawbacks, and even within a single category, there are various fee structures. The credit card operator American Express, for example, charges far more than Visa, but it offers consumers more lucrative points and perks, and merchants a higher-income clientele. If a user decides they don’t want a credit card, or a merchant declines to take Amex, each has multiple alternatives available. And again, they can also make some transfers for free if they’re willing to lend their money to a given digital payment network for two to three days.
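A rough comparison, using only the fee ranges quoted in this section, shows why no single rail suits every payment. The table and function below are an illustrative sketch: the rail labels, the `fee_range` helper, and the two sample amounts are assumptions, and real fees vary by bank and provider.

```python
# Fee ranges quoted in this section, as
# (fixed_low, fixed_high, pct_low, pct_high) in dollars and fractions.
RAILS = {
    "wire": (40.0, 60.0, 0.0, 0.0),            # $25-$45 sender + $15 recipient
    "ach (consumer)": (0.0, 5.0, 0.0, 0.0),    # free, or at most $5
    "credit card": (0.0, 0.0, 0.015, 0.035),   # 1.5%-3.5%, paid by merchant
    "digital network (to business)": (0.0, 0.0, 0.02, 0.04),  # 2%-4%
}


def fee_range(rail, amount):
    """Return the (low, high) total fee in dollars for one payment."""
    fixed_lo, fixed_hi, pct_lo, pct_hi = RAILS[rail]
    low = round(fixed_lo + amount * pct_lo, 2)
    high = round(fixed_hi + amount * pct_hi, 2)
    return low, high


# Hypothetical payments: a small purchase and a large transfer.
for amount in (20, 50_000):
    print(f"Payment of ${amount:,}")
    for rail in RAILS:
        low, high = fee_range(rail, amount)
        print(f"  {rail:<30} ${low:,.2f} to ${high:,.2f}")
```

Under these assumptions, the mostly fixed wire fee is several times the size of a $20 purchase but a small fraction of what a percentage-based rail would take on $50,000.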

The 30% Standard

We might assume that the virtual world would have “better” payment rails than the “real world.” After all, its economy primarily involves goods that only exist virtually, and that are bought via purely digital (and thus low marginal cost) transactions, and are, for the most part, $5–$100 each. The virtual economy is also large. In 2021, consumers spent more than $50 billion on digital-only video games (in contrast to physical discs), and nearly $100 billion more on in-game goods, outfits, and extra lives. As a point of comparison, $40 billion was spent at the theatrical film box office in 2019, the last year before the COVID-19 pandemic, and $30 billion on recorded music. What’s more, the “GDP” of the virtual world is growing rapidly: it has quintupled on an inflation-adjusted basis since 2005. In theory, these facts should mean creativity, innovation, and competition in payments. In practice, the reverse has been true; the payment rails of today’s virtual economy are more expensive, cumbersome, slow to change, and less competitive than those in the real world. Why? Because what we consider to be virtual payment rails, such as PlayStation’s wallet, Apple’s Apple Pay, or in-app payment services, are really stacks of different “real world” rails and a forced bundle of many other services.

In 1983, the arcade manufacturer Namco approached Nintendo about publishing versions of its titles, such as Pac-Man, on its Nintendo Entertainment System (NES). At the time, the NES was not intended to be a platform. Instead, it played only titles made by Nintendo. Eventually, Namco agreed to pay Nintendo a 10% licensing fee on all of its titles that appeared for NES (Nintendo would have right of approval over every individual title), plus another 20% for Nintendo to manufacture Namco’s game cartridges. This 30% fee ultimately became an industry standard, replicated by the likes of Atari, Sega, and PlayStation.2

Forty years later, few people play Pac-Man anymore, and costly cartridges have transformed into low-cost digital discs manufactured by game-makers and even lower-cost bandwidth for digital downloads (where the costs are mostly borne by consumers via internet fees and console hard drives). However, the 30% standard has endured and expanded to all in-game purchases, such as an extra life, digital backpack, premium pass, subscription, update, and more (this fee also covers the two to three percentage points charged by an underlying payment rail, such as PayPal or Visa).

Console platforms have several rationales for the fee beyond simply making money. The most important is how they enable game developers themselves to make money. For example, Sony and Microsoft typically sell their respective PlayStation and Xbox consoles for less than they cost to manufacture, which makes it cheaper for consumers to access powerful GPUs and CPUs, as well as other related hardware and components that are needed to play a game. And this per-unit loss is before these platforms allocate the research and development investments to design their consoles, marketing costs to convince users to buy them, and exclusive content (that is, Microsoft and Sony’s internal game development studios) that encourages users to buy them upon release, rather than years later. Given that new consoles usually enable new or better capabilities, speedier adoption should benefit developers and players alike.

The platforms also develop and maintain a number of proprietary tools and APIs that developers need in order to make their games run on a given console. The platforms also operate online multiplayer networks and services such as Xbox Live, Nintendo Switch Online, and PlayStation Network. These investments help game-makers, but they lead the platform to attempt to recoup and then profit from their expenditures—hence the 30% fee.

Gaming platforms may have a rationale for a 30% fee, but that does not mean the fee is set by the market, nor that it is fully earned. Consumers are forced to buy these consoles at below cost; there’s no option to buy a more expensive unit that has 30% lower prices on software. And while consoles must attract developers, they don’t compete against one another for these developers. Most game-makers release their titles on as many platforms as possible in order to reach as many players as possible. As such, none of the major consoles stands to benefit from offering developers better terms. A 15-percentage-point reduction by Xbox (from 30% to 15%) would mean a game publisher would make 21% more from every copy they sold on the Xbox, but if they chose not to release their title on PlayStation or Nintendo Switch as a result, they’d miss out on as much as 80% of total sales. This might net Microsoft some additional customers, but not 400% more, the figure required to make the publisher whole. If Microsoft’s maneuver were matched by PlayStation and Nintendo, all three platforms would lose half of their software revenue and for little benefit.
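The arithmetic behind these figures can be checked with a short Python sketch. The 20% Xbox share of sales is an assumption read off the “as much as 80%” figure above, and the variable names are illustrative. The sketch also shows the break-even growth both with and without crediting the publisher’s higher per-copy share.

```python
def publisher_share(platform_fee):
    """Fraction of each sale the publisher keeps."""
    return 1.0 - platform_fee


OLD_FEE = 0.30
NEW_FEE = 0.15      # hypothetical Xbox-only fee after the cut
XBOX_SHARE = 0.20   # assumption: the other 80% of sales are on rival consoles

# Per-copy gain from the lower fee: 85 cents on the dollar instead of 70.
per_copy_uplift = publisher_share(NEW_FEE) / publisher_share(OLD_FEE) - 1

# Unit growth needed on Xbox to replace sales lost elsewhere,
# ignoring the better fee: 1 / 0.20 = 5x as many copies.
growth_ignoring_fee = 1 / XBOX_SHARE - 1

# Same question, counting the higher share kept on each copy.
baseline_revenue = 1.0 * publisher_share(OLD_FEE)
exclusive_revenue = XBOX_SHARE * publisher_share(NEW_FEE)
growth_with_fee = baseline_revenue / exclusive_revenue - 1

# If every platform matched the cut, platform software revenue would scale by:
platform_revenue_ratio = NEW_FEE / OLD_FEE

print(f"per-copy uplift:            {per_copy_uplift:.1%}")
print(f"growth needed (units only): {growth_ignoring_fee:.0%}")
print(f"growth needed (with fee):   {growth_with_fee:.0%}")
print(f"platform revenue ratio:     {platform_revenue_ratio:.0%}")
```

Under these assumptions, an Xbox exclusive would need to multiply its Xbox sales several times over for the publisher to break even, which is far more than a fee cut alone could plausibly deliver.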

The most pointed critique of the 30% cut focuses on the console’s proprietary tools, APIs, and services. In many cases, they add cost to the developer, rather than aid them. In other cases, they produce limited value. And in some cases, they serve only to lock in customers and developers alike, and to the detriment of both groups. This reality can be seen clearly in three areas: API collections, multiplayer services, and entitlements.

For a game to run on a specific device, it needs to know how to communicate with that device’s many components, such as its GPU or microphone. To support this communication, console, smartphone, and PC operating systems produce “software development kits” (or SDKs) that include, among other things, “collections of APIs.” In theory, a developer could write their own “driver” to talk to these components, or use free and open-source alternatives. OpenGL is another collection of APIs used to speak to as many GPUs as possible from the same codebase. But on consoles and Apple’s iPhone, a developer can only use those made by the platform’s operator. Epic Games’ Fortnite must use Microsoft’s DirectX collection of APIs to speak to the Xbox’s GPU. The PlayStation version of Fortnite must use PlayStation’s GNMX, while Apple’s iOS requires Metal, Nintendo Switch requires Nvidia’s NVN, and so on.

Each platform argues that their proprietary APIs are best suited to their proprietary operating systems and/or hardware, and therefore developers can make better software using them, which leads to happier users. This is generally true, though the majority of virtual worlds operating today—and especially the most popular ones—are made to run on as many platforms as possible. As such, they are not richly optimized to any platform. Furthermore, many games don’t need every ounce of computing power. The variations in API collections and lack of open alternatives are partly why developers use cross-platform game engines such as Unity and Unreal, as they are designed to speak to every API collection. To this end, some developers might prefer to give up a little bit of performance optimization in order to instead optimize for their budget by using OpenGL, rather than pay or share a portion of revenues with Unity or Epic Games.

The challenge of multiplayer is a bit different. In the mid-2000s, Microsoft’s Xbox Live managed almost all of the “work” for an online game: communications, matchmaking, servers, and so on. Though this work was hard and expensive, it also substantially increased gamer engagement and happiness, which was good for developers. Yet 20 years later, almost all this cost is now borne and managed by a game’s maker. The transition reflects the growing importance of online services, and the shift to support cross-play. Most developers now want to manage their own “live ops,” such as content updates, competitions, in-game analytics, and user accounts, and it doesn’t make sense for Xbox to manage the live services for a game that’s integrated into PlayStation, Nintendo Switch, and more. But game developers are still obligated to pay the full 30% to gaming platforms and work through their online account systems. Furthermore, if the Xbox Live network goes offline due to technical difficulties, as an example, players cannot access Call of Duty: Modern Warfare’s online play. And of course, players themselves are already paying a monthly subscription fee to Microsoft for Xbox Live, and no part of that fee goes to the developers whose games justify its existence and who pay the most in server bills.

Critics argue that the real goals of platform services are to create extra distance between developers and players, lock both groups into hardware-based platforms, and justify the platform’s 30% fee. So when players buy a digital copy of FIFA 2017 from the PlayStation Store, that copy is forever tied to PlayStation. In other words, PlayStation has already netted its $20 from a $60 purchase, but if the player wants to play the game on Xbox, they’ll have to spend another $60 even if the developer would be willing to give it to the player for free. The more a user pays a console manufacturer like Sony—thus repaying their console loss—the more expensive it becomes to ever leave.

Platforms take a similar approach when it comes to game-related content. If a player beats Bioshock on PlayStation, and later switches to Xbox, they not only need to repurchase the game, they’d need to beat it a second time to replay the final level. In addition, if PlayStation awarded Bioshock players any trophies (say, for beating the game faster than 99% of other players), PlayStation would keep these awards for eternity. As I discussed in Chapter 8, Sony was able to use their control over online play to prevent cross-platform gaming for more than a decade. This helped neither developers nor players—it obviously harmed both—but it (theoretically) helped Sony retain PlayStation customers by making it harder to acquire Xbox customers.

The payment rails of console gaming are not discrete, as they are in the real world. Players and developers alike are prohibited from directly using credit cards, ACH, wires, or digital payment networks, and the billing solution offered by a platform is bundled with many other things—entitlements, save data, multiplayer, APIs, and more. It doesn’t matter what the market rate is, or what a developer or user needs. There is no discount if a publisher’s game is offline only, or if they don’t need the online multiplayer services of a given platform. It also doesn’t matter if a publisher’s game was bought at GameStop, rather than digitally at PlayStation’s Store—even though the publisher had to give GameStop a cut of the transaction, too. The fee is the fee. The best illustration of this reality is a platform that lacks any hardware at all, yet has proven more dominant than Nintendo, Sony, or Microsoft.

The Rise of Steam

In 2003, the game-maker Valve launched the PC-only application Steam, effectively the iTunes of games. At the time, most PC hard drives could store just a few games at a time—a problem that was only getting worse as the size of the average game file grew faster than affordable storage space. Finding and then downloading these games, uninstalling them to free up space for others, reinstalling the old game later on when the user wanted to return to it, and shifting them to a new PC were all laborious. A user had to manage multiple credentials, numerous credit card receipts, web addresses, and so on. Furthermore, many online multiplayer games, such as Valve’s own Counter-Strike, were moving to a “games-as-a-service” model in which the game would be updated or patched on a frequent basis. This allowed games to be “refreshed” with new features, weapons, modes, and cosmetics, but it also meant that players had to constantly update their games, causing no small amount of frustration. Imagine coming home after a long day of work to play Counter-Strike, only to discover you had to wait an hour for an update to download and be installed.

Steam solved these problems by creating a “game launcher” that not only indexed and centrally managed game installer files, but also took care of a user’s rights to these games and automatically downloaded and updated the games a player had installed on their PC. In exchange, Steam would keep 30% from the sale of every game through its system, just like the console game platforms.

Over time, Valve added more services to Steam, collectively called Steamworks. For example, Valve used the Steam account system to create an early “social network” of friends and teammates that any game could access. Players no longer had to search for and re-add their friends (or rebuild their teams) every time they bought a new game. Steamworks’ Matchmaking, meanwhile, enabled developers to use Steam’s player networks to create balanced and fair online multiplayer experiences. Steam Voice allowed players to speak in real time. These services were provided at no additional cost to developers, and unlike console platforms, Steam did not charge players themselves to access online networks or services, either. Later, Valve made Steamworks available to games not sold on Steam, such as a physical copy of Call of Duty that was bought from GameStop or Amazon, thereby building a bigger and more richly integrated network of online gaming services. Steamworks was theoretically free to developers, but it also forced each game to use Steam’s payment service for all subsequent in-game transactions. As such, developers paid for Steamworks by netting Steam 30% of ongoing revenues.

Steam is seen as one of the most important innovations in PC gaming history, and a critical reason the segment remains as large as console gaming, even with its greater complexity of use and higher cost of entry (a decent gaming PC still costs more than $1,000, while meeting the specifications of newer consoles requires $2,000 or more). But nearly 20 years later, its technical innovations in game distribution, rights management, and online services have largely been commoditized. In some cases, users and publishers skip them altogether. Many PC gamers, for example, now use Discord for audio chat, rather than Steam’s voice chat. The rise of cross-platform gaming also means that most in-game trophies and play records are awarded and managed by a game-maker, rather than by Steam.

Yet no one has managed to compete with or disrupt Valve’s platform, even though PCs, unlike consoles, are open ecosystems. A player can download as many software stores as they like and even buy a game directly from the publisher. The publisher can also withhold that title from Steam and still reach its customers. But Steam’s power and centrality endure.

In 2011 gaming giant Electronic Arts launched its own store, EA Origin, which would exclusively sell PC versions of its titles (thus cutting distribution fees from 30% to 3% or less). Eight years later, EA announced it would return to Steam. Activision Blizzard, the studio behind hits such as Warcraft and Call of Duty, has spent 20 years trying to leave Steam, but except for free-to-play titles such as Call of Duty: Warzone, most of its titles continue to sell through the platform. And Amazon, the largest e-commerce platform in the world and owner of Twitch, the largest video game livestreaming service outside China, has struggled to gain any meaningful share of PC gaming—even after it began adding free games and in-game items to its popular Prime subscription. None of the above even prompted a modest fee cut or policy change by Valve.

Steam’s ongoing success is partly due to its outstanding service and rich feature set. It is also protected by its forcible bundling of distribution, payments, online services, entitlements, and other policies—just like consoles.

One example is that any game purchased through the Steam store or running through Steamworks will forever require Steam to be played. Even decades after Steam rendered its services to a player and developer, the platform will continue to get a cut of ongoing revenues. The only way around this is for the publisher to pull their game from Steam altogether—which would mean requiring users to repurchase the title through another channel. Because Steam does not allow players to export their achievements earned on the platform, they’d lose any awards issued through Steamworks if they did leave Steam.

According to some reports, Steam also uses “most favored nations” (MFN) clauses to ensure that even if a competing store offered lower distribution fees, a publisher would not be able to exploit this to undercut Steam’s consumer-facing prices. Consider a $60 game sold by Steam, which takes $18 (30%) of the $60 and nets $42 to the publisher. If a competitor offered 10% fees, a publisher could still sell that game for $60, thereby netting $54 ($12 more). However, users won’t jump from a store they love (and one that is used by all of their friends and contains decades of game purchases and awards) for nothing. A competing store would have to disrupt Steam by splitting the fee reduction with developers and consumers. The game might be sold for $50, which nets $45 to the publisher ($3 more) and saves the consumer $10 (this price cut might result in more total purchases, too). Unfortunately, Steam’s MFNs made this impossible. If a publisher cuts their price on a competitor’s store, they would have to do the same on Steam. Alternatively, they could leave the store—but a publisher would doubtlessly lose more in customers than they might hope to make up in margin. Crucially, this MFN agreement applied even to a publisher’s own store, rather than just third-party aggregators like Steam.
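The arithmetic behind the $60 example can be checked with a minimal sketch. The fee rates and prices come from the paragraph above; the function and variable names are illustrative only.

```python
from fractions import Fraction

def publisher_net(price, fee_percent):
    """Dollars the publisher keeps after the store takes its percentage."""
    return price * (1 - Fraction(fee_percent, 100))

steam_net = publisher_net(60, 30)         # $60 game on Steam at a 30% fee
rival_full_price = publisher_net(60, 10)  # same $60 price on a 10% store
rival_discounted = publisher_net(50, 10)  # fee cut shared with the consumer

scenarios = [
    ("Steam, $60 at 30%", steam_net),
    ("Rival, $60 at 10%", rival_full_price),
    ("Rival, $50 at 10%", rival_discounted),
]
for label, net in scenarios:
    difference = float(net - steam_net)
    print(f"{label}: publisher nets ${float(net):.2f} ({difference:+.2f} vs. Steam)")
```

The third scenario is the one an MFN clause would block: the publisher earns $3 more and the consumer pays $10 less, but only if the lower price does not also have to be offered on Steam.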

The most notable effort to compete with Steam came from Epic Games, which launched the Epic Games Store in 2018 with the explicit purpose of reducing distribution fees in the PC gaming industry. To attract developers as well as users, Epic sought to offer all of the benefits of Steam, but with fewer limitations and better prices.

Games sold through EGS would not require a player to keep using EGS as long as they wanted to play the game. Players actually owned a copy of a game, rather than the right to a copy of that game inside EGS; game-makers could therefore leave the store at any time without abandoning their customers. Players owned their in-game data, too. If they ever wanted to leave the platform for a publisher’s own store, or any other, they could take their trophies and player networks with them. EGS offered 12% store fees (which dropped to 7% if the developer was already using Unreal, thereby ensuring that even if a developer used Epic’s engine and store, they’d pay no more than 12% combined, even if multiple distinct products were bought, used, or licensed).

Epic also used its hit game Fortnite, which was generating more revenue per year than any other game in history, to bring players to the store. With an update, PC copies of the game were transformed into the Epic Games Store itself, with Fortnite a launchable title within it. Epic also spent hundreds of millions giving away free copies of hit games such as Grand Theft Auto V and Civilization V, and hundreds of millions more on exclusive windows to a series of not-yet-released PC titles. Due to Steam’s MFNs, it could not, however, offer lower prices on non-exclusive titles.

On December 3, 2018—only three days before Epic launched its store—Steam announced that it would cut its commission to 25% after a publisher’s title exceeded $10 million in gross sales, and to 20% after $50 million. This was an early victory for Epic, though the company noted Valve’s concession most strongly benefited the largest game developers—that is, the few global giants most likely to start their own stores or pull their games from Steam. It did not apply to the many thousands of independent developers struggling to stay afloat, let alone turn a big profit. Valve also declined to open up Steamworks. Nevertheless, the move shifted hundreds of millions in annual profits from Steam to developers.
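To see why the concession favored the largest publishers, here is a rough sketch of the tiered commission. The thresholds and rates are those quoted above; the assumption that each lower rate applies only to sales beyond its threshold (rather than retroactively to all sales) is mine, as are the function name and sample sales figures.

```python
# (gross-sales threshold in dollars, commission percent above that threshold)
TIERS = [(0, 30), (10_000_000, 25), (50_000_000, 20)]

def steam_commission(gross_sales):
    """Commission in whole dollars, ASSUMING marginal (not retroactive) tiers."""
    total = 0
    for index, (floor, percent) in enumerate(TIERS):
        if gross_sales <= floor:
            break
        # Upper edge of this band: the next threshold, or the title's own sales.
        ceiling = TIERS[index + 1][0] if index + 1 < len(TIERS) else gross_sales
        band = min(gross_sales, ceiling) - floor
        total += band * percent // 100
    return total

for sales in (5_000_000, 20_000_000, 100_000_000):
    fee = steam_commission(sales)
    print(f"${sales:,} gross: ${fee:,} commission ({100 * fee / sales:.1f}% effective)")
```

Under this assumption, a title grossing $5 million still pays the full 30%, while one grossing $100 million pays an effective 23%.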

By January 2020, Epic had spent huge amounts of money yet inspired no further concessions from Steam (nor the console platforms). However, Epic’s CEO, Tim Sweeney, expressed his view that competing stores would need to cut their rates, tweeting that EGS was a “coin toss”: “Heads, other stores don’t respond, so Epic Games Store wins [by stealing market share] and all developers win. Tails, competitors match us, we lose our revenue sharing advantage, and maybe other stores win, but all developers still win.”³ Sweeney’s gambit may ultimately prove right, but as of February 2022, Valve’s policies had yet to budge a second time. EGS, meanwhile, was accumulating enormous losses and showing limited evidence of sustainable success with players. Epic’s public disclosures showed the platform’s revenues grew from $680 million in 2019⁴ to $700 million in 2020⁵ and $840 million in 2021.⁶ However, 64% of this spend was on Fortnite, with the title also driving 70% of platform revenue growth over the three-year period. With nearly 200 million unique users in 2021, some 60 million of which were active in December, EGS does seem popular (Steam has an estimated 120–150 million monthly users). But as the platform’s revenues suggest, many of these players are likely using EGS just to play Fortnite, which can only be accessed through EGS on PCs. It’s also likely that many non-Fortnite players use EGS solely for its free games. In 2021 alone, Epic released 89 titles for free, worth a combined $2,120 at retail (or roughly $24 each). Over 765 million copies were redeemed that year, representing a notional value of $18 billion, compared to $17.5 billion the prior year and $4 billion in 2019.* While these giveaways did draw players, they did not lead to much user spending (they probably harmed it). The average user spent between $2 and $6 on non-Fortnite content throughout the entirety of 2021 (and received $90 to $300 in free games). Leaked documents from Epic Games suggested that EGS lost $181 million in 2019, $273 million in 2020, and would lose between $150 and $330 million in 2021, with breakeven occurring in 2027 at the earliest.⁷
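The giveaway figures can be roughly reproduced from the numbers quoted above. This is only a back-of-the-envelope sketch: it assumes every redeemed copy is valued at the average retail price of the 89 titles, and it rounds the user counts to 200 million and 60 million.

```python
FREE_TITLES = 89
COMBINED_RETAIL_PRICE = 2_120    # dollars, for one copy of each free title
COPIES_REDEEMED = 765_000_000
ANNUAL_USERS = 200_000_000       # "nearly 200 million", rounded (assumption)
DECEMBER_ACTIVES = 60_000_000    # "some 60 million", rounded (assumption)

# ASSUMPTION: each redeemed copy is worth the average retail price.
average_price = COMBINED_RETAIL_PRICE / FREE_TITLES
notional_value = COPIES_REDEEMED * average_price
per_annual_user = notional_value / ANNUAL_USERS
per_december_active = notional_value / DECEMBER_ACTIVES

print(f"Average retail price per title: ${average_price:.2f}")
print(f"Notional value of redemptions: ${notional_value / 1e9:.1f} billion")
print(f"Free games per annual user: ${per_annual_user:.0f}")
print(f"Free games per December active user: ${per_december_active:.0f}")
```

The two per-user results bracket the $90 to $300 range cited in the text, depending on whether the value is spread across all annual users or only those active in December.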

One could argue that because PCs are an open platform, no store can have a monopoly—and notably, the dominant online game distributor is independent from both Microsoft and Apple, which run the Windows and Mac operating systems and offer their own stores. At the same time, it’s telling that there is only one major profitable store, and its biggest suppliers struggle to exist outside of it. Few should consider this a healthy outcome, especially at a 30% fee or even 20% fee. This is because, as always, payments are a bundle that spans not just the processing of a transaction, but a user’s online existence, their storage locker, their friendships, and their memories, as well as a developer’s obligation to their oldest customers.

From Pac-Man to iPod

You might be wondering what Pac-Man cartridges, Steam MFNs, and Call of Duty copies have to do with the Metaverse. Well, the gaming industry isn’t just informing the creative design principles and building the underlying technologies of the “next-generation internet.” It also serves as the Metaverse’s economic precedent.

In 2003, Steve Jobs introduced digital distribution to most of the world through the iTunes music store. For his business model, he chose to emulate the 30% commission commanded by Nintendo and the rest of the gaming industry (though unlike consoles, the iPod itself had gross margins above 50%, not below 0%). Five years later, this 30% was transposed to the iPhone’s app store, with Google quickly following suit for its Android operating system.

Jobs also decided, at this point, to adopt the closed software model used by the console platforms, which Apple had not previously used for its Mac laptops and computers, or for its iPod.† On iOS, all software and content would need to be downloaded from Apple’s App Store, and as with PlayStation, Xbox, Nintendo, and Steam, only Apple had a say over what software could be distributed and how users would be billed.

Google took a more permissive approach with Android, which technically allowed users to install apps without using the Google Play Store—and even to use third-party app stores. But this required users to navigate deep into their account settings, and grant permission for individual applications (for example, Chrome, Facebook, or the mobile Epic Games Store) to install “unknown apps,” while warning users this made their “phone and personal data more vulnerable to attack” and forcing them to agree “that you are responsible for any damage to your phone or loss of data that may result from their use.” Although Google did not take responsibility for any damage or loss of data resulting from the use of apps distributed by its Google Play store, the additional steps and warnings meant that while most PC users downloaded software directly from its maker, such as Microsoft Office from Microsoft.com or Spotify from Spotify.com, almost no one did on Android.

It took more than a decade for the problems associated with the proprietary model employed by Apple, and in a different way, by Google, to surface on the global stage. In June 2020, the European Union sued Apple after Spotify and Rakuten, two streaming media companies, alleged Apple used its fees to benefit its own software services (such as Apple Music) and stifle competitors. Two months later, Epic Games sued both Apple and Google, alleging their 30% fees and controls were unlawful and anti-competitive. A week before the suit, Sweeney had tweeted that “Apple has outlawed the metaverse.”

The delay had several causes. One was the unequal impact of Apple’s store policies, which primarily charged “new economy” businesses and waived fees on old economy ones. Apple established three broad categories of apps when it came to in-app purchases. The first category was transactions made for a physical product, such as buying Dove soap from Amazon or loading a Starbucks gift card. Here, Apple took no commission and even allowed these apps to directly use third-party payment rails, such as PayPal or Visa, to complete a transaction. The second category was so-called reader apps, which included services that bundle non-transactional content (for example, an all-you-can-eat Netflix, New York Times, or Spotify subscription), or that allow a user to access content they previously purchased, such as a movie previously bought from Amazon’s website that the user now wants to stream on Amazon’s Prime Video iOS app. The third category was interactive apps in which users can affect the content (in a game, or cloud drive, for example) or make individual transactions for digital content (such as a specific movie rental or purchase on the Prime Video app). These apps had no choice but to offer in-app billing.

While these interactive apps could offer online browser-based payment alternatives, like reader apps, players could still not be told about these options inside the app itself. As such, these alternatives were rarely used—if known. Imagine the last time you used an app which supported in-app payments from Apple—did you ever wonder whether the app’s developer offered better prices online? And if they did, how much cheaper would they need to be for you to bother signing up for their account and entering your payment information, rather than just click “Buy” in the App Store? 10%? 15%? How big would the purchase need to be (saving 20% on a $0.99 extra life doesn’t seem worth it)? Maybe 20% worked for most purchases, but then a developer was “saving” only 7%, as they then needed to cover the fees charged by PayPal or Visa. If, instead, a game could require a customer to go elsewhere, like Netflix or Spotify, they might be able to save 20% or even 27%.
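The savings figures in this paragraph follow from simple subtraction in percentage points of list price. The sketch below mirrors that back-of-the-envelope approach; the 3% processor fee is an assumption inferred from the 7% and 27% figures above, and the function name is hypothetical.

```python
APPLE_FEE = 30      # percent of list price, from the text
PROCESSOR_FEE = 3   # percent; ASSUMED, implied by the 7% and 27% figures

def developer_gain_points(platform_fee, web_discount, processor_fee):
    """Percentage points of list price a developer gains by billing on the web."""
    return platform_fee - web_discount - processor_fee

# A 20% web discount leaves the developer with only 7 points of savings.
gain_with_discount = developer_gain_points(APPLE_FEE, 20, PROCESSOR_FEE)
# With no discount (customers must go elsewhere), the gain is 27 points.
gain_without_discount = developer_gain_points(APPLE_FEE, 0, PROCESSOR_FEE)

print(f"Gain with a 20% discount: {gain_with_discount} points")
print(f"Gain with no discount: {gain_without_discount} points")
```

A stricter calculation would charge the processor fee on the discounted price rather than the list price, which changes the result by a fraction of a point.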

Various emails and documents from Epic’s court case against Apple revealed that the App Store’s multi-category payment models resulted primarily from where Apple believed it could exert leverage. But leverage also correlated with where Apple believed it could create value. Mobile commerce, of course, has been critical to the growth of the global economy for some time, but most of it was a reallocation from physical retail. For many people, the iPad form factor made reading the New York Times more compelling on the tablet than in print, but Apple didn’t enable the journalism industry. Mobile gaming was different. When the App Store launched, the gaming industry generated just over $50 billion per year—$1.5 billion of which was in mobile. By 2021, mobile was more than half the $180 billion industry and represented 70% of growth since 2008.

The economics of the App Store exemplify this dynamic. In 2020, an estimated $700 billion was spent using iOS apps. However, less than 10% of this was billed by Apple. Of this 10%, nearly 70% was for games. Put differently, seven in every 100 dollars spent inside iPhone and iPad apps were for games, but 70 of every 100 dollars grossed by the App Store were from the category. Given that these devices are not gaming-focused, are rarely bought for this purpose, and that Apple offers almost none of the online services of a gaming platform, this figure often comes as a surprise. The judge overseeing Epic Games’ lawsuit against Apple famously told Apple CEO Tim Cook: “You don’t charge Wells Fargo, right? Or Bank of America? But you’re charging the gamers to subsidize Wells Fargo.”⁸
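The "seven in 100" and "70 in 100" figures are two views of the same numbers. A minimal sketch, treating "less than 10%" and "nearly 70%" as exactly 10% and 70% (so the results are upper bounds):

```python
from fractions import Fraction

TOTAL_IOS_SPEND_BN = 700                   # 2020 estimate, in billions of dollars
APPLE_BILLED_SHARE = Fraction(10, 100)     # "less than 10%", taken as an upper bound
GAMES_SHARE_OF_BILLED = Fraction(70, 100)  # "nearly 70%", rounded up

apple_billed_bn = TOTAL_IOS_SPEND_BN * APPLE_BILLED_SHARE
games_billed_bn = apple_billed_bn * GAMES_SHARE_OF_BILLED
games_share_of_all_spend = APPLE_BILLED_SHARE * GAMES_SHARE_OF_BILLED

print(f"Billed by Apple: about ${float(apple_billed_bn):.0f} billion")
print(f"Of which games: about ${float(games_billed_bn):.0f} billion")
print(f"Games as a share of all iOS app spend: {float(games_share_of_all_spend):.0%}")
```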

Because the App Store’s revenue came primarily from a tiny, but fast-growing, segment of the world economy, it also took time for the App Store to become a large business worth scrutinizing. Ironically, even Apple seemed to doubt it would become one. Two months after its launch, Jobs reviewed the nascent business with the Wall Street Journal. In its report, the paper stated that “Apple wasn’t likely to derive much in the way of a direct profit from the business. . . . Jobs is betting applications will sell more iPhones and wireless-enabled iPod touch devices, enhancing the appeal of the products in the same way music sold through Apple’s iTunes has made iPods more desirable.” To this end, Jobs told the Journal that Apple’s 30% fees were intended to cover credit card fees and other operating expenses for the store. He also said that the App Store “is going to crest a half a billion, soon . . . Who knows, maybe it will be a $1 billion marketplace at some point in time.” The App Store passed this $1 billion mark in its second year, with Apple noting that it now operated “a bit over break-even.”⁹

By 2020, the App Store had become one of the best businesses on earth. With revenues of $73 billion and an estimated 70% margin, it would’ve been large enough to be a member of the Fortune 15 if it was spun off from its parent company (which is the largest company in the world by market capitalization, as well as the most profitable in dollar terms). And this is despite the fact that the App Store billed less than 10% of transactions flowing through its system, which themselves made up less than 1% of the global economy. Were iOS an “open platform,” these profits would likely have been competed away, at least in part. Visa and Square would offer smaller in-app fees, while competing app stores would emerge that offered services comparable to Apple’s but at lower prices. But this isn’t possible because Apple controls all of the software on its device, and like gaming consoles, keeps it closed and bundled. And its only major competitor, Google, is just as happy with the state of play.

These issues aren’t exclusive to the Metaverse, of course, but their consequences for it will be profound, for the same reason Judge Gonzalez Rogers narrowed in on Apple’s gaming policies: the entire world is becoming game-like. That means it’s being forced into the 30% models of the major platforms.

Take Netflix, as an example. In December 2018, the streaming service chose to remove in-app billing from its iOS app. As a “reader app,” this was the company’s right, and its financial planning team had decided that while asking users to sign up on Netflix.com and manually enter their credit card would cost them some sign-ups versus Apple’s one-click in-app alternative, this missed revenue was less than the 30% that it would have to send Apple.‡ But in November 2021, Netflix added mobile games to its subscription plan, which turned the company into an “interactive app” and forced the company to return to Apple’s own payment service (or stop offering an iOS app altogether).

But why, exactly, does Apple’s 30% “outlaw” the Metaverse, to return to Sweeney’s pre-lawsuit remark? There are three core reasons. First, it stifles investment in the Metaverse and adversely affects its business models. Second, it cramps the very companies that are pioneering the Metaverse today, namely integrated virtual world platforms. Third, Apple’s desire to protect these revenues effectively prohibits many of the most Metaverse-focused technologies from further development.

High Costs and Diverted Profits

In the “real world,” payment processing costs as little as 0% (cash), typically maxes out at 2.5% (standard credit card purchases), and sometimes reaches 5% (in the case of low-dollar-value transactions with high minimum fees). These figures are low because of robust competition between payment rails (wire versus ACH, for example) and within them (Visa versus MasterCard and American Express).

But in the “Metaverse,” everything costs 30%. True, Apple and Android do provide more than just payment processing—they also operate their app stores, hardware, operating systems, suite of live services, and so on. But all of these capabilities are forcibly bundled and consequently not exposed to direct competition. Many payment rails are also bundles. For example, American Express provides consumers with access to credit, as well as its payment networks, perks, and insurance, while merchants gain access to lucrative clientele, fraud service, and more. Yet they are also available unbundled and compete based on the specifics of these bundles. In smartphones and tablets, there is no such competition. Everything is bundled together, in only two flavors: Android and iOS. And neither system has an incentive to cut fees.

This doesn’t necessarily mean the bundle is overpriced or problematic. But it certainly appears to be. The average annual interest rate on unsecured credit card loans is 14%–18%, while most states have usury prohibitions that cap rates at 25%. Even the most expensive malls in the world don’t charge rents that work out to 30% of a business’s revenue, nor do the tax rates in the highest-taxed nations’ highest-taxed states’ highest-taxed cities come close to 30%. If they did, every consumer, worker, and business would leave and every taxing body would suffer as a result. But in the digital economy, there are only two “countries” and both are happy with their “GDP.”

Furthermore, average small-to-medium business profit margins in the US are between 10% and 15%. In other words, Apple and Google collect more in profit from the creation of a new digital business or digital sale than those who invested (and took the risk) to make it. It’s hard to argue that this is a healthy outcome for any economy. Considered another way, cutting the commissions of these platforms from 30% to 15% would more than double the profits of independent developers—with much of that money then reinvested into their products. Many if not most would agree that this is probably better than funneling more money to two of the richest companies on earth.

The current dominance of Apple and Google also leads to undesirable economic incentives. Nike, which is already pioneering virtual athletics apparel in the Metaverse, serves as a good example. If Nike sells physical shoes through its Nike iOS app, Apple collects a 0% fee. Later, if Nike decides to give the purchasers of its real-world shoes the rights to virtual copies (“buy Air Jordans in-store, get a pair in Fortnite,” for example), Apple will still not take a fee. If the owner then “wears” these virtual shoes in the real world, as might be rendered through an iPhone or forthcoming Apple AR headset, Apple is still owed nothing. The same applies if Nike’s physical shoes have Bluetooth or NFC chips that speak to Apple’s iOS devices. But if Nike wants to sell stand-alone virtual shoes to a user, or virtual running tracks, or virtual running lessons, Apple is owed 30%. In theory, Apple would be owed a cut if it determined the primary source of value in a combined virtual + physical set of shoes was virtual too. The upshot is a lot of chaos for a series of outcomes in which the function of Apple’s device, components, and capabilities is largely the same.

Here is another hypothetical, this time focused on Activision, a virtual-first company, unlike Nike. If a Call of Duty: Mobile user buys a $2 pair of virtual sneakers for her character, Apple collects $0.60. But if Activision asks the user to instead watch $2 worth of advertisements in exchange for a free pair of virtual sneakers, Apple collects $0. In short, the consequences of Apple’s policies will shape how the Metaverse is monetized, and who leads that process. For Nike, the 18% differential between Apple’s 30% fee and the 12% argued by Epic is nice, but not necessary. And if Nike wants, it can skip it altogether by leveraging its existing, physical business. Most start-ups do need the extra margin, however, and can’t rely on a pre-Metaverse business line.

These problems are only going to grow in the years to come. Today, a high school tutor can sell video-based lessons directly to customers via web browser, and if they choose to offer an iOS app, they can opt against in-app payments. This is because video-focused apps are “reader apps.” But if this tutor wants to add interactive experiences, such as a physics class that involves the construction of a simulated Rube Goldberg machine, or an instructional course on automotive engine repairs with rich 3D immersion, they are obligated to support in-app payments because they’re now an “interactive app.” Apple or Android receive a cut specifically because this tutor chose to invest in a harder, and more expensive, lesson.

Apple would argue that the added benefit of immersion would justify their cut, but the math here is tricky. A $100 non-interactive textbook sold outside the app store would need to charge $143 to make up for Apple’s fee. The teacher would need an even higher price to recoup their added investments and risk—and for every additional dollar they charged, Apple would take 30 cents. At $200, Apple receives $60 for the new lesson, while the teacher’s take-home has increased by only $40 and the students are out an extra $100. It’s difficult to read this as a positive societal outcome—especially given that the student’s educational experience is unlikely to have doubled in quality, no matter the significance of 3D-specific enhancements.
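The textbook math can be verified with a short sketch. The 30% fee and the $100 and $200 prices are from the paragraph above; the function names are illustrative.

```python
from fractions import Fraction

APPLE_FEE = Fraction(30, 100)

def price_to_net(target_net, fee=APPLE_FEE):
    """List price needed so the seller keeps target_net after the fee."""
    return target_net / (1 - fee)

def split(price, fee=APPLE_FEE):
    """Return (platform cut, seller take-home) for a given list price."""
    platform_cut = price * fee
    return platform_cut, price - platform_cut

break_even_price = price_to_net(100)   # price that still nets the teacher $100
apple_cut, teacher_net = split(200)    # the $200 interactive lesson
teacher_gain = teacher_net - 100       # versus the $100 sold outside the store
student_extra = 200 - 100

print(f"Break-even list price: ${float(break_even_price):.2f}")
print(f"At $200: Apple ${float(apple_cut):.0f}, teacher ${float(teacher_net):.0f}")
print(f"Teacher gains ${float(teacher_gain):.0f}; students pay ${student_extra} more")
```

Of the extra $100 the students pay, $60 goes to the platform and $40 to the person who built the lesson.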

Constrained Virtual World Platform Margins

The problems of 30% payment rails are particularly acute in virtual world platforms.

Roblox is full of happy users and talented creators. However, few of these creators are making money. Although Roblox Corporation had nearly $2 billion in revenues in 2021, only 81 developers (i.e., companies) netted over $1 million that year, and only seven crossed $10 million. This is bad for everyone, really, given that more developer revenue would mean more developer investment and better products for users, which in turn drives more user spending.

Unfortunately, it’s difficult for developers to increase revenues because Roblox pays them only 25% of every dollar spent on their games, assets, or items. While this makes Apple’s 70%–85% payout rates seem generous, the reverse is true.

Imagine a hypothetical involving $100 in Roblox iOS revenue. Based on fiscal 2021 performance, $30 goes to Apple off the top, $24 is consumed by Roblox’s core infrastructure and safety costs, and another $16 is taken up by overhead. This leaves a total of $30 in pretax gross margin dollars for Roblox to reinvest in its platform. Reinvestment spans three categories: research and development (which makes the platform better for users and developers), user acquisition (which increases network effects, value for the individual player, and revenues for developers), and developer payments (which leads to the creation of better games on Roblox). These categories receive $28, $5, and $28 (this exceeds Roblox’s target 25% due to incentives, minimum guarantees, and other commitments to developers), or roughly $60 combined. As a result, Roblox currently operates at a roughly –30% margin on iOS. (Roblox’s blended margin is a bit better at –26%. This is because iOS and Android represent 75%–80% of total revenues by platform, with most of the remainder coming from platforms such as Windows, which do not take a fee.)
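The $100 waterfall can be laid out as a short sketch using the rounded figures quoted above. Note that the rounded line items sum to $61 rather than exactly $60, so the computed margin is –31%, in line with the roughly –30% cited in the text. Variable names are illustrative.

```python
revenue = 100                    # hypothetical Roblox iOS revenue, in dollars

# Costs taken off the top, per the text's fiscal 2021 figures.
store_fee = 30                   # Apple's 30%
infrastructure_and_safety = 24
overhead = 16
available_to_reinvest = revenue - store_fee - infrastructure_and_safety - overhead

# Reinvestment categories, using the rounded figures from the text.
reinvestment = {
    "research and development": 28,
    "user acquisition": 5,
    "developer payments": 28,
}
total_reinvested = sum(reinvestment.values())

# With $100 of revenue, the dollar result is also the margin in percent.
operating_result = available_to_reinvest - total_reinvested

print(f"Available to reinvest: ${available_to_reinvest}")
print(f"Total reinvested: ${total_reinvested}")
print(f"Operating result per $100 of iOS revenue: {operating_result:+d}")
```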

To summarize, Roblox has enriched the digital world and turned hundreds of thousands of people into new digital creators. But for every $100 of value it realizes on a mobile device, it loses $30, developers collect $25 in net revenue (that is, before all of their development costs), and Apple collects roughly $30 in pure profit even though the company puts nothing at risk. The only way for Roblox to increase developer revenues today is to deepen its losses or halt its R&D, which would in turn harm both Roblox and its developers over the long term.

Roblox’s margins should improve over time as neither overhead nor sales and marketing expenses are likely to grow as fast as revenues. However, these two categories will unlock only a few percentage points—not enough to cover its sizable losses or to materially increase developer revenue shares. R&D should offer some scale-related margin improvements, too, but fast-growing companies shouldn’t be achieving profitability through R&D operating leverage. Roblox’s largest cost category, infrastructure and safety, is unlikely to decrease as it is mostly driven by usage (which in turn drives revenue) and if anything, the company’s R&D is likely to enable experiences that cost more to operate per hour (for example, virtual worlds with high concurrency or that involve more cloud data streaming). The second-largest (and only remaining) cost category is store fees, which Roblox has no control over.

To Apple, Roblox’s margin constraints (and the consequences of those constraints on Roblox developer revenues) are a feature, not a bug, of the App Store system. Apple does not want a Metaverse comprised of integrated virtual world platforms, but of many disparate virtual worlds that are interconnected through Apple’s App Store and the use of Apple’s standards and services. By depriving these IVWPs of cash flow, while offering developers much more of it, Apple can nudge the Metaverse to this outcome.

Let’s return to my earlier example of a tutor looking to produce interactive classes. The tutor needs to increase the price of their lesson by 43% or more just to break even due to Apple’s 30% cut. But if they shift to Roblox, their price would need to roughly quadruple (an increase of about 300%) to offset the 75.5% collected by Roblox and Apple combined. While Roblox is much easier to use than Unity or Unreal, takes on many additional costs for the tutor (for example, server fees), and aids in customer acquisition, the enormity of this price gap will drive most developers to release standalone apps using Unity and Unreal, or to bundle together in an education-specific IVWP. In either outcome, Apple becomes the primary distributor of virtual software, with the App Store providing discovery and billing services.
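The break-even comparison reduces to one formula: if platforms collect a share s of each sale, the seller must multiply the price by 1 / (1 − s) to keep the same take-home. A minimal sketch using the 30% and 75.5% shares from the paragraph above (the function name is hypothetical):

```python
from fractions import Fraction

def price_multiplier(share_collected):
    """Factor by which a price must rise so the seller's take-home is unchanged."""
    return 1 / (1 - share_collected)

apple_only = price_multiplier(Fraction(30, 100))          # standalone iOS app
roblox_and_apple = price_multiplier(Fraction(755, 1000))  # sold inside Roblox on iOS

print(f"Apple only: {float(apple_only):.2f}x the original price")
print(f"Roblox plus Apple: {float(roblox_and_apple):.2f}x the original price")
```

The first multiplier corresponds to the 43% increase cited above; the second is a little over four times the original price.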

Stopping Disruptive Technologies

The policies of Apple and Google limit the growth potential not only of virtual world platforms, but also the internet at large. For many, the World Wide Web is the best “proto-Metaverse.” Though it lacks several components of my definition, it is a massively scaled and interoperable network of websites, all running on common standards and available on nearly every device, running any operating system, and through any web browser. Many in the Metaverse community thus believe that the web and web browser should be the focal point of all Metaverse development. Several open standards are already being shepherded, including OpenXR and WebXR for rendering, WebAssembly for executable programs, Tivoli Cloud for persistent virtual spaces, WebGPU, which aspires to provide “modern 3D graphics and computation capabilities” inside a browser, and more.

Apple has frequently argued that its platform isn’t closed because it provides access to the “open web”—that is, websites and web apps. As such, developers need not produce apps to reach its iOS users, especially if they disagree with Apple’s fees or policies. Furthermore, the company argues, most developers choose to make apps despite this alternative, which shows that Apple’s bundled services are outcompeting the entirety of the web, rather than being anti-competitive.

Apple’s argument isn’t convincing. Recall the story I highlighted at the beginning of this book, about what Mark Zuckerberg once called Facebook’s “biggest mistake.” For four years, the company’s iOS app was really just a “thin client” that ran HTML. That is, its app had very little code and was, for the most part, just loading various Facebook webpages. Within one month of switching over to an app that was “rebuilt from the ground up” on native code, users were reading double the number of Facebook News Feed stories.

When an app is written natively for a given device, programming is specifically configured for that device’s processors, components, and so forth. As a result, the app has more efficient, optimized, and consistent performance. Web pages and web apps cannot directly access native drivers. Instead, they must speak to a device’s components through a “translator” of sorts and with more generic (and often bulkier) code. This leads to the opposite outcome of native applications: inefficiency, sub-optimization, and less reliable performance (such as crashes).

But as much as consumers prefer native apps for everything from Facebook to the New York Times and Netflix, they’re essential to rich real-time rendered 2D and 3D environments. These experiences are computationally intensive—far more so than rendering a photo, loading a text article, or playing back a video file. Web-based experiences largely preclude rich gameplay such as that of Roblox, Fortnite, and Legend of Zelda. This happens to be one of the reasons Apple was able to place such strict in-app billing rules on the gaming categories.

What’s more, the web must be accessed through a web browser, which is an application. And Apple uses its control over its App Store to prevent competing browsers on its iOS devices. This may be surprising if you regularly use Chrome on your iPhone or iPad. However, these are really just the “iOS system version of [Apple’s Safari] WebKit wrapped around Google’s own browser UI,” according to the Apple expert John Gruber, and the iOS Chrome app [cannot] “use the Chrome rendering or JavaScript engines.” What we think of as Chrome on iOS is simply a variant of Apple’s own Safari browser, but one that logs into Google’s account system.§10

Because Safari underpins all iOS browsers, Apple’s technical decisions for its browser define what the nominally “open web” can and cannot offer developers and users. Critics argue that Apple uses its position to direct both developers and users to native apps, where the company collects a commission.

The best case study here is Safari’s tepid adoption of WebGL, a JavaScript API designed to enable more complex browser-based 2D and 3D rendering using local processors. WebGL doesn’t bring “app like” gaming to the browser, but it does elevate performance while also simplifying the development process.

However, Apple’s mobile browser typically supports only a subset of WebGL’s total feature set, and often adds features years after they’re first released. Mac Safari adopted WebGL 2.0 18 months after its release, but mobile Safari waited more than four years to do the same.¶ In effect, Apple’s iOS policies reduce the headroom afforded by the already low ceilings of web-based gaming, thereby pushing more developers and users to its App Store, and avoiding an interoperable “Metaverse” that, like the World Wide Web, was built on HTML.

Support for this hypothesis can be found in the approach Apple has taken to another method of real-time rendering: cloud. In Chapter 6, I discussed this technology in detail; as you’ll recall, cloud game streaming involves shifting much of the “work” normally managed by a local device (such as a console or tablet) to a remote data center. A user can then access computing resources that far outstrip those that might be affordably (if ever) contained in a small consumer electronics device, which is theoretically good for both user and developers.

It is not good, however, for those whose business models are predicated upon selling said devices and the software that runs on them. Why? These devices end up as little more than a touchscreen with a data connection that is simply playing a video stream. If a 2018 iPhone and a 2022 iPhone both play Call of Duty (the most complex application that’s likely to run on the device) equivalently well, why spend $1,500 on replacing the device? If you no longer need to download multi-gigabyte games, why buy the higher priced (and higher margin) iPhones with more storage?

Cloud gaming is even more threatening to Apple’s relationship with mobile app developers. To release an iPhone game today, a developer must distribute it through Apple’s App Store and use Apple’s proprietary API collection, Metal. But to release a cloud-streaming game, a developer could distribute through nearly any application, from Facebook to Google, the New York Times, or Spotify. Not only that, but the developer could use whichever API collections they wanted, such as WebGL or even those the developer wrote themselves, while also using whichever GPUs and operating systems they liked, and still reach every Apple device that worked.

For years, Apple essentially blocked any form of cloud gaming application. Google’s Stadia and Microsoft’s Xbox were technically allowed to have an application, but only if it did not actually load games. Instead, they were effectively showrooms—showing off what these hypothetical services had—like a version of Netflix that had thumbnail tiles that could not be clicked.

Because cloud game streams are video streams, and the Safari browser supports video streaming, cloud gaming was still technically possible on iOS devices (though Apple prohibited these applications from telling users this fact). But Apple also places numerous experiential limitations on the Safari browser which, to both cloud- and WebGL-based game developers, make browser-based gaming unsatisfying. For example, web apps are not allowed to perform background data synchronization, automatically connect to Bluetooth devices, or send push notifications such as an invitation to play a game. Again, these limitations don’t really affect applications like the New York Times or Spotify, but they severely erode interactive ones.

Apple originally argued that cloud gaming was banned in order to protect users. Apple would not be able to review and approve all titles and their updates, and thus users could be harmed by inappropriate content, privacy violations, or substandard quality. But this argument was inconsistent with other app categories and policies. Netflix and YouTube bundle together thousands and even billions of videos that went unreviewed by Apple. In addition, Apple’s App Store policy did not require developers to have perfect moderation, merely robust efforts and policies.

Given this, critics have countered that Apple’s policies were motivated by the desire to protect its own hardware and game sale businesses. The rise of music streaming might have been a cautionary tale for Apple, in this regard. In 2012, iTunes had a nearly 70% market share in digital music revenues in the US and operated at nearly 30% gross profit margins. Today, Apple Music has less than a third of streaming music share and is believed to operate at negative gross margin. Spotify, the market leader, doesn’t even sell itself through iTunes. Amazon Music Unlimited, which ranks third, is almost exclusively used by Prime customers, and nets Apple no revenue.

In the summer of 2020, Apple finally revised its policies so that services such as Google Stadia and Microsoft’s xCloud could exist on iOS as apps. But the new policies are byzantine and widely described as anti-consumer. To give just one striking example, cloud gaming services would need to first submit every single game (and future update) to the App Store for review, and then maintain a separate listing for the game in the App Store.

This policy requirement has several implications. First, Apple would effectively control the content release schedules for these services. Second, it could unilaterally deny any title (which would happen only after it had been licensed, and the service would have no direct ability to modify the game for it to meet Apple’s requirements). Third, user reviews would be fragmented across the streaming service’s app and the App Store. Fourth, these game-distribution services would need their developers to form a relationship with the App Store, a competing game-distribution service.

Apple’s policies also stated that Stadia subscribers would still not be able to play Stadia games through the Stadia app (which would remain a catalogue). Instead, users would need to download a dedicated Stadia app for every individual game they wanted to play. This would be like downloading a House of Cards Netflix app, and an Orange Is the New Black Netflix app, and a Bridgerton Netflix app, with the Netflix app itself serving only as a catalogue/directory for rights management, rather than a streaming video service. According to leaked emails between Microsoft and Apple, each app would be nearly 150 megabytes, and need to be updated every time the underlying cloud-streaming technology was updated.

Even though Stadia would bill the user for their gaming subscription, curate the content inside that subscription, and power its delivery, Apple would distribute the cloud game (via the App Store) and iOS customers would access the title through the iOS home screen (not the Stadia app). Apple’s policies also create inevitable consumer confusion. If a game was offered by multiple services, for example, the App Store would end up with multiple listings (there would be Cyberpunk 2077—Stadia, Cyberpunk 2077—Xbox, Cyberpunk 2077—PlayStation Now, and so on). And every time a service removed a title from their service (if Stadia removed Cyberpunk 2077), users would be left with an empty app on their device.

Apple also declared that all game streaming services would need to be sold through the App Store as well, treating them differently from other media bundles, such as those of Netflix and Spotify, which have their apps distributed by the App Store but can choose (and have chosen) not to offer iTunes billing. Finally, Apple said that every subscription-based game must also be made available as an à la carte purchase through the App Store. This, again, differs from its policies with music, video, audio, and books. Netflix does not need to (and doesn’t) make Stranger Things available on iTunes for purchase or rental.

Microsoft and Facebook (which was also working on its own cloud game-streaming service) were quick to publicly criticize Apple’s revised policy. “This remains a bad experience for customers,” Microsoft said the day of Apple’s update. “Gamers want to jump directly into a game from their curated catalog within one app just like they do with movies or songs, and not be forced to download over 100 apps to play individual games [that stream] from the cloud.” Facebook’s vice president of gaming told The Verge, “We’ve come to the same conclusion as others: web apps are the only option for streaming cloud games on iOS at the moment. As many have pointed out, Apple’s policy to ‘allow’ cloud games on the App Store doesn’t allow for much at all. Apple’s requirement for each cloud game to have its own page, go through review, and appear in search listings defeats the purpose of cloud gaming. These roadblocks mean players are prevented from discovering new games, playing cross-device, and accessing high-quality games instantly in native iOS apps—even for those who aren’t using the latest and most expensive devices.”
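To put the one-app-per-game requirement in rough numbers, the sketch below multiplies the two figures cited above: the "nearly 150 megabytes" per app from the leaked emails and the "over 100 apps" in Microsoft's statement. The function name is hypothetical, 150 MB is treated as an upper bound, and a gigabyte is assumed to be 1,000 megabytes, so the result is only an order-of-magnitude illustration.

```python
# Rough estimate of the storage consumed by per-game wrapper apps.
# Assumptions (not from Apple or Microsoft): every wrapper is the full
# 150 MB, and 1 GB = 1,000 MB.

APP_SIZE_MB = 150  # "nearly 150 megabytes" per app, used here as an upper bound


def wrapper_footprint_gb(game_count: int, app_size_mb: int = APP_SIZE_MB) -> float:
    """Return the total size, in GB, of one wrapper app per streamed game."""
    return game_count * app_size_mb / 1000


if __name__ == "__main__":
    # A subscriber who installs 100 titles from a streaming catalogue.
    print(wrapper_footprint_gb(100))
```

Under these assumptions, a subscriber who installed 100 titles would devote roughly 15 GB of local storage to apps whose games are, by design, not stored on the device at all.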

Blocking Blockchain

For all of the constraints that Apple places on interactive experiences, its most stringent controls focus on emergent payment rails.

Witness Apple’s control over its NFC chip. NFC refers to near-field communication, a protocol that enables two electronic devices to wirelessly share information over short distances. Apple prohibits all iOS applications and browser-based experiences from using NFC mobile payments, with the sole exception being Apple Pay. Only Apple Pay can offer “tap-and-go” payments, which take a second or less to complete and don’t even require the user to open their phone, let alone navigate to an application or its sub-menu. Visa, meanwhile, must ask a user to do exactly that, then have a retailer scan a virtually reproduced version of a physical card or a bar code.

Apple claims that its policies are intended to protect its customers and their data. But there’s no evidence to suggest that Visa, Square, or Amazon would endanger users, and Apple could easily introduce a policy that provided NFC access only to regulated banking institutions. Alternatively, it could place additional security requirements, such as a $100 or even a $5 limit, on NFC purchases. Apple does enable third-party developers to use the NFC chip for other use cases that are arguably more dangerous than buying a cup of coffee or a pair of jeans. Marriott and Ford, for example, use NFC to unlock hotel rooms and car doors. One might reasonably conclude this is correlated with the fact that Apple doesn’t operate in the hotel or automotive industries. It does, however, take an estimated 0.15% of every Apple Pay transaction, even if Apple Pay processes the actual transaction using the customer’s Visa or MasterCard.

The Apple Pay problem may seem modest today. That said, and as I discussed in Chapter 9, we may be moving to a future in which our smartphone is not just a smartphone, but a supercomputer that will power the many devices around us. It’s also likely to serve as our passport to the virtual and physical worlds. Not only is the Apple iCloud ID used to access most online software today, Apple has received approval from several American states to operate digital versions of state-issued identification, such as a driver’s license, which can then be used to fill out a banking application or board a flight. Exactly how these IDs are used, which developers they’re made available to, and under what conditions, could help determine the nature and timing of the Metaverse.

Another case study is Apple’s approach to blockchains and cryptocurrencies. In the next chapter, I’ll go into more detail on how these technologies work, what they might offer the Metaverse, and why Apple’s policies are so problematic if you’re a blockchain believer. But first, I want to quickly address how they’re already in conflict with App Store policies and platform incentives. For example, neither Apple nor any of the major console platforms allow applications that are used for crypto mining or decentralized data processing. Apple has based this prohibition on the stated belief that such apps “rapidly drain battery, generate excessive heat, or put unnecessary strain on device resources.”11 Users might fairly argue that they—not Apple or Sony—have the right to decide whether their battery is being too quickly drained, to manage the health of their device, and to determine the appropriate use of their device’s resources. Regardless, the net effect is that none of these devices can participate in the blockchain economy, nor make their idle computing power available to those who need it (via decentralized computing).

In addition, these platforms (with the exception of the Epic Games Store) do not allow games that accept cryptocurrencies as a form of payment, or that use cryptocurrency-based virtual goods (that is, non-fungible tokens, or NFTs). Though this is sometimes portrayed as a protest against the energy used to power blockchains, such claims don’t hold up to scrutiny. Sony’s music label has invested in NFT start-ups, and created its own NFTs, while Microsoft’s Azure offers blockchain certifications and its corporate venture arm has made numerous start-up investments. Apple CEO Tim Cook has admitted that he owns cryptocurrencies and considers NFTs “interesting.” It’s more likely that these platforms refuse blockchain games because they simply do not work with their revenue models. Allowing Call of Duty: Mobile to connect to a cryptocurrency wallet would be akin to a user connecting the game directly to their bank account, rather than paying through the App Store. Accepting NFTs, meanwhile, would be like a movie theater permitting customers to bring their grocery bags to a film: some people might still buy a box of M&Ms, but most wouldn’t. What’s more, it’s impossible to imagine how a platform might justify taking a 30% commission from the purchase or sale of a multi-thousand- or million-dollar NFT. And if such commissions did apply, the entirety of the NFT’s value would be devoured if it traded hands enough times.
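The arithmetic behind that last point can be made concrete with a minimal sketch. It assumes, purely for illustration, that the NFT resells at the same price every time and that the platform charges its 30% on the full sale price of each trade. The function names and the $10,000 price are hypothetical.

```python
# Illustrative only: how quickly a per-trade platform commission adds up.
# Assumptions (not platform policy): the NFT resells at a constant price,
# and the commission is charged on the full price of every trade.


def total_commission(price: int, trades: int, rate_percent: int = 30) -> int:
    """Cumulative commission (in whole dollars) collected over `trades` sales."""
    return price * rate_percent * trades // 100


def trades_until_fees_exceed_price(price: int, rate_percent: int = 30) -> int:
    """Smallest number of trades after which cumulative fees reach the price."""
    trades = 0
    while total_commission(price, trades, rate_percent) < price:
        trades += 1
    return trades


if __name__ == "__main__":
    price = 10_000  # hypothetical sale price in dollars
    n = trades_until_fees_exceed_price(price)
    print(n, total_commission(price, n))
```

Under these assumptions, a $10,000 NFT generates $3,000 in fees per sale, so by the fourth trade the platform has collected $12,000, more than the item itself is worth.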