Part II

Chapter 5

Versions of the thought experiment “If a tree falls in a forest and no one is around to hear it, does it make a sound?” can be traced back hundreds of years. The exercise endures in part because it’s fun, and it’s fun because it hinges on important technicalities and philosophical ideas.

The subjective idealist George Berkeley, to whom the above question is often attributed, argued that “to be is to be perceived.” The tree—standing, falling, or fallen—exists if someone or something is perceiving it. Others claim that what we mean by “sound” is just vibrations that propagate through matter, and that sound exists whether or not those vibrations are received by an observer. Or maybe sound is the sensation experienced by a brain when these vibrations interact with nerve endings—and if there are no nerves to interact with vibrating particles, there can be no sound. Then again, for decades humans have been able to produce physical equipment capable of interpreting vibrations as sound, thereby enabling sound to be heard through an artificial observer. But does that count? Meanwhile, the quantum mechanics community today largely agrees that without an observer, existence is at best a conjecture that cannot be proved or disproved—all that can be said is that the tree might exist. (Albert Einstein, who was instrumental in founding the theory of quantum mechanics, took issue with this view.)

In Part II, I explain what it will take to power and build the Metaverse, starting with networking and computing capabilities, and then moving on to the game engines and platforms that operate its many virtual worlds, the standards which are needed to unite them, the devices through which they’re accessed, and the payment rails that underpin their economies. Throughout these many explanations, I want you to keep in mind Berkeley’s tree.

Why? Because even if the Metaverse is “fully realized,” it will not really exist. It, alongside each of its trees, their many leaves, and the forests they’re situated in, will just be data stored in a seemingly infinite network of servers. While it could be argued that as long as that data exists, the Metaverse and its contents do too, there are many different steps and technologies required for it to exist to anyone but a database. Furthermore, each part of the “Metaverse stack” provides a company with leverage and informs what is and isn’t possible for another. For example, you’ll find out that no more than a few dozen people today can even observe the fall of a high-fidelity tree. And to reach more users? Well, the virtual world will need to be duplicated—in other words, for many people to hear a single tree fall, many trees must fall. (Try that one, Berkeley!) Or, perhaps, its observers are put on a time delay, and are thus unable to affect the fall or prove its correlation. Another technique is to simplify the tree’s bark to textureless, uniform brown and the sound of its fall into a generic thud.

To unpack these constraints and their implications, I want to begin with a real example: the virtual world I consider the most technically impressive today. No, it’s not Roblox, nor Fortnite. In fact, this virtual world is likely to reach fewer people in its lifetime than each of those titles does in a single day. It’s not even fair to call it a game, as so many of the virtual worlds we’ve looked at so far can be. Instead, it’s designed to precisely reproduce an experience many deem unpleasant, dull, or terrifying: airplane travel.

Bandwidth

The first Flight Simulator was released in 1979 and quickly established a small following. Three years later (but still two decades before the first Xbox was released), Microsoft acquired a license for the title, releasing another ten entries by 2006. In 2012, Guinness World Records named Flight Simulator the longest-running video game franchise, though it remained unknown to most gamers. It took until the 12th entry, which released in 2020, for Microsoft Flight Simulator (MSFS) to soar into the public consciousness. Time magazine named it one of the best games of the year. The New York Times said MSFS provided “a new way of understanding the digital world,” providing a view “that is more real than the one we can see outside [and] a picture that illuminates our understanding of reality.”1

In theory, MSFS is what many people see it as: a game. Within seconds of opening the application, you’ll be reminded that Microsoft’s Xbox Game Studios developed and published it. However, the goal of MSFS is not to win, kill, shoot, defeat, beat, or score on another player or an AI-based competitor. The goal is to fly a virtual plane—a process that involves much of the same work as flying a real one. Players will communicate with air traffic control and their copilots, wait to be cleared for takeoff, set their altimeter and flaps, check for fuel reserves and mixtures, release their brake, slowly push the throttle, and so on, all before following their selected or designated flight path while managing for conflicting routes and accommodating the flight paths of other virtual planes.

Every entry in the MSFS series offered that sort of functionality, but the 2020 edition is extraordinary—the most realistic and expansive consumer-grade simulation in history. Its map is over 500,000,000 square kilometers—just like the “real” planet earth—and includes two trillion uniquely rendered trees (not two trillion copy-and-pasted trees, or two trillion trees made up of a few dozen varieties), 1.5 billion buildings, and nearly every road, mountain, city, and airport across the world.2 Everything looks like the “real thing” because MSFS’s virtual world is based on high-quality scans and images of the “real thing.”

Microsoft Flight Simulator’s reproductions and renders aren’t perfect, but they are still stunning. “Players” can fly past their own house and spot their mailbox or the tire swing in the front yard. Even when the “game” must reproduce a sunset that’s reflected across a bay and that is refracted yet again by the plane’s wings, it can be difficult to distinguish between a screenshot from MSFS and a real-world photograph.

To pull this off, MSFS’s “virtual world” is nearly 2.5 petabytes large, or 2,500,000 gigabytes—tens of thousands of times larger than Fortnite’s roughly 30 GB of game files. There is no way for a consumer device (or most enterprise devices) to store this amount of data. Most consoles and PCs top out at 1,000 gigabytes, while the largest consumer-grade Network Attached Storage (NAS) drive is 20,000 gigabytes and retails for close to $750 alone. Even the physical space required to store 2.5 petabytes is impractical.
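A quick back-of-the-envelope calculation makes the storage problem concrete. The sketch below uses only the figures quoted above; the constant names are illustrative, and real drive capacities and prices vary.

```python
import math

# Figures quoted in the text (approximate).
WORLD_SIZE_GB = 2_500_000       # "nearly 2.5 petabytes"
NAS_DRIVE_GB = 20_000           # largest consumer-grade NAS drive cited
NAS_DRIVE_PRICE_USD = 750       # approximate retail price cited
TYPICAL_DEVICE_GB = 1_000       # where most consoles and PCs top out

# How many of the largest consumer drives would a full local copy need?
drives_needed = math.ceil(WORLD_SIZE_GB / NAS_DRIVE_GB)

# Cost of those drives alone, ignoring enclosures, power, and redundancy.
total_drive_cost_usd = drives_needed * NAS_DRIVE_PRICE_USD

# The same data expressed in "typical consoles or PCs".
devices_equivalent = WORLD_SIZE_GB // TYPICAL_DEVICE_GB

print(drives_needed, total_drive_cost_usd, devices_equivalent)
```

Under these figures, a full local copy would fill 125 of the largest consumer NAS drives, or the storage of 2,500 typical consoles, at a cost of about $93,750 for the drives alone.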

But even if a consumer could afford such a hard drive and have enough space to house it, MSFS is a live service. It updates to reflect real-world weather (including accurate wind speed and direction, temperature, humidity, rain, and light), air traffic, and other geographic changes. This allows a player to fly into real-world hurricanes, or to trail real-life commercial airliners on their exact flight path while they’re in the air in the real world. This means that users cannot “pre-buy” or “pre-download” all of MSFS—much of it doesn’t yet exist!

Microsoft Flight Simulator works by storing a relatively small portion of the “game” on a consumer’s device—roughly 150 GB. This portion is enough to run the game—it contains all of the game’s code, visual information for numerous planes, and a number of maps. As a result, MSFS can be used offline. However, offline users see mostly procedurally generated environments and objects, with landmarks such as Manhattan broadly familiar but populated with generic, mostly duplicated buildings that bear only an occasional and sometimes accidental resemblance to their real-world counterparts. Some preprogrammed flight paths exist, but they cannot mimic actual live routes, nor can one player see another player’s plane.

It’s when players go online that MSFS becomes such a wonder, with Microsoft’s servers streaming new maps, textures, weather data, flight paths, and whatever other information a user might need. In a sense, players experience the MSFS world exactly as a real-world pilot might. When they fly over or around a mountain, new information streams into their retinas via light particles, revealing and then clarifying what’s there for the first time. Before then, a pilot knows only that, logically, something must be there.

Many gamers assume this is what happens in all online multiplayer video games. But the truth is that most online games try to send as much information as possible to the user in advance, and as little as possible when they’re playing. This explains why playing a game, even a relatively small one such as Super Mario Bros., requires purchasing digital discs that contain multi-gigabyte game files, or spending hours downloading these files—and then spending even more time installing them. And then from time to time, we might be told to download and install a multi-gigabyte update before we can play again. These files are so large because they contain nearly the entire game—namely its code, game logic, and all the assets and textures required for the in-game environment (every type of tree, every avatar, every boss battle, every weapon, and so on).

For the typical online game, what actually comes from online multiplayer servers? Not much. Fortnite’s PC and console game files are roughly 30 GB in size, but online play involves only 20–50 MB (or 0.02–0.05 GB) in downloaded data per hour. This information tells the player’s device what to do with the data they already have. For example, if you’re playing an online game of Mario Kart, Nintendo’s servers will tell your Nintendo Switch which avatars your opponents are using and should therefore be loaded. During the match, your continuous connection to this server enables it to send a constant stream of data on exactly where these opponents are (“positional data”), what they’re doing (sending a red shell at you), communications (e.g., your teammate’s audio), and various other information, such as how many players are still in the match.

That online games remain “mostly offline” is a surprise even to avid gamers. After all, most music and video is now streamed—we don’t predownload songs or TV shows anymore, let alone buy physical CDs to store them—and video gaming is supposedly a more technically sophisticated and forward-looking media category. Yet it is precisely because games are so complicated that those who make them choose to marginalize the internet—because the internet is not reliable. Connections are not reliable, bandwidth is not reliable, latency is not reliable. As I discussed in Chapter 3, most online experiences can survive this unreliability, but games cannot. As a result, developers choose to rely as little on the internet as possible.

This mostly offline approach to online games works well, but it imposes many limitations. For example, the fact that a server can only tell individual users which assets, textures, and models should be rendered means that every asset, texture, and model must be known and stored in advance. By sending rendering data on an as-needed basis, games can have a much greater diversity of virtual objects. Microsoft Flight Simulator aspires for every town to not just differ from one another, but to exist as they do in real life. And it doesn’t want to store 100 types of clouds and then tell a device which cloud to render and with what coloring; rather, it wants to say exactly what that cloud should look like.
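The difference between "tell the device which stored asset to use" and "tell the device exactly what the object looks like" shows up directly in message size. The following is a minimal sketch, assuming a JSON wire format and invented field names; it does not reflect how any particular game encodes its data, and the 16x16 grid is a tiny stand-in for a real volumetric description.

```python
import json

# Approach 1: the asset already lives on the device, so the server only sends
# a short reference. The field names are hypothetical.
preloaded_reference = {"asset": "cloud", "variant": 17, "tint": "#f2f2f2"}

# Approach 2: the server describes the object itself. A small density grid
# stands in for what would really be a far larger description.
streamed_description = {
    "asset": "cloud",
    "density": [
        [round((x * y) % 7 / 7, 2) for x in range(16)]
        for y in range(16)
    ],
}


def payload_bytes(message: dict) -> int:
    """Size of a message once serialized as UTF-8 JSON."""
    return len(json.dumps(message).encode("utf-8"))


reference_size = payload_bytes(preloaded_reference)
description_size = payload_bytes(streamed_description)

print(reference_size, description_size)
```

Even at this toy scale the description is many times larger than the reference, and the reference only works if variant 17 was shipped to the device ahead of time.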

When a player sees their friend in Fortnite today, they can interact using only a limited set of pre-loaded animations (or “emotes”), such as a wave or a moonwalk. Many users, however, imagine a future where their live facial and body movements are re-created in a virtual world. To greet a friend, they won’t pick Wave 17 of the 20 waves pre-loaded onto their device, but will wave uniquely articulated fingers in a unique way. Users also hope to bring their myriad virtual items and avatars across the myriad virtual worlds connected to the Metaverse. As MSFS’s file size suggests, it’s simply not possible to send so much data to the user in advance. Doing so not only requires impractically large hard drives, but a virtual world that knows in advance anything that might be created or performed.

The need to “presend” an otherwise living virtual world has other implications. Every time Epic Games alters Fortnite’s virtual world—say, to add new destinations, vehicles, or non-playable characters—users have to download and install an update. The more Epic adds, the longer this takes and the longer a user must wait. The more frequently a world is updated, the more delays a user will experience.

The batch-based update process also means that virtual worlds cannot be truly “alive.” Instead, a central server is choosing to send a specific version of a virtual world out to all users, a world that will endure until the next update replaces it. Each edition is not necessarily fixed—an update might have programmed changes, such as a New Year’s Eve event or snowfall which increases on a daily basis—but it is pre-scripted.

Finally, there are limitations to where users can go. During Travis Scott’s 10-minute event on Fortnite, some 30 million players were instantly transported from the game’s core map to the depths of a never-before-seen ocean, then to a never-before-seen planet, and then deep into outer space. Many of us may imagine that the Metaverse operates in similar fashion—that users can easily jump from virtual world to virtual world without first enduring long loading times. But to put on the concert, Epic had to send users each of these mini-worlds days to hours before the event via a standard Fortnite patch (users who hadn’t downloaded and installed the update before the event started weren’t able to participate in it). Then, during each set piece, every player’s device was loading the next set piece in the background. It was notable that each of Scott’s concert destinations was smaller and more limited than the one before it, with the last being a largely “on rails” experience in which users simply flew forward in largely nondescript space. Think of this as the difference between freely exploring a mall versus traversing one via a moving walkway.

The concert was nevertheless a significant creative achievement, but as is often the case with online games, it depended on technical choices that cannot support the Metaverse. In fact, the most Metaverse-like virtual worlds today are embracing a hybrid local/cloud streaming data model in which the “core game” is preloaded, but several times as much data are sent on an as-needed basis. This approach is less important for titles such as Mario Kart or Call of Duty, which have relatively small item and environmental diversity, but critical to those like Roblox and MSFS.

Given the popularity of Roblox and the immensity of MSFS, it might seem as though modern internet infrastructure can now handle Metaverse-style live data streaming. However, the model only works today in a highly constrained fashion. Roblox, for example, doesn’t need to cloud stream much data because most of its in-game items are based on “pre-fabs.” The game is mostly just telling a user’s device how to tweak, recolor, or re-arrange previously downloaded items. In addition, Roblox’s graphical fidelity is relatively modest and therefore its texture and environmental file sizes are relatively small, too. Overall, Roblox’s data usage is much greater than that of Fortnite—roughly 100–300 MB per hour, rather than 20–50 MB—but still manageable.

At its target settings, MSFS needs nearly 25 times as much hourly bandwidth as Fortnite and five times as much as Roblox. This is because it’s not sending data on how to reconfigure or recolor a pre-loaded house, but instead sending a user’s device the exact dimensions, density, and coloration of a multi-kilometer cloud or a nearly exact replica of the Gulf of Mexico’s shoreline. Yet even this need is simplified in ways that won’t work for “the Metaverse.”
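To put these hourly figures on a common scale, they can be converted into sustained megabits per second. The sketch below assumes representative values of 40 MB per hour for Fortnite and 200 MB per hour for Roblox, both chosen from within the quoted ranges; the MSFS figure is derived from the multipliers above rather than measured.

```python
def mb_per_hour_to_mbps(mb_per_hour: float) -> float:
    """Convert megabytes per hour into sustained megabits per second."""
    return mb_per_hour * 8 / 3600


# Assumed representative values within the ranges quoted in the text.
FORTNITE_MB_PER_HOUR = 40
ROBLOX_MB_PER_HOUR = 200

# MSFS estimated two ways from the multipliers in the text.
msfs_from_fortnite = 25 * FORTNITE_MB_PER_HOUR
msfs_from_roblox = 5 * ROBLOX_MB_PER_HOUR

for name, rate in [
    ("Fortnite", FORTNITE_MB_PER_HOUR),
    ("Roblox", ROBLOX_MB_PER_HOUR),
    ("MSFS", msfs_from_fortnite),
]:
    print(f"{name}: {rate} MB/hour = {mb_per_hour_to_mbps(rate):.2f} Mbps sustained")
```

With these assumptions both multipliers land on roughly 1,000 MB per hour for MSFS, or a little over 2 Mbps sustained. That average is modest by broadband standards, which is consistent with the point that follows: volume alone is not the hard part.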

While MSFS needs lots of data, it doesn’t need it particularly fast. As with real-world pilots, MSFS pilots cannot suddenly teleport from New York State to New Zealand, nor see downtown Albany from 30,000 feet above Manhattan, nor descend from the firmament to the tarmac in a few minutes. This provides the player’s device with lots of time to download the data it needs—and even the ability to predict (and thus start downloading) what it needs before the player even selects a destination. Even if this data doesn’t arrive on time, the consequences are modest: some of Manhattan’s buildings will temporarily be procedurally generated, rather than resemble the real thing, with the realistic details then added when they arrive.
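The behavior described here, prefetching along a predictable path and falling back to generic scenery when data is late, can be sketched as a small cache. This is an illustrative toy, not Microsoft's implementation; the class, method names, and tile identifiers are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TileStreamer:
    """Toy model of streamed scenery with a procedural fallback."""

    arrived: dict = field(default_factory=dict)   # tile id -> detailed data
    requested: set = field(default_factory=set)   # tiles asked for so far

    def prefetch(self, tile_ids):
        """Request tiles along the predicted flight path before they are needed."""
        self.requested.update(tile_ids)

    def deliver(self, tile_id, data):
        """Called when the server's detailed data for a tile arrives."""
        self.arrived[tile_id] = data

    def render(self, tile_id):
        """Use streamed detail if it is here; otherwise show generic scenery."""
        if tile_id in self.arrived:
            return ("streamed", self.arrived[tile_id])
        self.requested.add(tile_id)  # make sure it is on its way
        return ("procedural", f"generic-{tile_id}")


streamer = TileStreamer()
streamer.prefetch(["manhattan-01", "manhattan-02"])
streamer.deliver("manhattan-01", "photogrammetry-mesh")

first = streamer.render("manhattan-01")    # detail arrived in time
second = streamer.render("manhattan-02")   # detail is late, fall back
streamer.deliver("manhattan-02", "photogrammetry-mesh")
third = streamer.render("manhattan-02")    # detail swapped in once it arrives
```

The design works because a late tile costs only visual fidelity, never correctness. A world in which other people's actions must arrive on time has no equivalent fallback.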

Finally, MSFS’s virtual world has more in common with a diorama than with Neal Stephenson’s bustling and unpredictable Street. Sending users the data for a world like the Street, which cannot easily be predicted and is far more voluminous than the visual detail of an office park or forest, will require significantly more than 1 GB per hour. This brings us to the next, and arguably least understood, element of internet connectivity today: latency.

Latency

Bandwidth and latency are often conflated, and the mistake is understandable: they both impact how much data can be sent or received per unit of time. The classic way to differentiate the two is by likening your internet connection to a highway. You can think of “bandwidth” as the number of lanes on the highway, and “latency” as the speed limit. If a highway has more lanes, it can carry more cars and trucks without congestion. But if the highway’s speed limit is low—perhaps due to too many curves or because it’s laid in gravel not pavement—then the flow of traffic is slow even if there’s spare capacity. Similarly, a high speed limit with only one lane results in constant congestion, too—the speed limit is an aspiration, not a reality.
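The highway analogy corresponds to a simple first-order model: delivery time is roughly the latency plus the payload size divided by the bandwidth. The sketch below is a deliberate simplification that ignores handshakes, congestion, and packet loss; the function name and example values are assumptions for illustration.

```python
def transfer_time_s(payload_bytes: float, bandwidth_mbps: float,
                    one_way_latency_ms: float) -> float:
    """First-order estimate: time in transit plus time to push the bits out."""
    propagation_s = one_way_latency_ms / 1000
    serialization_s = (payload_bytes * 8) / (bandwidth_mbps * 1_000_000)
    return propagation_s + serialization_s


# A 1 KB game-state update on a 100 Mbps link with 50 ms of latency.
small_update_s = transfer_time_s(1_000, 100, 50)

# A 1 GB download on the same link.
large_download_s = transfer_time_s(1_000_000_000, 100, 50)

print(f"{small_update_s:.5f} s, {large_download_s:.2f} s")
```

For the small update nearly all of the time is latency, so adding lanes would not help. For the large download nearly all of the time is bandwidth, so a higher speed limit would barely matter.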

The challenge with real-time rendered virtual worlds is that users aren’t sending a single car from one destination to another. Instead, they’re sending a never-ending fleet of cars tethered together (remember, we need a “continuous connection”) both to and from that destination. It’s not possible to send these cars in advance because their contents are decided only milliseconds before they hit the road. What’s more, we need these cars to move at their fastest possible speed and without ever being diverted to another route (which would sever the continuous connection and lengthen the transit time even if the top speed was maintained).

A global road system that meets and sustains these specifications is a substantial challenge. In Part I, I explained that few online services today need ultra-low latency. It doesn’t matter if there is a delay of 100 milliseconds, 200 milliseconds, or even two seconds between sending a WhatsApp message and receiving a read receipt. It also doesn’t matter if it takes 20 ms or 150 ms or 300 ms after a user clicks YouTube’s pause button until the video stops—and most users probably don’t register the difference between 20 ms and 50 ms. When you’re watching Netflix, it’s more important that the video plays reliably rather than immediately. And while latency in a Zoom video call is annoying, it’s easy for participants to manage; they just learn to wait a bit after the speaker stops speaking. Even a second (1,000 ms) is workable.

The human threshold for latency is incredibly low in interactive experiences. A user must instinctively feel as though their inputs actually have an effect—and delayed responses mean that the “game” is responding to old decisions after new decisions have been made. For this same reason, playing against a user with lower latency can often feel like you’re competing against someone in the future—someone with superspeed—who is able to parry a blow you’ve not even made.

Think back to the last time you watched a movie or a TV show on a plane, on an iPad, or in a theater, and the audio and video were slightly out of sync. The average person doesn’t even notice a synchronization issue unless the audio is more than 45 ms early, or over 125 ms late (170 ms total variance). Acceptability thresholds, as they’re generally called, are even wider, at 90 ms early and 185 ms late (275 ms). With digital buttons, such as the YouTube pause button, the average person only thinks that their click failed if a response takes 200 to 250 ms. In games such as Fortnite, Roblox, or Grand Theft Auto, avid gamers become frustrated after 50 ms of latency (most game publishers hope for 20 ms). Even casual gamers feel input delay, rather than their inexperience, is to blame at 110 ms.3 At 150 ms, games that require a quick response are simply unplayable.
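These thresholds can be collected into a small lookup, which makes it easier to see how much tighter the gaming numbers are than the audio-video ones. The sketch below encodes only the figures quoted above; the function names, the labels, and the handling of values that fall exactly on a boundary are assumptions.

```python
def av_sync_noticeable(audio_offset_ms: float) -> bool:
    """Negative offset means the audio is early; positive means it is late."""
    return audio_offset_ms < -45 or audio_offset_ms > 125


def av_sync_unacceptable(audio_offset_ms: float) -> bool:
    """Wider acceptability window: 90 ms early to 185 ms late."""
    return audio_offset_ms < -90 or audio_offset_ms > 185


def gaming_latency_label(latency_ms: float) -> str:
    """Map a latency onto the gaming thresholds quoted in the text."""
    if latency_ms >= 150:
        return "unplayable for quick-response games"
    if latency_ms >= 110:
        return "casual players blame input delay"
    if latency_ms > 50:
        return "avid players frustrated"
    if latency_ms <= 20:
        return "publisher target"
    return "generally tolerated"


for ms in (15, 35, 80, 120, 160):
    print(ms, "ms:", gaming_latency_label(ms))
```

A 100 ms delay illustrates the gap: as an audio lag it goes unnoticed by the average viewer, while as game latency it is already twice the point at which avid players become frustrated.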

So what does latency look like in practice? In the United States, the median time for data to be sent from one city to another and back again is 35 ms. Many pairings exceed this, especially between cities with high density and intense demand peaks (for example, San Francisco to New York during the evening). Crucially, this is just city-to-city or data center–to–data center transit time. There is still city center–to-the-user transit time, which is particularly prone to slowdowns. Dense cities, local networks, and individual condominiums can easily congest and are often laid with copper cabling with limited bandwidth, rather than high-capacity fiber. Those who live outside a major city might sit at the end of dozens or even hundreds of miles of copper-based transmission. For those whose last mile is on wireless, 4G adds up to 40 additional milliseconds.

Despite these challenges, round-trip delivery times in the United States are typically within the acceptability threshold. However, all connections suffer from “jitter,” the packet-to-packet variance in delivery time relative to the median. While most jitter is tightly distributed around the connection’s median latency, it can frequently spike severalfold due to unforeseen congestion somewhere along a network path—including the end-users’ network as a result of interference from other electronic devices, or perhaps a family member or neighbor initiating a video stream or download. Though temporary, this can easily ruin a fast-paced game or result in a severed network connection. Again, networks aren’t reliable.
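Jitter, in the sense used here, can be estimated from a series of round-trip measurements by comparing each sample with the median. The sample values below are invented for illustration, with one deliberate spike; production tools often use other definitions of jitter, such as the average difference between consecutive packets.

```python
from statistics import median

# Hypothetical round-trip times in milliseconds, with one congestion spike.
samples_ms = [34, 35, 36, 35, 33, 37, 35, 140, 36, 34]

median_ms = median(samples_ms)

# Deviation of each packet from the connection's median latency.
deviations_ms = [abs(s - median_ms) for s in samples_ms]

typical_jitter_ms = median(deviations_ms)   # how tight the usual spread is
worst_jitter_ms = max(deviations_ms)        # the single worst excursion
spike_ratio = max(samples_ms) / median_ms   # worst sample relative to the median

print(median_ms, typical_jitter_ms, worst_jitter_ms, spike_ratio)
```

In this made-up trace the connection looks excellent by its median (35 ms with about 1 ms of typical spread), yet a single packet arrives at four times the median, well past the point at which a fast-paced game becomes unplayable.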

To manage latency, the online gaming industry has developed a number of partial solutions and workarounds. For example, most high-fidelity multiplayer gaming is “match made” around server regions. By limiting the player roster to those who live in the northeastern United States, or Western Europe, or Southeast Asia, game publishers can minimize latency within each region. Because gaming is a leisure activity and typically played with one to three friends, this clustering works well enough. You’re unlikely to want to play with a specific person several time zones away, and you don’t really care where your unknown opponents live anyway (in most cases, you can’t even talk with them).

Multiplayer online games also use “netcode” solutions to ensure synchronization and consistency and to keep players playing. Delay-based netcode will tell a player’s device (say, a PlayStation 5) to artificially delay its rendering of its owner’s inputs until the more latent player’s (their opponent’s) inputs arrive. This will annoy players with muscle memory attuned to low latency, but it works. Rollback netcode is more sophisticated. If an opponent’s inputs are delayed, a player’s device will proceed based on what it expects to happen. If it turns out the opponent did something different, the device will try to unwind in-process animations and then replay them “correctly.”
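The rollback idea can be sketched with a toy two-player game in which each input simply moves a player along a line. This is a minimal illustration, assuming the simplest possible prediction rule (the opponent repeats their last known input); it is not any engine's actual netcode, it omits delay-based netcode entirely, and all names are hypothetical.

```python
def step(state, local_input, remote_input):
    """Toy game rule: state is (local_x, remote_x); inputs are movements."""
    return (state[0] + local_input, state[1] + remote_input)


class RollbackSession:
    def __init__(self):
        self.states = [(0, 0)]     # states[f] is the state before frame f
        self.local_inputs = []
        self.remote_inputs = []    # predicted until confirmed
        self.confirmed = []

    def advance(self, local_input):
        """Simulate a frame now, guessing that the opponent repeats themselves."""
        predicted = self.remote_inputs[-1] if self.remote_inputs else 0
        self.local_inputs.append(local_input)
        self.remote_inputs.append(predicted)
        self.confirmed.append(False)
        self.states.append(step(self.states[-1], local_input, predicted))

    def confirm_remote(self, frame, actual):
        """The opponent's real input for `frame` has arrived over the network."""
        mispredicted = self.remote_inputs[frame] != actual
        self.remote_inputs[frame] = actual
        self.confirmed[frame] = True
        if not mispredicted:
            return False
        # Re-predict later unconfirmed frames from the newly confirmed input.
        for f in range(frame + 1, len(self.remote_inputs)):
            if not self.confirmed[f]:
                self.remote_inputs[f] = self.remote_inputs[f - 1]
        # Roll back to the state before `frame`, then replay every frame since.
        del self.states[frame + 1:]
        for f in range(frame, len(self.local_inputs)):
            self.states.append(
                step(self.states[-1], self.local_inputs[f], self.remote_inputs[f])
            )
        return True


session = RollbackSession()
for _ in range(3):
    session.advance(1)                      # three frames played on predictions
state_before = session.states[-1]
rolled_back = session.confirm_remote(0, 2)  # the opponent actually moved by 2
state_after = session.states[-1]
```

Each confirmation can force the device to re-simulate every frame since the mispredicted one. That replay work grows with both the delay and the number of players whose inputs must be guessed.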

Although these workarounds are effective, they scale poorly. Netcode works well for titles in which player inputs are fairly predictable, such as driving simulations, or those with relatively few players to synchronize, as is the case in most fighting games. However, it’s exponentially harder to correctly predict and coherently synchronize the behaviors of dozens of players, especially when they’re participating in a sandbox-style virtual world with cloud-streamed environmental and asset data. That is why Subspace, a real-time bandwidth technology company, estimates that only three-quarters of American broadband homes can consistently (but far from flawlessly) participate in today’s high-fidelity real-time virtual worlds, such as Fortnite and Call of Duty, while in the Middle East less than one-quarter can. And meeting the latency threshold is not sufficient. Subspace has found that an average 10 ms increase or decrease in latency reduces or increases weekly play time by 6%. What’s more, this correlation holds beyond the point at which even avid gamers can recognize network latency—if their connection is at 15 ms, rather than 25 ms, they will likely play 6% longer. Almost no other type of business faces such sensitivity, and in that gaming is an engagement-based business, the revenue implications are considerable.

This might seem like a game-specific problem, rather than a Metaverse problem. It’s also notable that these issues affect only a portion of game revenues at that. Many hit titles, such as Hearthstone and Words with Friends, are either turn-based or asynchronous, while other synchronous titles, such as Honour of Kings and Candy Crush, need neither pixel-perfect nor millisecond-precise inputs. Yet the Metaverse will require low latency. Slight facial movements are incredibly important to human conversation. We’re also highly sensitive to slight mistakes and synchronization issues—which is why we don’t mind how the mouth of a cartoonish Pixar character moves, but are quickly creeped out by a photo-real CGI human whose lips don’t move exactly right (animators call this the “uncanny valley”). Talking to your mother as though she’s on a 100-ms delay can quickly become eerie. While interactions in the Metaverse don’t have the time sensitivities of a pixel-specific bullet, the volume of data required is much greater. Recall that latency and bandwidth collectively affect how much information can be sent per unit of time.

Social products, too, depend on how many users can and do use them. Although most multiplayer games are played with other people in the same time zone, or perhaps one removed, internet communication often spans the entire globe. Earlier, I mentioned that it can take 35 ms to send data from the US Northeast to the US Southeast. It takes even longer to travel between continents. Median delivery times from the US Northeast to Northeast Asia are as much as 350 or 400 ms—and even longer from user to user (as much as 700 ms to 1 full second). Just imagine if FaceTime or Facebook didn’t work unless your friends or family were within 500 miles. Or they only worked when you were at home. If a company wants to tap into foreign or at-distance labor in the virtual world, it will need better than half-second delays. Every single additional user to a virtual world only compounds synchronization challenges.

Augmented reality–based experiences have particularly stringent requirements for latency because they’re based on both head and eye movements. If you wear eyeglasses, you may take it for granted that your eyes immediately adjust to your surroundings when you turn around and receive light particles at 0.00001 ms. But imagine how you’d feel if there were 10–100-ms delays on receiving that new information.

Latency is the greatest networking obstacle on the way to the Metaverse. Part of the issue is that few services and applications need ultra-low-latency delivery today, which in turn makes it harder for any network operator or technology company focused on real-time delivery. The good news here is that as the Metaverse grows, investment in lower latency internet infrastructure will increase. However, the fight to conquer latency doesn’t just stretch our pocketbooks; it comes up against the laws of physics. To quote the CEO of one leading video game publisher with experience building games for cloud delivery: “We are in a constant battle with the speed of light. But the speed of light is and will remain undefeated.” Consider how difficult it is to send even a single byte from NYC to Tokyo or Mumbai at ultra low-latency levels. At a distance of 11,000–12,500 km, this commute takes light 40–45 ms. The physics of the universe only beat the target minimum for competitive video games by 10%–20%. This doesn’t sound like we’re losing to the laws of physics. But in practice, we fall far short of this 40–45-ms benchmark. The average latency of a packet sent from Amazon’s northeastern US data center (which serves NYC) to its Southeast Asia Pacific (Mumbai and Tokyo) data center is 230 ms.
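The arithmetic behind this comparison is short enough to check directly. The sketch below takes the figures quoted above as given (40–45 ms for light, a 50 ms threshold for avid gamers, and a 230 ms observed average) and treats the single 230 ms figure as applying to both cities, which is a simplification.

```python
# Figures quoted in the text.
LIGHT_ONE_WAY_MS = (40, 45)    # vacuum travel time for 11,000-12,500 km
COMPETITIVE_TARGET_MS = 50     # point at which avid gamers become frustrated
OBSERVED_MS = 230              # average latency between the two data centers

# How much headroom physics leaves below the competitive threshold.
margins = [
    (COMPETITIVE_TARGET_MS - t) / COMPETITIVE_TARGET_MS
    for t in LIGHT_ONE_WAY_MS
]

# How many times slower real packets are than light over the same distance.
slowdowns = [OBSERVED_MS / t for t in LIGHT_ONE_WAY_MS]

for t, margin, slowdown in zip(LIGHT_ONE_WAY_MS, margins, slowdowns):
    print(f"{t} ms: {margin:.0%} under target, observed is {slowdown:.1f}x slower")
```

The margins come out to 20% and 10%, matching the range above, while the observed latency is roughly five to six times what light alone would need.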

There are many causes for this delay. One is silica glass. While many assume that data sent via fiber optic cables travel at the speed of light, they’re both right and wrong. The light beams themselves do travel at the speed of light—which, as any student knows, is a constant—but they don’t travel in a straight line even when the cable itself is laid in a straight line. This is because all glass fibers, unlike the vacuum of space, refract light. As such, the path of a given beam is closer to a tight zigzag bouncing between the edges of a given fiber. The result is a nearly 45% elongation of a route. This brings us to 58 or 65 ms.

In addition, most internet cables are not laid as the crow flies—they must navigate international rights, geographic impediments, and cost/benefit analyses. As a result, many countries and major cities lack a direct connection. NYC has a direct undersea cable to France, but not to Portugal. Traffic from the United States can go directly to Tokyo, but reaching India requires jumping from one undersea cable to another on the Asian or Oceanian continent. A single cable could be laid from the United States to India, but it would need to navigate through or around Thailand—adding hundreds or even thousands of miles—and that only solves shore-to-shore transmission.

Perhaps surprisingly, it’s harder to improve domestic internet infrastructure than international internet infrastructure. Laying (or replacing) cables means working around extensive transportation infrastructure (freeways and railways), various population centers (each with their own political processes, constituencies, and incentives), and protected parks and lands. Laying a cable over a seamount in international waters is simple compared to laying a cable over a private-public mountain range.

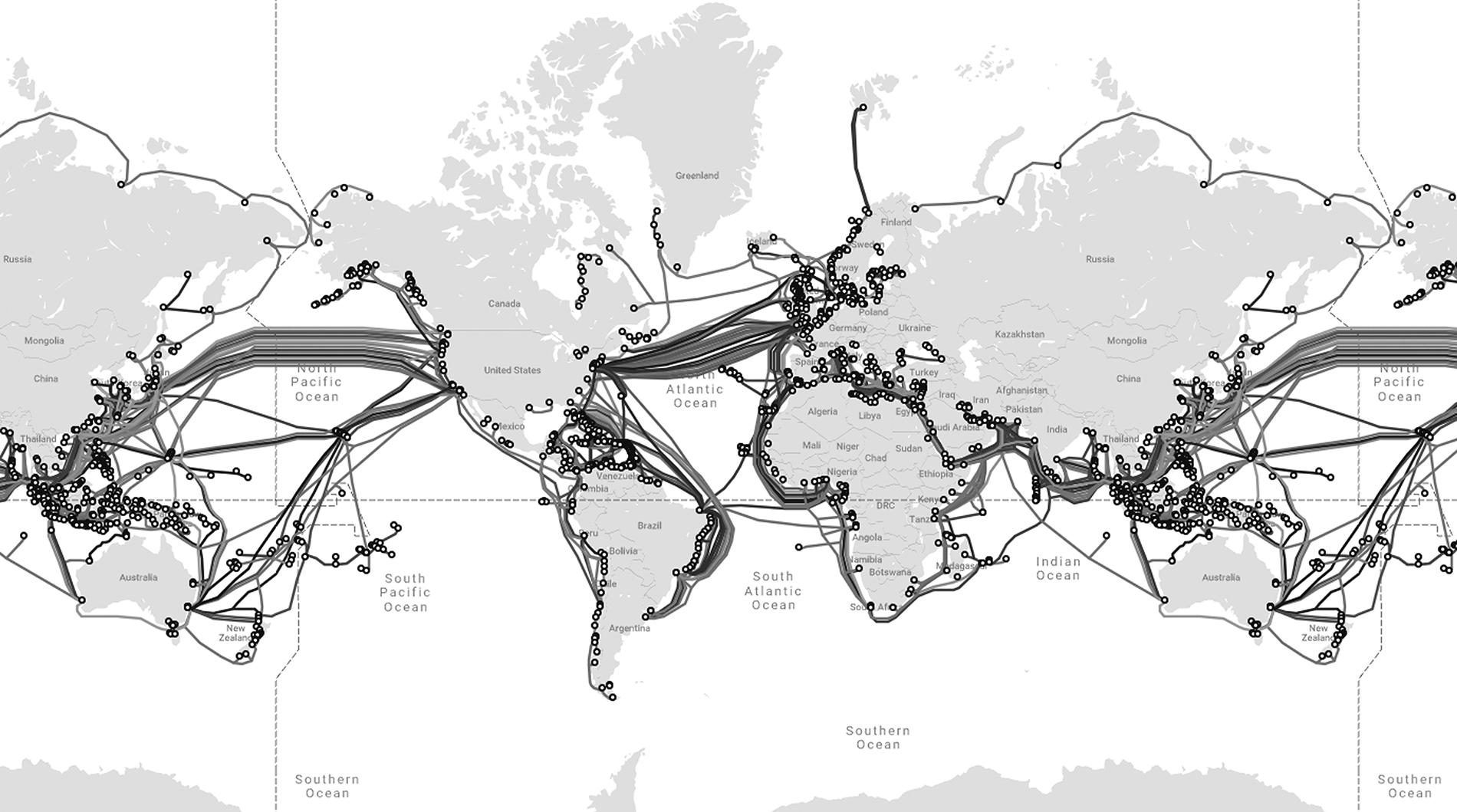

Image 1. Undersea Cables. A map of the nearly 500 submarine cables and 1,250 landing stations that enable the global internet. Source: TeleGeography

The phrase “internet backbone” might bring to mind a largely planned out, and partly federated, network of cables. In truth, the internet backbone is really a loose federation of private networks. These networks were never laid to be nationally efficient. Rather, they serve local purposes. For example, a private network operator company might have installed a fiber line between two suburbs or even two office parks. Given the expense of permits and the efficiencies of piggybacking onto existing efforts, rather than connect a pair of cities as the crow flies, cable has often been laid when and where other infrastructure was being built.

When data is sent between two cities, such as New York and San Francisco, or Los Angeles and San Francisco for that matter, it may be carried by several different networks strung together (each segment is called a hop). None of these networks were designed to minimize the distance or transit time between these two locations. Accordingly, a given packet might travel substantially farther than the literal geographic distance between a user and server.

This challenge is exacerbated by the Border Gateway Protocol (BGP), one of the core application layer protocols of TCP/IP. As you read in Chapter 3, BGP serves as a sort of air traffic controller for data transmitted “on the internet” by helping each network determine which other network to route data through. However, it does so without knowing what is being sent, in which direction, or with what significance. As such, it “helps” by applying a fairly standardized methodology which mostly prioritizes cost.

BGP’s ruleset reflects the internet’s original asynchronous network design. Its goal is to ensure all data is transmitted successfully and inexpensively. But as a result many routes are far longer than necessary—and inconsistently so. Two players located in the same building in Manhattan could be in the same Fortnite match, managed by a Virginia-based Fortnite server, with packets that might be routed through Ohio first and thus take 50% longer to reach the destination. Data might be sent back to one of the players through an even longer network path which runs through Chicago. And any one of these connections might end up severed, or suffer from recurring bouts of 150-ms latency, all to prioritize traffic that didn’t need to be delivered in real time, such as an email.
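The trade-off in the Fortnite example can be sketched with a toy route chooser. This is not BGP's actual decision process, which weighs attributes such as local preference and path length. It only illustrates what happens when a selector ranks routes by cost rather than by latency. The route names, costs, and latencies below are hypothetical, chosen so that the cheap route is 50% slower than the direct one, as in the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    cost: float        # hypothetical relative cost to the network operator
    latency_ms: float  # hypothetical one-way latency


# Hypothetical candidate paths from Manhattan to a Virginia-based server.
ROUTES = [
    Route("direct to Virginia", cost=3.0, latency_ms=10.0),
    Route("via Ohio", cost=1.0, latency_ms=15.0),
    Route("via Chicago", cost=2.0, latency_ms=22.0),
]


def cheapest(routes):
    """Pick the route a cost-first selector would choose."""
    return min(routes, key=lambda r: r.cost)


def fastest(routes):
    """Pick the route a latency-first selector would choose."""
    return min(routes, key=lambda r: r.latency_ms)


if __name__ == "__main__":
    chosen, ideal = cheapest(ROUTES), fastest(ROUTES)
    penalty = chosen.latency_ms / ideal.latency_ms - 1
    print(f"cost-first picks {chosen.name}: {chosen.latency_ms} ms")
    print(f"latency-first picks {ideal.name}: {ideal.latency_ms} ms")
    print(f"latency penalty: {penalty:.0%}")
```

A real-time game would prefer the second selector, while an email would be indifferent between the two. A cost-first rule treats both kinds of traffic the same way.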

All of these factors together explain why it takes more than four times longer for the average data packet to travel from NYC to Tokyo than a particle of light, five times longer from NYC to Mumbai, and two to four times longer to reach San Francisco, depending on the moment.
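To put rough numbers on these ratios, the sketch below estimates how long light would take to cover the straight-line (great-circle) distance between each pair of cities, then scales that floor by the multiples quoted above. The city coordinates are approximations, the light is assumed to travel in a vacuum, and the function names are my own. Treat the results as orders of magnitude rather than measurements.

```python
import math

C_KM_PER_S = 299_792.458  # speed of light in a vacuum

# Approximate (latitude, longitude) in degrees; assumed values.
CITIES = {
    "New York": (40.7128, -74.0060),
    "Tokyo": (35.6762, 139.6503),
    "Mumbai": (19.0760, 72.8777),
    "San Francisco": (37.7749, -122.4194),
}

# Multiples of light's travel time, as quoted in the text (low, high).
MULTIPLES = {
    "Tokyo": (4.0, 4.0),
    "Mumbai": (5.0, 5.0),
    "San Francisco": (2.0, 4.0),
}


def great_circle_km(a, b, radius_km=6371.0):
    """Haversine distance between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = (math.sin(dlat / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2)
    return 2 * radius_km * math.asin(math.sqrt(h))


def light_time_ms(distance_km):
    """One-way travel time for light in a vacuum, in milliseconds."""
    return distance_km / C_KM_PER_S * 1000.0


if __name__ == "__main__":
    origin = CITIES["New York"]
    for city, (low, high) in MULTIPLES.items():
        floor_ms = light_time_ms(great_circle_km(origin, CITIES[city]))
        print(f"New York -> {city}: light {floor_ms:.1f} ms, "
              f"packet roughly {low * floor_ms:.0f}-{high * floor_ms:.0f} ms")
```

The output is a one-way figure. A round trip, which is what a player actually experiences, doubles it.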

Improving delivery time will be incredibly expensive, difficult, and slow. Replacing or upgrading cable-based infrastructure is not only costly—it also requires government approvals, typically at multiple levels. The more direct the intended path of these cables, the more difficult these approvals tend to be because the more direct path is more likely to run into prior residential, commercial, government, or environmentally protected property.

It’s much easier to upgrade wireless infrastructure. 5G networks are primarily billed as offering wireless users “ultra-low latency,” with the potential of 1 ms and a more realistic 20 ms expected. This represents 20–40 ms in savings versus today’s 4G networks. However, this only helps the last few hundred meters of data transmission. Once a wireless user’s data hits the tower, it moves to fixed-line backbones.

Starlink, SpaceX’s satellite internet company, promises to provide high-bandwidth, low-latency internet service across the United States, and eventually the rest of the world. However, satellite internet doesn’t achieve ultra-low latency, especially at great distances. As of 2021, Starlink averages 18–55-ms travel time from your house to the satellite and back, but this time frame extends when the data has to go from New York to Los Angeles and back, as this involves traveling across multiple satellites or traditional terrestrial networks.

In some cases, Starlink even exacerbates the problem of travel distances. New York to Philadelphia is around 100 miles in a straight line and potentially 125 miles by cable, but over 700 miles when traveling to a low-orbit satellite and back down. Not only that, fiber optic cable is much less “lossy” than light transmitted through the atmosphere, especially on cloudy days. Dense urban areas are also noisy, which makes them subject to interference as well. In 2020, Elon Musk emphasized that Starlink is focused “on the hardest-to-serve customers that [telecommunications companies] otherwise have trouble reaching.”4 In this sense, satellite delivery enables more people to meet the minimum latency specifications for the Metaverse, rather than offering improvements for those who already meet them.
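The New York to Philadelphia comparison can be turned into a rough propagation-time estimate. The sketch below uses the distances quoted above and two assumptions of mine: that a signal in optical fiber travels at about two-thirds the speed of light in a vacuum, and that the satellite link travels at close to the full speed of light. It ignores switching, queuing, and processing delays entirely.

```python
C_KM_PER_S = 299_792.458      # speed of light in a vacuum
FIBER_FRACTION = 2.0 / 3.0    # assumed: typical signal speed in fiber relative to c
KM_PER_MILE = 1.609344

# Distances quoted in the text, in miles.
CABLE_MILES = 125.0
SATELLITE_MILES = 700.0


def propagation_ms(miles, fraction_of_c=1.0):
    """One-way propagation delay in milliseconds for a path of given length."""
    km = miles * KM_PER_MILE
    return km / (C_KM_PER_S * fraction_of_c) * 1000.0


if __name__ == "__main__":
    fiber_ms = propagation_ms(CABLE_MILES, FIBER_FRACTION)
    satellite_ms = propagation_ms(SATELLITE_MILES)
    print(f"fiber, 125 miles:     {fiber_ms:.2f} ms")
    print(f"satellite, 700 miles: {satellite_ms:.2f} ms")
    print(f"ratio: {satellite_ms / fiber_ms:.1f}x")
```

Even though the signal moves faster through open space than through glass, the extra distance more than cancels that advantage on a route this short.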

The Border Gateway Protocol may be updated or supplemented with other protocols, or new proprietary standards could be introduced and adopted. In any event, we like to imagine that what’s possible is only limited by the minds and innovations of Roblox Corporation, or Epic Games, or the individual creator, and it is true that these groups have proven adept at designing around network-based limitations. They will continue to do so as we navigate all of the bandwidth and latency challenges ahead. At least in the near future, however, these all-too-real limitations will continue to constrain the Metaverse and everything in it.