Part three

6

“If we wish to base psychiatry on evidence-based medicine, we run a genuine risk in taking a closer look at what has long been considered fact.”

—EMMANUEL STIP, EUROPEAN PSYCHIATRY (2002)1

The basement in Harvard Medical School’s Countway Library is one of my favorite places in Boston. After stepping off the elevator, you enter a huge, somewhat dingy room, filled with the musty smell of old books. I often stop a few feet inside the doorway and take in the grand sight: row after row of bound copies of medical journals from the early 1800s to 1986. The place is almost always empty, and yet there are rich histories to be discovered here, and soon, as you begin to piece together a particular narrative of medicine, you are hopping from one journal to the next, the pile of books on your desk growing ever higher. There is the thrill of the chase, and it seems too that this part of the library never disappoints. All of the journals are organized in alphabetical order, and whenever in one article you find a citation that interests you, all you have to do is walk a few feet and inevitably you find the journal you need. At least up until recently, the Countway Library seems to have purchased nearly every medical journal that was published.

This is where we can begin our quest to find out how psychiatric drugs affect long-term outcomes. The research method we’ll need to follow is straightforward. First, as best we can, we’ll have to flesh out the natural spectrum of outcomes for each particular disorder. In the absence of antipsychotic medications, how would people diagnosed with schizophrenia likely fare over time? What chance, if any, would they have of recovering? How well might they fare in society? The same questions can be asked in regard to anxiety, depression, and bipolar illness. What would outcomes look like in the absence of anti-anxiety drugs, antidepressants, and mood stabilizers? Once we have a sense of a baseline for a disorder, we can trace the outcomes literature for that illness, and we can hope that it will tell a consistent, coherent story. Do the drug treatments alter the long-term course of a mental disorder, in the patient population as a whole, for the better? Or for the worse?

Since chlorpromazine (Thorazine) was the drug that launched the psychopharmacology revolution, it seems appropriate to investigate schizophrenia outcomes first.

The Natural History of Schizophrenia

Schizophrenia today is regularly thought of as a lifelong, chronic illness, and that is an understanding that originated with the work of German psychiatrist Emil Kraepelin. In the late 1800s, he systematically tracked the outcomes of patients at an asylum in Estonia, and he observed that there was an identifiable group that reliably deteriorated into dementia. These were patients who, upon entry to the asylum, showed a lack of emotion. Many were catatonic, or lost hopelessly in their own worlds, and they often had gross physical problems. They walked oddly, suffered from facial tics and muscle spasms, and were unable to complete willed physical acts. In his 1899 textbook Lehrbuch der Psychiatrie, Kraepelin wrote that these patients suffered from dementia praecox, and in 1908, Swiss psychiatrist Eugen Bleuler coined the term “schizophrenia” as a substitute diagnostic term for patients in this dilapidated condition. However, as British historian Mary Boyle convincingly argued in a 1990 article, “Is Schizophrenia What It Was? A Re-analysis of Kraepelin’s and Bleuler’s Population,” many of Kraepelin’s dementia praecox patients were undoubtedly suffering from a viral disease, encephalitis lethargica, which in the late 1800s had yet to be identified. This disease caused people to turn delirious, or to drop into a stupor, or to start walking in a jerky manner. Once Austrian neurologist Constantin von Economo described the illness in 1917, the encephalitis lethargica patients were no longer part of the “schizophrenia” pool, and the patient group that remained was quite different from Kraepelin’s dementia praecox group. The types of schizophrenia patients that Boyle described as “the inaccessible, the stuporous catatonic, the intellectually deteriorated” largely disappeared. As a result, the descriptions of schizophrenia in psychiatric textbooks during the 1920s and 1930s changed. All of the old physical symptoms (the greasy skin, the odd gait, the muscle spasms, the facial tics) disappeared from the diagnostic manuals. What remained were the mental symptoms: the hallucinations, the delusions, and the bizarre thoughts. “The referents of schizophrenia,” Boyle wrote, “gradually changed until the diagnosis came to be applied to a population who bore only a slight, and possibly superficial, resemblance to Kraepelin’s.”2

So now we have to ask: What is the natural spectrum of outcomes for that group of psychotic patients? Here, unfortunately, we run into a second problem. From 1900 until the end of World War II, eugenic attitudes toward the mentally ill were quite popular in the United States, and that social philosophy dramatically affected their outcomes. Eugenicists argued that the mentally ill needed to be sequestered in hospitals to keep them from having children and spreading their “bad genes.” The goal was to keep them confined in asylums, and in 1923, an editorial in the Journal of Heredity concluded, with an air of satisfaction, that “segregation of the insane is fairly complete.”3 As a result, many people diagnosed with schizophrenia in the first half of the century were hospitalized and never discharged, but that social policy was then misperceived as outcomes data. The fact that schizophrenics never left the hospital was seen as proof that the disease was a chronic, hopeless illness.

However, after World War II, eugenics fell into disrepute. This was the very “science” that Hitler and Nazi Germany had embraced, and after Albert Deutsch’s exposé of the abysmal conditions in U.S. mental hospitals, in which he likened them to concentration camps, many states began talking about treating the mentally ill in the community. Social policy changed and discharge rates soared. As a result, there is a brief window of time, from 1946 to 1954, when we can look at how newly diagnosed schizophrenia patients fared and thereby get a sense of the “natural outcomes” of schizophrenia prior to the arrival of Thorazine.*

Here’s the data. In a study conducted by the NIMH, 62 percent of first-episode psychotic patients admitted to Warren State Hospital in Pennsylvania from 1946 to 1950 were discharged within twelve months. At the end of three years, 73 percent were out of the hospital.4 A study of 216 schizophrenia patients admitted to Delaware State Hospital from 1948 to 1950 produced similar results. Eighty-five percent were discharged within five years, and on January 1, 1956 (six years or more after initial hospitalization), 70 percent were successfully living in the community.5 Meanwhile, Hillside Hospital in Queens, New York, tracked 87 schizophrenia patients discharged in 1950 and determined that slightly more than half never relapsed in the next four years.6 During this period, outcomes studies in England, where schizophrenia was more narrowly defined, painted a similarly encouraging picture: Thirty-three percent of the patients enjoyed a “complete recovery,” and another 20 percent a “social recovery,” which meant they could support themselves and live independently.7

These studies provide a rather startling view of schizophrenia outcomes during this time. According to the conventional wisdom, it was Thorazine that made it possible for people with schizophrenia to live in the community. But what we find is that the majority of people admitted for a first episode of schizophrenia during the late 1940s and early 1950s recovered to the point that within the first twelve months, they could return to the community. By the end of three years, that was true for 75 percent of the patients. Only a small percentage—20 percent or so—needed to be continuously hospitalized. Moreover, those returning to the community weren’t living in shelters and group homes, as facilities of that sort didn’t yet exist. They were not receiving federal disability payments, as the SSI and SSDI programs had yet to be established. Those discharged from hospitals were mostly returning to their families, and judging by the social recovery data, many were working. All in all, there was reason for people diagnosed with schizophrenia during that postwar period to be optimistic that they could get better and function fairly well in the community.

It is also important to note that the arrival of Thorazine did not improve discharge rates in the 1950s for people newly diagnosed with schizophrenia, nor did its arrival trigger the release of chronic patients. In 1961, the California Department of Mental Hygiene reported on discharge rates for all 1,413 first-episode schizophrenia patients hospitalized in 1956, and it found that 88 percent of those who weren’t prescribed a neuroleptic were discharged within eighteen months. Those treated with a neuroleptic—about half of the 1,413 patients—had a lower discharge rate; only 74 percent were discharged within eighteen months. This is the only large-scale study from the 1950s that compared discharge rates for first-episode patients treated with and without drugs, and the investigators concluded that “drug-treated patients tend to have longer periods of hospitalization…. The untreated patients consistently show a somewhat lower retention rate.”8

The discharge of chronic schizophrenia patients from state mental hospitals—and thus the beginning of deinstitutionalization—got under way in 1965 with the enactment of Medicare and Medicaid. In 1955, there were 267,000 schizophrenia patients in state and county mental hospitals, and eight years later, this number had barely budged. There were still 253,000 schizophrenics residing in the hospitals.9 But then the economics of caring for the mentally ill changed. The 1965 Medicare and Medicaid legislation provided federal subsidies for nursing home care but no such subsidy for care in state mental hospitals, and so the states, seeking to save money, naturally began shipping their chronic patients to nursing homes. That was when the census in state mental hospitals began to noticeably drop, rather than in 1955, when Thorazine was introduced. Unfortunately, our societal belief that it was this medication that emptied the asylums, which is so central to the “psychopharmacology revolution” narrative, is belied by the hospital census data.

Through a Lens Darkly

In 1955, pharmaceutical companies were not required to prove to the FDA that their new drugs were effective (that requirement was added in 1962), and thus it fell to the NIMH to assess the merits of Thorazine and the other new “wonder drugs” coming to market. Much to its credit, the NIMH organized a conference in September 1956 to “consider carefully the entire psychotropic question,” and ultimately the conversation at the conference focused on a very particular question: How could psychiatry adapt, for its own use, a scientific tool that had recently proven its worth in infectious medicine: the placebo-controlled, double-blind, randomized clinical trial?10

As many speakers noted, this tool wasn’t particularly well suited for assessing outcomes of a psychiatric drug. How could a study of a neuroleptic possibly be “double-blind”? The psychiatrist would quickly see who was on the drug and who was not, and any patient given Thorazine would know he was on a medication as well. Then there was the problem of diagnosis: How would a researcher know if the patients randomized into a trial really had “schizophrenia”? The diagnostic boundaries of mental disorders were forever changing. Equally problematic, what defined a “good outcome”? Psychiatrists and hospital staff might want to see drug-induced behavioral changes that made the patient “more socially acceptable” but weren’t to the “ultimate benefit of the patient,” said one conference speaker.11 And how could outcomes be measured? In a study of a drug for a known disease, mortality rates or laboratory results could serve as objective measures of whether a treatment worked. For instance, to test whether a drug for tuberculosis was effective, an X-ray of the lung could show whether the bacillus that caused the disease was gone. What would be the measurable endpoint in a trial of a drug for schizophrenia? The problem, said NIMH physician Edward Evarts at the conference, was that “the goals of therapy in schizophrenia, short of getting the patient ‘well,’ have not been clearly defined.”12

All of these questions bedeviled psychiatry, and yet the NIMH, in the wake of that conference, made plans to mount a trial of the neuroleptics. The push of history was simply too great. This was the scientific method now used in internal medicine to assess the merits of a therapy, and Congress had created the NIMH with the thought that it would transform psychiatry into a more modern, scientific discipline. Psychiatry’s adoption of this tool would prove that it was moving toward that goal. The NIMH established a Psychopharmacology Service Center to head up this effort, and Jonathan Cole, a psychiatrist from the National Research Council, was named its director.

Over the next couple of years, Cole and the rest of psychiatry settled on a trial design for testing psychotropic drugs. Psychiatrists and nurses would use “rating scales” to measure numerically the characteristic symptoms of the disease that was to be studied. Did a drug for schizophrenia reduce the patient’s “anxiety”? His or her “grandiosity”? “Hostility”? “Suspiciousness”? “Unusual thought content”? “Uncooperativeness”? The severity of all of those symptoms would be measured on a numerical scale and a total “symptom” score tabulated, and a drug would be deemed effective if it reduced the total score significantly more than a placebo did within a six-week period.

At least in theory, psychiatry now had a way to conduct trials of psychiatric drugs that would produce an “objective” result. Yet the adoption of this assessment put psychiatry on a very particular path: The field would now see short-term reduction of symptoms as evidence of a drug’s efficacy. Much as a physician in internal medicine would prescribe an antibiotic for a bacterial infection, a psychiatrist would prescribe a pill that knocked down a “target symptom” of a “discrete disease.” The six-week “clinical trial” would prove that this was the right thing to do. However, this tool wouldn’t provide any insight into how patients were faring over the long term. Were they able to work? Were they enjoying life? Did they have friends? Were they getting married? None of those questions would be answered.

This was the moment that magic-bullet medicine shaped psychiatry’s future. The use of the clinical trial would cause psychiatrists to see their therapies through a very particular prism, and even at the 1956 conference, New York State Psychiatric Institute researcher Joseph Zubin warned that when it came to evaluating a therapy for a psychiatric disorder, a six-week study induced a kind of scientific myopia. “It would be foolhardy to claim a definite advantage for a specified therapy without a two- to five-year follow-up,” he said. “A two-year follow-up would seem to be the very minimum for the long-term effects.”13

The Case for Neuroleptics

The Psychopharmacology Service Center launched its nine-hospital trial of neuroleptics in 1961, and this is the study that marks the beginning of the scientific record that serves today as the “evidence base” for these drugs. In the six-week trial, 270 patients were given Thorazine or another neuroleptic (these drugs were also known as “phenothiazines”), while the remaining 74 were put on a placebo. The neuroleptics did help reduce some target symptoms (unrealistic thinking, anxiety, suspiciousness, auditory hallucinations, etc.) better than the placebo, and thus, according to the rating scales’ cumulative score, they were effective. Furthermore, the psychiatrists in the study judged 75 percent of the drug-treated patients to be “much improved” or “very much improved,” versus 23 percent of the placebo patients.

After that, hundreds of smaller trials produced similar results, and thus the evidence that these drugs reduce symptoms over the short term better than placebo is fairly robust.* In 1977, Ross Baldessarini at Harvard Medical School reviewed 149 such trials and found that the antipsychotic drug proved superior to a placebo in 83 percent of them.14 The “Brief Psychiatric Rating Scale” (BPRS) was regularly employed in such trials, and the American Psychiatric Association eventually decided that a 20 percent reduction in total BPRS score represented a clinically significant response to a drug.15 Based on this measurement, an estimated 70 percent of all schizophrenia patients suffering from an acute episode of psychosis “respond,” over a six-week period, to an antipsychotic medication.
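The response rule described above can be made concrete with a short sketch. The symptom names and ratings here are invented for illustration and are not taken from any trial, and the code assumes that raw total scores are compared directly, which is a simplification, since the text does not say how the score is normalized.

```python
# Hypothetical illustration only: the items and ratings are invented, not trial data.
RESPONSE_THRESHOLD = 0.20  # a 20 percent reduction in total score, as stated in the text


def total_score(item_ratings):
    """Add the per-symptom ratings into a single total symptom score."""
    return sum(item_ratings.values())


def percent_reduction(baseline_total, endpoint_total):
    """Fractional drop from baseline to endpoint (negative if symptoms worsened)."""
    if baseline_total <= 0:
        raise ValueError("baseline total must be positive")
    return (baseline_total - endpoint_total) / baseline_total


def is_responder(baseline, endpoint, threshold=RESPONSE_THRESHOLD):
    """True if the total score fell by at least the threshold fraction."""
    reduction = percent_reduction(total_score(baseline), total_score(endpoint))
    return reduction >= threshold


# Made-up ratings for one patient at admission and at the end of six weeks.
baseline = {"anxiety": 5, "hostility": 4, "suspiciousness": 6, "unusual_thought_content": 5}
week_six = {"anxiety": 3, "hostility": 3, "suspiciousness": 5, "unusual_thought_content": 4}

print(total_score(baseline), total_score(week_six))  # 20 15
print(is_responder(baseline, week_six))              # True (a 25 percent reduction)
```

With these made-up ratings the total falls from 20 to 15, so the patient counts as a responder. Nothing in the calculation says anything about how that patient fares once the six weeks are over.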

Once the NIMH investigators determined that the antipsychotics were efficacious over the short term, they naturally wanted to know how long schizophrenia patients should stay on the medication. To investigate this question, they ran studies that, for the most part, had this design: Patients who were good responders to the medication were either maintained on the drug or abruptly withdrawn from it. In 1995, Patricia Gilbert at the University of California at San Diego reviewed sixty-six relapse studies, involving 4,365 patients, and she found that 53 percent of the drug-withdrawn patients relapsed within ten months versus 16 percent of those maintained on the medications. “The efficacy of these medications in reducing the risk of psychotic relapse has been well documented,” she concluded.16*
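The gap between the two relapse figures can be expressed in two common ways, as an absolute difference and as a ratio. The following is a minimal sketch that uses only the two percentages Gilbert reported; the function name is hypothetical, and the calculation takes the rates at face value without adjusting for how the studies were designed.

```python
def risk_comparison(withdrawn_rate, maintained_rate):
    """Return (absolute difference, relative risk) for two relapse rates given as fractions."""
    if maintained_rate <= 0:
        raise ValueError("maintained rate must be positive")
    absolute_difference = withdrawn_rate - maintained_rate
    relative_risk = withdrawn_rate / maintained_rate
    return absolute_difference, relative_risk


# 53 percent of drug-withdrawn patients relapsed versus 16 percent of maintained patients.
abs_diff, rel_risk = risk_comparison(0.53, 0.16)

print(f"{abs_diff * 100:.0f} percentage points")  # 37 percentage points
print(f"{rel_risk:.1f} times the relapse rate")   # 3.3 times the relapse rate
```

Both numbers describe patients who were already on the drug before being withdrawn or maintained, so by themselves they say nothing about patients who were never exposed to it.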

This is the scientific evidence that supports the use of antipsychotic medications for schizophrenia, both in the hospital and long-term. As John Geddes, a prominent British researcher, wrote in a 2002 article in the New England Journal of Medicine, “Antipsychotic drugs are effective in treating acute psychotic symptoms and preventing relapse.”17 Still, as many investigators have noted, there is a hole in this evidence base, and it’s the very hole that Zubin predicted would arise. “Little can be said about the efficacy and effectiveness of conventional antipsychotics on nonclinical outcomes,” confessed Lisa Dixon and other psychiatrists at the University of Maryland School of Medicine in 1995. “Well-designed long-term studies are virtually nonexistent, so the longitudinal impact of treatment with conventional antipsychotics is unclear.”18

This doubt prompted an extraordinary 2002 editorial in European Psychiatry, penned by Emmanuel Stip, a professor of psychiatry at the Université de Montréal. “After fifty years of neuroleptics, are we able to answer the following simple question: Are neuroleptics effective in treating schizophrenia?” There was, he said, “no compelling evidence on the matter, when ‘long-term’ is considered.”19

A Conundrum Appears

Although Dixon’s and Stip’s comments suggest that there is no long-term data to be reviewed, it is in fact possible to piece together a story of how antipsychotics alter the course of schizophrenia, and this story begins, quite appropriately, with the NIMH’s follow-up study of the 344 patients in its initial nine-hospital trial. In some ways, the patients—regardless of what treatment they had received in the hospital—were not faring so badly. At the end of one year, 254 were living in the community, and 58 percent of those who—according to their age and gender—could be expected to work were in fact employed. Two-thirds of the “housewives” were functioning OK in that domestic role. Although the researchers didn’t report on the medication use of patients during the one-year follow-up, they were startled to discover that “patients who received placebo treatment [in the six-week trial] were less likely to be rehospitalized than those who received any of the three active phenothiazines.”20

Here, at this very first moment in the scientific literature, there is the hint of a paradox: While the drugs were effective over the short term, perhaps they made people more vulnerable to psychosis over the long term, which would explain the higher rehospitalization rates for drug-treated patients at the end of one year. Soon, NIMH investigators were back with another surprising result. In two drug withdrawal trials, both of which included patients who weren’t on any drug at the start of the study, relapse rates rose in correlation with drug dosage. Only 7 percent of those who had been on a placebo at the start of the study relapsed, compared to 65 percent of those taking more than five hundred milligrams of chlorpromazine before the drug was withdrawn. “Relapse was found to be significantly related to the dose of the tranquilizing medication the patient was receiving before he was put on placebo—the higher the dose, the greater the probability of relapse,” the researchers wrote.21

Something was amiss, and clinical observations deepened the suspicion. Schizophrenia patients discharged on medications were returning to psychiatric emergency rooms in such droves that hospital staff dubbed it the “revolving door syndrome.” Even when patients reliably took their medications, relapse was common, and researchers observed that “relapse is greater in severity during drug administration than when no drugs are given.”22 At the same time, if patients relapsed after quitting the medications, Cole noted, their psychotic symptoms tended to “persist and intensify,” and, at least for a time, they suffered from a host of new symptoms as well: nausea, vomiting, diarrhea, agitation, insomnia, headaches, and weird motor tics.23 Initial exposure to a neuroleptic seemed to be setting patients up for a future of severe psychotic episodes, and that was true regardless of whether they stayed on the medications.

These poor results prompted two psychiatrists at Boston Psychopathic Hospital, J. Sanbourne Bockoven and Harry Solomon, to revisit the past. They had been at the hospital for decades, and in the period after World War II ended, when they treated psychotic patients with a progressive form of psychological care, they had seen the majority regularly improve. That led them to believe that “the majority of mental illnesses, especially the most severe, are largely self-limiting in nature if the patient is not subjected to a demeaning experience or loss of rights and liberties.” The antipsychotics, they reasoned, should speed up this natural healing process. But were the drugs improving long-term outcomes? In a retrospective study, they found that 45 percent of the patients treated in 1947 at their hospital hadn’t relapsed in the next five years and that 76 percent were successfully living in the community at the end of that follow-up period. In contrast, only 31 percent of the patients treated at the hospital in 1967 with neuroleptics remained relapse-free for five years, and as a group they were much more “socially dependent”—on welfare and needing other forms of support. “Rather unexpectedly, these data suggest that psychotropic drugs may not be indispensable,” Bockoven and Solomon wrote. “Their extended use in aftercare may prolong the social dependency of many discharged patients.”24

With debate over the merits of neuroleptics rising, the NIMH funded three studies during the 1970s that reexamined whether schizophrenia patients—and in particular those suffering a first episode of schizophrenia—could be successfully treated without medications. In the first study, which was conducted by William Carpenter and Thomas McGlashan at the NIMH’s clinical research facility in Bethesda, Maryland, those treated without drugs were discharged sooner than the drug-treated patients, and only 35 percent of the nonmedicated group relapsed within a year after discharge, compared to 45 percent of the medicated group. The off-drug patients also suffered less from depression, blunted emotions, and retarded movements. Indeed, they told Carpenter and McGlashan that they had found it “gratifying and informative” to have gone through their psychotic episodes without having their feelings numbed by the drugs. Medicated patients didn’t have that same learning experience, and as a result, Carpenter and McGlashan concluded, over the long term they “are less able to cope with subsequent life stresses.”25

A year later, Maurice Rappaport at the University of California in San Francisco announced results that told the same story, only more strongly so. He had randomized eighty young newly diagnosed male schizophrenics admitted to Agnews State Hospital into drug and non-drug groups, and although symptoms abated more quickly in those treated with antipsychotics, both groups, on average, stayed only six weeks in the hospital. Rappaport followed the patients for three years, and it was those who weren’t treated with antipsychotics in the hospital and who stayed off the drugs after discharge that had—by far—the best outcomes. Only two of the twenty-four patients in this never-exposed-to-antipsychotics group relapsed during the three-year follow-up. Meanwhile, the patients that arguably fared the worst were those on drugs throughout the study. The very standard of care that, according to psychiatry’s “evidence base,” was supposed to produce the best outcomes had instead produced the worst.

“Our findings suggest that antipsychotic medication is not the treatment of choice, at least for certain patients, if one is interested in long-term clinical improvement,” Rappaport wrote. “Many unmedicated-while-in-hospital patients showed greater long-term improvement, less pathology at follow-up, fewer rehospitalizations, and better overall functioning in the community than patients who were given chlorpromazine while in the hospital.”26

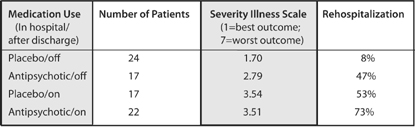

Rappaport’s Study: Three-Year Schizophrenia Outcomes

In this study, patients were grouped according to both their in-hospital care (placebo or drug) and whether they used antipsychotics after they were discharged. Thus, 24 of the 41 patients treated with placebo in the hospital remained off the drugs during the follow-up period. This never-exposed group had the best outcomes by far. Source: Rappaport, M. “Are there schizophrenics for whom drugs may be unnecessary or contraindicated?” International Pharmacopsychiatry 13 (1978): 100–11.

The third study was led by Loren Mosher, head of schizophrenia studies at the NIMH. Although he may have been the nation’s top schizophrenia doctor at the time, his vision of the illness was at odds with that of many of his peers, who had come to think that schizophrenics suffered from a “broken brain.” He believed that psychosis could arise in response to emotional and inner trauma and, in its own way, could be a coping mechanism. As such, he believed there was the possibility that people could grapple with their hallucinations and delusions, struggle through a schizophrenic break, and regain their sanity. If that was so, he reasoned, then newly psychotic patients who were provided with a safe house, one staffed by people who had an evident empathy for others and who wouldn’t be frightened by strange behavior, would in many cases get well, even though they weren’t treated with antipsychotics. “I thought that sincere human involvement and understanding were critical to healing interactions,” he said. “The idea was to treat people as people, as human beings, with dignity and respect.”

The twelve-room Victorian house he opened in Santa Clara, California, in 1971 could shelter six patients at a time. He called it Soteria House, and eventually he started a second home as well, Emanon. All told, the Soteria Project ran for twelve years, with eighty-two patients treated at the two homes. As early as 1974, Mosher began reporting that his Soteria patients were faring better than a matched cohort of patients being treated conventionally with drugs in a hospital, and in 1979, he announced his two-year results. At the end of six weeks, psychotic symptoms had abated as much in his Soteria patients as in the hospitalized patients, and at the end of two years, the Soteria patients had “lower psychopathology scores, fewer [hospital] readmissions, and better global adjustment.”27 Later, he and John Bola, an assistant professor at the University of Southern California, reported on their medication use: Forty-two percent of the Soteria patients had never been exposed to drugs, 39 percent had used them on a temporary basis, and only 19 percent had needed them throughout the two-year follow-up.

“Contrary to popular views, minimal use of antipsychotic medications combined with specially designed psychosocial intervention for patients newly identified with schizophrenia spectrum disorder is not harmful but appears to be advantageous,” Mosher and Bola wrote. “We think that the balance of risks and benefits associated with the common practice of medicating nearly all early episodes of psychosis should be re-examined.”28

Three NIMH-funded studies, and all pointed to the same conclusion.* Perhaps 50 percent of newly diagnosed schizophrenia patients, if treated without antipsychotics, would recover and stay well through lengthy follow-up periods. Only a minority of patients seemed to need to take the drugs continuously. The “revolving door” syndrome that had become so familiar was due in large part to the drugs, even though, in clinical trials, the drugs had proven to be effective in knocking down psychotic symptoms. Carpenter and McGlashan neatly summarized the scientific conundrum that psychiatry now faced:

There is no question that, once patients are placed on medication, they are less vulnerable to relapse if maintained on neuroleptics. But what if these patients had never been treated with drugs to begin with? … We raise the possibility that antipsychotic medication may make some schizophrenic patients more vulnerable to future relapse than would be the case in the natural course of the illness.29

And if that was so, these drugs were increasing the likelihood that a person who suffered a psychotic break would become chronically ill.

A Cure Worse Than the Disease?

All drugs have a risk-benefit profile, and the usual thought within medicine is that a drug should provide a benefit that outweighs the risks. A drug that curbs psychotic symptoms clearly provides a marked benefit, and that was why antipsychotics could be viewed as helpful even though the list of negatives with these drugs was a long one. Thorazine and other first-generation neuroleptics caused Parkinsonian symptoms and extraordinarily painful muscle spasms. Patients regularly complained that the drugs turned them into emotional “zombies.” In 1972, researchers concluded that neuroleptics “impaired learning.”30 Others reported that even if medicated patients stayed out of the hospital, they seemed totally unmotivated and socially disengaged. Many lived in “virtual solitude” in group homes, spending most of the time “staring vacantly at television,” wrote one investigator.31 None of this told of medicated schizophrenia patients faring well, and here was the quandary that psychiatry now faced: If the drugs increased relapse rates over the long term, then where was the benefit? This question was made all the more pressing by the fact that many patients maintained on the drugs were developing tardive dyskinesia (TD), a gross motor dysfunction that remained even after the drugs were withdrawn, evidence of permanent brain damage.

All of this required psychiatry to recalculate the risks and benefits of antipsychotics, and in 1977 Jonathan Cole did so in an article provocatively titled “Is the Cure Worse Than the Disease?” He reviewed all of the long-term harm the drugs could cause and observed that studies had shown that at least 50 percent of all schizophrenia patients could fare well without the drugs. There was only one moral thing for psychiatry to do: “Every schizophrenic outpatient maintained on antipsychotic medication should have the benefit of an adequate trial without drugs.” This, he explained, would save many “from the dangers of tardive dyskinesia as well as the financial and social burdens of prolonged drug therapy.”32

The evidence base for maintaining schizophrenia patients on antipsychotics had collapsed. “Are the antipsychotics to be withdrawn?” asked Pierre Deniker, the French psychiatrist who, in the early 1950s, had first promoted their use.33

Supersensitivity Psychosis

In the late 1970s, two physicians at McGill University, Guy Chouinard and Barry Jones, stepped forward with a biological explanation for why the drugs made schizophrenia patients more biologically vulnerable to psychosis. Their understanding arose, in large part, from the investigations into the dopamine hypothesis of schizophrenia, which had detailed how the drugs perturbed this neurotransmitter system.

Thorazine and other standard antipsychotics block 70 to 90 percent of all D2 receptors in the brain. In an effort to compensate for this blockade, the postsynaptic neurons increase the density of their D2 receptors by 30 percent or more. The brain is now “supersensitive” to dopamine, Chouinard and Jones explained, and this neurotransmitter is thought to be a mediator of psychosis. “Neuroleptics can produce a dopamine supersensitivity that leads to both dyskinetic and psychotic symptoms,” they wrote. “An implication is that the tendency toward psychotic relapse in a patient who has developed such a supersensitivity is determined by more than just the normal course of the illness.”34

A simple metaphor can help us better understand this drug-induced biological vulnerability to psychosis and why it flares up when the drug is withdrawn. Neuroleptics put a brake on dopamine transmission, and in response the brain puts down the dopamine accelerator (the extra D2 receptors). If the drug is abruptly withdrawn, the brake on dopamine is suddenly released while the accelerator is still pressed to the floor. The system is now wildly out of balance, and just as a car might careen out of control, so too the dopaminergic pathways in the brain. The dopaminergic neurons in the basal ganglia may fire so rapidly that the patient withdrawing from the drugs suffers weird tics, agitation, and other motor abnormalities. The same out-of-control firing is happening with the dopaminergic pathway to the limbic region, and that may lead to “psychotic relapse or deterioration,” Chouinard and Jones wrote.35

This was an extraordinary piece of scientific detective work by the two Canadian investigators. They had—at least in theory—identified the reason that relapse rates were so high in the medication-withdrawal trials, which psychiatry had mistakenly interpreted as proving that the drugs prevented relapse. The severe relapse suffered by many patients withdrawn from antipsychotics was not necessarily the result of the “disease” returning, but rather was drug-related. Chouinard and Jones’s work also revealed that both psychiatrists and their patients would regularly suffer from a clinical delusion: They would see the return of psychotic symptoms upon drug withdrawal as proof that the antipsychotic was necessary and that it “worked.” The relapsed patient would then go back on the drug and often the psychosis would abate, which would be further proof that it worked. Both doctor and patient would experience this to be “true,” and yet, in fact, the reason that the psychosis abated with the return of the drug was that the brake on dopamine transmission was being reapplied, which countered the stuck dopamine accelerator. As Chouinard and Jones explained: “The need for continued neuroleptic treatment may itself be drug-induced.”

In short, initial exposure to neuroleptics put patients onto a path where they would likely need the drugs for life. Yet—and this was the second haunting aspect to this story of medicine—staying on the drugs regularly led to a bad end. Over time, Chouinard and Jones noted, the dopaminergic pathways tended to become permanently dysfunctional. They became irreversibly stuck in a hyperactive state, and soon the patient’s tongue was slipping rhythmically in and out of his mouth (tardive dyskinesia) and psychotic symptoms were worsening (tardive psychosis). Doctors would then need to prescribe higher doses of antipsychotics to tamp down those tardive symptoms. “The most efficacious treatment is the causative agent itself, the neuroleptic,” Chouinard and Jones said.

Over the next few years, Chouinard and Jones continued to flesh out and test their hypothesis. In 1982, they reported that 30 percent of 216 schizophrenia outpatients they studied showed signs of tardive psychosis.36 They also observed that it tended to afflict those patients who, at initial diagnosis, had a “good prognosis,” and thus would have had a chance to fare well over the long term if they had never been exposed to neuroleptics. These were the “placebo responders” who had fared best in the studies conducted by Rappaport and Mosher, and now Chouinard and Jones were reporting that they were becoming chronically psychotic after years of taking antipsychotics. Finally, Chouinard quantified the risk, reporting that tardive psychosis seemed to develop at a slightly slower rate than tardive dyskinesia. It afflicted 3 percent of patients a year, with the result that after fifteen years on the drugs, perhaps 45 percent suffered from it. When tardive psychosis sets in, Chouinard added, “the illness appears worse” than ever before. “New schizophrenic or original symptoms of greater severity will appear.”37

Animal studies confirmed this picture too. Philip Seeman reported that antipsychotics caused an increase in D2 receptors in rats, and while the density of these receptors could revert to normal if the drug was withdrawn (he reported that for every month of exposure, it took two months for renormalization to occur), at some point the increase in receptors became irreversible.38

In 1984, Swedish physician Lars Martensson, in a presentation at the World Federation of Mental Health Conference in Copenhagen, summed up the devastating bottom line. “The use of neuroleptics is a trap,” he said. “It is like having a psychosis-inducing agent built into the brain.”39

A Crazy Idea … Or Not?

This was the view of neuroleptics that came together in the early 1980s, and it was a story of science at its best. Psychiatrists saw that the drugs “worked.” They saw that antipsychotics knocked down psychotic symptoms, and they observed that patients who stopped taking their medications regularly became psychotic again. Scientific tests reinforced their clinical perceptions. Six-week trials proved the drugs were effective. Relapse studies proved that patients should be maintained on the drugs. Yet once researchers came to understand how the drugs acted on the brain, and once they began investigating why patients were developing tardive dyskinesia and why they were becoming so chronically ill, then this counterintuitive picture of the drugs—that they were increasing the likelihood that patients would become chronically ill—emerged. It was Chouinard and Jones who explicitly connected all the dots, and for a time, their work did stir up a hornet’s nest within psychiatry. One physician, at a meeting where the two McGill University doctors spoke, asked in astonishment: “I put my patients on neuroleptics because they’re psychotic. Now you’re saying that the same drug that controls their schizophrenia also causes a psychosis?”40

But what was psychiatry supposed to do with this information? It clearly imperiled the field’s very foundation. Could it really now confess to the public, or even admit to itself, that the very class of drugs said to have “revolutionized” the care of the mentally ill was in fact making patients chronically ill? That antipsychotics made patients—at least in the aggregate—more psychotic over time? Psychiatry desperately needed this discussion to go away. Soon the articles by Chouinard and Jones on “supersensitivity psychosis” were filed away in the “interesting hypothesis” category, and everyone in the field breathed a sigh of relief when Solomon Snyder, who knew as much about dopamine receptors as any scientist in the world, assured everyone in his 1986 book Drugs and the Brain that it had all turned out to be a false alarm. “If dopamine receptor sensitivity is greater in patients with tardive dyskinesia, one might wonder whether they would also suffer a corresponding increase in schizophrenia symptoms. Interestingly, though researchers have looked carefully for any possible exacerbation of schizophrenic symptoms in patients who begin to develop tardive dyskinesia, none has ever been found.”41

That moment of crisis within psychiatry, when it briefly worried about supersensitivity psychosis, occurred nearly thirty years ago, and today the notion that antipsychotics increase the likelihood that a person diagnosed with schizophrenia will become chronically ill seems, on the face of it, absurd. Ask psychiatrists at top medical schools, staff at a mental hospital, NIMH officials, leaders of the National Alliance for the Mentally Ill, science writers at major newspapers, or the ordinary person in the street, and everyone will attest that antipsychotics are essential for treating schizophrenia, the very cornerstone of care, and that anyone who touts a different idea is, well, a bit loony. Still, we started down this path of research, I’ve invited readers into this loony bin, and so now we need to move up one floor in the Countway Library. The volumes in the basement end in 1986, and now we need to comb the scientific literature since that date, and see what story it has to tell. Was it all a false alarm … or not?

The most efficient way to answer that question is to summarize, one by one, the relevant studies and avenues of research.

The Vermont longitudinal study

In the late 1950s and early 1960s, Vermont State Hospital discharged 269 chronic schizophrenics, most of whom were middle-aged, into the community. Twenty years later, Courtenay Harding interviewed 168 patients from this cohort (those who were still alive), and found that 34 percent were recovered, which meant they were “asymptomatic and living independently, had close relationships, were employed or otherwise productive citizens, were able to care for themselves, and led full lives in general.”42 This was a startlingly good long-term outcome for patients who had been seen as hopeless in the 1950s, and those who had recovered, Harding told the APA Monitor, had one thing in common: They all “had long since stopped taking medications.”43 She concluded that it was a “myth” that schizophrenia patients “must be on medication all their lives,” and that, in fact, “it may be a small percentage who need medication indefinitely.”44

The World Health Organization cross-cultural studies

In 1969, the World Health Organization launched an effort to track schizophrenia outcomes in nine countries. At the end of five years, the patients in the three “developing” countries—India, Nigeria, and Colombia—had a “considerably better course and outcome” than patients in the United States and five other “developed countries.” They were much more likely to be asymptomatic during the follow-up period, and even more important, they enjoyed “an exceptionally good social outcome.”

These findings stung the psychiatric community in the United States and Europe, which protested that there must have been a design flaw in the study. Perhaps the patients in India, Nigeria, and Colombia had not really been schizophrenic. In response, WHO launched a ten-country study in 1978, and this time they primarily enrolled patients suffering from a first episode of schizophrenia, all of whom were diagnosed by Western criteria. Once again, the results were much the same. At the end of two years, nearly two-thirds of the patients in the “developing countries” had had good outcomes, and slightly more than one-third had become chronically ill. In the rich countries, only 37 percent of the patients had good outcomes, and 59 percent became chronically ill. “The findings of a better outcome of patients in developing countries was confirmed,” the WHO scientists wrote. “Being in a developed country was a strong predictor of not attaining a complete remission.”45

Although the WHO investigators didn’t identify a reason for the stark disparity in outcomes, they had tracked antipsychotic usage in the second study, having hypothesized that perhaps patients in the poor countries fared better because they more reliably took their medication. However, they found the opposite to be true. Only 16 percent of the patients in the poor countries were regularly maintained on antipsychotics, versus 61 percent of the patients in the rich countries. Moreover, in Agra, India, where patients arguably fared the best, only 3 percent of the patients were kept on an antipsychotic. Medication usage was highest in Moscow, and that city had the highest percentage of patients who were constantly ill.46

In this cross-cultural study, the best outcomes were clearly associated with low medication use. Later, in 1997, WHO researchers interviewed the patients from the first two studies once again (fifteen to twenty-five years after the initial studies), and they found that those in the poor countries continued to do much better. The “outcome differential” held up for “general clinical state, symptomatology, disability, and social functioning.” In the developing countries, 53 percent of the schizophrenia patients were simply “never psychotic” anymore, and 73 percent were employed.47 Although the WHO investigators didn’t report on medication usage in their follow-up study, the bottom line is clear: In countries where patients hadn’t been regularly maintained on antipsychotics earlier in their illness, the majority had recovered and were doing well fifteen years later.

Tardive dyskinesia and global decline

Tardive dyskinesia and tardive psychosis occur because the dopaminergic pathways to the basal ganglia and limbic system become dysfunctional. But there are three dopaminergic pathways, and so it stands to reason that the third one, which transmits messages to the frontal lobes, also becomes dysfunctional over time. If so, researchers could expect to find a global decline in brain function in patients diagnosed with tardive dyskinesia, and from 1979 to 2000, more than two dozen studies found that to be the case. “The relationship appears to be linear,” reported Medical College of Virginia psychiatrist James Wade in 1987. “Individuals with severe forms of the disorder are most impaired cognitively.”48 Researchers determined that tardive dyskinesia was associated with a worsening of the negative symptoms of schizophrenia (emotional disengagement); psychosocial impairment; and a decline in memory, visual retention, and the capacity to learn. People with TD lose their “road map of consciousness,” concluded one investigator.49 Investigators have dubbed this long-term cognitive deterioration tardive dementia; in 1994, researchers found that three-fourths of medicated schizophrenia patients seventy years and older suffer from a brain pathology associated with Alzheimer’s disease.50

MRI studies

The invention of magnetic resonance imaging technology provided researchers with the opportunity to measure volumes of brain structures in people diagnosed with schizophrenia, and while they hoped to identify abnormalities that might characterize the illness, they ended up documenting instead the effect of antipsychotics on brain volumes. In a series of studies from 1994 to 1998, investigators reported that the drugs caused basal ganglion structures and the thalamus to swell, and the frontal lobes to shrink, with these changes in volumes “dose related.”51 Then, in 1998, Raquel Gur at the University of Pennsylvania Medical Center reported that the swelling of the basal ganglia and thalamus was “associated with greater severity of both negative and positive symptoms.”52

This last study provided a very clear picture of an iatrogenic process. The antipsychotic causes a change in brain volumes, and as this occurs, the patient becomes more psychotic (known as the “positive symptoms” of schizophrenia) and more emotionally disengaged (“negative symptoms”). The MRI studies showed that antipsychotics worsen the very symptoms they are supposed to treat, and that this worsening begins to occur during the first three years that patients are on the drugs.

Modeling psychosis

As part of their investigations of schizophrenia, researchers have sought to develop biological “models” of psychosis, and one way they have done that is to study the brain changes induced by various drugs—amphetamines, angel dust, etc.—that can trigger delusions and hallucinations. They also have developed ways to induce psychotic-like behaviors in rats and other animals. Lesions to the hippocampus can cause such disturbed behaviors; certain genes can be “knocked out” to produce such symptoms. In 2005, Philip Seeman reported that all of these psychotic triggers cause an increase in D2 receptors in the brain that have a “HIGH affinity” for dopamine, and by that, he meant that the receptors bound quite easily with the neurotransmitter. These “results imply that there may be many pathways to psychosis, including multiple gene mutations, drug abuse, or brain injury, all of which may converge via D2 HIGH to elicit psychotic symptoms,” he wrote.53

Seeman reasoned that this is why antipsychotics work: They block D2 receptors. But in his research, he also found that these drugs, including the newer ones like Zyprexa and Risperdal, double the density of “high affinity” D2 receptors. They induce the same abnormality that angel dust does, and thus this research confirms what Lars Martensson observed in 1984: Taking a neuroleptic is like having a “psychosis-inducing agent built into the brain.”

Nancy Andreasen’s longitudinal MRI study

In 1989, Nancy Andreasen, a psychiatry professor at the University of Iowa who was editor in chief of the American Journal of Psychiatry from 1993 to 2005, began a long-term study of more than five hundred schizophrenia patients. In 2003, she reported that at the time of initial diagnosis, the patients had slightly smaller frontal lobes than normal, and that over the next three years, their frontal lobes continued to shrink. Furthermore, this “progressive reduction in frontal lobe white matter volume” was associated with a worsening of negative symptoms and functional impairment, and thus Andreasen concluded that this shrinkage is evidence that schizophrenia is a “progressive neurodevelopmental disorder,” one which antipsychotics unfortunately fail to arrest. “The medications currently used cannot modify an injurious process occurring in the brain, which is the underlying basis of symptoms.”54

Hers was a picture of antipsychotics as therapeutically ineffective, rather than harmful, and two years later, she fleshed out this picture. Her patients’ cognitive abilities began to “worsen significantly” five years after initial diagnosis, a decline tied to the “progressive brain volume reductions after illness onset.”55 In other words, as her patients’ frontal lobes shrank in size, their ability to think declined. But other researchers conducting MRI studies had found that the shrinkage of the frontal lobes was drug-related, and in a 2008 interview with the New York Times, Andreasen conceded that the “more drugs you’ve been given, the more brain tissue you lose.” The shrinkage of the frontal lobes may be part of a disease process, which the drugs then exacerbate. “What exactly do these drugs do?” Andreasen said. “They block basal ganglia activity. The prefrontal cortex doesn’t get the input it needs and is being shut down by drugs. That reduces the psychotic symptoms. It also causes the prefrontal cortex to slowly atrophy.”56

Once again, Andreasen’s investigations revealed an iatrogenic process at work. The drugs block dopamine activity in the brain and this leads to brain shrinkage, which in turn correlates with a worsening of negative symptoms and cognitive impairment. This was yet another disturbing finding, and it prompted Yale psychiatrist Thomas McGlashan, who three decades earlier had wondered whether antipsychotics were making patients “more biologically vulnerable to psychosis,” to once again question this entire paradigm of care. He put his troubled thoughts into a scientific context:

In the short term, acute D2 [receptor] blockade detaches salience and the patient’s investment in positive symptoms. In the long term, chronic D2 blockade dampens salience for all events in everyday life, inducing a chemical anhedonia that is sometimes labeled postpsychotic depression or neuroleptic dysphoria…. Do we free patients from the asylum with D2 blocking agents only to block incentive, engagement with the world, and the joie de vivre of everyday life? Medication can be lifesaving in a crisis, but it may render the patient more psychosis-prone should it be stopped and more deficit-ridden should it be maintained.57

His comments appeared in a 2006 issue of the Schizophrenia Bulletin, and at that moment it seemed like the late 1970s all over again. The “cure,” it seemed, had once again been proven to be “worse than the disease.”

The Clinician’s Illusion

I attended the 2008 meeting of the American Psychiatric Association for a number of reasons, but the person I most wanted to hear speak was Martin Harrow, who is a psychologist at the University of Illinois College of Medicine. From 1975 to 1983, he enrolled sixty-four young schizophrenics in a long-term study funded by the NIMH, recruiting the patients from two Chicago hospitals. One was private and the other public, as this ensured that the group would be economically diverse. Ever since then, he has been periodically assessing how well they are doing. Are they symptomatic? In recovery? Employed? Do they take antipsychotic medications? His results provide an up-to-date look at how schizophrenia patients in the United States are faring, and thus his study can bring our investigation of the scientific literature to a fitting climax. If the conventional wisdom is to be believed, then those who stayed on antipsychotics should have had better outcomes. If the scientific literature we have just reviewed is to be believed, then it should be the reverse.

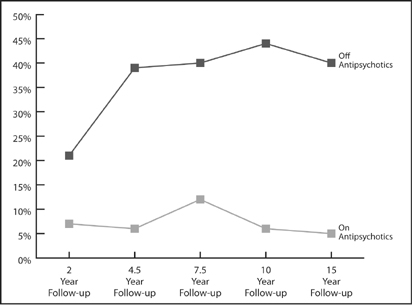

Here is Harrow’s data. In 2007, he published a report on the patients’ fifteen-year outcomes in the Journal of Nervous and Mental Disease, and he further updated that review in his presentation at the APA’s 2008 meeting.58 At the end of two years, the group not on antipsychotics were doing slightly better on a “global assessment scale” than the group on the drugs. Then, over the next thirty months, the collective fates of the two groups began to dramatically diverge. The off-med group began to improve significantly, and by the end of 4.5 years, 39 percent were “in recovery” and more than 60 percent were working. In contrast, outcomes for the medication group worsened during this thirty-month period. As a group, their global functioning declined slightly, and at the 4.5-year mark, only 6 percent were in recovery and few were working. That stark divergence in outcomes remained for the next ten years. At the fifteen-year follow-up, 40 percent of those off drugs were in recovery, more than half were working, and only 28 percent suffered from psychotic symptoms. In contrast, only 5 percent of those taking antipsychotics were in recovery, and 64 percent were actively psychotic. “I conclude that patients with schizophrenia not on antipsychotic medication for a long period of time have significantly better global functioning than those on antipsychotics,” Harrow told the APA audience.

Long-term Recovery Rates for Schizophrenia Patients

Source: Harrow, M. “Factors involved in outcome and recovery in schizophrenia patients not on antipsychotic medications.” The Journal of Nervous and Mental Disease, 195 (2007): 406–14.

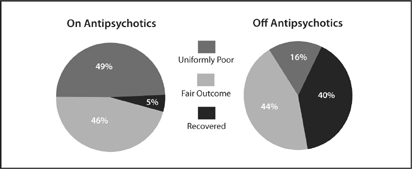

Indeed, it wasn’t just that there were more recoveries in the un-medicated group. There were also fewer terrible outcomes in this group. There was a shift in the entire spectrum of outcomes. Ten of the twenty-five patients who stopped taking antipsychotics recovered, eleven had so-so outcomes, and only four (16 percent) had a “uniformly poor outcome.” In contrast, only two of the thirty-nine patients who stayed on antipsychotics recovered, eighteen had so-so outcomes, and nineteen (49 percent) fell into the “uniformly poor” camp. Medicated patients had one-eighth the recovery rate of un-medicated patients, and a threefold higher rate of faring miserably over the long term.
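
For readers who want to check the arithmetic behind "one-eighth" and "threefold," here is a minimal sketch in Python. It uses only the patient counts given in the paragraph above; the variable and function names are my own, chosen for illustration, and the calculation says nothing about why the two groups differed.

```python
# Patient counts as reported in the text for Harrow's fifteen-year follow-up.
# The dictionary and function names are illustrative, not from the study.
off_meds = {"recovered": 10, "so-so": 11, "uniformly poor": 4}
on_meds = {"recovered": 2, "so-so": 18, "uniformly poor": 19}


def rates(counts):
    """Convert raw counts into each outcome's share of the group."""
    total = sum(counts.values())
    return {outcome: n / total for outcome, n in counts.items()}


off_rates = rates(off_meds)
on_rates = rates(on_meds)

# Ratio of the medicated group's rate to the unmedicated group's rate.
recovery_ratio = on_rates["recovered"] / off_rates["recovered"]
poor_ratio = on_rates["uniformly poor"] / off_rates["uniformly poor"]

for outcome in off_meds:
    print(f"{outcome:>15}: off meds {off_rates[outcome]:.0%}, "
          f"on meds {on_rates[outcome]:.0%}")
print(f"recovery rate, on meds relative to off meds: {recovery_ratio:.2f}")
print(f"uniformly poor rate, on meds relative to off meds: {poor_ratio:.2f}")
```

The recovery ratio comes out to roughly 0.13, which is close to one-eighth, and the ratio for uniformly poor outcomes comes out to just over 3.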

This is the outcomes picture revealed in an NIMH-funded study, the most up-to-date one we have today. It also provides us with insight into how long it takes for the better outcomes for nonmedicated patients, as a group, to become apparent. Although this difference began to show up at the end of two years, it wasn’t until the 4.5-year mark that it became evident that the nonmedicated group, as a whole, was doing much better. Furthermore, through his rigorous tracking of patients, Harrow discovered why psychiatrists remain blind to this fact. Those who got off their antipsychotic medications left the system, he said. They stopped going to day programs, they stopped seeing therapists, they stopped telling people they had ever been diagnosed with schizophrenia, and they disappeared into society. A few of the nonmedicated people in Harrow’s study even got “high-level jobs”—one became a college professor and another a lawyer—and several had “mid-level jobs.” Explained Harrow: “We [clinicians] get our experience from seeing those who leave us, and then come back because they relapse. We don’t see the ones who don’t relapse. They don’t come back. They are quite happy.”

Spectrum of Outcomes in Schizophrenia Patients

The spectrum of outcomes for medicated versus unmedicated patients. Those on antipsychotics had a much lower recovery rate, and were much more likely to have a “uniformly poor” outcome. Source: Harrow, M. “Factors involved in outcome and recovery in schizophrenia patients not on antipsychotic medications.” The Journal of Nervous and Mental Disease, 195 (2007): 406–14.

Afterward, I asked Dr. Harrow why he thought the nonmedicated patients did so much better. He did not attribute it to their being off antipsychotics, but rather said it was because this group “had a stronger internal sense of self,” and once they initially stabilized on the medications, this “better personhood” gave them the confidence to go off the drugs. “It’s not that those who went off medications did better, but rather it was those who did better [initially] who then went off the medications.” When I pressed on with a question about whether his findings supported a different interpretation, which was that the drugs worsened long-term outcomes, he grew a bit testy. “That’s a possibility, but I’m not advocating it,” he said. “People recognize there may be side effects…. I’m not just trying to avoid the question. I’m one of the few people in the field without drug money.”

I asked one last question. At the very least, shouldn’t his findings be worked into the paradigm of care used in our society to treat those diagnosed with schizophrenia? “There is no question about that,” he replied. “Our data is overwhelming that not all schizophrenic patients need to be on antipsychotics all their lives.”

Reviewing the Evidence

We have followed a trail of documents to a surprising end, and thus I think we need to ask one final question: Does the evidence refuting the common wisdom all hang together? In other words, does the outcomes literature tell a coherent and consistent story? We need to double-check to make sure we are not missing something, for it is always discomforting to arrive at a conclusion so at odds with what society “knows” to be true.

First, as researchers Lisa Dixon and Emmanuel Stip acknowledged, there is no good evidence that antipsychotics improve long-term schizophrenia outcomes. As such, we can be confident that we haven’t missed any such studies in our survey. Second, evidence that the drugs might worsen long-term outcomes showed up in the very first follow-up study conducted by the NIMH, and then it appears again and again over the next fifty years. We can link the authors of this research into a lengthy chain: Cole, Bockoven, Rappaport, Carpenter, Mosher, Harding, the World Health Organization, and Harrow. Third, once researchers came to understand how antipsychotics affected the brain, Chouinard and Jones stepped forward with a biological explanation for why the drugs made patients more vulnerable to psychosis over the long term. They were also able to explain why the drug-induced brain changes made it so risky for people to go off the medications, and thus they revealed why the drug-withdrawal studies misled psychiatrists into believing that the drugs prevented relapse. Fourth, evidence that long-term recovery rates are higher for nonmedicated patients appears in studies and investigations of many different types. It shows up in the randomized studies conducted by Rappaport, Carpenter, and Mosher; in the cross-cultural studies conducted by the World Health Organization; and in the naturalistic studies conducted by Harding and Harrow. Fifth, we see in the tardive dyskinesia studies evidence that the drugs induce global brain dysfunction in a high percentage of patients over the long term. Sixth, once a new tool for studying brain structures came along (MRIs), investigators discovered that antipsychotics cause morphological changes in the brain and that these changes are associated with a worsening of both positive and negative symptoms, and with cognitive impairment as well. 
Finally, for the most part, the psychiatric researchers who conducted these studies hoped and expected to find the reverse. They wanted to tell a story of drugs that help schizophrenia patients fare well over the long term—their bias was in that direction.

We are trying to solve a puzzle in this book—why has the number of disabled mentally ill soared over the past fifty years—and I think we now have our first puzzle piece in hand. We saw that in the decade before the introduction of Thorazine, 65 percent or so of first-episode schizophrenics would be discharged within twelve months, and the majority of those discharged would not be rehospitalized in follow-up periods of four and five years. This was what we saw in Bockoven’s study, too: Seventy-six percent of the psychotic patients treated with a progressive form of psychosocial care in 1947 were living successfully in the community five years later. But, as we saw in Harrow’s study, only 5 percent of schizophrenia patients who stayed on their drugs long-term ended up recovered. That is a dramatic decline in recovery rates in the modern era, and older psychiatrists, who can still remember what it was like to work with unmedicated patients, can personally attest to this difference in outcomes.

“In the nonmedication era, my schizophrenic patients did far better than do those in the more modern era,” said Maryland psychiatrist Ann Silver, in an interview. “They chose careers, pursued them, and married. One patient, who had been called the sickest admitted to the adolescent division [of her hospital], is raising three children and works as a registered nurse. In the later [medicated] era, none chose a career, although many held various jobs, and none married or even had lasting relationships.”

We can also see how this drug-induced chronicity has contributed to the rise in the number of disabled mentally ill. In 1955, there were 267,000 people with schizophrenia in state and county mental hospitals, or one in every 617 Americans. Today, there are an estimated 2.4 million people receiving SSI or SSDI because they are ill with schizophrenia (or some other psychotic disorder), a disability rate of one in every 125 Americans.59 Since the arrival of Thorazine, the disability rate due to psychotic illness has increased fourfold in our society.

Cathy, George, and Kate

In the second chapter, we met two people—Cathy Levin and George Badillo—who had been diagnosed with schizoaffective disorder (Cathy) or schizophrenia (George). We can now see how their stories fit into the outcomes literature.

As I said, Cathy Levin is one of the best responders to atypical antipsychotics that I’ve ever met. She could be Janssen’s poster girl for promoting Risperdal. Still, she remains on SSDI and she perceives the medications as a barrier to her working full-time. Now let’s go back to that moment when she had her first psychotic episode at Earlham College. What might her life have been like if she had not been immediately placed on neuroleptics, but instead had been treated with some form of psychosocial care? Or if, at some point early on, she had been encouraged to withdraw gradually from the antipsychotic medication? Would she have cycled in and out of hospitals for the next twelve years? Would she have ended up on SSDI? Although we can’t really answer those questions, we can say that the drug treatment increased the likelihood that she would suffer that long period of constant hospitalizations, and decreased the likelihood that she would fully recover from her initial crackup. As Cathy said: “The thing I remember, looking back, is that I was not really that sick early on. I was really just confused.”

Meanwhile, George Badillo’s story illustrates how getting off meds can be the key to recovery, at least for some people diagnosed with schizophrenia. His journey out of the back wards of a state hospital began when he started tonguing his antipsychotic medication. He is healthy today, he has an evident zest for life, and he revels in being a good father to his son and having his daughter Madelyne back in his life. He is an example of the many recovered people who showed up in the long-term studies by Harding and Harrow—former patients who have quit taking antipsychotics and are doing well.

Here is a third story of a young woman I’ll call Kate, as she did not want her real name used. Diagnosed with schizophrenia at age nineteen, she did well on antipsychotics. In Harrow’s study, she would have been among the 5 percent on meds who recovered. But she also knows what it is like to be off meds and doing well, and from her perspective, the latter type of recovery is totally unlike the first.

Before I met Kate in person, I knew from a phone conversation the bare outlines of her story, of how she had spent ten years on antipsychotics, and given that those drugs can take such a physical toll, I was a bit startled by her appearance when she showed up at my office. To be blunt, the words “drop dead gorgeous” popped into my head. A dark-haired woman, she wore jeans, a roseate top, and light makeup, and she introduced herself in a confident, warm way. Soon, she was showing me a “before” picture taken three years earlier. “I was well over two hundred pounds,” she says. “I was very slow, my face was droopy. I smoked a lot of cigarettes…. It was very inhibiting to any sort of professional look.”

Kate’s story about her childhood is a familiar one. Her parents divorced when she was eight, and she remembers herself as socially awkward and horribly shy. “I only had social skills enough to interact with my family members,” she says, and that awkwardness followed her to college. During her freshman year at the University of Massachusetts at Dartmouth, she found it difficult to make friends, and she felt so isolated that she cried constantly. Early in her sophomore year she dropped out and went to live with her mother in Boston, hoping to find a “purpose in life.” Instead, “my sense of reality started to disintegrate,” she recalls. “I started worrying about God versus the devil, and I started becoming afraid of everything. I’d say to my mom’s friend, ‘Is the food poisoned?’ I was acting quite bizarre, and I couldn’t make sense of the conversations around me. I would say these very odd things, and I would speak very slowly, very deliberately, and weird.”

When she began talking about seeing wolves in her bedroom, her mother put her in the hospital. Although she stabilized pretty well on the antipsychotic medication, she hated how it made her feel, and not long after she was discharged, she abruptly went off it, which triggered a florid psychotic break. During her second hospitalization, in February 1997, she was diagnosed with schizophrenia, and this time she accepted the fact that she would have to take antipsychotics for life. Eventually, she found a two-drug combination that worked well for her, and she began rebuilding a life. In 2001, she graduated from UMass Boston, and a year later she married a man she had met in a day treatment program. “We both had a psychiatric disability, and we both smoked heavily,” she says. “We both saw therapists daily. This is what we had in common.”

Kate took a job in a group home for the mentally handicapped, and although at times she had trouble staying awake, a side effect of her medications, she earned enough to get off SSDI. For a person with schizophrenia, she was doing extremely well. Yet she wasn’t happy. She had gained nearly one hundred pounds, and her husband often cruelly taunted her, telling her that she was “ugly” and had a “fat ass.” She chafed too over how everybody in the system treated her. “Recovery on the med model requires you to be obedient, like a child,” she explains. “You are obedient to your doctors, you are compliant with your therapist, and you take your meds. There’s no striving toward greater intellectual concerns.”

In 2005, she grew closer to a longtime friend, who was twenty years older and belonged to a fundamentalist religious community. She began attending their meetings, and they in turn began advising her to dress, speak, and present herself to the world in a more formal way. “They told me, ‘You are representing God, and you don’t want to bring shame to God,’” she says. Kate’s older friend also urged her to stop thinking of herself as schizophrenic. “He’s making me think outside the box, and to think in ways that before I never would have accepted. I would always defend my therapist, defend my psychiatrist, defend the drugs, and defend my illness. He was asking me to give up my identity as a mentally impaired person.”

Soon, her old life fell completely apart. She discovered that her husband had been sleeping with one of her friends, and after she moved out of their apartment, she had to sleep for a time in her car. Although at first, during that desperate time, she clung to her meds, the nonschizophrenic vision of herself also beckoned, and in February of 2006, she decided to take the leap: She would stop smoking, she would stop drinking coffee, and she would wean herself from her psychiatric medications. “Now I have no drugs, no nicotine, and no coffee, and my body is going into shock. I am coming down from all of this, and I am almost vibrating because I need my cigarettes, my drugs.”

This decision also put her at odds with most everyone in her life. “I stopped talking to my family, because I didn’t want to go back into that identity [of a disabled person]. My mind was very delicate. So I had to disengage from what I knew, and disengage from my therapist.” Soon, she was losing so much weight that her friends thought she must be sick. As she struggled to stay sane, she clung to the advice from her religious group, speaking to others in a very formal manner, and this behavior convinced her mother that she was relapsing. “Strange ain’t the word, honey” is how her mother puts it, and even Kate privately feared that she was becoming psychotic again. “But I had this hope, this faith, and so I said to myself, ‘I am going to walk this tightrope across this horrible canyon, and hopefully when I get to the other side, there will be a mountain ridge I can stand on.’ I had to focus on going forward regardless of where it took me, because if I fell off the tightrope, I was back in the hospital.”

It was at that perilous moment, when it seemed that she was about to crash, that Kate agreed to meet her mother for dinner. “I think she is having a breakdown,” her mother says. “She sat very proper, and looked scattered and disorganized. Her body was stiff. I was seeing a lot of the same symptoms as before. Her eyes were dilated and she seemed paranoid.” As they drove away from the restaurant, Kate’s mother started to turn toward the hospital, but at the last second she changed her mind. Kate “wasn’t so crazy” that she needed to be locked up. “I went home and cried,” her mother remembers. “I didn’t know what was happening.”

By her mother’s reckoning, it took Kate six months to get through this withdrawal process. But she emerged on the other side transformed. “I see that her face is so alive now and she is more connected to her body,” her mother says. “She feels comfortable in her own skin and more at peace with herself than ever. She is physically healthy. I didn’t know that this kind of recovery was possible.” In 2007, Kate married the older man who had encouraged her to go this route; she also has thrived in her job as the manager of a home for people with psychiatric problems, the company recognizing her for her “outstanding” performance in 2008, an award that came with a cash prize.

Kate does still struggle at times. The home she manages provides shelter to several men who are sexual deviants—“I’ve had people say they are going to set me on fire, or they are going to pee in my mouth,” she says—and she no longer is having her emotional responses to such stress numbed by medication. “I’ve been off the drugs for two years, and sometimes I find it very, very difficult to deal with my emotions. I tend to have these rages of anger. Did the drugs bring such a cloud over my mind, make me so comatose, that I never gained skills on how to deal with my emotions? Now I’m finding myself getting angrier than ever and getting happier than ever too. The circle with my emotions is getting wider. And yes, it’s easy to deal with when you’re happy, but how do you deal with it when you are mad? I’m working on not getting overly defensive, and trying to take things in stride.”

Kate’s story, of course, is idiosyncratic in kind. Her success at getting off meds does not mean that everyone can successfully withdraw from them. Kate is an amazing person—incredibly willful and incredibly brave. Indeed, what the scientific literature reveals is that once a person is on an antipsychotic, it can be very difficult and risky to withdraw from the medication, and that many people suffer severe relapses. But the literature also reveals that there are people who can successfully withdraw from the medications and that it is this group that fares best in the long term. Kate made it into that group.

“That day in 2005 when I decided to get better, that’s the dividing line in my life,” she says. “I was a completely different person then. I was very heavy, I smoked all the time, I had flat affect. Today I run into people who knew me then, and they don’t even recognize me. Even my mother says, ‘You are not the same person.’”

* During this period, schizophrenia was a diagnosis being broadly applied to those being hospitalized. Many of these patients would be diagnosed as bipolar or schizoaffective today. Still, this was the diagnosis for the most “seriously disturbed” people in American society at that time.

* In 2007, the Cochrane Collaboration, an international group of scientists that doesn’t take funding from pharmaceutical companies, raised questions about this short-term efficacy record. They conducted a meta-analysis of all chlorpromazine-versus-placebo studies in the scientific literature, and after identifying fifty of decent quality, they concluded that the advantage of drug over placebo was smaller than commonly thought. They calculated that seven patients had to be treated with chlorpromazine to produce a net gain of one “global improvement,” and that “even this finding may be an overestimate of the positive and an underestimate of the negative effects of giving chlorpromazine.” The Cochrane investigators, somewhat startled by their results, wrote that “reliable evidence about [chlorpromazine’s] short-term efficacy is surprisingly weak.”

* There is an evident flaw with Gilbert’s meta-analysis. She didn’t determine whether the speed with which drugs were withdrawn affected the relapse rate. After her study appeared, Adele Viguera at Harvard Medical School reanalyzed the same sixty-six studies and determined that when the drugs were gradually withdrawn, the relapse rate was only one-third as high as in the abrupt-withdrawal studies. The abrupt-withdrawal design in the majority of the relapse studies dramatically increased the risk that the schizophrenia patients would become sick again. Indeed, the relapse rate for gradually withdrawn patients was similar to what it was for the drug-maintained patients.

* In the early 1960s, Philip May conducted a study that compared five forms of in-hospital treatment: drug, electroconvulsive therapy (ECT), psychotherapy, psychotherapy plus drug, and milieu therapy (a supportive environment). Over the short term, the drug-treated patients did much better. As a result, the study came to be cited as proof that schizophrenia patients could not be treated without drugs. However, the two-year results told a more nuanced story. Fifty-nine percent of patients initially treated with milieu therapy but no drugs were successfully discharged in the initial study period, and this group “functioned over the follow-up at least as well, if not better, than the successes from the other treatments.” Thus, the May study, which is usually cited as proving that all psychotic patients should be medicated, in fact suggested that a majority of first-episode patients would fare best over the long term if initially treated with milieu therapy rather than drugs. Source: P. May, “Schizophrenia: a follow-up study of the results of five forms of treatment,” Archives of General Psychiatry 38 (1981): 776–84.