CHAPTER 4

BEFORE PERESTROIKA TRANSFORMED the socialist world, it swept across the capitalist world to profound effect.1 That, at least, was the view of Soviet officials in the 1980s. In 1983, for instance, officials from the Soviet state bank, Gosbank, wrote that the International Monetary Fund (IMF) required debtor countries to carry out “a perestroika of the economy” before it would grant the debtor states financial relief.2 For Soviet analysts, the word “perestroika”—translated most directly in English as “restructuring”—was so intimately tied to processes of capitalist economic reform that it even anchored the Russian phrase (strukturnaya perestroika) for “structural adjustment,” the loaded idiom the IMF used to describe the promarket reforms it attached as conditions of its financial aid.3 Even after Mikhail Gorbachev had claimed “perestroika” as his own and instilled it with particular socialist and Soviet meanings, both he and Soviet officials continued to use the word to describe economic change in the capitalist world. As we have seen, Gorbachev told his Politburo in 1987 that many capitalist countries in Western Europe, including Great Britain under Margaret Thatcher, were “also carrying out a perestroika.”4 And officials from the Soviet Union’s influential United States and Canada Institute echoed their leader’s judgment. The “current structural perestroika of the economy of developed countries has far-reaching consequences,” they wrote in 1989.5

Indeed it did. This chapter looks at the causes and consequences of the perestroika that swept the capitalist world in the early 1980s. The capitalist perestroika included three processes. First, US Federal Reserve chairman Paul Volcker launched the capitalist perestroika through his shock to US dollar interest rates from 1979 to 1983. We saw earlier the onset of the Volcker Shock and will now carry the story forward from 1980. Throughout the capitalist world, the Volcker Shock set off a wave of deflation, bankruptcies, and unemployment on a scale without precedent in the postwar period. This wave of broken promises, in turn, produced a variety of long-term disciplinary effects in economies throughout the Western world. In the United States, capital firmly regained the upper hand over labor, wages permanently fell behind productivity growth, and inequality dramatically increased. In Western Europe, governments did more to protect labor rights and income equality, but unemployment rose steeply and remained high for the remainder of the twentieth century. Most importantly, while the East remained a world of heavy industry, Western governments embraced deindustrialization and shrank the size of their industrial working classes. In total, Western governments after 1979 provided their citizens with some combination of the rising incomes, job security, and full employment that had defined the politics of making promises. But they could no longer provide all three at the same time. The promise of the postwar social contract was broken.

Second, in the early 1980s, Ronald Reagan unwittingly but fundamentally altered the flow of capital in the global economy by transforming the United States from a net exporter of capital to the world’s largest debtor nation. Reagan’s unyielding pursuit of both the largest tax cuts and the largest peacetime military buildup in the nation’s history created massive US budget and current account deficits. In defiance of all expectation, these deficits were funded by a deluge of foreign capital. Capital poured into the United States in the 1980s at a rate previously unimaginable. This “Reagan financial buildup” erased the US government’s traditional choice between guns and butter and underwrote the renewal of economic growth at home and the projection of American power abroad. The same policy instrument that disciplined American workers—high interest rates—held the key to unlocking these capital inflows from abroad, so only by breaking promises at home did the United States renew its power in the world.

Third, the capitalist perestroika ended the economic interdependence of the 1970s and initiated a new era of Western economic leverage over the rest of the world. The Reagan financial buildup produced this fundamental transformation in the world’s political economy. The buildup not only enhanced the projection of American power by funding the military buildup, but it also fundamentally altered the geopolitics of the decade by preventing the flow of capital to other countries. The more the United States monopolized the world’s surplus capital, the harder other governments found it to attract capital to their countries. Capital scarcity, in turn, altered the power relations between lenders and borrowers in the world economy and allowed the Reagan administration and the IMF to impose their economic vision on the rest of the world. The sovereign debt crisis that followed initially threatened to bring down the entire global financial system because debtor and creditor countries found themselves in a state of mutually assured financial destruction—if the debtor nations defaulted, the creditor nations would plunge into economic depression. But the leaders of the global financial system at the Federal Reserve, the IMF, and within the US government adroitly unwound this interdependence and made debtor countries fully dependent on their creditors. In so doing, Western policy makers discovered how to use sovereign debt as leverage, and through debt, they began to impose neoliberal economic programs on countries around the world.

The cumulative effect of the capitalist perestroika on both the United States and the world was profound. In the five short years from Volcker’s appointment in 1979 to Reagan’s reelection in 1984, the United States went from a country beset by financial limits, international interdependence, and domestic government weakness to a country with no discernable material limits that wielded the leverage of financial dependence and military superiority over the rest of the world. Unlike the Soviet government under its own perestroika, the US government renewed its power during the capitalist perestroika by disciplining its own citizens and tapping the wellspring of foreign capital that has sustained the American imperium ever since.


Though Paul Volcker announced the Federal Reserve’s move to monetarism in November 1979, he held the brunt of his attack on inflation in reserve until after the 1980 presidential election was complete. Volcker was fully aware of the deleterious effects higher interest rates would have on the real economy, and he did not want to be perceived as having tipped the election against Jimmy Carter by deepening the country’s economic woes on the eve of the vote. So he waited. And with Ronald Reagan’s election in November 1980, he finally had the opportunity he had been waiting for.

In choosing Reagan, the American people consciously elected someone who was committed to fighting inflation, even if it came at the price of working-class jobs. In fact, 42 percent of union households pulled the lever for Reagan at the ballot box.6 Frustrated by a decade of economic crisis and attracted to the religious and cultural appeals of Reagan’s “New Right,” many white, working-class voters who had traditionally formed the cornerstone of the Democrats’ New Deal coalition were willing to give Reagan’s promarket, antigovernment conservatism a chance. These so-called Reagan Democrats delivered the Republican candidate an Electoral College landslide, as Reagan defeated Carter by a staggering 489–49 margin.7

Volcker believed that the months immediately following Reagan’s victory presented the country with a “rare opportunity” to “come to grips, in a fundamental and decisive way, with the inflationary problem.”8 As he told Congress in the early days of January 1981, “the fact is we now have one of those rare opportunities to marshal a national consensus” in prioritizing inflation over the traditional priorities of full employment and economic growth.9 It was an opportunity he was sure not to miss. For the next year and a half, US dollar interest rates breached 20 percent for weeks on end and never dipped below 15 percent.10 Even after Volcker began to ease rates in the second half of 1982, his determination to vanquish any hint of inflationary expectations led the Federal Reserve to keep interest rates unprecedentedly high for the remainder of the decade. After languishing below zero for most of the 1970s, real interest rates (nominal interest rates minus inflation) skyrocketed to 5 percent in the early 1980s and remained staunchly positive for the remainder of the twentieth century.11

As Volcker’s shock set in, the politics of making promises gave way to the politics of breaking promises across the country. Factories closed, unemployment lines swelled, and the nation’s industrial backbone shuttered out of existence. Between 1979 and 1983, fixed investment in manufacturing in the United States plummeted at its steepest rate on record, and employment in durable goods manufacturing declined by over two million jobs.12 Real output contracted 3.3 percent, and unemployment reached a postwar peak of 10.8 percent.13 Even when national economic growth returned in 1983, the good old days for American industry did not. In 1983, for instance, U.S. Steel, a bellwether of America’s industrial might, announced it was shutting down nearly 20 percent of its capacity and laying off 15,000 workers. This was just one bleak moment in a broader decade of despair for the American steel industry, which saw the employees in its ranks fall from 450,000 at the start of the 1980s to 170,000 at the decade’s end.14

For Reagan, Volcker’s monetary discipline was a necessary antidote to what he perceived to be the profligacy of the politics of making promises. The high taxes, regulation, and social spending of the postwar period had, he believed, destroyed Americans’ incentives to work hard and demolished American companies’ reasons to invest in new equipment and expand production. His rhetoric suggested the economy needed to be freed from the shackles of the government. “Government is not the solution to our problem; government is the problem,” he famously told the country in his first inaugural address.15 In place of the promissory paradigm of the postwar period, Reagan embraced supply-side economics, a school of thought that proposed to solve the riddle of stagflation by cutting taxes and government regulation in order to unleash a new economic boom. Why work hard and invest in the future, supply-siders asked, if the government was just going to confiscate the fruits of success through taxation? How could companies and individuals take risks and chase innovation when the government increasingly regulated every facet of the marketplace? Amid the stagnant production, nosediving productivity growth, and low savings and investment totals of the 1970s, these questions had begun to resonate, and with Reagan’s election, they prevailed. Supply-siders confidently assured the country that individuals would save more, businesses would invest more, and the economy would grow more if taxes and regulations were cut back.

Once in government, these convictions led the Reagan economic team to embrace a cavalcade of deregulation already well underway. In the last years of the Carter administration, bipartisan efforts to deregulate most facets of the American economy had gained traction. By the early years of Reagan’s term, rules controlling competition and pricing in sectors ranging from airlines and railroads to telecommunications and trucking had been abolished. The financial sector soon followed suit. In a pair of laws passed in 1980 and 1982, Congress removed all interest rate caps on bank deposits, deregulated the savings and loan industry, and allowed banks to lend at variable rates. In one sector after another, lawmakers limited the government’s power to moderate and regulate the marketplace. All told, the percentage of the economy subject to some kind of price regulation dropped from 17 percent in 1977 to 6.6 percent in 1988.16

To this burgeoning trend of deregulation, Reagan added his own dash of anti-labor militancy. After the federal minimum wage had increased 127 percent in real terms from 1950 to 1980, it fell 26 percent in real terms while Reagan was in office.17 In his first year in office, he made it more costly for workers to defend their economic position by declaring those who went on strike ineligible for food stamps.18 And in the summer of 1981, he publicly signaled his intention to discipline American organized labor by abruptly firing 11,000 members of the Professional Air Traffic Controllers Organization (PATCO) who had walked off the job on strike and banning them from federal employment for life. Both labor and management quickly viewed PATCO’s demolition as a turning point in the country’s labor relations. President of the AFL-CIO (American Federation of Labor and Congress of Industrial Organizations) Lane Kirkland described Reagan’s heavy-handed response as having the “massive, vindictive, brutal quality of the carpet bombing.”19 By the letter of the law, Reagan’s action was perfectly legal, but it was a step few corporate leaders had been willing to take since the New Deal.20 Now corporate leaders were quick to take their cue. “Managers,” Fortune magazine reported in November 1981, “are discovering that strikes can be broken . . . and that strikebreaking . . . doesn’t have to be a dirty word.”21 Broad changes in public sentiment drove the removal of this taboo. Reagan’s action was popular, even among union members: 64 percent of the country supported the president’s decision, including 52 percent of those in unions.22 As in Britain, the American public clamored for an end to economic crisis and malaise, even if it came at the expense of organized labor.

The combination of the Volcker Shock’s wave of mass unemployment, the rising tide of deregulation, and Reagan’s firing of the air traffic controllers broke the resistance of American labor to economic discipline. Rather than fight for further gains, many workers simply rushed to secure what they already had. In 1982, for instance, the International Brotherhood of Teamsters—a potent union that many feared could bring the economy to a halt with a national truckers’ strike—agreed to a three-year freeze in wages for more than 300,000 long-haul drivers.23 Across the nation, the number of days workers spent on strike plummeted. During the heyday of the politics of making promises from 1950 to 1973, the number of large strikes per year averaged 325. But from 1982 to 1990, that number fell to roughly 60. As unions’ militancy dissipated, so did their presence in the workplace. Though unionized workers were 21 percent of the private sector labor force in 1979, they only comprised 12 percent a decade later.24 A less organized labor force was also a less well-compensated one. Real hourly compensation in the private economy flatlined during the 1980s, growing at an average annual rate of just 0.1 percent from 1979 to 1990.25

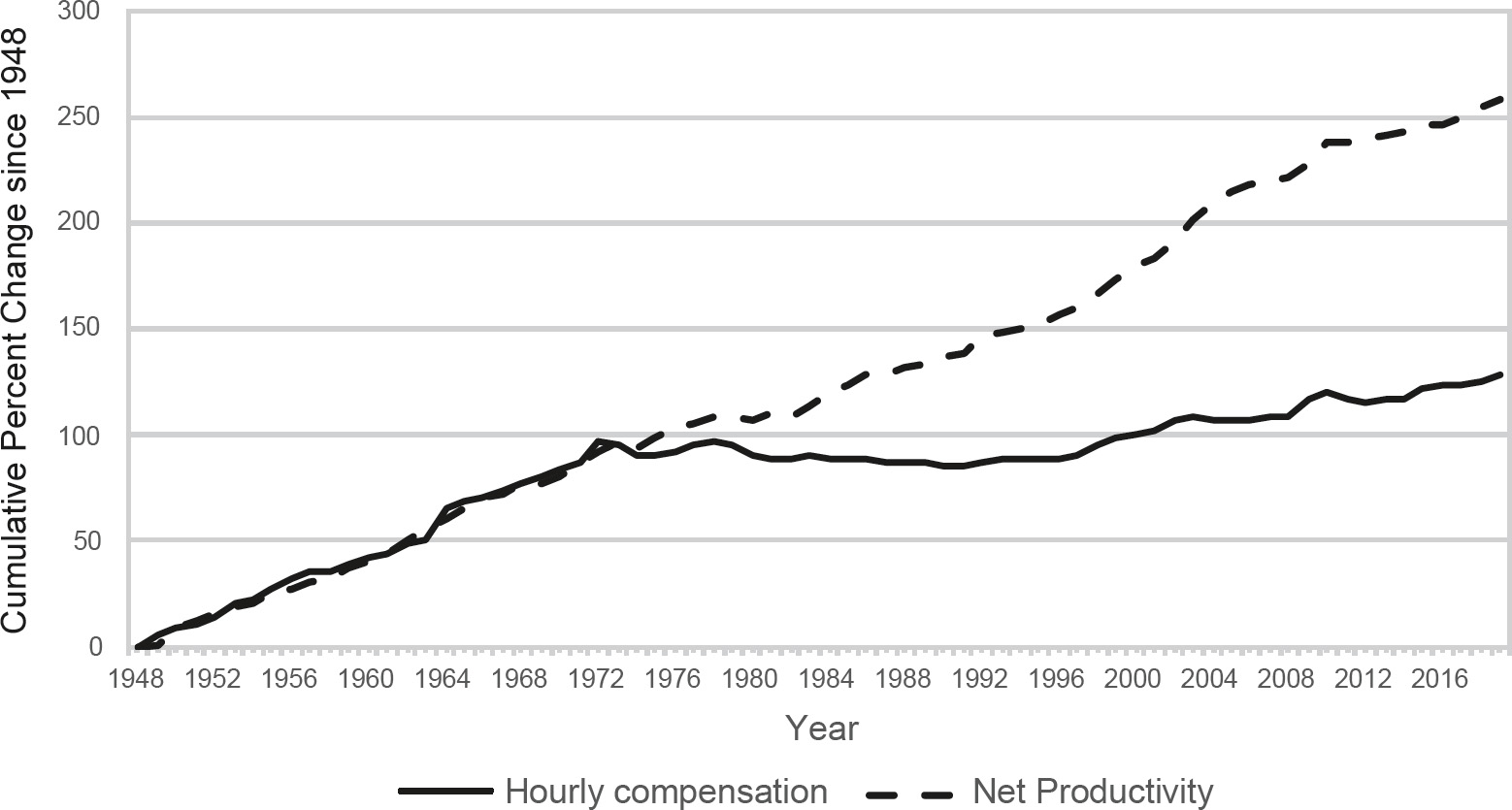

In total, the early 1980s marked a sea change in the compensation the American working class received for its labor. As shown in Figure 4.1, workers’ productivity and hourly compensation in the United States had moved in unison from the start of the postwar period until the early 1970s. The economic crises of the 1970s put a dent in this relationship, but it was only with the Volcker Shock, the deregulation push of the late 1970s, the rising import competition of the early 1980s, and the empowerment of management over labor in Reagan’s first term that wages and productivity became permanently severed. The average American worker saw virtually no increase in real wages from 1979 to the end of the century.26

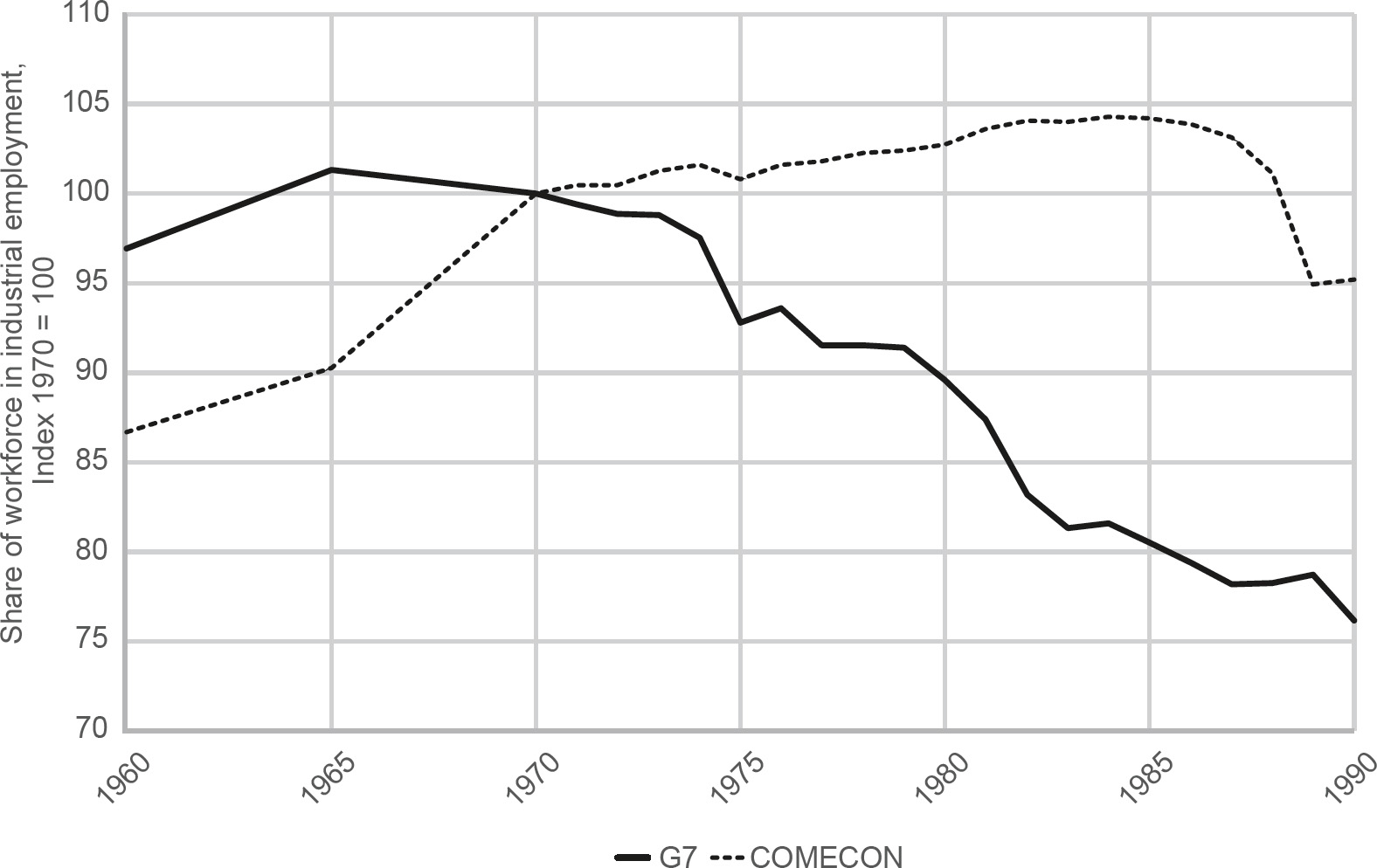

American workers were far from the only ones to bear the burden of high interest rates. The Federal Reserve’s actions forced other central banks around the world to dramatically increase interest rates in order to protect their currencies. West German chancellor Helmut Schmidt, therefore, had a right to complain when he lamented that Volcker had caused interest rates in Europe to rise to their highest levels “since the birth of Jesus Christ.”27 High real interest rates, in turn, squeezed excess capacity and labor out of all the industrial economies of the West with ruthless effectiveness. After falling below 2 percent on the eve of the first oil crisis, unemployment across Western Europe ballooned to over 10 percent in the mid-1980s and remained over 8 percent well into the 1990s.28 The potent combination of high unemployment and high interest rates put a very low ceiling on wage growth. After rising 3–5 percent per year in the 1960s and early 1970s, real wages in Western Europe and Japan grew only about 1 percent per year in the 1980s and 1990s.29 As in the United States, employment in manufacturing and heavy industry was hit particularly hard. Even in an industrial powerhouse like West Germany, work hours in manufacturing fell by 10 percent from 1979 to 1985, and the growth of unit labor costs was cut in half during the 1980s.30 The dismantling of the industrial working classes was broad based across Western countries and proved to be a decisive difference between democratic capitalism and state socialism. As Figure 4.2 shows, while Eastern Bloc governments continued to add workers to heavy industries in the 1980s, Western governments were able to deindustrialize their workforces.

Figure 4.1 The divergence between American productivity growth and average hourly compensation.

Data source: Economic Policy Institute (EPI) analysis of unpublished Total Economy Productivity data from Bureau of Labor Statistics (BLS) Labor Productivity and Costs program, wage data from the BLS Current Employment Statistics, BLS Employment Cost Trends, BLS Consumer Price Index, and Bureau of Economic Analysis National Income and Product Accounts. “The Productivity–Pay Gap,” EPI, accessed June 21, 2021, https://www.epi.org/productivity-pay-gap/.

Much of this “de-manning,” as it was often called, occurred through the privatization of state-owned assets and enterprises. Few other Western leaders matched Thatcher’s and Reagan’s millenarian zeal for neoliberal solutions, but that did not stop them from embracing privatization as a means of making their countries’ legacy industries leaner and more competitive. Over the 1980s, a veritable wave of privatization swept across Western economies.31 Everything from Dutch airlines to Japanese telecom companies to German electric utilities found its way into private hands. Even the socialist government of Spain joined the action. In the late 1980s, some eighty Spanish companies were privatized, a number that would only grow larger by the end of the century.32 When Gorbachev told the Politburo in 1987 that Spanish prime minister Felipe González was among the Western leaders “also carrying out a perestroika” of their economies, he was referring to a socialist presiding over the full-scale privatization of an economy where almost 20 percent of Spaniards were unemployed.33

Figure 4.2 The rise and fall of industrial workforces in the East and West.

Reformatted with permission of University of Chicago Press Journals from Maximilian Krahé, “TINA and the Market Turn: Why Deindustrialization Proceeded under Democratic Capitalism but Not State Socialism,” Critical Historical Studies, 8:2, Fall 2021, Figure 1; permission conveyed through Copyright Clearance Center, Inc.

As Western governments pulled back from owning the means of production, they did not effect a similar decline in the size of their welfare states. The large increase in unemployment even slightly increased social spending across the OECD (Organization for Economic Cooperation and Development) from 14.5 percent to 16.5 percent of GDP during the 1980s.34 But high interest rates and globalized financial markets did place strict limits on how much public welfare and state intervention Western leaders could pursue in their domestic economies. Global capital holders tolerated the survival of the welfare state as a way of providing basic necessities for the workers made redundant by economic restructuring, but they did not tolerate any expansion of the state’s role in regulating the economy or providing economic and social security for its citizens.

These new limits became most starkly apparent in France. In 1981, French voters bucked the conservative trend spreading through the Anglo-American world and elected the socialist François Mitterrand president. The election of Mitterrand, the first directly elected socialist head of state in Europe, was a historic achievement for the European Left. Socialists within France and around Europe viewed his victory as a long-awaited chance to use the reins of state power to institute a “rupture with capitalism” and bring the economy fully under government control.35 Mitterrand’s campaign platform contained all the hallmarks of the politics of making promises: industries would be nationalized, government jobs would be created, and the welfare state would be resolutely expanded. In a governing coalition with the French Communist Party, the Socialists quickly delivered on these promises in their first year in office. Public spending jumped 27 percent, and the government borrowed money to raise wages and pensions, lower the retirement age, and reduce work hours. Thirty-eight banks and financial companies, five of France’s largest industrial corporations, and the country’s two giant iron and steel conglomerates were nationalized.36 And for a brief moment, the politics of making promises in the West reached their postwar apogee.

But capital holders did not like what they saw, and they wasted little time in signaling their displeasure. As inflation, spending, and taxes on wealth increased within France, investors did everything they could to get their money out of francs. Capital flight forced French authorities to devalue the currency in October 1981 and June 1982 and caused Mitterrand to begin his own search for new economic thinking. In support of the second devaluation, he announced what became known as his “U-turn”: a domestic austerity package of wage and price freezes, tax increases, and spending cuts that directly contradicted the thrust of his earlier policies. But with industry still nationalized and communists still in the government, this austerity did little to restore capital’s confidence. Faced with the prospect of further devaluations and capital flight, Mitterrand abandoned his socialist crusade completely in 1983 and embraced a policy of rigueur, a code word for prioritizing price stability and fiscal austerity over socialist domestic policy. By the spring of 1984, the communists were gone from the government, and Mitterrand was calling for a modernization of the French economy à l’américaine.37 Over the remainder of the decade, the government joined the privatization craze sweeping the West and led the global charge to eliminate national capital controls and give finance free rein to roam across state borders.38

Thus, we can conclude that although the size of the Western welfare state remained largely unchanged in the 1980s, its meaning in Western societies changed dramatically. The postwar welfare state had emerged as part of Western governments’ broader commitment to regulate the economy in pursuit of higher living standards and equitable distribution across their societies. But its persistence in the 1980s signaled a trimming of government’s aspirations. As high unemployment or stagnant incomes became enduring failings of many Western economies, the welfare state became an ally of the politics of breaking promises by providing subsistence to the millions of workers deindustrialization had made superfluous. Indeed, in a place like Britain, the government actively encouraged healthy people to apply for disability benefits simply so they would not show up in the state’s unemployment numbers.39 As governments’ ability or willingness to raise wages and ensure employment diminished, so too did citizens’ confidence in the state’s power to shape the economy toward just and prosperous ends. As Tony Judt has written, there was a “cumulative unravelling” in the Western world during the 1980s of the postwar assumption “that the activist state was a necessary condition of economic growth and social amelioration.”40 No longer self-confident architects of equality and progress, Western governments became tools for socializing the human costs of economic restructuring.


The static size and diminished expectations of government were nowhere more evident than in the United States. Despite his assertive rhetoric about getting the government out of Americans’ lives, Ronald Reagan failed miserably to shrink the size of the American state. “The true Reagan Revolution never had a chance,” David Stockman, Reagan’s first budget director, wrote in his 1986 memoir, The Triumph of Politics: How the Reagan Revolution Failed. What started as an “ideas-based” movement to create “minimalist government” turned into “an unintended exercise in free lunch economics,” Stockman wrote. Reagan’s tax cuts and military spending increases combined with the government’s inability to reduce domestic spending “unleashed” a “massive fiscal error . . . on the national and world economy.”41 The Reagan Revolution was “only a half-revolution—and a fiscal disaster.”42

The budget director’s views had not always been so dour. Stockman burst onto the American political scene in the late 1970s as an ardent proponent of supply-side economics, and he became Reagan’s budget director with the firm intention of cutting taxes and shrinking the size of the state. A central tenet of at least the rhetoric of supply-side economics was that tax cuts would pay for themselves by spurring a boom in economic growth. In the first budget forecast of the Reagan presidential campaign in August 1980, Stockman appeared to provide proof of this tenet by producing budget projections that led to a budget surplus in 1985. These projections included Reagan’s plan for a 30 percent cut in income tax rates, a 7 percent annual increase in military spending, and no significant cuts in entitlements. Stockman would later call these projections “neither logical, careful, nor accurate within a country mile,” but he achieved such a logic-defying budget miracle because of the dramatic effect of inflation on tax revenue through a process known as “bracket creep.” As the inflation of the 1970s raised Americans’ incomes, it also pushed them into new tax brackets, which led them to pay a higher share of their income in taxes.43 Critics ranging from Jimmy Carter in the White House to George H. W. Bush in the Republican presidential primary painted Reagan’s program as inflationary “voodoo economics” that would explode the budget deficit. But with double-digit inflation showing no sign of slowing down, Reagan’s economic team could produce budget projections to refute the charge. Most importantly, the more the Reagan team told itself and the country that it could cut taxes, increase military spending, and balance the budget, the more they actually believed such a combination was possible.44 Reagan sincerely proclaimed, in the early moments of his first inaugural address, “For decades we have piled deficit upon deficit, mortgaging our future and our children’s future for the temporary convenience of the present.” Normal citizens, he said, “can, by borrowing, live beyond our means, but for only a limited period of time. Why, then, should we think that collectively, as a nation, we’re not bound by that same limitation?”45

Before 1981, there was little reason to think the nation was not bound by the same limitation. But the events of Reagan’s first term would permanently alter this line of thinking. Change began with Volcker. As the Federal Reserve’s skyrocketing interest rates brought the liquidity engine of the 1970s to a screeching halt, inflation began an unexpectedly precipitous retreat—from a high of 13.5 percent in 1980 to 3.9 percent in 1982. The economic engine of bracket creep that had fueled Stockman’s forecasts quickly evaporated.

As it did, Congress passed the Economic Recovery Tax Act (ERTA), which included a 25 percent reduction in personal income tax rates over three years, a significant cut in the capital gains tax, and an enormous reduction in corporate taxes through extremely favorable changes in depreciation rules. Combined with financial innovations taking place on Wall Street, including the creation of the collateralized mortgage obligation, the ERTA created two economic conditions that would define the Reagan financial buildup—an extremely hospitable environment for debt investments and an unprecedented hole in federal tax revenues. In retrospect, the Reagan economic team estimated that the ERTA reduced the effective tax rate on capital by 50 percent,46 and the Office of Management and Budget calculated the cumulative federal revenue loss of the ERTA over the course of the 1980s at almost $1.5 trillion.47

This decline in tax revenue only became apparent once Volcker’s sky-high interest rates had their unexpectedly quick effect on inflation. As inflation began its steep decline, administration officials ran new budget projections in the fall of 1981 based on lower inflation and economic growth numbers. They discovered, to their surprise, that the fiscal policy they had just enacted would produce, in Stockman’s memorable formulation, “deficits as far as the eye can see.” The new projections were “horrifying,” Stockman recalled. They “showed cumulative red ink over five years of more than $700 billion. That was nearly as much national debt as it had taken America two hundred years to accumulate. It just took your breath away. No government official had ever seen such a thing.”48 In his diary, Reagan called the new deficit projections a “bomb.” He wrote with apparent surprise, “Inflation is a tax. We have brought down inflation so much faster than we anticipated that tax revenues will be lower than we figured.”49 By December 1981, he had resigned himself to the fact that “we who were going to balance the budget face the biggest budget deficits ever.”50

The question on everyone’s mind in the fall of 1981 was how these deficits could possibly be funded. In a closed national economy, economic theory posited that government budget deficits would harm the economy by “crowding out” private investment, driving up interest rates, and killing economic growth. No less an authority than Volcker himself firmly held to this position, and most of the administration’s own economists agreed.51 Larry Kudlow, a staff economist on the Council of Economic Advisers at the time, concluded at the beginning of 1982, “Excessive Federal and federally-assisted borrowing will compete with private demands, absorbing much needed capital resources and generating additional upward interest rate pressures.”52

The stakes of this funding question for Reagan’s presidency could not have been higher. If the massive deficits Reagan had created through tax cuts and military spending increases in his first year had crowded out domestic investment and killed off economic recovery in the remaining three years of his first term, the political effects on his presidency would have been resoundingly negative: he would have had to either retreat on his signature policy achievements and repeal his income tax cuts while cutting back on military spending or run for reelection in 1984 with the economy still mired in a deep recession. Put simply, if traditional economic theory had held true in the early 1980s, the Reagan Revolution itself would have been crowded out of the American political scene, and Reagan’s military buildup would have been an inconsequential blip on the radar of the long-running Cold War conflict.

By the early 1980s, however, glimmers of change in the global economy suggested that Reagan’s day of financial reckoning might forever be deferred. International capital flows were on the rise, and there were faint indications the United States was uniquely positioned in the global economy to use these flows for its lasting benefit. Governments had long faced a fundamental choice between guns and butter, but perhaps with the aid of the world’s capital, the United States could transcend that choice for good.

Of this, no one could be sure. But in the summer of 1981, some members of the Reagan administration were eager to find out. In June, the administration created the Cabinet Council Working Group on International Investment to review US policy toward foreign investment because it had become “an important and rapidly growing source of capital to the U.S. economy.”53 By the fall of 1981, the chairman of the Council of Economic Advisors was writing that “foreign portfolio flows are potentially useful in easing deficit financing pressures in domestic markets.”54 But this was far from certain, and smart minds across the political and economic spectrum doubted its feasibility.55 William Niskanen, a member of the Reagan economic staff, speculated to an audience at the American Enterprise Institute in December 1981 that “the opportunity to import capital” might save the economy from the harm of the deficits. But, he recalled, “the audience rejected the plausibility of net capital inflows in any substantial magnitude.”56

Throughout 1982, the question of the role of foreign capital in funding the US deficits lay dormant as the economy entered the deepest recession of the postwar period. In this gloomy economic climate, there was little danger of government borrowing crowding out private investment because there was little private investment to speak of. The public debate was instead about the future. The now widespread recognition that the ERTA would lead to unprecedented deficits led to loud political debates in 1982 and a number of piecemeal efforts to close the gap between revenues and expenditures, including the Tax Equity and Fiscal Responsibility Act of 1982 (TEFRA).57 Though accompanied by a great deal of consternation and fanfare, these efforts left the long-term picture basically unaltered: budget and current account deficits as far as the eye could see.

Something would have to give. And in 1983, Reagan administration officials began to notice that it was the old rules of economics, rather than their own policies, that were changing. Over the course of the year, they came to understand and tentatively embrace the fact that the newly globalized financial system would allow them to borrow foreign capital in far larger quantities than anyone had ever dreamed possible. The United States ran a current account deficit of $8 billion in 1982 and was projected to run one of $25 billion in 1983. This meant that after being a capital exporter for most of the postwar period, the United States had made the fundamental shift to being a net importer of capital from the rest of the world. Volcker’s high interest rates and Reagan’s tax cuts, labor discipline, and budget deficits had created a perfect storm of attractive conditions for capital, and foreigners were flocking to US markets in response.

The result was the so-called Super Dollar. From its nadir in the fall of 1978, the US dollar began a rapid and virtually constant ascent through 1985.58 The Super Dollar was a potent double-edged sword for the US economy. For many economists, exporters, and workers throughout the country, the dollar’s rise was a disturbing trend because it made foreign goods cheaper within the United States and priced American exports out of foreign markets. The higher the dollar rose, the more American workers lost their jobs, the more downward pressure was placed on wages, and the more businesses went bankrupt. But for capital holders and consumers, a rising dollar meant greater returns, more access to borrowed capital, and cheaper goods. And for the government, the rising dollar allowed US officials to solve their fiscal problems without having to raise taxes or cut spending to balance the budget. Foreign capital inflows transformed the hard budget constraints of traditional economic theory into a brave new world of no budget constraints at all.

Martin Feldstein, one of the most influential economists within the administration, noted in an internal memo in spring 1983 that the rising dollar was a “safety valve” that reduced the competition for capital and inflationary pressures within the United States. The United States could utilize this safety valve because the dollar had become “a portfolio asset for international investors.” Now that Volcker’s interest rate policy had restored capital holders’ confidence in the US capacity to impose discipline, foreigners were chasing dollar investments because of the currency’s central role in international trade and financial flows. As a result, Feldstein wrote, the administration did not need to worry in the short term about the country’s turn toward relying on foreign capital to meet its domestic needs. “A country cannot expect to go on running a current account deficit forever,” he wrote. “But why should we expect or want a current account balance every year?”59

Treasury Secretary Donald Regan very much agreed. In the summer of 1983, he established a number of working groups in the administration to study international capital flows as well as the country’s trade and current account deficits.60 The resulting studies cautiously embraced the new reality. “Traditionally the current account was thought to drive international transactions,” officials noted in September 1983. “Currently, however, there are reasons to believe the capital account is the driving force, as foreigners seek to invest in the United States. As they exchange their currencies for ours, the value of the dollar is pushed upward.” Foreigners sought out US financial markets because of the “marked reduction in inflation” in the United States and because US markets were “the largest and most liquid in the world.” This left the United States in a uniquely benign international position, officials tentatively believed: “If it is true that the rest of the world is intentionally seeking out the United States as a capital investment location, there could be a beneficial impact on U.S. credit and equity markets and a valuable supplement to domestic savings.”61

Volcker and his colleagues at the Federal Reserve fully understood the crucial role they played in unlocking the nation’s access to foreign capital. All the leading members of the Federal Reserve in the 1980s later admitted that attracting foreign capital to the United States played a key role in their decision-making. Vice Chairman Preston Martin explained, “We have to have rates high enough to bring in the capital. All of us have to consider the government financing very seriously.” Governor Charles Partee concurred. “We stayed above the foreign interest rates so that foreign investors would be attracted to the U.S.,” he said.62 Japanese investors were by far the most important to attract. Martin explained how the Federal Reserve set interest rates just high enough to attract Japanese capital. “How are the Japanese reacting to these new thirty-year securities?” he would ask his colleagues. “What mattered was the Japanese. Are the Japanese buying? Fine, if they are, we don’t have to raise rates.”63

The combination of Volcker’s interest rates and Reagan’s deficits produced a financial buildup in the United States of massive proportions: $85 billion in net foreign capital entered the United States in 1983, followed by $103 billion in 1984, $129 billion in 1985, and $221 billion in 1986. The federal budget deficit in these years ranged from $208 billion in 1983 to $221 billion in 1986, so by the end of this period, foreign capital inflows were directly or indirectly covering all the federal government’s borrowing.64

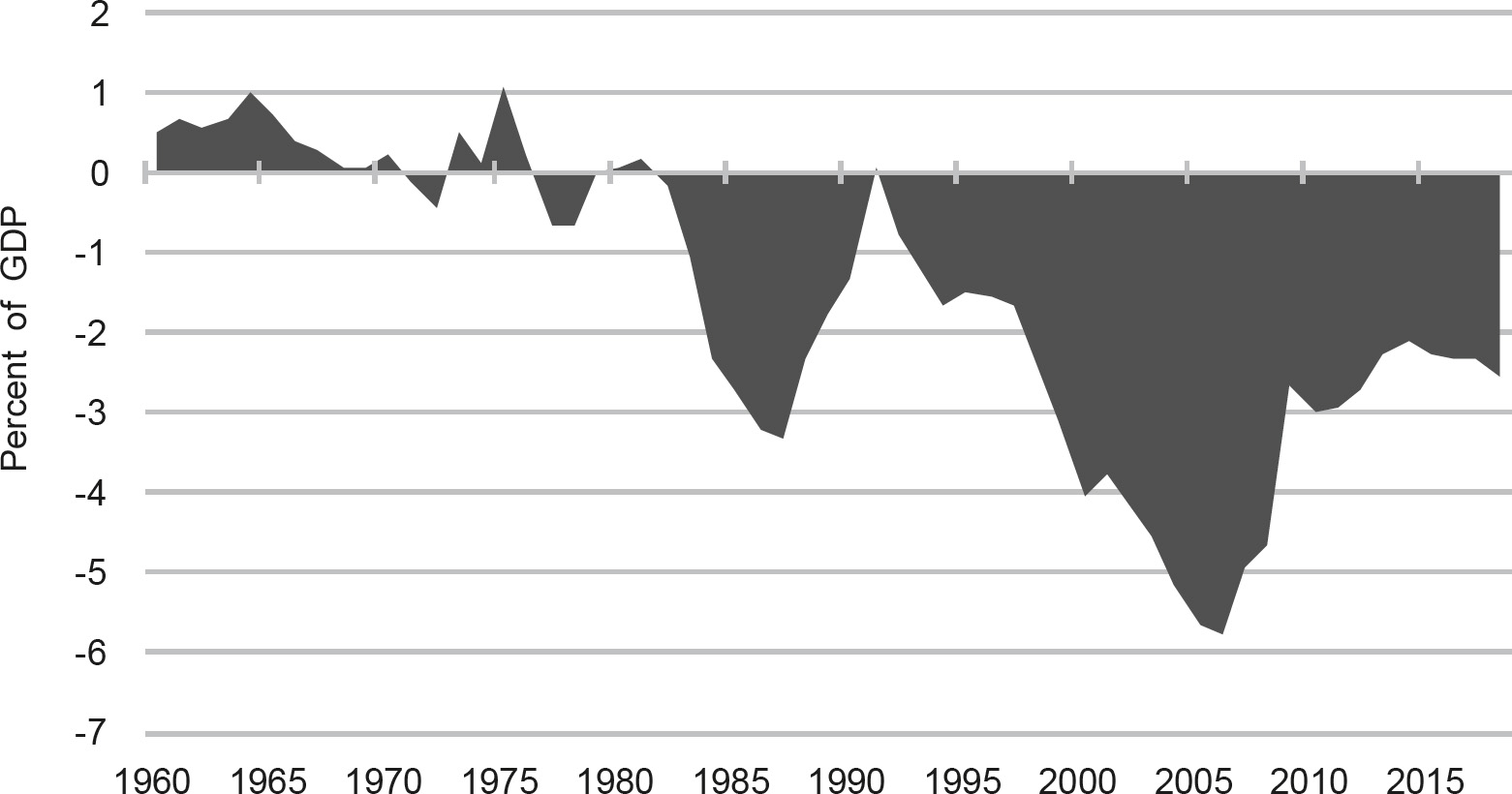

By the dawn of 1984, the US economy was booming and inflation had been vanquished. But the turnaround had not happened in the way Reagan had anticipated. Rather than defeating inflation by balancing the budget and cutting spending, the administration had exploded the federal deficit and unexpectedly discovered a way to pay for American guns and butter with foreign capital. The two economic phenomena that supply-side policies were supposed to encourage—increased savings and investment—had not materialized and, in fact, had gotten worse.65 Instead, an administration economist noted in early 1984 that “large flows of foreign funds into the United States have helped finance our Federal budget deficits and partially alleviated upward pressure on domestic interest rates.” This meant that the “continuation of the current trends” would make “the United States a debtor nation in the international economy” for the first time since 1914.66 Those trends did continue, and in 1986, the United States became the largest debtor country in the world.67 Foreign capital was now sustaining the renewal of American prosperity at home and the projection of American power abroad. It is a trend that has continued to this day (Figure 4.3).

The resurgence of the American economy in the 1980s was also a profoundly unequal renewal of fortunes. The potent tonic of high interest rates, low taxes, capital inflows, and booming asset prices made the 1980s a dynamite decade to be wealthy in the United States. Over the course of the decade, the wealthiest 10 percent of Americans saw their share of the country’s total income grow 16 percent; the wealthiest 1 percent saw their share increase 43 percent; and the wealthiest 0.1 percent saw their share surge 71 percent. This inaugurated a long-term redistribution of income upward in US society, as the top 1 percent’s share of total national income exploded from 10 percent in 1980 to 23.5 percent on the eve of the 2008 financial crisis.68

The less fortunate were, well, less fortunate. Wages stagnated, job security decreased, and entire occupations virtually disappeared from the American landscape. In place of the postwar social contract of rising incomes, job security, and full employment, working- and middle-class Americans were offered a new deal of debt-fueled consumption. Outstanding consumer credit loans doubled during the 1980s, and two-thirds of American households had a credit card by the end of the century.69 Cheap imports from abroad, rising home prices, and access to all manner of credit became the new foundations of a decidedly more precarious American way of life.

Figure 4.3 The US current account balance as a percentage of GDP.

Data sources: Federal Reserve Bank of St. Louis and IMF World Economic Outlook.


Just because the US economy was not suffering from crowding-out effects did not mean that crowding out was not happening. It only meant that it was not happening in the United States. As capital began to pour into the United States in the early 1980s, governments around the world that had borrowed heavily on global capital markets in the 1970s suddenly found it difficult to maintain their solvency. The petrodollar recycling system that had fueled the global economy for almost a decade ground to a halt. The G7 countries’ cumulative capital outflow of $46.8 billion in the 1970s turned into a cumulative capital inflow of $347.4 billion in the 1980s.70 It is strictly true in theory and roughly true in practice that international current accounts must balance out at a global level. International finance in the 1980s was Newtonian: every action produced an equal and opposite reaction. The equal and opposite reaction to the Reagan financial buildup was the sovereign debt crisis that came to dominate the global political economy of the 1980s. The debt crisis empowered lenders over borrowers in the global economy, and because the United States controlled the world economy’s lender of last resort—the IMF—the US government came to hold a particularly powerful position during the crisis. The potent combination of the Reagan financial buildup and the administration’s own financial diplomacy during the crisis unwound the relationships of economic interdependence that had defined the 1970s and opened powerful points of American leverage over the rest of the world.

The first sign of crisis came—as it often would in the last decade of the Cold War—from Poland. As we have seen, the Polish Crisis was intimately linked to developments in the global financial system. From the vantage point of global capital holders, events in Warsaw and Washington moved in opposite directions in 1981. As commercial bankers watched Volcker’s burgeoning success in defeating inflation and imposing discipline on the American economy, they also saw the Polish government struggle to do the same on the other side of the Iron Curtain. While they watched Reagan fire the air traffic controllers with surprising speed and popular support, they also saw Solidarity grow ever more resistant to the communist government’s attempts to trade domestic austerity for limited political reform in Poland. And as they saw Reagan’s tax cuts create massive new demand for capital within the United States by the end of the year, they also watched Wojciech Jaruzelski declare martial law in Poland on December 13, 1981, with his country in de facto bankruptcy.

The result was a collective rethinking of the sovereign lending system that had propped up governments across the developing and communist worlds since the oil crisis of 1973. “Fundamental change is sweeping the Euromarkets,” Euromoney reported in May 1982. “The era of the government borrower, the main prop of international bank lending in the ’seventies, is dying.” Bankers reported that “the Eastern European economic situation” had generated “a change in attitude” among banks toward the sovereign loan market.71

In turning away from their previous state clients, the banks were unwittingly sowing the seeds of their own potential destruction. A decline in sovereign loans did not mean a decline in sovereign debt. By 1982, debt among non-OECD countries sat at $600 billion, more than enough to bring down the global financial system if the debtors defaulted on their loans.72 This meant the Western financial system was fully interdependent with the debtors of the developing and communist worlds. The prospect of default became all too real in August 1982 when Mexican officials informed the international financial community that the country could no longer service its debts. Argentina and Brazil soon announced the same, and by autumn the world was hurtling toward the largest financial crisis since the Great Depression.

Officials in the US government, the Federal Reserve, and the IMF quickly came to believe that resolving the crisis would require large amounts of two things: structural adjustment from the borrowers and more money from the lenders. Structural adjustment—which, the reader will recall, Soviet officials termed strukturnaya perestroika—was an umbrella term for a whole series of policies with one primary goal: to turn a debtor country into a net exporter of capital and thus begin the process of paying off the debt. To do this, Western officials told debtor countries to devalue their currencies and eliminate foreign exchange controls, reduce their government budget deficits through cuts in investment and public subsidies, raise domestic interest rates to encourage domestic saving and halt inflation, privatize state-owned industries, lower import tariffs, eliminate domestic price and wage controls, strengthen bankruptcy laws to eliminate inefficient enterprises, and eliminate barriers to foreign investment. This long list of policy prescriptions would affect the debtor economies in diverse ways, but it pointed in one overarching direction: economic discipline. To solve the burgeoning sovereign debt crisis, Western officials believed that debtor countries would have to implement the politics of breaking promises.

But discipline alone would not be enough. Breaking promises would take time, but the debts needed to be paid without delay if the system was to avoid a general financial crisis throughout the capitalist world. Banks counted sovereign loans as assets on their balance sheets because, under normal circumstances, the principal and interest payments on loans offset the banks’ obligations to their depositors (the banks’ liabilities). If debtors defaulted on their debts and those sovereign loans became “nonperforming,” the banks would have to write those loans down as losses on their balance sheets. One or many sovereign defaults, therefore, had the potential to turn billions of dollars in bank assets into billions of dollars in losses virtually overnight. And if that happened, it was not difficult to imagine how economic depression across the capitalist world would soon follow.

Unwinding this interdependence would not happen overnight. Banks would need years to build up reserves on their balance sheets that could sustain the blow of writing down their sovereign loan portfolios as losses. In order for the banks to have more time, the borrowers needed to have more money. Only new money from Western banks, governments, and international organizations enabled the debtor countries to continue to service their debts. And only the debtor countries continuing to service their debts allowed the banks to continue to treat their sovereign loans as assets on their balance sheets. In short, unless debtor countries received a fresh infusion of capital to pay off their old debts that were coming due, the accounting ruse that propped up the banks’ house of cards would collapse in a downward spiral of defaults, write-offs of losses, insolvencies, and bank runs. Therefore, Western officials also believed that they—and the commercial banks whose reluctance to continue lending had precipitated the crisis in the first place—would have to provide debtors with a fresh round of capital to pay off old debts.73

Western financial institutions and the Reagan administration came to believe in this two-pronged approach of austerity and capital infusion very early in the crisis. Indeed, members of the administration were planning for it as soon as signs of trouble emerged in the spring of 1982. At that time, the Federal Reserve began circulating proposals to dramatically increase the IMF’s capital base (known as its quota). The Reagan administration supported this proposal because, as one NSC official noted in April 1982, “although most . . . vulnerable countries have implemented austere stabilization programs, it will probably take between 4–10 years until the major economic adjustments entailed can . . . sharply curtail or eliminate borrowing requirements.”74

In the meantime, new capital would need to be pumped into the international financial system. Western central banks kicked this off with a $1.85 billion loan from the Bank for International Settlements to Mexico just days after the government declared insolvency, and the US Treasury followed with $2 billion in further credits and financial support. The central banks and the Treasury Department only extended their financial aid on the condition that Mexico negotiate an adjustment program with the IMF, so in November 1982, the Fund and the Mexican government signed a three-year agreement that granted Mexico $3.7 billion in exchange for a domestic economic plan that would dramatically cut the budget and current account deficits through 1985. New government money and debtor austerity went hand in hand. But the IMF, the Fed, and the Treasury also aimed to ensure that commercial banks contributed their fair share to keeping the financial system afloat. So, even as the IMF agreed to the terms of the bailout in November, its managing director, Jacques de Larosière, shocked the banks by forcing them to supplement the public financing with an additional $5 billion of their own capital.75 This combination of fundamentals—short-term bridge financing from central banks and governments, the negotiation of an austere IMF structural adjustment program, and the commitment of new bank capital to the debtor country—became the standard formula for addressing the many national debt crises that roiled the world economy over the next decade.76

With this standard formula in hand, the Reagan administration, the IMF, and the Federal Reserve continued to strengthen their leverage by buying time to decrease the interdependence in the international financial system. The trick was to create enough new liquidity to prevent sovereign defaults and bank failures without setting off new inflationary pressures in the global economy. In this way, Volcker’s program of high interest rates at home was intimately linked to the Fed’s and IMF’s handling of the debt crises abroad. Beginning in the fall of 1982, Volcker and the Fed began to moderate the draconian interest rates of the previous two years to provide a modicum of liquidity to the financial system. Throughout 1983, administration officials and Reagan himself stridently lobbied Congress to increase US funding of the IMF. Despite a slew of domestic opponents who claimed that increasing IMF funding would merely bail out the big banks who had engaged in reckless lending, Congress passed a significant expansion of IMF funding into law in the fall of 1983.77 Not for the last time, the US government bailed out Wall Street despite the resistance of Main Street because it believed that the banks were too big to fail.

Across the Global South, debt crises begat more debt crises as the banks pulled back on their lending to sovereign borrowers. By the end of 1984, only two Latin American countries—Colombia and Paraguay—had not rescheduled their debt, and all told, thirty countries across the world had fallen behind on their debt payments and were seeking help from the IMF (and, by extension, the United States). With each passing day, Western banks were able to set aside more reserves to cushion the potential losses on their sovereign loans, and as they did, the interdependence of the 1970s waned and the US government’s leverage waxed in equal measure. As the Western financial system became ever more insulated from the threat of sovereign default, US officials grew to understand that they now held all the cards. As Treasury Secretary Regan brashly recognized as early as November 1982 in a meeting of the National Security Council, debtor countries would “look to us for help.” The question was, “What do we want in return?”78

Structural adjustment and austerity were what they wanted, and over the course of the 1980s, that is exactly what they got. In country after country, the Reagan administration and the IMF restructured the economies of the Global South. Even as the administration presided over unprecedented budget deficits in the United States and turned the country into the world’s largest borrower, the US Treasury and the IMF demanded that borrowing countries in the developing world act differently. Budget deficits were slashed, austerity of all kinds was imposed, and state intervention in the economy was beaten back at every turn. Poverty and economic dislocation spiked throughout debtor countries, and austerity inevitably bred political resistance. Most governments that entered debt crises failed to survive them, and a wave of political change followed in debt’s wake. Across Latin America, military dictatorships and authoritarian rulers who had survived the 1970s by borrowing on global capital markets collapsed in the 1980s as the economic basis of their rule precipitously crumbled. In their stead, US, IMF, and domestic officials within debtor countries looked to democratic elections as a means of building popular support and legitimacy for austerity. As one Brazilian business publication warned while the country faced economic crisis, “The dark clouds accumulating on the horizon . . . will only be dissipated with an authentic and democratic government.”79 Many throughout the region agreed, and over the course of the 1980s, Latin America experienced a wave of democratization directly tied to the region’s sovereign debt crisis.80 Just as in Thatcher’s Britain and Reagan’s America, governments in the Global South that had received a stamp of democratic legitimacy found it easier to break promises, so electoral democracy and the debt crisis rippled through the global economy hand in hand.

Thus, by 1985, the world economy had undergone a dramatic restructuring in the six short years since Paul Volcker had assumed his post at the Federal Reserve. The United States had emerged from the malaise of the 1970s to recapture its dominant position in the global economy, and the Reagan administration had discovered an unexpected way to renew economic growth at home and project power abroad with a seemingly endless supply of foreign capital. The Volcker Shock had simultaneously imposed the politics of breaking promises on the United States and a resistant world, but the Reagan financial buildup had quickly allowed the United States to write a new, but lopsided, social contract funded by borrowed foreign capital. And in buying time for banks to insulate themselves from defaults during the early years of the sovereign debt crisis, the Reagan administration and the IMF had unwound the financial interdependence of the 1970s and established new and potent points of American leverage over debtors in the world economy.

Through it all, the Cold War conflict between East and West persisted, but it was hardly insulated from the effects of the capitalist perestroika. The Reagan administration entered office with a keen sense of the Communist Bloc’s economic weakness and an intense desire to exploit that weakness for geopolitical gain. Had the capitalist perestroika not fundamentally restructured the world economy in the early 1980s, it is likely this intense desire would have come to naught. But because the Volcker Shock, the Reagan financial buildup, and the sovereign debt crisis starkly transformed the world economy in the United States’ favor, Reagan and his administration were able to wage an effective economic Cold War against the Soviet Bloc in the early 1980s and set the stage for the socialist perestroika that was to come.