THE STATE OF THE UNION

The curtain rises on a victorious nation bestriding the world in seersucker shorts, penny loafers, and a tennis sweater, humming “Zip-A-Dee-Doo-Dah”–rich, powerful, confident, downright cocky. When the curtain falls, the United States is limping offstage wearing nothing but a dazed look and a tie-dye robe, muttering the lyrics to “Helter Skelter.” Even people who saw it all had to wonder: what the hell just happened?

It’s one of history’s strange ironies that a society enjoying unprecedented prosperity should suddenly tear itself apart, and there’s no simple explanation for why it happened. The most important factor was the huge number of children born to returning GIs after World War II. The “baby boom” was fueled by a new feeling of optimism, economic expansion, and welfare guarantees from the New Deal. Average American families enjoyed higher incomes and a better standard of living than any group of people, anywhere, at any time in history, including new housing, better nutrition, and more educational opportunities.

With everything looking swell, people started doing what people do best and popping out babies by the millions–make that tens of millions. From 1945 to 1965, an incredible 80 million new Americans were born, increasing the total population by 62 million. The number of Americans under the age of 20 rose from 45 million in 1940 to 69 million in 1960, increasing from 34 percent to 39 percent of the population; the 1960 figure included 39 million children under the age of 10–or 22 percent of the population.

In short, the United States became a youth culture. The baby boomers were doted on by parents determined to give them all the things they’d missed growing up in the Great Depression–from bikes and baseball gloves to college and cars. The boomers displayed astounding creativity, energy, and sheer precocious self-confidence. As teenagers and young adults, they voiced concern about nuclear weapons, pollution, and racial discrimination. But their larger-than-life qualities could also be weaknesses: self-assurance could turn to arrogance, self-expression to self-destruction. As a result, movements that began with good intentions often ended up far from their original goals. Maybe free love and psychedelic drugs weren’t the solution to the world’s problems after all?

It wouldn’t be fair to pin all of America’s problems on the boomers. Their parents–“The Greatest Generation”–let America’s leaders steer the country into Vietnam, resulting in the worst military defeat in U.S. history. On the other hand, these older adults shared their children’s concern about the nuclear arms race and also proved surprisingly supportive of the African-American civil rights movement–at least outside the South.

WHAT HAPPENED WHEN

| Date | Event |
|---|---|
| May 17, 1954 | Supreme Court rules segregation of public schools illegal in Brown v. Board of Education. |
| January 10, 1956 | Elvis Presley records his first RCA single, “Heartbreak Hotel.” |
| October 4, 1957 | Soviet Union launches Sputnik I, world’s first artificial satellite. |
| November 8, 1960 | Massachusetts Senator John F. Kennedy narrowly beats Vice President Richard Nixon in a presidential election marred by accusations of voter fraud. |
| December 5, 1960 | Supreme Court desegregates interstate bus facilities in Boynton v. Virginia. |
| April 12, 1961 | Soviet cosmonaut Yuri Gagarin becomes the first man in space. |
| May 4, 1961 | First “Freedom Ride” leaves Washington, D.C., to test Supreme Court desegregation ruling in Southern bus facilities. |
| May 5, 1961 | Alan Shepard becomes first American in space. |
| October 14–28, 1962 | Cuban Missile Crisis brings world to brink of nuclear war. |
| November 22, 1963 | JFK is assassinated in Dallas, Texas; Lyndon Johnson becomes president. |
| January 11, 1964 | Surgeon General releases report showing smoking causes lung cancer. |
| February 9, 1964 | The Beatles make their first appearance on The Ed Sullivan Show. |
| July 2, 1964 | LBJ signs Civil Rights Act, outlawing segregation in public places. |
| February 21, 1965 | Malcolm X is assassinated in New York City. |
| March 7–21, 1965 | Martin Luther King Jr. leads three marches from Selma to Montgomery, Alabama, demanding voting rights for African-Americans. |
| August 6, 1965 | LBJ signs Voting Rights Act, banning obstacles to voting based on race. |
| December 31, 1965 | There are 180,000 U.S. troops in South Vietnam. |
| January 31, 1968 | Communists launch surprise attacks across South Vietnam: the “Tet Offensive.” |
| March 31, 1968 | LBJ announces he will not run for reelection in November 1968. |
| April 4, 1968 | MLK is assassinated in Memphis, triggering a wave of riots nationwide. |
| June 5, 1968 | Democratic presidential front-runner Robert F. Kennedy is assassinated in Los Angeles. |
| November 5, 1968 | Republican Richard Nixon elected president with 43.4 percent of the vote. |
| December 31, 1968 | There are 536,100 U.S. troops in South Vietnam. |
| July 16–24, 1969 | Apollo 11 astronauts travel to the moon and return. |
| August 15–18, 1969 | Some 400,000 people gather near Bethel, New York, for Woodstock, an epic rock concert. |
| June 30, 1971 | Twenty-sixth Amendment lowers voting age from 21 to 18. |
| February 21–28, 1972 | Nixon’s historic visit to China leads to better relations. |
| October 10, 1972 | FBI uncovers massive political espionage involving Nixon officials. |
| January 27, 1973 | Paris Peace Accords end U.S. military involvement in Vietnam. |
| June 25, 1973 | White House counsel John Dean implicates Nixon in Watergate cover-up. |
| August 9, 1974 | Facing conviction on impeachment charges, Nixon resigns from office. |

LIES YOUR TEACHER TOLD YOU

LIE: The sixties were all about peace and love.

THE TRUTH: People who maintain that the 1960s were groovy and mellow were either so addled with drugs they didn’t notice what was going on, or so fried that they forgot about it later. The sixties brought violent upheaval, including assassinations, domestic terrorism by the KKK and crazy left-wing groups, clashes between anti-war protesters and police, and race riots that left hundreds dead. Not exactly “groovy.”

The “V” hand sign–made with the palm out and the index and middle fingers extended–represented “victory” during World War II. By the mid-1960s, the same motion was being used to mean “peace.”

One reason for the violence was the civil rights movement, in which African-Americans struggled to secure basic legal protections and political rights that were still denied to them by Southern states. The movement began with African-American veterans who’d been encouraged by the desegregation of the U.S. military in 1948. Seeking recognition of their service in World War II, they hoped that the nation was on the cusp of change. But attempts to gain full equality were rejected by many Southern whites, who had no interest in disrupting the traditional social order enshrined by Jim Crow laws.

One of the first steps came in 1944, when Irene Morgan, an African-American woman, refused to move to the back of a Greyhound bus traveling from Richmond, Virginia, to Baltimore, Maryland. Her protest resulted in a 1946 Supreme Court decision ending segregation on interstate buses. Morgan’s case–argued by Thurgood Marshall, an NAACP lawyer and future Supreme Court justice himself–helped inspire Rosa Parks, whose refusal to give up her bus seat sparked the municipal bus boycott in Montgomery, Alabama, in 1955.

Another blow to segregationists came with the Supreme Court’s 1960 decision banning segregation in restaurants and waiting rooms at interstate bus facilities. This led to the “Freedom Rides” of 1961, when activists, black and white, challenged illegal segregation by riding interstate buses through Southern states and stopping at lunch counters along the way.

The activists were highly successful at attracting press coverage, and their protests baited violence from angry whites and unwarranted crackdowns from Southern officials. From 1955 to 1968, at least 45 people were murdered for participating, or appearing to participate, in the civil rights movement, including at least 13 killed by Southern lawmen or legislators. The most notorious incident was a bomb attack on a church in Birmingham, Alabama, on September 15, 1963, a few days after city schools were desegregated. The blast killed four young girls and wounded 14 other children arriving to hear a Sunday sermon. If the Ku Klux Klan thought bombing churches and killing kids was somehow going to win people over, they were sorely mistaken. These kinds of attacks were the beginning of the end: Klan violence alienated moderate whites, who might oppose integration but were even more appalled by an attack on children at a place of worship.

DISOBEDIENCE SCHOOL

The Freedom Rides and Montgomery bus boycott were instigated in part by African-American activists from the North and their white supporters, including leftist Jews. (Southern whites had a few choice labels for these folks, including “carpetbaggers,” “atheists,” and “communists.”) But the movement’s most important leader was a Baptist preacher from Atlanta. Still in his teens when Marshall helped integrate interstate buses, Martin Luther King Jr. was impressed by the power of non-violent civil disobedience to bring about social change, especially if it led to publicity or legal action (ideally both). In fact, King had paid careful attention to Mahatma Gandhi’s successes in undermining British rule in India, and he came away believing that peaceful action–designed to provoke violent reaction–could strip government of its legitimacy.

After earning degrees in sociology, divinity, and theology, in 1954 King (just 25 years old) took a traditional leadership role as the pastor of a Baptist church in Montgomery, with the goal of advancing civil rights. He knew he could count on the support of millions of African-Americans who had migrated to Northern cities, where they formed a powerful new political bloc; his main challenge would be winning white support. King organized marches and boycotts through his Southern Christian Leadership Conference, and his advocacy of non-violent resistance resonated with Christian doctrine and was basically guaranteed to win the sympathy of a religious society–provided there was media coverage.

And King made sure there was. Leveraging the national reach of newspapers, magazines, radio, and especially the brand-new medium of TV, civil rights leaders first built support among non-Southern whites, winning over the majority of the U.S. population. (For example, support for school integration among Northern whites increased from 40 percent in 1942 to 60 percent in 1956 and then 75 percent in 1963.) King then drove a wedge between moderate and extremist Southern whites, isolating and politically marginalizing the latter. Moderate Southern whites rejected vigilante violence by the Ku Klux Klan and also ended up opposing the strategy of “massive resistance” proposed by diehard segregationists who wanted to close public schools rather than accept integration. Although most moderate Southern whites still objected to integration, they placed greater importance on their kids learning to read, and sensibly decided to compromise.

When you give witness to an evil you do not cause that evil but you oppose it so it can be cured.

–Martin Luther King Jr., 1965

They are not the first Negroes to face mobs, they are merely the first Negroes to frighten the mob more than the mob frightens them.

–James Baldwin, August 1960

While African-American protesters did the heavy lifting, forcing confrontations “on the ground” at risk of injury and even death, many of the key legal decisions and enforcement came from Washington, D.C. Of course, the measures were just as much about asserting authority over the states as they were about guarding liberty. The Supreme Court, still dominated by FDR’s left-wing appointees, was especially aggressive: after integrating interstate transportation in 1946, it followed up with Shelley v. Kraemer (1948) banning “restrictive covenants” in home sales, Brown v. Board of Education (1954) declaring “separate but equal” school segregation unconstitutional, and Loving v. Virginia (1967) overturning laws against interracial marriage. Congress spiked Jim Crow–now approaching its hundredth birthday–with the 1964 Civil Rights Act, thus banning segregation in public places, work, and government.

Throughout this process, entrenched Southern opposition provoked more and more assertive action by the federal government. For example, when Arkansas Governor Orval Faubus vowed to prevent nine black students from enrolling at Little Rock Central High School in 1957, President Eisenhower personally warned him that he intended to see the Brown ruling enforced. Grandstanding for white voters, Faubus ignored Ike and sent the Arkansas National Guard to prevent the “Little Rock Nine” from entering the school. Bad move. Provoked, Eisenhower took the extraordinary step of “federalizing” the National Guard, removing it from the governor’s control, and sent the Army to escort the students into the school.

At times civil rights activists and the federal government seemed to be working almost hand in hand. In one classic example, Martin Luther King Jr. met with President Lyndon Johnson on February 9, 1965, to urge voting rights for African-Americans–but the meeting appears to have been basically a strategy session. One month later, from March 7 to 21, King led thousands of protesters attempting to march from Selma to Montgomery, the Alabama state capital, to demand the right to vote. As expected, the marches provoked a brutal crackdown by Alabama state troopers, who attacked the marchers with clubs, whips, and tear gas. Images of these attacks, televised nationally, gave President Johnson the congressional support he needed to get the Voting Rights Act passed that August.

Even if we pass this bill, the battle will not be over. What happened in Selma is part of a far larger movement which reaches into every section and state of America. It is the effort of American Negroes to secure for themselves the full blessings of American life. Their cause must be our cause, too, because it is not just Negroes but really it is all of us who must overcome the crippling legacy of bigotry and injustice. And we shall overcome.

–President Johnson, March 15, 1965

It’s important to keep in mind that most Southern whites were not card-carrying KKK ghouls, and from early on “progressive” Southern whites, numbering about 5 to 20 percent depending on the locale, supported the push for integration. Though outnumbered, their public support was important in encouraging African-American activists and persuading moderate whites to join the desegregationist camp. While 80 percent of white Southerners said they opposed their children going to school with African-Americans in 1956, this number dropped with remarkable speed to 62 percent in 1963, 38 percent in 1965, and just 16 percent in 1970.

Meanwhile, there were definite limits to Northern white support–especially when activism turned radical. Some African-American leaders had always rejected compromise with whites, advocating self-sufficiency or even separation. Marcus Garvey’s “African Redemption” movement in the 1920s inspired Wallace Fard Muhammad to found the Nation of Islam, an unorthodox, racist sect, in Detroit in 1930. W.F. Muhammad’s successor, Elijah Muhammad, encouraged African-Americans to leave Christian churches and adopt new names, with an “X” to denote their long-lost African heritage. But the Nation of Islam truly entered the spotlight when Elijah Muhammad’s most talented disciple came to prominence. Born in 1925 in Omaha, Nebraska, Malcolm Little started out as a petty criminal. By 1948, he’d joined the Nation while in prison and adopted the surname X. After his release in 1952, the charismatic orator gained notoriety for fierce denunciations of white injustice and justifications of self-defense “by any means necessary”–a thinly veiled call to arms. While Malcolm X did adopt a more conciliatory tone shortly before his assassination in February 1965, the radical banner was taken up by others, including Huey Newton, who co-founded the militant Black Panthers in 1966.

The white majority found the idea of a black uprising alarming, and scores of violent outbursts in the 1960s did little to ease fears. In the week following the assassination of Martin Luther King Jr. in April 1968, 125 riots resulted in 46 deaths, 1,300 injuries, and 20,000 arrests. But those only scratch the surface. From 1963 to 1969, there were 166 riots, which left 188 people dead, 5,000 injured, and 40,000 under arrest. Some infamous events include the 1965 Watts riot in Los Angeles, with 34 dead; the 1967 Newark riot, with 27 dead; and the 1967 Detroit riot, with 43 dead. Although most of the casualties were African-Americans, these events undermined white support for civil rights activism.

LIE: The president needs permission from Congress to undertake major hostilities.

THE TRUTH: That’s just, like, the Constitution’s opinion, man. The president can totally send hundreds of thousands of U.S. troops overseas to fight America’s enemies for years at a time without an official declaration of war by Congress. Because it’s not “war,” it’s a “police action.” See the difference? Most people don’t.

In no part of the Constitution is more wisdom to be found, than in the clause which confides the question of war or peace to the legislature, and not to the executive department.

–James Madison, 1793

The origins of this classic constitutional sleight of hand go back to 1798 and the Quasi-War with France, also called the Undeclared War or the Half War. But the first really egregious dodge came in 1950, when President Harry Truman sent a total of 480,000 U.S. troops to Korea without bothering to get a declaration of war. The presidential power grab (and congressional abdication of responsibility) got even bigger with President Lyndon Johnson. The 1964 Tonkin Gulf Resolution authorized the commander in chief to order whatever military action seemed appropriate in Southeast Asia after North Vietnamese forces (allegedly) took a couple of potshots at U.S. Navy ships. This open-ended resolution basically gave Johnson a blank check to escalate the conflict in Vietnam, setting in motion a textbook example of how not to conduct a war–er, that is, “police action.”

For one thing, it had nothing to do with America. Led by the charismatic Ho Chi Minh, the Vietnam War began as a nationalist uprising against French colonialists. It wasn’t until 1959 that it morphed into a civil war between Ho’s communist North Vietnamese (Vietminh) and pro-U.S. forces in South Vietnam. The two sides were led by vastly different personalities. While Ho was both experienced in guerrilla warfare and widely revered as “the father of the country,” South Vietnam’s Ngo Dinh Diem was a devout Catholic who considered becoming a monk or priest before settling on the civil service. Nevertheless, U.S. officials psyched themselves up, convincing each other that Diem was a strong leader with popular support. Overall, the odds looked pretty good–in one corner, the most powerful nation on earth; in the other, North Vietnam, a poor, backward country with almost no industrial base.

Well, the odds looked good on paper. But in the ring, it was a different story. For over a decade, pro-communist Viet Cong guerrillas in South Vietnam staged surprise attacks on American and South Vietnamese military and civilian targets. Facing (or rather, failing to face) this elusive foe, U.S. forces were supposed to defend South Vietnamese villages, cut off Viet Cong supplies, and somehow, eventually, find and destroy the guerrillas. This proved much more difficult than it seemed from the comfort of Washington, D.C., especially since the guerrillas were sustained by a continuous flow of weapons, fuel, and reinforcements from North Vietnam, via the “Ho Chi Minh Trail.”

Because the trail snaked through the jungles of neighboring Laos and Cambodia, Johnson decided to expand the “police action” to Laos, triggering a cycle of failure and escalation that came to be known as “mission creep.” Under Kennedy there had been, at the highest, 16,300 troops in 1963. When relatively small deployments failed to stop communist infiltration, Johnson upped the ante. In 1964, there were 23,000 U.S. troops stationed in South Vietnam. In 1965, troop strength rose to 184,000, and it finally peaked at 536,000 in 1968. But the enemy just kept growing stronger and stronger, no matter how many troops America deployed. What went wrong?

BONUS LIE: Old people supported war in Vietnam, while young people opposed it.

This is one of the most enduring misconceptions about Vietnam. It turns out younger Americans (under the age of 30) were consistently more likely to support the war in Vietnam than those over the age of 49, with middle-aged folks falling somewhere in, well, the middle. In August of 1965, a Gallup poll found 76 percent of adults under 30 supported U.S. intervention in Vietnam, versus 51 percent of adults over 49. By July 1967, when 62 percent of the under-30 crowd still supported intervention, the over-49 crowd was down to 37 percent; and in January 1970 the numbers fell to 41 percent and 20 percent, respectively.

Public Support for Vietnam Intervention, by Age

What explains the difference? It’s anybody’s guess, but it may be due partly to the older generation’s personal experience of conflict in World War II and Korea–including shortages, long separations, and the loss of loved ones–which made them more cautious about rushing into new fights (especially if they had sons eligible for the draft).

The fact is, the United States misread almost everything about the war. To begin with, U.S. officials didn’t realize that many Vietnamese saw America as an imperial power, and Diem as its puppet. Worse, Diem alienated South Vietnam’s Buddhist majority by persecuting monks, touching off civil unrest. So in November 1963, the CIA changed its mind and backed a coup by South Vietnamese generals that toppled Diem, whose equally unpopular replacement was ousted in another (non-CIA) coup three months later. U.S. officials also failed to grasp that the North Vietnamese were truly fighting for their homeland’s independence, rather than just acting as pawns of Moscow or Beijing, so they underestimated the morale of the North Vietnamese–especially their ability to absorb casualties. Finally, U.S. advantages didn’t count for much in Vietnam: tanks couldn’t maneuver in the jungle, so “grunts” had to fight on foot against adversaries who knew the terrain much better.

You can kill ten of my men for every one I kill of yours, but even at those odds, you will lose and I will win.

–Ho Chi Minh, to a French official, 1946

But perhaps the biggest factor was that U.S. commanders weren’t allowed to send ground troops into North Vietnam–the enemy homeland–for fear of provoking a “real” war with China or Russia. In addition to basically guaranteeing defeat, this led to over-reliance on air power in the form of vicious bombing campaigns. Operations like “Rolling Thunder,” pulverizing North Vietnam from 1965 to 1968, failed to stop the enemy but did manage to convince civilians the United States wanted to exterminate them. Laos was also bombed mercilessly in a failed bid to cut the Ho Chi Minh Trail–and this paled in comparison to the bombing of South Vietnam, America’s ally. Altogether from 1964 to 1973, the United States dropped an incredible seven million tons of bombs–twice the total tonnage dropped by U.S. forces in World War II–on a region about half the size of Peru. The United States also sprayed 18 million gallons of the defoliant Agent Orange to expose enemy hideouts. Around 500,000 birth defects in Vietnam have been attributed to the spray campaign, along with an unknown number of cancers in U.S. veterans.

Agent Orange was so named for the colored markings on the barrels in which it was shipped. Other similarly named herbicides were called Agents Purple, Pink, and Green.

Nonetheless, there were always enough successes to justify hopes for final victory: whenever the Viet Cong came out to fight pitched battles, they were annihilated, as in the Tet Offensive of 1968. But perversely, Tet became a psychological victory for the North Vietnamese, showing Americans that Pentagon “progress reports” were baloney. American support for the war waned, and privately Johnson’s advisors told him Vietnam was unwinnable. In March of 1968 the president–overwhelmed by the police action he’d made–announced he wouldn’t seek reelection that year. That November, Democratic Vice President Hubert Humphrey was defeated in the presidential election by Republican Richard Nixon, who won with the vague, improbable vow of “peace with honor.”

This turned out to mean “bomb the bejeezus out of Vietnam, Cambodia, and Laos, while making nice with the Soviets and Chinese.” The idea was to isolate North Vietnam and give South Vietnam a fighting chance, and it did allow Nixon to withdraw U.S. troops and turn over most of the fighting to South Vietnam. Knowing that South Vietnam wasn’t up to the task, Nixon tried to help out with air power, which stopped a big North Vietnamese push for Saigon in 1972.

We should declare war on North Vietnam…. We could pave the whole country and put parking strips on it, and still be home by Christmas.

–Ronald Reagan, 1965

However, Nixon’s “secret bombing” of Laos and Cambodia from 1969 to 1972 failed to halt communist infiltration of South Vietnam. It also outraged anti-war Americans–even in the pre-Internet era, you couldn’t drop two million tons of bombs without someone noticing. Protests erupted on college campuses nationwide, including Kent State University, where the Ohio National Guard shot and killed four unarmed students on May 4, 1970, galvanizing anti-war sentiment.

True, Nixon’s 1972 bombing of Hanoi in “Linebacker I & II” helped bring the North Vietnamese to the negotiating table, but the resulting Paris Peace Accords, signed in January 1973, were really just a fig leaf to let America retreat from Southeast Asia with a shred of dignity. Everyone knew the communists were biding their time before crushing South Vietnam and reunifying the country, completing their decades-long quest. Of course, that last shred of dignity was stripped away by the spectacle of South Vietnamese civilians desperately clinging to departing American helicopters during the final evacuation of the U.S. embassy in Saigon, as North Vietnamese forces closed in on April 29–30, 1975.

LIE: Technology makes life better.

THE TRUTH: Okay, this is definitely true if you’re rich. If you’re poor, well …

In the mid-1950s, the United States was still the largest industrial power on earth, but its dominance was being eroded by postwar recovery in Japan and Europe and new industrialization in Latin America and Asia. The U.S. share of global production fell from 35 percent to 25 percent between 1955 and 1975, with its share of steel output tumbling from 39 percent to 16 percent, and car production plunging from 70 percent to 27 percent. In other words, America now had competition. This was good for consumers (hooray for choices!) and workers in foreign countries (hooray for relatively high-paying jobs and a higher standard of living!)–but it was bad news for Americans who depended on factory work. With foreign labor cheaper than American labor, foreign manufacturers could offer comparable goods at lower prices, forcing American companies to lower prices too. To stay competitive, American companies had to make more stuff at lower cost. The solution? Robots.

Automation began during World War II, as companies worked to fulfill government contracts with a smaller workforce. At the time, labor was tight. In fact, the war period was the best time to be an unskilled worker. But after the war, companies like GM and Ford continued to up their automation. After all, they were facing significant foreign competition from hugely popular models like the Volkswagen Beetle and Toyota Corona.

UNIONIZE ME

The decline of manufacturing led to the decline of unions, at least in the private sector. The high wages dictated by unions weren’t sustainable in the face of foreign competition, and the remaining manufacturing jobs began moving from heavily unionized states in the North to nonunionized states in the South–or out of the country altogether.

From 1965 to 1975, the Northeastern United States lost over a million manufacturing jobs, while the South picked up 860,000–usually nonunion and at substantially lower wages (on average 20 percent less than Northern wages for comparable work). U.S. companies also began outsourcing simple manufacturing work to developing countries.

Overall, the proportion of American workers who belonged to a union fell from about 40 percent in 1955 to 30 percent in 1975–in the private sector. However, the situation was different in the public sector (meaning government jobs), thanks to JFK, who encouraged federal workers to unionize, setting the precedent for state and county employees not long after. From 1955 to 1975, the proportion of public employees who belonged to unions jumped from 12 percent to 40 percent. Insert jokes about the DMV here.

To keep up with the low foreign prices, U.S. manufacturers turned to a combination of two things: automation and layoffs. When it came time to cut positions, less-skilled, low-paying jobs were the first to go–these jobs were less likely to be unionized and were simply easier for machines to do. Adding insult to injury, laid-off workers had a hard time finding new jobs because automation was spreading from industry to industry like a robot plague. For example, beginning in 1956, hundreds of thousands of dockworkers lost their jobs after the introduction of container shipping and automation, which allowed shipping lines to consolidate cargo traffic in a couple of big ports.

These advancements in technology were especially devastating to African-American workers, who often lacked the education and skills for higher-paying jobs. The result was mass unemployment beginning in the 1950s, growing to crisis proportions by the 1960s. Poverty was accompanied by a wave of crime, drug addiction and–most ominously–the breakdown of African-American families. Faced with spiraling crime and plummeting property values, everyone who could afford it fled big cities for the suburbs. The resulting collapse of the tax base brought sharp declines in education and public services like mass transit, sanitation, and policing. Millions were trapped in a vicious cycle of poverty and social breakdown. In short, the inner cities had become ghettoes.

WHAT’S SO GREAT ABOUT THE GREAT SOCIETY?

Lyndon Johnson’s hero was Franklin Delano Roosevelt, and it shows in his policies. Believing that poverty contributed to crime and civil disorder, Johnson devoted his presidency to expanding the welfare system first introduced by FDR. As a follow-up to the New Deal, Johnson’s “Great Society” program was breathtakingly ambitious: it established an Office of Economic Opportunity to organize job training, created Medicaid and Medicare to help poor and elderly Americans pay for health care, provided federal funds to the states for poor school districts, established the U.S. Department of Housing and Urban Development (HUD) to finance low-cost housing, created a permanent Food Stamp Program, and provided preschool for low-income children through Head Start.

Johnson and his Democratic allies in Congress clearly had a thing for legislating, and the Great Society became the high-water mark of Democratic power after World War II. But critics say many of their well-intentioned reforms backfired. Federal assistance intended to lift people out of poverty instead fostered economic dependency, with welfare accepted as an ordinary part of life–the exact opposite of the original goal. The number of U.S. citizens receiving welfare skyrocketed from six million in 1967 to 18 million in 1974. What’s more, the (white) news media–always ready to pander unhelpfully–created the false impression that most welfare recipients were African-American, when in fact the majority were white, reinforcing prejudices among whites and negative self-image among African-Americans.

White recipients, Aid to Families with Dependent Children (AFDC), 1975: 5.7 million
Black recipients, AFDC, 1975: 4.9 million
White households receiving food stamps, 1975: 2.8 million
Black households receiving food stamps, 1975: 1.6 million

TRENDSPOTTING

Getting It On

Nothing says “I love you” like a sexually transmitted disease, except maybe an illegitimate child. And during the era of “free love” from 1965 to 1975, these tokens of affection became more and more common. But what caused the sudden increase in fooling around?

The twentieth century had brought big social changes: the extended family gave way to the nuclear family, everyone became more mobile thanks to new modes of transportation, and more women worked outside the home. Meanwhile, religious belief was coming into conflict with the new cults of science and technology. All of this, combined with the widespread availability of condoms–legalized in 1918–enabled people to control their sexual reproduction. As a result, traditional sexual mores gave way to more permissive attitudes post-World War I. In the 1940s, the U.S. military entertained no illusions about the virtue of enlisted men: by the end of World War II, the armed forces were distributing a remarkable 50 million condoms per month along with a short “educational” film popularizing the slogan, “Put it on before you put it in.”

Hoping to stop the spread of venereal diseases, in 1935 Connecticut became the first U.S. state to require premarital blood tests.

After the war Americans were pleasantly scandalized by the first large-scale sex studies. From 1948 to 1953 Alfred Kinsey, an Indiana University zoologist, conducted pioneering surveys documenting American sexual behaviors and attitudes. Though his methodology and data were later questioned, Kinsey certainly succeeded in getting Americans to talk about sex, especially since his findings suggested widespread sexual deviance. According to Kinsey’s research, 37 percent of American men had had at least one homosexual experience, 50 percent of married men had had at least one extramarital encounter, and 22 percent of men and 12 percent of women reported being aroused by sadomasochism. Not coincidentally, Hugh Hefner’s scandalous and highly successful Playboy launched the same year as Kinsey’s last study, with early issues devoting a great deal of attention to Kinsey’s kinky findings. Then in 1957 William Masters and Virginia Johnson began their physiological studies of sexual arousal, male impotence, female frigidity, and a host of other naughty topics. Their findings–based on observations of about 10,000 sexual acts involving a total of 694 subjects in a laboratory setting (382 women and 312 men, never all at once)–were summarized in the best-selling Human Sexual Response, published in 1966, and its best-selling follow-up, Human Sexual Inadequacy, which appeared in 1970.

With all this academic activity, it’s not surprising that the “sexual revolution” began on college campuses, just as female coeducational students (“coeds”) were gaining admission to previously all-male bastions. Still, collegiate sex remained fairly conservative at first: although 40 percent of male students reported having premarital sex in the late 1950s, it was often with prostitutes. Only one out of five college women reported having premarital sex–and usually only if she was engaged or in a long-term relationship. Young women (and to a lesser degree, young men) who regularly had premarital intercourse were considered “low class.” But by the time the sixties rolled around, everything was about to change, thanks to “The Pill.”

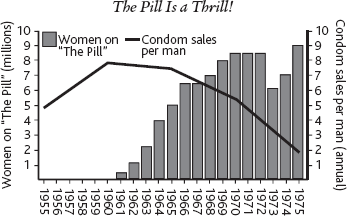

Developed by an American biologist named Dr. Gregory Pincus, the combined oral contraceptive pill quickly emerged as the most important advance in birth control since the condom, with an estimated failure rate of only 0.3 percent with perfect use, compared to 2 percent for condoms. The Food and Drug Administration approved The Pill for contraceptive use in 1960, and it’s hard to overstate its popularity: the number of American women taking The Pill surged from 400,000 in 1961 to 10 million in 1975, making it the most popular form of birth control. In 1973 there was a temporary dip in sales after studies confirmed that the drug caused blood clots in a small number of users, but for most, the benefits still outweighed the risks. The only real problem with The Pill stemmed from its success. It was so effective as birth control that people stopped using pesky, inconvenient condoms. As “full-contact” premarital sex and promiscuity increased, so did the rates of sexually transmitted diseases.

With all of this promiscuous sex going on, there were plenty of other things to worry about too … like the decline of marriage. In 1969 California became the first state to allow “no-fault” divorces, and by 1975 all but five states had some kind of no-fault divorce statute. As the divorce rate doubled from 1955 to 1975, the number of people getting married in the first place declined by 15 percent, reflecting a new skepticism about the purpose and benefits of matrimony. During the same time period, the number of children born out of wedlock jumped from 4.5 percent of all births to 14.2 percent. Despite the availability of The Pill and other forms of contraception, there was also a huge increase in the number of legal abortions, facilitated by a handful of states adopting more liberal laws. In 1973 the Supreme Court made abortion legal in Roe v. Wade, overturning laws banning abortion in 30 states and touching off a bitter ideological conflict that still rages today.

The Pill Is a Thrill!

Happy Meals

Like many gifts from America to the world, McDonald’s is a mixed bag: on one hand, it’s gloriously greasy and delicious junk food that isn’t so good for you; on the other hand, it’s gloriously greasy and delicious junk food that isn’t so good for you.

Richard (Dick) and Maurice (Mac) McDonald opened their first restaurant in San Bernardino, California, in 1940. Initially, the McDonald’s Bar-B-Que was a pretty standard drive-in joint with waitresses serving guests who ate in their cars. But the brothers soon elevated the humble burger to an art form. In 1948 they revamped the restaurant as a self-service drive-in–losing the waitresses, replacing silverware with plastic, and ditching most of the menu to focus on their nine best sellers, especially the 15-cent hamburger. The next year, they replaced potato chips with French fries, another instant classic, and started offering milkshakes.

The McDonald’s Bar-B-Que remained a local operation until 1954, when the brothers were visited by Ray Kroc, a restaurant equipment salesman who was impressed by the restaurant’s efficiency and popularity. Kroc struck a deal with the brothers to turn McDonald’s into a national franchise, and in 1961 he bought them out for $2.7 million. Under his leadership, the McDonald’s Corporation expanded like the American waistline: from 9 restaurants in 1955, the number jumped to 710 in 1965 and 3,076 in 1975.

The Big Mac was added to McDonald’s national menu in 1968. It was intended to compete with the popular Big Boy double-decker.

The chain’s success was due largely to Kroc’s obsessive management style. He standardized everything from the length and width of French fries to the architectural finishes of restaurants, including the iconic Golden Arches. Franchises got uniform ingredients from Kroc’s central supply and distribution system, and in 1956, he established control over new restaurant locations with a commercial real estate business, the Franchise Realty Corporation. This new corporation not only acted as a landlord for new branches, but it also provided a new corporate revenue stream. But Kroc was hardly done. In 1961 he established “Hamburger University,” a professional training program in Elk Grove Village, Illinois. Kroc also encouraged enterprise on the part of franchise managers–giving them free rein with local marketing while supporting their efforts with national advertising campaigns. And through a new series of television ads in 1966, a character named Ronald McDonald joined the cultural lexicon.

As the popularity of fast-food joints skyrocketed, American diets began to change. Per capita consumption of soft drinks surged from 11 gallons per year in 1955 to 30 gallons per year in 1975, the annual intake of red meat jumped from 107 pounds to 130 pounds, and “added fats” (like cooking oil, margarine, and butter) went from 45 pounds to 53 pounds. Unsurprisingly, the proportion of obese Americans increased from 10 percent in 1955 to 15 percent in 1975. Luckily, medical advances helped limit the damage as the number of fatal heart attacks decreased from five per 1,000 people in 1955 to four per 1,000 in 1975.

Ad Men

Science is great and all, but apparently, it’s got nothing on ad campaigns. When the U.S. Surgeon General finally confirmed in 1964 that smoking cigarettes–wonderful, stimulating, relaxing, sexy cigarettes–greatly increased the risk of dying of lung cancer, heart disease, and emphysema, tobacco companies responded with more sophisticated advertising. And Americans responded by lighting up.

The ill effects of tobacco, including its association with cancer and respiratory diseases, were suspected as soon as Europeans began using it regularly in the sixteenth century. But there was no way to measure the precise impact: most people didn’t live long enough for the effects of chronic tobacco use to show up, and the medical profession was only beginning to apply scientific methods to understanding disease. In the twentieth century, however, longer lives and the growing popularity of machine-rolled cigarettes led to an increase in tobacco-related disease.

After German researchers conducted pioneering investigations at the urging of Adolf Hitler (who hated cigarettes, on top of everything else), more than a dozen studies in the 1950s by the American Cancer Society (ACS) and others demonstrated a connection between smoking, lung cancer, and heart disease. But the American news media mostly neglected to report these findings–maybe because tobacco advertising revenues were the single biggest ad category in print and broadcast, ahead of automobiles. Meanwhile, tobacco companies mounted a furious counterattack, playing up tobacco’s patriotic association with American history and funding studies that blamed the rising prevalence of lung cancer on other plausible culprits, like increasing air pollution. In 1953 the industry came together to create the Council for Tobacco Research, which sought to win over the scientific community with generous research grants, followed in 1958 by the Tobacco Institute, whose main mission was neutralizing negative PR. Beginning in 1954, the industry also trumpeted the cigarette filter, which supposedly made tobacco “safe” (it didn’t).

In 1957, U.S. Surgeon General Leroy E. Burney publicly stated the U.S. Public Health Service’s belief that smoking causes lung cancer. But the ACS and the American Heart Association–dissatisfied with media coverage–demanded stronger statements and regulation. Encouraged by a scathing report from Britain’s Royal College of Physicians in 1962, U.S. Surgeon General Luther L. Terry convened an expert committee, which reviewed thousands of scientific studies and in 1964 concluded, again, that smoking indeed causes lung cancer. This time, however, there was no way the media could dodge the news: the report made newspaper headlines and was the lead story on every broadcast news program. Among other things, the report warned of a 20-fold increase in the risk of lung cancer for heavy smokers, but also noted greatly diminished risk if they quit.

The report certainly got people’s attention, but that doesn’t mean everyone took it to heart. In 1966 a Harris Poll found that less than half of adults believed smoking was a “major” cause of lung cancer. Meanwhile, the tobacco industry opened the advertising floodgates even further, with total ad spending jumping from an already huge $115 million in 1955 to a whopping $263 million in 1965. Thanks to its efforts, total cigarette sales soared from 386.4 billion to 521.1 billion over the same period. Shameless as always, tobacco companies became especially effective at marketing cigarettes to women, touting them for weight loss and positioning them as part of women’s liberation (really).

Babe Ruth, Lucille Ball, Ronald Reagan, The Flintstones, and even Santa Claus all appeared in cigarette ads on television.

In 1964 the Federal Trade Commission banned deceptive advertising by tobacco companies and called for mandatory warnings of tobacco’s health effects in advertising and on cigarette packaging–but tobacco industry lobbyists were able to derail these rules with help from pro-tobacco legislators, delaying the new warnings and watering down the wording. Congress finally mandated stronger wording in 1969 and banned TV and radio ads for tobacco products in 1970. But the industry still enjoyed free rein in print media and billboard ads and managed to come up with ingenious substitutes for broadcast advertising, including sponsorship of sporting events, concerts, contests, sweepstakes, and point-of-sale promotions (like eye-catching posters and displays in stores). By 1975 the industry was spending nearly $500 million a year on advertising and promotion.

Once again, it was money well spent: from 1965 to 1975, cigarette sales increased steadily from 521.1 billion to 603 billion. Of course, there were terrible human consequences. The death rate from lung cancer more than doubled from 17 per 100,000 people in 1955 to 37 in 1975, and this was just part of the total toll; overall, the years 1955–1975 saw seven million tobacco-related deaths in the United States. Meanwhile, during the same period, annual industry revenues almost tripled, from $5.3 billion to $14.8 billion, for cumulative revenues of over $200 billion, or about $28,500 per fatality.

This Is Your Country on Drugs

As if gonorrhea, cheeseburgers, and cigarettes weren’t unhealthy enough, Americans also experimented with a colorful cornucopia of illegal drugs in the 1960s and 1970s, breezily disregarding the fact that many of them were illegal for a reason. While a lot of people had a good time, and some even “expanded their minds,” there were also thousands of cases of fatal overdoses, nasty blood diseases, and terrible accidents, and God only knows how many bad trips (literally: he was there for many of them).

The most popular illegal drug in the United States, hands down, was and is marijuana–also known as pot, bud, Buddha, cheeba, chronic, dank, dolo, dope, endo, ganja, grass, green, hay, herb, jive, kaya, leaf, lobo, loco, Mary Jane, reefer, rope, sess, skunk, smoke, sticky icky, tea, weed, and whacky tobaccky. After being introduced to America by Mexican laborers, who passed the pipe with poor blacks in New Orleans around 1910, the habit followed jazz musicians to Chicago and then radiated to Eastern cities in the 1920s and 1930s, where marijuana was a popular alternative to alcohol in the Prohibition era.

During this period, marijuana use was limited almost exclusively to African-Americans and Mexican immigrants, who made easy targets for a law enforcement apparatus left idle by the end of Prohibition. Newspapers helped whip white fears into a frenzy with lurid, largely fictional reports of “crazed Mexicans” and “wild-eyed Negroes” raping and murdering unsuspecting victims while in the thrall of the drug’s “delirious hallucinations.” While this makes strange reading juxtaposed with the current “stoner” stereotype, most readers didn’t know the first thing about the drug (or Mexicans, for that matter). The result was a federal law banning marijuana in 1937, which conveniently gave the newly created Federal Bureau of Narcotics something to do.

Marijuana use didn’t cross over to white America until the late 1940s, when it was adopted by members of the emerging “Beat” subculture, and it remained fairly uncommon until the 1960s, when it became ubiquitous almost overnight in the hippie subculture. The annual number of first-time users tripled between 1960 and 1965, reaching 600,000, then surged to 2.5 million in 1969 and nearly 3.5 million in 1972, and stayed near that level for the rest of the 1970s. In total, from 1960 to 1975 over 28 million Americans experimented with marijuana, equal to 13 percent of the population in 1975, with a good number–around 14 million–returning for follow-up experiments.

That same period saw a 10-fold increase in the number of heroin addicts in the United States, from about 55,000 to 550,000. While the majority of users lived in poor urban areas, with especially high rates of addiction among inner-city African-American populations, heroin also made inroads in the white middle class, particularly among teenagers and young adults, who experimented with the drug on college campuses. Popular Beat writers like Jack Kerouac gave heroin cultural cachet as a cool, glamorous drug. Hundreds of thousands of young men were also exposed to cheap, high-grade heroin in Vietnam, with one study estimating that 15 percent of U.S. troops were regular users in 1971. Though most returning soldiers kicked the habit, some hard-core addicts introduced it to friends back home, especially in the ghettoes, where heroin use was already established. By 1971 the U.S. government estimated that American heroin addicts were spending over $2.7 billion a year on the drug. Heroin-related deaths in New York City (home to almost half the country’s addicts) soared from 199 in 1960 to a peak of 1,409 in 1972, thanks to a spike in overdoses and the increasing prevalence of two dangerous new diseases, hepatitis A and B, which spread among junkies sharing needles.

The CIA helped a former Chinese Nationalist, Khun Sa, carve out an opium-growing empire in Burma and transported his high-grade heroin to local markets aboard the agency’s secret air force, “Air America.” The CIA used its share of the profits to fund off-the-books

The most emblematic drug of the 1960s had to be lysergic acid diethylamide, or LSD. While marijuana and heroin were about feeling good, LSD transformed people by “opening their minds.” The effects of this man-made hallucinogen can only be described as bizarre, including vivid visual and auditory hallucinations, novel corporeal sensations, out-of-body experiences, synesthesia (“hearing colors” or “tasting sounds”), and heightened sensitivity to time and space. People took LSD in the hope of grasping profound truths, and some reported success, relating feelings of union with nature, revelations of their own individuality, and healing of old emotional traumas.

Awesome, right? Well, except for the plethora of terrifying “bad trips”–seemingly endless waking nightmares characterized by paranoia, despair, alienation, and the loss of one’s sense of self. This wasn’t a rare occurrence: there were so many bad trips at Woodstock that the organizers set up a row of tents where doctors and volunteers could take care of hundreds of people who were “freaking out” (including one of the organizers). Some doctors have also suggested that psychedelic drugs can trigger full-blown schizophrenia.

Facing a rising tide of illegal drug use, in 1971 Richard Nixon declared “war on drugs,” calling the abuse “public enemy No. 1.” In 1973 he created the Drug Enforcement Administration, the successor to the Federal Bureau of Narcotics, to coordinate the efforts of Customs, Treasury agents, the FBI, and local law enforcement to stop the flow of drugs into the United States from abroad. But the feds were no match for the huge demand and profit potential of illegal drugs: while marijuana seizures along the Mexican border soared from 6,432 pounds in 1963 to 451,800 pounds in 1973, the latter figure represents just 2.5 percent of the estimated 8,750 tons of marijuana consumed in the United States that year.

Illegal drugs weren’t the only substances being abused by Americans in the 1960s and 1970s: the number of Valium prescriptions written per year in the United States rose from 4 million in 1964 to 61 million in 1975.

All the Single Ladies

Although the 1950s and early 1960s are usually thought of as a dormant period in the women’s rights movement, they saw an acceleration of the trends that began during World War II. In 1955, 19.3 million women worked, making up 33 percent of the U.S. workforce; by 1965 the number had risen to 28 million, or 39 percent of the workforce. The number of women attending college also rose sharply, thanks to the integration of previously all-male universities: female undergrads more than doubled from 900,000 in 1955 to 2.15 million in 1965–increasing from 35 percent to 39 percent of the total.

These trends set the stage for yet another social upheaval. As working women gained greater economic independence, and with it more say in their relations with men, they couldn’t fail to notice the barriers to professional advancement and the economic discrimination they faced–on average women made just 61 percent of what men earned for comparable work in 1960. Likewise, women who graduated from college were better educated but had little hope of landing higher-paying jobs or academic positions.

The first salvo in this new feminist movement was fired by Betty Friedan, the daughter of a jeweler in Peoria, Illinois. Friedan, who experienced anti-Semitism as a young woman, was first swept up by radical leftism. After attending Smith College and Berkeley in the late 1930s and early 1940s, she wrote for a left-wing union publication but was fired in 1952 because she was pregnant. At loose ends, in 1957 she organized a survey of her Smith College classmates at their 15th reunion and found a deep current of dissatisfaction with their lives as housewives. This outpouring of unhappiness inspired her groundbreaking work, The Feminine Mystique; published in 1963, the book criticized modern society for confining women to the home when their education and ambition would allow them to do much more, and let women in this position know they weren’t alone. Friedan also attacked “penis envy” and other notions proposed by Sigmund Freud, then the dominant thinker in American psychology.

The problem lay buried, unspoken, for many years in the minds of American women. It was a strange stirring, a sense of dissatisfaction, a yearning [that is, a longing] that women suffered in the middle of the twentieth century in the United States. Each suburban wife struggled with it alone. As she made the beds, shopped for groceries … she was afraid to ask even of herself the silent question–“Is this all?”

–Betty Friedan, The Feminine Mystique, 1963

Meanwhile, women had been paying close attention to the civil rights movement, which provided a model for organized action to combat widespread discrimination. In 1966 Friedan became co-founder of the National Organization for Women (NOW), which lobbied for legislative reforms. Some of the reforms piggybacked on bills first proposed by black civil rights activists, including a clause prohibiting discrimination on the basis of gender added to the Civil Rights Act of 1964. But like the civil rights activists, NOW lobbyists found that legislative reforms that looked good on paper were often difficult to enforce: the 1963 Equal Pay Act, which aimed to abolish wage discrimination based on gender, didn’t have a visible impact for more than 20 years.

Frustrated by the limited success of legislative reform, the feminist movement became increasingly radical over the course of the 1960s, again paralleling the civil rights and anti-war movements. In fact, many feminist leaders experienced their “political awakening” pursuing these other causes: Gloria Steinem, another Smith alum turned freelance journalist, became involved in politics in 1968 while working on the Democratic primary campaign of George McGovern. In 1969 Steinem won fame as a feminist leader with her uncompromising defense of abortion rights, now at the center of the “Women’s Liberation” movement. She also testified to Congress in support of the Equal Rights Amendment (ERA), which stated, “Equality of rights under the law shall not be denied or abridged by the United States or by any state on account of sex.”

Although the ERA was never ratified by the required three-quarters of the states, feminists won a huge victory in 1973 with the Supreme Court’s decision in Roe v. Wade, effectively legalizing abortion in all 50 states. In its wake the National Abortion Rights Action League partnered with the ACLU to bring lawsuits forcing public hospitals to offer abortions–but Roe v. Wade remains very controversial, bitterly opposed by “pro-life” activists and conservatives who argue that the Supreme Court overreached.

Roe v. Wade didn’t come quickly enough to help Norma McCorvey, aka Jane Roe. She gave birth to a baby girl, who was given up for adoption. McCorvey, who later accepted baptism and communion in the Catholic Church, is now a pro-life advocate.

Otherwise, progress during this period was slow but significant, as women came to occupy more management and academic positions. But there were still blatant disparities: in 1975 women earned just 62 percent of what men were paid–and men still expected working spouses to handle traditional duties like housework and raising kids.

other people’s stuff

Hooray for Race Wars

Not that kind of race war, silly! Because the Cold War never involved direct armed conflict between the rival superpowers, the United States and the Soviet Union sublimated their aggression into “races” that gave them an excuse to spend lots of money, while allowing them to measure “progress” with enough ambiguity that both sides could claim to be winning.

The Arms Race

If you can’t actually declare war and whup on your enemy, the next best thing is accumulating colossal military power and loudly declaring that if there were a war, you would totally whup them worse than they could whup you. The great thing about this strategy is you never have to find out if it’s true or not.

The idea of the “arms race” is as old as hating your neighbor, but with its long history of isolationism, the United States was a relative newcomer to the concept. On those few occasions that the United States had previously faced a serious military challenge, like World War I, it quickly assembled formidable armed forces that were then dismantled when peace returned. But World War II and the ensuing Cold War–where the global march of communism seemed to repeat the pattern of Axis aggression–changed this mindset forever. From now on, the United States would have to maintain a large standing army, navy, and air force to keep “the Reds” from getting any ideas.

The centerpiece of the “deterrence” strategy was a large nuclear arsenal, and we do mean large: from six fission bombs in 1945, the U.S. stash grew to include 3,057 fission and fusion warheads by 1955 and 31,642 by 1965. The number of high-yield (multi-megaton) devices peaked around 1960; supposing 500 high-yield bombs could wipe out human life on earth, it seems the U.S. nuclear arsenal had enough firepower that year to destroy civilization five times over, give or take an apocalypse.

In 1951 President Truman announced the launch of CONELRAD, or the Control of Electromagnetic Radiation system of emergency notification. To keep Soviet bombers from homing in on American radio signals and using them as beacons, all radio stations would cease broadcasting after an alert from the White House.

A GOVERNMENT BY THE PEOPLE, FOR THE PEOPLE, HIDDEN VERY FAR BENEATH THE PEOPLE

In case the Soviets pulled off a first strike, the U.S. government had plans to carry on the fight. Presuming they had enough warning, senior officials would be evacuated by helicopter from Washington, D.C., to top-secret bunkers equipped with their own power plants and stockpiles of food, water, and fuel, to direct the continuing war and reconstruction. The Pentagon had plans to relocate to “Site R,” a 700,000-square-foot complex beneath 650 acres of rolling hills at Raven Rock in Pennsylvania’s Blue Ridge Mountains. Meanwhile, the president, his cabinet, and the Supreme Court would be flown to “High Point,” a 600,000-square-foot bunker under Mount Weather, Virginia, equipped with a hospital, TV studio, and five-foot-thick, 34-ton steel blast doors, completed in 1958. As for the U.S. Congress, members were to be evacuated to “Casper,” a 112,000-square-foot bunker located beneath the exclusive Greenbrier resort in White Sulphur Springs, West Virginia, where precise replicas of the House and Senate chambers were completed in 1962.

Part of the reason for this absurd overproduction was the American military’s fear that the Soviets might try to take out the U.S. arsenal with a surprise attack. This paranoia led the Strategic Air Command (SAC) to carry out the “Airborne Alert,” one of the most remarkable logistical operations in history. To ensure that the United States was always prepared to strike, from 1958 to 1968 waves of long-range, jet-powered B-52 bombers continuously took off from SAC bases in the Midwest, flew over Canada as far as the North Pole, and then turned around and headed home; in the event of war, they were to continue onward to targets in the Soviet Union. There were always at least a dozen bombers in the air at any time of night or day for 10 years.

By the early 1960s, the Americans and Russians had come up with an even better way of delivering horrific mass destruction: long-range ballistic missiles. Wernher von Braun’s team of ex-Nazi rocket scientists (relocated to Huntsville, Alabama, by the U.S. military after World War II) designed the short-range Redstone, deployed by the U.S. Army in 1958 and limited to targets within a 200-mile radius, and the missiles that followed grew progressively more impressive: by 1962, the Minuteman I had a range of 8,100 miles. By the end of the 1960s, most of the SAC’s nuclear strike capability had shifted to intercontinental ballistic missiles (ICBMs), which could reach Soviet targets from missile bases in the United States in 30 minutes. The United States also deployed Jupiter and Pershing intermediate-range missiles in Western Europe and the submarine-based Polaris. In keeping with the arms race mentality, however, the U.S. Air Force retained a large fleet of B-52s, because you can never have too many ways to kill everyone five times over.

Of course, nuclear weapons were only part of the arms race: both sides also prepared to fight good old-fashioned “conventional” wars with each other or with each other’s proxies (pawns), as in the Vietnam War. But the Vietnam disaster eroded public support for the U.S. armed forces, leading to a decrease in defense spending, especially after Congress cut off all financial support for military operations in Southeast Asia in 1973. By 1970, Soviet defense spending had already passed U.S. defense spending, although the Kremlin’s notorious secrecy meant American analysts didn’t figure this out until later that decade.

The Space Race

The arms race wasn’t the only U.S./Soviet pissing contest. The space race was another great excuse to spend boatloads of taxpayer money to demonstrate to the world how much bigger and manlier the United States was.

The Soviets kicked off the space race by delivering a series of humiliating defeats that wounded American pride. In 1957 they launched the first man-made satellite, Sputnik, and put the first living creature in orbit (the space dog Laika). But their greatest triumph came on April 12, 1961, when a 27-year-old test pilot, Yuri Gagarin, became the first human being in space. The U.S. news media freaked out over every Soviet victory: Orbiting nukes! Russian moon bases! Communist dogs in space! America couldn’t afford to have the universe go Red. There was some serious catching up to do.

Space dog Laika bravely gave her life for the people’s revolutionary cause. The first Soviet space dog to return to Earth safely, Strelka, later gave birth to six “pupniks.” Nikita Khrushchev gave one of them to First Daughter Caroline Kennedy in 1961.

The first move was the 1959–1963 Mercury Project, in which the new National Aeronautics and Space Administration (NASA) carried out the first manned American spaceflights. Astronauts recruited from the U.S. Air Force and Navy were carried into outer space in small capsules lifted by Redstone rockets and, for the orbital flights, by more powerful Atlas rockets. Alan Shepard became the first American in space, with a brief flight on May 5, 1961, and on February 20, 1962, John Glenn became the first American to orbit the Earth. Separately, in December 1962 the Mariner 2 probe became the first spacecraft to visit another planet, with a flyby 22,000 miles from Venus (in solar system terms, that’s a visit).

All this was a preamble to the main event, the Apollo Program (1960–1972), which fulfilled the ancient human quest to travel to the moon. This epic effort required nine years of intensive research and development, which produced hundreds of useful inventions, including the flight computer (boasting a massive 36K of memory), computer-controlled machine manufacturing, the first practical electrolytic fuel cell, water purification and dialysis, flame-retardant synthetic fabrics, radiation shielding, and freeze-dried food. But the most formidable engineering achievement was the Saturn V rocket. Standing 36 stories tall and 33 feet wide, and weighing in at 3,350 tons, the Saturn V was actually three stacked rockets, which fired in sequence, each one falling away once it was spent. The first stage alone was powered by five engines with a combined 160 million horsepower, equivalent to about 300,000 big rigs.

After various test missions, on July 16, 1969, the first two stages of the Saturn V rocket lifted the 32-ton Apollo 11 command and lunar landing modules (named Columbia and Eagle) into Earth orbit with astronauts Neil Armstrong, Michael Collins, and Edwin “Buzz” Aldrin aboard. The third stage pushed the spacecraft beyond Earth’s immediate gravitational field to arrive in orbit around the moon on July 19. Over the next 60 hours, while Collins remained in Columbia making photographic surveys, Armstrong and Aldrin descended to the lunar surface in Eagle to collect moon rocks, set up equipment for seismic measurement and laser telemetry from Earth, and observe the effects of the low-gravity environment. During the 2.5-hour moonwalk, they also left behind an Apollo 11 mission patch, a gold olive branch, a silicon disk with messages of goodwill from world leaders, a memorial plaque, and–perhaps most importantly–an American flag.

Neil Armstrong’s astronaut application arrived almost a week past the June 1, 1962, deadline. A friend of his who worked at the Manned Spacecraft Center slipped the tardy form into the pile before anyone noticed the postmark.

The lunar landing was a foreign policy triumph. At a time when televised images of the losing battle in Vietnam were being broadcast around the world, Apollo 11 provided undeniable proof of American wealth, power, and technical skill in the form of a riveting live event, watched by about 600 million people worldwide.

Havana Terrible Decade

The United States gets blamed for a lot of things it didn’t do, but in the case of Cuba, the accusations are almost all true. Like, putting the mob in charge of an entire country. That’s pretty much what happened under the rule of General Fulgencio Batista, an ex-president of Cuba who seized power in 1952 with the backing of both the U.S. government and the powerful Jewish mob financier Meyer Lansky. Batista appointed Lansky “minister of gambling” and offered to match any outside investment of $1 million or more in the hotel business (read: casinos), while police turned a blind eye to prostitution and drug smuggling. From 1952 to 1959, Havana was a Mafia playground, with Batista taking a 10 percent–30 percent “skim” off the brothels (which employed 10,000–12,000 prostitutes), the 13 mob-controlled casinos, and the various allied criminal enterprises.

If you’re wondering what was going on in the rest of Cuba in this period, you’ve already shown more initiative than the mobsters, who displayed a remarkable lack of curiosity about everything outside of their Havana fiefdoms. This was shortsighted, because what was going on was revolution. Outside the cities and towns, Cuba remained backward and divided, with large numbers of peasants exploited by a small group of landowners. Basic services in the countryside were primitive or nonexistent. Three-quarters of the children in rural areas didn’t attend school, and 43 percent of agricultural workers were illiterate. Three-quarters of rural dwellings lacked running water.

The spread of communism in the 1920s added an incendiary new element to the already volatile mix. But the figure most associated with Cuban communism, Fidel Castro, didn’t embrace the ideology until late in his revolutionary career. In fact, Castro’s political mentor, Eduardo Chibas, was a staunch anti-communist, whose Ortodoxo Party simply advocated reforms against corruption and an end to U.S. domination. As for Castro, he married the daughter of a rich family who lavished money on him, funding a long trip to New York–which he seemed to enjoy without qualms–and he was also happy to receive gifts from his friend Batista.

But when Batista’s 1952 coup frustrated Castro’s political ambitions, Castro turned against his friend and was eventually forced into exile in Mexico. When he returned to Cuba with the Argentine revolutionary Che Guevara in December 1956, there was still no trace of communism in his revolutionary program. As Castro’s guerrilla army battled Batista’s troops in 1957 and 1958, he benefited from favorable coverage by the American media, who were further encouraged by his 1958 promise not to seize foreign investments. After several rebel victories, Batista fled the country on January 1, 1959, taking with him an astounding $300 million. Oh, and he made sure his old friend Lansky got out safely too.

I don’t agree with communism. We are democracy. We are against all kinds of dictators … That is why we oppose communism.

–Fidel Castro, 1959

In the first half of 1959, Castro still presented himself as a nationalist rather than a communist revolutionary–but when President Eisenhower rebuffed the new Cuban government’s requests for aid, Castro turned to the Soviets, who were only too happy to get a foothold right off the coast of the United States. Although cooperation was limited at first, Soviet aid to Cuba set off alarm bells in Washington, D.C., and relations spiraled downward through 1960: the United States imposed a trade embargo, Castro seized American businesses in Cuba, and he started receiving weapons from the Soviet Union. In January 1961, Eisenhower broke off diplomatic relations … which of course pushed Castro further into the Soviet camp. With the rift now unbridgeable, in April of 1961 President John F. Kennedy gave the green light for a CIA-created army of Cuban exiles to invade the island with the goal of toppling Castro. The far-fetched plan ended in debacle: it turned out the spot selected for the landing, the Bay of Pigs, was Castro’s favorite fishing spot. Big mistake. The “secret” incursion was discovered immediately, and JFK sealed its doom by withholding critical air support. The tiny invasion force was mopped up by the Soviet-armed Cuban military in three days.

Kennedy scrambled to contain the public outcry over this defeat (it was especially embarrassing in light of a pledge he’d made just five days earlier not to invade Cuba), but things were only going to get worse. Distrusting the United States more than ever, in September of 1962 Castro allowed the Soviet premier, Nikita Khrushchev, to secretly station medium-range nuclear missiles in Cuba, where they threatened the entire American East Coast. Ironically, Castro believed this would deter future invasions.

On October 15, 1962, an aerial reconnaissance by a U-2 spy plane revealed the existence of the missiles, and it quickly became clear that Cuba had miscalculated. Already humiliated, Kennedy was not going to tolerate another communist affront in “America’s backyard.” The Cuban Missile Crisis was now in full swing, and for two weeks in October 1962, the world teetered on the brink of nuclear war. On Sunday, October 21, Kennedy ordered a naval blockade to prevent Soviet ships believed to be carrying nuclear weapons from reaching the island. He also ordered a general mobilization in preparation for a full-scale invasion. As follow-up U-2 flights revealed the presence of Soviet fighter planes, bombers, and cruise missiles in Cuba, and the U.S. Navy prepared for a standoff with Soviet ships in the mid-Atlantic, Kennedy and his advisors weighed their options.

On Monday, October 22, Kennedy advised the American public of the unfolding crisis in a televised address. This triggered mass hysteria as Americans mobbed supermarkets to stock hastily improvised fallout shelters. On Tuesday, new reconnaissance showed some of the Soviet missiles were ready to launch, but Kennedy decided to pull the naval blockade back, giving Khrushchev more time to negotiate. On Wednesday, most of the Soviet ships slowed down or reversed course, in a small matching concession.

On Thursday, Kennedy sent a letter to Khrushchev blaming Soviet actions for creating the crisis and demanding the removal of all offensive missiles from Cuba. On Friday, Khrushchev appeared ready to compromise, offering to withdraw the missiles from Cuba in exchange for a public pledge from Kennedy never to invade Cuba. Forcing everyone to keep working all weekend, on Saturday Khrushchev also demanded removal of American Jupiter nuclear missiles from Turkey as an added condition. Kennedy sent a letter to Khrushchev in which he agreed to the first condition (promising not to attack Cuba). Finally, on Sunday, October 28, Khrushchev announced in a radio broadcast that he would remove the missiles from Cuba. A few months later–without much commotion–American Jupiter rockets were quietly withdrawn from Turkey as well.

Coup and the Gang

Oh, the leaders you’ll shoot. Since both the United States and the Soviets were sure that the fate of the world depended on them, they felt justified in using pretty much any methods they pleased. Here are a few of the CIA’s lowlights:

* Congo, 1960. The Belgian Congo was led to independence in June 1960 by 34-year-old Patrice Lumumba, a democratically elected leader whose socialist rhetoric alarmed U.S. officials. President Eisenhower told CIA chief Allen Dulles that Lumumba should be “eliminated.” Dulles sent two teams of assassins to Congo, armed with guns and poison. Neither team got a shot at Lumumba, but the CIA still got their man. In September of 1960, Lumumba was overthrown by Colonel Joseph Mobutu with help from the CIA. Lumumba fled but was captured and murdered in January 1961 by Belgian troops at the behest of the CIA. Mobutu (who took the name Mobutu Sese Seko) went on to be one of the most brutal and corrupt dictators of the twentieth century, which is saying a lot.