2

The Universe started to explode as hot, dense material made up of fundamental particles. Our comprehension of just how those particles interacted has developed with advances in scientific understanding of atomic, nuclear and particle physics since the last century. The first recognizably modern notions were inspired by what happened in much smaller explosions – those of atomic bombs.

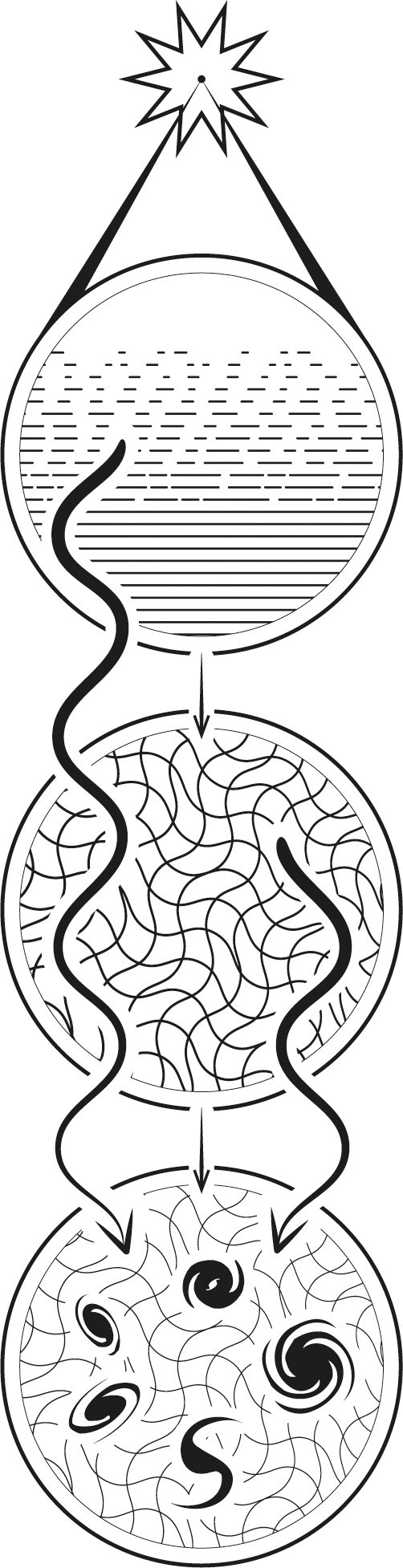

At birth, the Universe rapidly inflated and exploded, creating gravitational radiation. Later, the explosion cooled and released the Cosmic Microwave Background radiation, showing the embryonic patterns in which galaxies formed.

Ylem: the formation of matter

George Gamow (1904–1968) was a Russian-American theoretical physicist, educated in Russia in the 1920s at the time that the theories of relativity and quantum mechanics were being developed. As a university student he became interested in nuclear physics and tried more than once to leave the poverty-stricken and oppressive life in the Soviet Union. On one occasion he set out with his wife to paddle across the Black Sea from the Crimea to Turkey in a kayak made of rubber stretched on a frame of sticks. They carried food for five days, including strawberries and two bottles of brandy, indicating Gamow’s joyful attitude to life, his daring confidence and his profound, and in this case unfulfilled, optimism. On the second day, a storm arose and they were blown back onto shore, requiring them to concoct a story to cover up their escape attempt.

In 1933, as a respected Russian intellectual, Gamow was ordered by the Soviet authorities to attend a scientific conference in Brussels and he managed to arrange for his wife to accompany him. They both defected and moved through a succession of temporary academic jobs in France and England, before ending up in the USA. Gamow applied his knowledge of nuclear physics to astrophysics and then, during the Second World War, to nuclear explosions. Like Georges Lemaître, he turned away from his employment in warlike activities towards cosmology and brought together these various kinds of physics into the study of the explosion of Lemaître’s primeval atom in the Big Bang (see Chapter 1). He worked with a number of distinguished scientists, becoming influential both in the work that he did himself and in the way that he affected the wider development of cosmology.

Gamow’s most famous work on the nature of the Big Bang was carried out through his association with his American student Ralph Alpher (1921–2007), with the participation of American scientist Robert Herman (1914–1997): in the 1940s they all worked in various capacities at what was then a US government defence laboratory, the Applied Physics Laboratory in Maryland, in the suburbs of Washington DC. Alpher and Herman were employed to work on proximity fuses for torpedoes and antiaircraft gunfire; Gamow was a consultant on explosions. Because of its association with defence, their work on the Big Bang took place behind incongruously guarded gates.

The three of them started out by imagining that for the first milliseconds of its existence, the Universe was a dense maelstrom of incredibly hot fundamental particles bathed in hot, energetic radiation. It expanded and grew. The particles moved quickly and collided fiercely, interacting, changing dynamically from one to another. The characteristics of the mixture of particles altered progressively as the explosion expanded, cooled and became less dense.

Alpher called this material ylem, a term derived from a Latin word used by medieval theologians for the primordial substance from which everything was made. In modern terms, ylem was a hot plasma of fundamental particles: quarks, gluons, electrons and neutrinos (see Chapter 13).

Dark matter

There was another constituent of the material of the Universe that was made in the Big Bang; we know far less about it although it was crucial to the way the Universe developed immediately afterwards, as well as in the way the Universe behaves to the present day. That component is known as dark matter.

Dark matter is similar to ordinary matter in that it generates and responds to the force of gravity in the same way. In interactions between particles of matter, energy is often released in the form of radiation or light, so if dark matter does not interact, it generates no light. This is why it is called ‘dark’: it betrays little sign of its existence apart from its effects through the force of gravity. There is more dark matter than ordinary matter, so it is very important in ruling the Universe through gravity, but, except by gravity, it interacts very little indeed with ordinary matter.

The gravitational effect of dark matter on ordinary matter – stars and galaxies – is the way that it was discovered. The first indications came in 1933 through the work of Swiss-American astronomer Fritz Zwicky (1898–1974) at the California Institute of Technology (Caltech) in Pasadena. Zwicky was a man who was known for both his originality of thought and his irascible manner. He studied the largest agglomerations of matter in the Universe, namely clusters of galaxies.

Galaxies agglomerate into groups ranging in numbers from a few to many thousands. A group of a few galaxies can best be described as galaxies in orbit through and around each other. The larger clusters of galaxies number thousands and are abuzz like a swarm of bees. Zwicky examined such a cluster of galaxies in the constellation of Coma. He looked at the light from the stars of the galaxies and estimated the amount of mass that he could see. He also determined how fast the galaxies were moving and estimated the mass whose gravitational attraction would make them move that quickly. He found that the motions of the galaxies implied that their mass was four hundred times greater than expected from their luminosity, meaning that most of the matter in the cluster must be dark.

At first, the dark matter was thought to be dark stars, like black holes or neutron stars, but attempts to identify the precise type of star were negative. Most astronomers formed the easier conclusion that there was something wrong with Zwicky’s work, whose conclusions were controversial. Zwicky did not readily accept criticism, however, and rebuffed his critics.

As it turned out, Zwicky was right about dark matter in principle, although the numbers that he derived in 1933 have had to be updated. His estimate that there is four hundred times more dark matter than luminous matter turned out to be a considerable overestimate, even allowing for what is now known as the intra-cluster medium (hot gas revealed by its emission of X-rays, which was invisible to Zwicky at the time, there being no X-ray telescopes). Nevertheless, eventually, his general conclusion that there is much more dark matter than luminous matter in the Universe became accepted. Some of the key confirmatory work was carried out by Gamow’s student Vera Rubin, working at the Carnegie Institution in Washington DC.

American astronomer Vera Rubin (1928–2016) also had a career that included difficult relationships with some co-workers, although her problems lay in a completely different area from Zwicky’s. Naturally curious and observant as a child, she was educated at Vassar College in Poughkeepsie, New York State, then a college for women only where the concept of women astronomers was unremarkable, but after completing her education she found it hard to carry out research on equal terms with men. She struggled to break into the top level of astronomy, dominated by men as it was in the USA in the 1960s. She chose a specialism that seemed to be relatively uncontroversial, where she could get on with her work with less gender politics to fight. She investigated galaxies and their motions by use of spectroscopy and homed in on the problem of how galaxies rotate. Little did she realize that this would propel her into a deep controversy of the sort that she had wanted to avoid.

Working out how galaxies rotate had become a problem with the development of radio astronomy in the 1950s. When radio telescopes recorded the hydrogen gas moving in galaxies, radio astronomers found that generally the gas was moving too quickly compared with what would be expected from the mass that the galaxies contained. However, the interpretation of the radio observations was confused by limitations in the technology of radio telescopes, which were able to look at the entire galaxy all at once but were not able to compare the motions of individual parts of the galaxy (as a galaxy rotates, the stars on one side of it move towards the Earth and the stars on the opposite side move away, and this difference can reveal the rotational speed and period of the galaxy). Optical telescopes were not limited to the same extent, and Rubin decided to investigate the problem by using a spectrograph (a spectroscope that records a spectrum) on large optical telescopes – a suitable tool to use because it can measure the motion of stars in different parts of a galaxy.

Rubin’s investigation took on new capability when she teamed up with a talented maker of spectrographs, Kent Ford. His spectrograph was many times more sensitive than previously attained and could investigate the motions of stars in the very faint outskirts of spiral galaxies, the parts furthest from the centre of rotation. Rubin overcame the problems she encountered in gaining access, as a woman, to the observatories to which she could take the spectrograph and make the investigations she thought necessary. She recounted how she found that one observatory had no female toilets, so she commandeered a male toilet by cutting a skirt from a piece of black paper to stick onto the trousered male silhouette on the door to change its designation.

To her surprise she found that the stars in a typical spiral galaxy (see page 77) moved much faster than she would have anticipated based on her estimates of the mass of the galaxy. This was particularly true of the stars in the most distant reaches, where it would have been thought that, the gravity of the galaxy having been diminished by the inverse square law (see page 14), the stars would be moving slowest. In fact, they were typically moving at the same speed as, or even faster than, the stars further in. The stars in general, particularly the outermost ones, were moving so fast that they should have been flying off into space rather than circling their galaxy. The mass of the galaxy had to be much greater than she had estimated in order to keep the stars bound in their orbits, so there seemed to be something wrong with her calculation.

Rubin had estimated the galaxy’s mass by measuring the light that came from its stars, then calculating back to the mass of the stars that could produce that light. If there was more mass in the galaxy than that, it was not stars – it was dark.

Since Rubin’s work, the techniques of radio astronomy have improved and radio telescopes have been able to study the rotation of hydrogen gas clouds within spiral galaxies in their outermost regions where there are no stars at all, just tenuous gas moving with the stars in orbit around the galaxies. This confirmed and extended Rubin’s conclusion.

There was more of a contradiction than Rubin thought: light from the stars diminished with distance from the centre of the galaxy, but the rotation of many galaxies did not reduce at all. There must be proportionately more dark matter in the outermost parts of a galaxy, compared to the mass in the form of stars: a typical galaxy of stars is embedded in an outer halo of dark matter.
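The reasoning behind this conclusion can be made concrete with a rough calculation. The sketch below is illustrative only: it assumes circular orbits and a spherically symmetric distribution of mass, so that the mass enclosed within a radius r is v²r/G, and it uses hypothetical round numbers (a visible mass of 50 billion Suns and a constant rotation speed of 200 kilometres per second), not Rubin's measurements. The function names are likewise invented for this example.

```python
import math

G = 6.674e-11       # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30    # mass of the Sun, kg
KPC = 3.086e19      # one kiloparsec, m


def keplerian_speed(mass_kg, radius_m):
    """Orbital speed around a centrally concentrated mass: v = sqrt(G M / r)."""
    return math.sqrt(G * mass_kg / radius_m)


def enclosed_mass(speed_m_s, radius_m):
    """Mass needed inside radius r to hold a circular orbit at speed v."""
    return speed_m_s ** 2 * radius_m / G


# Hypothetical illustrative values, not taken from any observation.
visible_mass = 5e10 * M_SUN   # mass inferred from starlight
flat_speed = 200e3            # observed rotation speed, m/s, same at all radii

for r_kpc in (10, 20, 40):
    r = r_kpc * KPC
    expected_km_s = keplerian_speed(visible_mass, r) / 1e3
    needed_suns = enclosed_mass(flat_speed, r) / M_SUN
    print(f"r = {r_kpc:2d} kpc: visible mass alone gives {expected_km_s:5.0f} km/s; "
          f"holding 200 km/s needs {needed_suns:.1e} solar masses")
```

Under these assumptions, the speed expected from the visible mass alone falls as the square root of the distance, whereas keeping the speed constant requires the enclosed mass to grow in proportion to the distance. That growing gap is the dark halo.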

There is typically at least five times more mass in dark matter in the Universe than in stars and gas. This is not so dramatic a conclusion as Zwicky’s estimate of four hundred times, but a factor of five is still a large measure of our ignorance about the constituents of the Universe. The best modern guess about the composition of dark matter is that it comprises individual particles known as WIMPs (weakly interacting massive particles) or axions. It is thought that the particles were made in the Big Bang and, like the hydrogen made at the same time, persist even now. For dark matter to have the effects on the motion of galaxies that are calculated, there must be enormous numbers of these particles; perhaps 100 million of them pass through each of us every second. We do not notice this, so they truly must be ‘weakly interacting’.

WIMPs and axions were not conjured up by cosmologists out of the blue: they had been conjectured to solve some other problems in particle physics. They have never been successfully made or detected in particle physics machines, even the most powerful ones like the Large Hadron Collider at CERN in Switzerland, so their properties are almost entirely enigmatic, but, although their very existence is not proven, most physicists and astronomers want to believe in them, or something like them, to solve otherwise intractable problems in astronomy and cosmology.

Neutrinos in the cosmic fireball

Quarks came together to make the ordinary material of the Universe so that in the first second of its existence it consisted of neutrons and atoms of hydrogen in a bath of photons and neutrinos. The hydrogen atoms were in fact split apart into their constituents, their orbital electrons freed from their nuclei. Each hydrogen atom was split into a proton and an electron, floating freely. The interactions of the neutrons, protons and electrons contributed to the vast numbers of neutrinos in the first second of the cosmic fireball.

Neutrinos are tiny, electrically neutral particles that feature in nuclear reactions of various kinds; radioactive decay is the kind in which neutrinos were first found. When the nucleus of a radioactive element like radium decays, it emits an electron, which carries off energy in a process called beta decay. It was natural for physicists studying beta decay in 1911 to try to measure the energetics of this process as a clue to how it happens. This revealed that energy was also being carried off by another particle, of a type not identified until then.

The two who made the fundamental discovery that led to the recognition of neutrinos were German chemist Otto Hahn (1879–1968) and Austrian-born physicist Lise Meitner (1878–1968), working in Berlin in 1911. They were both members of German physicist Max Planck’s research group. Hahn had a straightforwardly brilliant and influential scientific career, including the award of a Nobel Prize. Meitner’s career was more challenging. She joined the group from Vienna where she had grown up as a member of a large Jewish family; she was educated privately because, as a woman, she was not allowed to attend a university. She went on to become a senior, although under-supported, scientist in the Berlin group. After her work on beta decay, she and Hahn turned to work on nuclear fission. It was for this work that Hahn was later awarded the Nobel Prize in Chemistry in 1944, but not Meitner.

Although Meitner had converted from Judaism to Christianity and was an Austrian citizen, her position in Nazi Germany became progressively untenable and in 1938 she fled to Sweden. Looking back on her life in Berlin, she was highly critical of those German scientists, her former colleagues including Hahn, who, effectively, collaborated with the Nazi regime if only by remaining silent; she did not exempt herself from the criticism. By contrast, Hahn was praised by Albert Einstein as ‘one of the very few who stood upright and did the best he could in these years of evil’. Having discovered and revealed the science of nuclear fission, Hahn was certainly tortured by the destruction and loss of life caused by the use of atomic weapons in Japan in 1945 and assumed the blame on his own shoulders. Looking back at this period of history, I am happy not to have been tested by being stretched on these ethical racks.

Meitner’s life was one of achievement, in spite of everything that conspired to make her life very difficult. In 1911, she and Otto Hahn found that the energy of electrons emitted in beta decay was typically less than the energy that the radioactive nucleus had lost. It seemed that the reaction violated the principle of the conservation of energy – energy seemed to have disappeared. Where had the lost energy gone?

The conundrum was solved in 1930 by Austrian physicist Wolfgang Pauli (1900–1958), then working in Switzerland. In a daring hypothesis, Pauli suggested that radioactive decay must emit not only an electron, but also an undetected second particle, which carried off the missing energy. Looking at all the other constraints, Pauli concluded that the particle had to be electrically neutral and (almost) massless. Pauli was aware of how outrageous this claim was, saying: ‘I have done a terrible thing, I have postulated a particle that cannot be detected.’ Nevertheless, he was correct. Even if he had not made other even more important discoveries in quantum mechanics, for which he was given the Nobel Prize in Physics in 1945, it seems likely that it would have been given for this work.

The newly identified particle was electrically neutral like a neutron. Discussing the new particle with fellow Italian physicist Enrico Fermi, physicist Edoardo Amaldi coined the name neutrino (Italian for ‘little neutral one’); Fermi gave the word currency. The neutrino was eventually detected in 1956 by American physicists Clyde Cowan and Frederick Reines by studying the particles emitted from the Savannah River nuclear reactor in South Carolina. Reines was belatedly awarded the Nobel Prize in Physics for this discovery in 1995, but so long had passed before the importance of the discovery was recognized that Cowan had died before the award was made, so he missed out (under the rules, a Nobel Prize cannot be awarded posthumously).

It is now known that there are three kinds (or ‘flavours’) of neutrino. They transmute spontaneously from one flavour to another in what are known as neutrino oscillations. Neutrinos scarcely interact with matter at all; one estimate for the neutrinos made in nuclear reactions in the Sun is that they would on average travel through light years of solid lead before being absorbed by it, so travelling through billions of light years of empty space is not a problem for them. Even in the incredibly dense Big Bang material, neutrinos were liberated about 1 second after the Big Bang. Once made and liberated, these neutrinos have journeyed through the Universe without being absorbed and survive even up to the present. There are calculated to be about 300 Big Bang neutrinos in every cubic centimetre (0.06 cubic inch) of space in our part of the Universe at this time.

It would be important to detect some of these ancient neutrinos because they are some of the oldest messengers about the Big Bang, carrying information from when it was about 1 second old. An experiment at Princeton University in the USA called PTOLEMY is being developed to try to do this. The acronym has been contrived for the clumsy name ‘Princeton Tritium Observatory for Light, Early-Universe, Massive-Neutrino Yield’ and refers to the Greek astronomer of the second century CE who lived in Alexandria in Egypt and promulgated what was – until Copernicus proposed the heliocentric theory in 1543 (see page 37) – the most widely accepted geocentric theory of the solar system, or the Universe as it was then understood. The acronym effectively lays the claim that the neutrinos that it hopes to detect will be similarly foundational for modern cosmology.

The first chemical elements

In his doctoral thesis, Alpher studied the properties of what he and Gamow called ylem. In 1946, he discovered that the elements could be made by nuclear fusion, the same process that occurs in a hydrogen bomb and in stars like the Sun. In that process, Alpher envisaged that neutrons are successively bonded together to build heavier and heavier elements. This could happen for as long as the Universe was both hot enough and dense enough – it had to be hot because heat made the neutrons move quickly and collide violently, and it had to be dense so that collisions took place frequently.

Gamow and Alpher wrote a paper describing this. Gamow was so struck by the sequencing of the process as neutrons built up elements one by one that he invited another physicist, Hans Bethe, to join the authorship of the paper, so that the authorship would be cited as Alpher, Bethe and Gamow (alpha, beta and gamma are α, β and γ, the first three letters in sequence of the Greek alphabet). Gamow added a playful footnote to the paper saying that Bethe was an author in absentia, giving the game away that Bethe was not an author at all. However, the footnote was omitted in the publication process. Bethe later regretted being added to a scientific paper on which he had done no work and that he afterwards had reservations about.

Alpher and Gamow thought that the fusion process would continue right down the list of chemical elements, building up quantities of all of them. This, they believed, was the origin of all the elements – all of chemistry would be traceable to the first few minutes of the Universe, in the effects of the Big Bang on its constituents. However, they were working with incomplete knowledge of nuclear physics and the characteristics of the Big Bang material. As a result of more detailed analysis, scientists concluded that, after less than a quarter of an hour, the temperature and density of the Universe had fallen to the point where nuclear processes stopped. The Universe would have subsequently expanded an enormous amount and cooled the radiation bathing the matter of the Universe to a low temperature. Alpher and Herman calculated that the temperature at the present time should be 5 degrees Kelvin above absolute zero. It was a good estimate: the radiation was detected in modern times and its temperature measured to be 2.725 degrees Kelvin.

The building of the elements in the Big Bang did not get much past lithium (element 3), with hydrogen by far the most abundant (element 1; about 92 per cent of the atoms) and helium in second place (element 2; about 8 per cent); lithium, beryllium (element 4) and boron (element 5) were present only in traces. The list of chemical elements at this time was thus, effectively, only two members long, only one of which is chemically active (helium is chemically inert). The Big Bang brought matter into the Universe and started to mould it into the first few chemical elements, but it was only through its later consequences that the remaining elements came into being, giving us the total of ninety-four naturally occurring elements we have today, and others that are too short-lived to persist and were or are only temporary.

The Universe had to evolve for hundreds of millions of years before chemistry became interesting. There was hydrogen but certainly none of the other elements that make chemistry, including biochemistry, work. However, almost two-thirds of the number of atoms in the human body, and 10 per cent of its mass, are hydrogen atoms. Most of them were formed in the first few minutes of the Big Bang. Much of the material of our flesh is thus directly traceable back through the life of the Universe to its birth. Our chemical origins are, in part, literally in the Big Bang, although the Big Bang was not in itself enough to make all the chemical elements needed for us to exist.

The cosmic fireball and the Cosmic Microwave Background

In the cosmic fireball, all the newly created particles, both ordinary matter and dark matter, were rushing about in the Big Bang material. The dark matter WIMPs did not interact much, but the atomic particles from ordinary matter interacted with each other in frequent collisions, with the electrons absorbing and re-emitting photons. The photons were highly energetic light and X-rays. There is a similarity between the cosmic fireball and the intense flash of light and heat in a hydrogen bomb.

Unlike the brief flash of a nuclear weapon, however, the cosmic fireball lasted for about 380,000 years, the photons bottled up inside the cloud of electrons. But as time passed, the cloud expanded and became cooler and less dense. The electrons settled onto the hydrogen and helium nuclei and formed atoms. At that stage, the photons hitherto trapped inside the cloud by the free electrons were able to escape, the cloud having become transparent. Those photons were able to travel across space from then to now, a length of time of about 13.8 billion years. Travelling undisturbed, they thus preserved an imprint of what the Universe looked like when they became free and escaped and so carry a picture to us of the Universe as it was, aged 380,000 years.

There was one major change in the photons that did not disturb the image they carried but did alter the way we can detect them. They were degraded by the expansion of the Universe during their travel. The wavelengths of the photons were lengthened by the expansion, so that they became less energetic, changing progressively from X-rays to ultraviolet light to light to infrared radiation and ending up here and now as microwave radiation. This is the same kind of radiation that powers a microwave oven and is used to link the towers of telecommunication networks. Microwaves are radio waves that have a wavelength within the range of about 1 millimetre to about 1 metre. The microwave photons that originated in the Big Bang impinge on us from all directions in space and form what is known as the Cosmic Microwave Background radiation (CMB).
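As a rough check on why this radiation now arrives as microwaves, one can apply Wien's displacement law, which gives the wavelength at which a glowing body of a given temperature is brightest. The sketch below assumes that the radiation has a thermal (black-body) spectrum and uses the measured present-day temperature of 2.725 degrees Kelvin quoted earlier in this chapter. The function names are invented for this example.

```python
WIEN_B = 2.897771955e-3   # Wien displacement constant, metre kelvin


def peak_wavelength_m(temperature_k):
    """Wavelength (in metres) at which a black body of this temperature is brightest."""
    return WIEN_B / temperature_k


def is_microwave(wavelength_m):
    """True if the wavelength lies in the 1 millimetre to 1 metre range."""
    return 1e-3 <= wavelength_m <= 1.0


t_now_k = 2.725                       # measured CMB temperature, kelvin
peak = peak_wavelength_m(t_now_k)     # assumes a black-body spectrum
print(f"Peak wavelength today: {peak * 1e3:.2f} mm; microwave: {is_microwave(peak)}")
```

On this assumption the radiation is brightest at a wavelength of just over 1 millimetre, at the short end of the microwave range.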

The CMB can be detected by radio telescopes and was first discovered in 1965 by two American radio engineers, Arno Penzias (b. 1933) and Robert Woodrow Wilson (b. 1936). They were systematically trying to identify all the sources of noise in a very sensitive receiver-antenna combination working at a wavelength of 7.3 centimetres. Working at the Bell Telephone Laboratories site in Holmdel, New Jersey, which was used for early experiments on communication satellites, they were able to eliminate or measure all the instrumental effects (including the effect of droppings from two pigeons that had decided to nest in the horn), but they always saw excess noise, which was not accounted for by any possible local source. They thought that it must have a natural origin and began to consider whether it was astronomical.

By chance, Penzias and Wilson stumbled across the origin of the microwaves when they were told by a colleague about remarks made by Princeton University physicist Jim Peebles (b. 1935) at an astronomy lecture at Johns Hopkins University in Baltimore. Peebles had described Gamow’s ideas about the fireball of the Big Bang and how the radiation that it generated would look now. This set Penzias and Wilson to think about the cosmic fireball as the source of the natural noise that they had measured. They had made a double Nobel Prize-winning discovery. One award was given to Penzias and Wilson in 1978 for finding the radiation, one to Peebles in 2019 for explaining it even before it had been found.

The CMB is remarkably uniform. It comes almost equally from every direction and its temperature is almost the same everywhere. In fact, these are the main reasons why astronomers concluded that the CMB comes from the Universe as a whole. This uses a line of argument called the Copernican principle, reflecting the way that Polish cleric and astronomer Nicolaus Copernicus (1473–1543) proposed a change to the then accepted position of the Earth. His theory, published in 1543, displaced the Earth from the centre of the Universe, with everything in orbit around our planet, to a position as one of the planets that orbited around the Sun. A succession of scientific advances has confirmed our mediocrity ever since: we are not at the centre of the solar system, we are not at the centre of our Galaxy, we are not at the centre of the Local Supercluster of the galaxies that surround us. We are inside the Universe, but there is no particular significance to our viewing point. Seeing something that is almost completely uniform all around us could lead us to think that we are at its centre, but if we think that this is ruled out, as it is by the Copernican principle, it can only look uniform if it is uniform everywhere, the same throughout the entire Universe – the microwave background must be something that pertains to the cosmos as a whole; that is what led to the term ‘Cosmic Microwave Background’.

Embryonic clusters of galaxies: the first structures revealed

Although the CMB is extremely uniform, it is not exactly uniform. It could not be: the material of the Big Bang was composed of fundamental particles and radiation to which quantum mechanics applies, and quantum mechanics is probabilistic. There is no definite state in quantum mechanics for a material to be in, only a range of possible states, each of which is occupied with a certain probability. So, one patch of the Big Bang material existed in one set of conditions (temperature, energy, density, pressure and so on) and another patch was different, although only slightly. As the Big Bang developed, the differences between patches intensified as the denser patches contracted under their own gravity. The minute fluctuations in the brightness, temperature, and so on, of the Big Bang material from one place to another amplified and grew into larger fluctuations. At a certain point the Big Bang material became so rarefied that it became transparent, letting out the radiation that became the CMB, and, as described above, this carried images of the Big Bang at the time it was released, including whatever structure was there at that time.

The size of the brighter and fainter patches and the level of non-uniformity were predicted theoretically by Russian astrophysicists Rashid Sunyaev (b. 1943) and Yakov Zel’dovich (1914–1987) in 1966–70. The prediction could not be very precise, but suggested that the level of the variation in brightness was about the same as the patchiness across the most uniform white paper – not very patchy.

Sunyaev is a large, affable bear of a man. Born in Tashkent, now the capital of Uzbekistan, he left in 1960 aged seventeen to study in Moscow, after promising his grandmother he would not work on bombs. Setting out on his PhD studies, he was told that astrophysics was useless and became intent on research into particle physics, but he was taken under Zel’dovich’s wing in 1965 and was inspired by him to work on astrophysical problems; this redirected his career. That year, Penzias and Wilson discovered the CMB. Zel’dovich until then espoused a theory of the origin of the Universe that started from dense material at a cold temperature. He immediately abandoned his own ideas and adopted the hot Big Bang model, starting to work out its properties. Together the two men worked to identify processes occurring in the Big Bang material and the way that small irregularities developed into large-scale structure.

Sunyaev eventually became the director of the Max Planck Institute for Astrophysics in Garching, near Munich. He is gifted with considerable powers of persuasion, and he used them effectively to inspire numerous teams of scientists to measure the irregularities that he expected in the CMB. There were many unsuccessful searches by telescopes based on the ground to discover these, targeted at various levels within the range of uncertainty, and an equally unsuccessful attempt by a Soviet scientific mission, Relikt-1, in 1983. These efforts narrowed down all the possibilities, so that there was only one range where the fluctuations could be: if they had not then been found, the Big Bang theory would have been in big trouble. The fact that fluctuations existed was established by NASA’s Cosmic Background Explorer (COBE) satellite, which was launched in 1989; the detection was announced in 1992. The work was recognized by the award of the 2006 Nobel Prize in Physics to the leaders of the COBE satellite team, American astrophysicists John Mather, who coordinated the entire process and measured the properties of the radiation, and George Smoot, who measured its small variations.

COBE showed that the variations existed and measured them, but did not map them. The first map was made by NASA’s follow-up mission to COBE, the Wilkinson Microwave Anisotropy Probe (WMAP; 2001–10). The Planck space satellite, which was launched by the European Space Agency (ESA) in 2009 and orbited until 2013, has provided the most accurate map (pl. IV). The measurements obtained by the satellite achieved the ultimate limit of accuracy at which the fluctuations can be measured (because of complicating interference from other astronomical radiations).

Maps of the CMB show graininess at the level of 1 part in 100,000. The graininess represents differences in density in the material of the Big Bang, originating from inevitable random fluctuations. The maps represent the Universe at an age of 380,000 years. As we shall see in the next chapter, the dense spots are embryonic clusters of galaxies.

The expanding Universe

In Chapter 1, the Big Bang was identified as the moment of birth of the Universe, but perhaps in some ways it would be better to call it its conception. The time of the cosmic fireball was the equivalent of its development as an embryo. Before the generation of the CMB it was a completely alien place of elementary particles and very energetic radiation. From 380,000 years old, it could be regarded as a child, beginning to function like a person, maturing and starting to become more familiar. The period starting from then has been one of progressive growth, in what will for the Universe be a never-ending expansion. Cosmology is the science of the Universe as a whole and attempts to describe the significant characteristics of that growth. It puts forward detailed, often speculative, descriptions of what the Universe is made of, like hydrogen gas, radiation and dark matter. It develops descriptions of how the Universe assembles its component materials into structures, like galaxies, and what then happens, such as the ways the structures interact. It also tries to fit everything into a moving picture showing how everything develops. This overall framework is called a model.

In past times the model would have been literally a model – a physical miniature toy made of wooden struts and metal gears, like a mechanical clock. In fact, in medieval times clocks were indeed actual models of the Universe as it was known then. They harked back to the way a sundial works, each made with a pointer rotating once in twenty-four hours and representing the apparent motion of the Sun around the Earth. Nowadays, a cosmological model is a set of mathematical equations that are given form as diagrams and graphs, but seldom if ever given a tangible form as a physical device.

Modern cosmology began in 1917 with Albert Einstein’s paper about general relativity, ‘Cosmological Considerations in the General Theory of Relativity’ (see Chapter 1). Dutch mathematician Willem de Sitter, German physicist Karl Schwarzschild (see page 67) and Russian mathematician Alexander Friedmann developed cosmological models built on Einstein’s foundation. It was found that general relativity admitted the idea of an expanding Universe, and this was almost immediately confirmed by the realization that galaxies were mileposts that delineated the great distances in space and were receding from our own Galaxy. In 1929, Edwin Hubble discovered that galaxies were receding in such a way that the speed of recession of a galaxy was proportional to its distance (see page 20). The constant of proportionality is called Hubble’s constant.

Until 2013, astronomers used direct measurements of speeds and distances of galaxies to determine Hubble’s constant. This can be done only for nearby galaxies, by measuring the apparent brightness of Cepheid variable stars and supernovae, whose intrinsic brightness can be established independently. In the same way, if you see a light at night, you can estimate its distance if you know whether it originates in the dim flame of a match, the beam of a car headlight or the glare of a lighthouse. The technique was developed over the previous century, first for Cepheid variable stars, pioneered by American astronomer Henrietta Leavitt (1868–1921).

Leavitt was one of a large number of women scientists employed by Edward Pickering (1846–1919), the director of the Harvard College Observatory in Cambridge, Massachusetts. Williamina Fleming was another (see page 164). Leavitt, who was deaf, attended Radcliffe College where she was attracted to astronomy and spotted by Pickering. She came to head the department within Harvard College Observatory, which was carrying out a major photographic project organized by Pickering to measure the brightness of stars in the Milky Way and the two nearest galaxies – the Magellanic Clouds (see page 107). Using a technique called fly-spanking (looking through a microscope at the black spots left by the light from stars on photographic plates – they look like flies that have been swatted – and estimating their size, i.e., their brightness), Leavitt found and measured 1,777 variable stars on pictures of the Magellanic Clouds taken at the Harvard Southern Station in Arequipa, Peru.

The stars were variable stars of all classes, but some of them were similar to those in the Milky Way known as Cepheids (pronounced ‘seff-ids’; Delta Cephei is the prototype star). Cepheids vary in brightness periodically in a recognizable regular cycle. In 1908, Leavitt plotted the average brightness of the Cepheid variable stars in the Large Magellanic Cloud versus their periods. There was a clear correlation. The period-luminosity (P-L) relationship was not detectable in Cepheids in our Milky Way because the stars were all at different distances as well as having differing intrinsic brightnesses, but the Cepheids in the Large Magellanic Cloud are all at the same distance. Leavitt’s sample of Cepheids eliminated the distance variable in the plot and the P-L relationship revealed itself.

The P-L relationship became a way to estimate the intrinsic brightness of a Cepheid star – equivalent to some indicator about the origin of a light seen at night, such as observing whether it flickers (a flame), shines steadily (a headlight) or flashes periodically (a lighthouse). The method for this was to determine the period of variation of the star and use the P-L relationship to estimate its intrinsic brightness, then relate its intrinsic brightness to its apparent brightness and deduce the distance of the Cepheid and the galaxy that it inhabits (if it is intrinsically very bright and looks dim it must be far away, but if it is feeble and looks bright, it must be close). The discovery was the key to determining the distance to galaxies in terms of the distance to the Large Magellanic Cloud, and so a tool for establishing the distance scale of the Universe and therefore Hubble’s constant.
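The chain of reasoning described above (period, then intrinsic brightness, then distance) can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, the slope and zero point of the P-L relation are illustrative assumptions rather than values given in this book, and the sketch ignores complications such as dimming by interstellar dust. The second step uses the standard distance-modulus form of the inverse-square law, in which brightness is expressed in astronomical magnitudes.

```python
import math


def absolute_mag_from_period(period_days, slope=-2.43, zero_point=-4.05):
    """Estimate a Cepheid's intrinsic brightness (absolute magnitude) from its period.

    The slope and zero point are illustrative assumptions, not values from the text.
    """
    return slope * (math.log10(period_days) - 1.0) + zero_point


def distance_parsecs(apparent_mag, absolute_mag):
    """Distance from the distance modulus: m - M = 5 * log10(d / 10 parsecs)."""
    return 10 ** ((apparent_mag - absolute_mag + 5.0) / 5.0)


# A hypothetical Cepheid with a 10-day period that appears at magnitude 20.95.
intrinsic = absolute_mag_from_period(10.0)
distance = distance_parsecs(20.95, intrinsic)
print(f"Estimated distance: {distance:.3g} parsecs")
```

With these assumed numbers, the star is intrinsically bright but looks very dim, so it comes out at a distance of about a million parsecs; a star with the same period that appeared brighter would come out closer.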

Recent determinations of Hubble’s constant have used the Hubble Space Telescope to redetermine the distance scale by the Cepheid technique. In fact, this was the main purpose for which the telescope was launched in 1990. Astronomers have also found parallel ways to tackle the same problem, including with the Gaia space mission (see pages 100–102). Cutting a longer story short, astronomers seem to agree that these techniques give a value for Hubble’s constant of 73.5 kilometres per second per megaparsec, plus or minus 1.4 (a parsec is a measure of astronomical distance equivalent to 3.3 light years; a megaparsec is 1 million parsecs). So, if there are two galaxies, one of them 3.3 million light years further away from us than the other, then, because of the expansion of the Universe, the further galaxy is expected to move away from us at a speed that is 73.5 kilometres per second faster than the closer galaxy.

The expansion is the outcome of an explosion: the Big Bang. In an explosion in which various fragments are thrown out at different speeds, in a given time the faster bits have moved further than the slower ones. In fact, the distance travelled, divided by the speed of any fragment, is the time since the explosion. The significance of Hubble’s constant is that it is related to the age of the Universe, with the value of 73.5 corresponding to an age of 12.7 billion years.
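The arithmetic behind this can be sketched as follows. The function names and unit-conversion constants are assumptions introduced for the illustration. The sketch computes only the simplest estimate, distance divided by speed, which is the reciprocal of Hubble’s constant; the ages quoted in the text are somewhat lower, presumably because the full cosmological model allows for the expansion rate having changed over time.

```python
KM_PER_MEGAPARSEC = 3.0857e19      # kilometres in one megaparsec
SECONDS_PER_BILLION_YEARS = 3.15576e16  # 1e9 years of 365.25 days


def recession_speed_km_s(hubble_constant, distance_mpc):
    """Hubble's law: speed of recession is proportional to distance."""
    return hubble_constant * distance_mpc


def hubble_time_billion_years(hubble_constant):
    """Distance divided by speed, i.e. 1 / H0, converted to billions of years."""
    seconds = KM_PER_MEGAPARSEC / hubble_constant
    return seconds / SECONDS_PER_BILLION_YEARS


for h0 in (73.5, 67.4):
    speed = recession_speed_km_s(h0, 1.0)
    age = hubble_time_billion_years(h0)
    print(f"H0 = {h0}: {speed} km/s at 1 Mpc, simple age estimate {age:.1f} billion years")
```

A smaller Hubble’s constant gives a larger age, which is why the two values of the constant discussed in this chapter correspond to ages that differ by about a billion years.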

A completely independent way to determine Hubble’s constant became available in 2009 when the Planck satellite was launched to investigate the CMB. It collected data until 2013 and it then took five years for a big team of astronomers to analyse the data to everyone’s satisfaction. First off, astronomers had to decide exactly which cosmological model to use. Then they had to adjust the chosen model to fit the image and see how changing the value of Hubble’s constant affects the outcome. This enabled them to bear down on the most appropriate model.

The model that worked the best, called Λ-CDM, was the one that had become the standard model even before Planck was launched, where Λ is the Greek capital letter lambda and CDM stands for ‘cold dark matter’. Nearly all astronomers believe that dark matter exists but some believe in one kind of dark matter, others in another. Cold dark matter is made of fundamental particles that move at much less than the speed of light, and has the favoured property that it helps produce galaxies and clusters of galaxies by growing them from merging smaller lumps. Λ is the cosmological constant that Einstein proposed in 1917 and imagined would help prop up the Universe and keep it static (see page 16). In modern cosmological models, it represents a similar push, but not one that keeps a static universe static. It is in effect a force that pushes on an already expanding Universe to make it expand faster.

The cosmological constant labels mathematically a feature of the expansion of the Universe that was discovered nearly a century later, in 1998–99. The expansion of the Universe is accelerating. This is completely contrary to expectation. The galaxies and dark matter in the Universe mutually pull one another, a process that should slow the expansion down. When astronomers used the time machine that is the Hubble Space Telescope, they found the contrary. Gazing into the distant Universe, astronomers looked back in time, because light travels at a finite speed. If the expansion had been slowing, the furthest galaxies, representing the earlier Universe, should be moving more quickly than nearby ones. In 1998–99, astronomers from the Supernova Cosmology Project and the High-Z Supernova Search Team used supernovae observed with the Hubble Space Telescope to check the distances of distant galaxies, coordinating with the largest ground-based optical telescopes to check how fast they were moving. The two teams discovered the reverse of what was expected and the three leaders, American astrophysicists Saul Perlmutter, Brian P. Schmidt and Adam G. Riess, were awarded the 2011 Nobel Prize in Physics for the discovery.

The expansion of the Universe is speeding up, not slowing down. There is some progressive input of energy into the expansion of the Universe. It goes under the name of ‘dark energy’ and its nature is a mystery; it is even more of a mystery than dark matter. In a Universe with both matter (whether dark or not) and dark energy, there is a competition between the tendency of dark energy, represented by Λ, to cause acceleration and the tendency of the gravity of matter to cause deceleration. This has a big effect on the ‘formation of structure’, the term that astronomers give to the way that the earliest irregularities in the material of the Big Bang grew. The balance between gravitation and Λ controls the way our Universe is developing.

The Millennium Simulation, a major calculation by astronomers at the University of Durham and the Max Planck Institute for Astrophysics (see also Chapter 3), showed how the formation of a cluster of galaxies starts weakly because the fluctuations in the Big Bang material are not very pronounced. Gravity, principally from dark matter, draws in surrounding material, but then the release of dark energy tends to stabilize the infall, which peters out as the galaxies orbit one another.

The characteristics of the picture of the CMB drawn from the Planck satellite data suggested that the Λ-CDM model is correct: the standard model of cosmology survived all the tests. Tongue in cheek, scientists praised the Universe because the real data fits their model so well and acclaimed the Universe as ‘almost perfect’. The fit gave a value for Hubble’s constant: namely 67.4 kilometres per second per megaparsec, plus or minus 0.4, which corresponds to an age for the Universe of 13.8 billion years. The Planck result for Hubble’s constant is near to the value from measurements using the Hubble Space Telescope and was a cause for early celebration. A closer look is more sobering, however; in fact, the Planck value is worryingly different from the Hubble Space Telescope’s.

On the one hand, it is fantastic that two completely different ways of tackling the problem – one focusing on the nearby, old Universe, the other on the distant, young Universe – are close to each other. On the other hand, the two figures do not agree to within their respective uncertainties and that constitutes a discrepancy to be resolved. The discrepancy might be due to some problem of a technical nature. Or, it might mean that there is something fundamentally wrong with the science behind the Λ-CDM model – we may need ‘new physics’. The problem has not been settled.
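The size of the disagreement can be put into numbers with a short calculation. This sketch assumes that the two quoted uncertainties are independent and behave like ordinary (Gaussian) measurement errors, which is a simplification; the function name is hypothetical.

```python
import math


def tension_in_sigma(value_a, error_a, value_b, error_b):
    """Difference between two measurements in units of their combined uncertainty.

    Assumes the two errors are independent and Gaussian.
    """
    combined_error = math.sqrt(error_a ** 2 + error_b ** 2)
    return abs(value_a - value_b) / combined_error


# Values quoted in the text, in kilometres per second per megaparsec.
hubble_telescope = (73.5, 1.4)
planck = (67.4, 0.4)

sigma = tension_in_sigma(*hubble_telescope, *planck)
print(f"The two values differ by about {sigma:.1f} times the combined uncertainty")
```

Under these assumptions the two values differ by roughly four times their combined uncertainty. Differences of that size are rarely produced by chance alone, which is why the discrepancy is taken seriously.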

The Planck data provides values for other parameters in the cosmological models that are interesting answers to basic questions, for example:

• Will the Universe expand forever or will it eventually coast to a halt and collapse? This depends on the density of the Universe, and the Planck data suggests that the Universe is just on the borderline and will slow its expansion but never completely stop (this is explored further in Chapter 12).

• How much dark matter is there in the Universe in proportion to ordinary matter? There is five and a half times as much.

• How much matter, dark matter and dark energy are there in the Universe? Ordinary matter makes up 4.9 per cent of the energy in the Universe, dark matter 26.8 per cent and dark energy 68.3 per cent.
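The figures in this list hang together, as a quick check shows. The percentages below are the ones quoted above; the variable names are introduced only for this illustration.

```python
# Share of the energy in the Universe, in per cent, as quoted in the text.
energy_budget = {
    "ordinary matter": 4.9,
    "dark matter": 26.8,
    "dark energy": 68.3,
}

total = sum(energy_budget.values())
dark_to_ordinary = energy_budget["dark matter"] / energy_budget["ordinary matter"]
dark_sector = energy_budget["dark matter"] + energy_budget["dark energy"]

print(f"Total: {total:.1f} per cent")
print(f"Dark matter is {dark_to_ordinary:.1f} times as abundant as ordinary matter")
print(f"Dark matter and dark energy together: {dark_sector:.1f} per cent")
```

The three shares add up to 100 per cent, the ratio of dark to ordinary matter comes out at about five and a half, and the two dark components together account for about 95 per cent of the total.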

Planck has initiated the present scientific era, in which cosmology has become an accurate science rather than a collection of speculative theories. Cosmologists have become more confident about their subject. However, we are disappointingly ignorant about the content of 95 per cent of our Universe. At least we know how ignorant we are.